OECD Framework for the Classification of AI Systems

Over the past few months we have seen more and more countries looking at how they can support and regulate the use and development of AI within their geographic areas. For those in Europe, much of the focus has been on the draft EU AI Regulations. At the time of writing, there is considerable political debate around these regulations. Much of it concerns the definition of AI, what will be included in and excluded from the different categories, and who will police and enforce the regulations, among many other issues. We could end up with a very different set of regulations from those in the draft published in April 2021. It also looks like enactment of the EU AI Regulations will be delayed until the end of 2022, with some people suggesting that nothing formal will happen until mid-2023.

As mentioned above, one of the things that may change is the definition of AI within the EU AI Regulations. Although the debate has focused primarily on the inclusion or exclusion of biometric applications, other refinements are also being proposed. When you look at what other geographic regions are doing, you see some common elements in their definitions of AI, but also some differences. You can imagine the difficulties this will present in the global marketplace, given how AI touches upon many aspects of most businesses, their customers and their data.

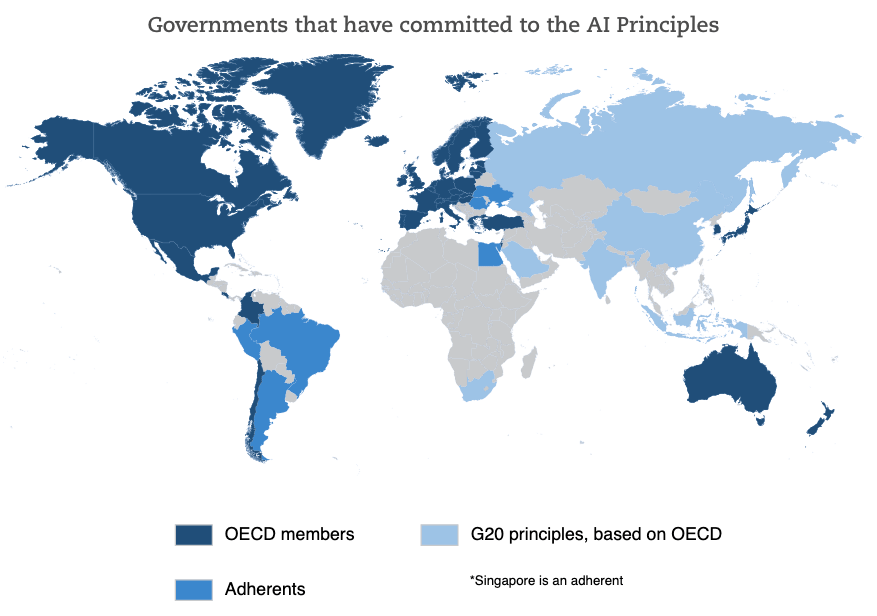

Most of you will have heard of the OECD. In recent weeks it has been working with all member countries towards a definition of AI and a way of classifying different AI systems. They have called this the OECD Framework for the Classification of AI Systems.

The framework is a tool for policy-makers, regulators, legislators and others to help them assess the opportunities and risks that different types of AI systems present, and to inform their national AI strategies.

The framework links the technical characteristics of AI with the policy implications set out in the OECD AI Principles, which include:

- Inclusive growth, sustainable development and well-being

- Human-centred values and fairness

- Transparency and explainability

- Robustness, security and safety

- Accountability

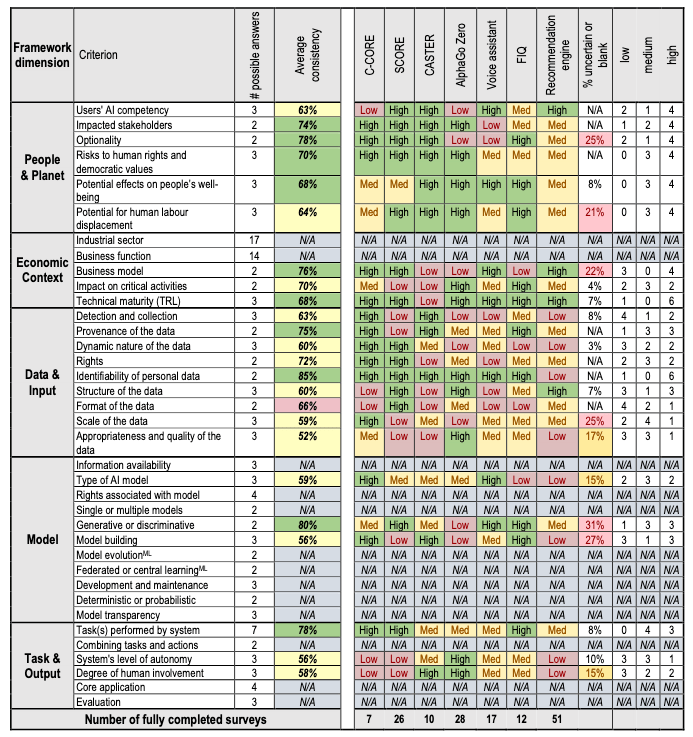

The framework looks at different aspects depending on whether the AI system is still in the lab (sandbox) environment or is live in production and in use in the field.

The framework goes into more detail on the various aspects that need to be considered for each of these. The working group have applied the framework to a number of different AI systems to illustrate how it can be used.

Check out the framework document, which goes into more detail on each of the criteria listed above for each dimension of the framework.
