AI Regulations

Tracking AI Regulations, Governance and Incidents

Here are the key trackers to follow to stay ahead.

AI Incidents & Risks

AI Risk Repository [MIT FutureTech]

A comprehensive database of 700 risks from AI systems https://airisk.mit.edu/

AI Incident Database [Partnership on AI]

Dedicated to indexing the collective history of real-world harms caused by the deployment of AI

https://lnkd.in/ewBaYitm

AI Incidents Monitor [OECD – OCDE]

AI incidents and hazards reported in international media globally are identified and classified using machine learning models https://lnkd.in/e4pJ7jcA

AI Regulations & Policies

Global AI Law and Policy Tracker [IAPP]

Resource providing information about AI law and policy developments in key jurisdictions worldwide https://lnkd.in/eiGMk9Rm

National AI Policies and Strategies [OECD.AI]

Live repository of 1000+ AI policy initiatives from 69 countries, territories and the EU https://lnkd.in/ebVTQzdb

Global AI Regulation Tracker [Raymond Sun]

An interactive world map that tracks AI law, regulatory and policy developments around the world https://lnkd.in/ekaKzmzD

U.S. State AI Governance Legislation Tracker [IAPP]

Tracker focusing on cross-sectoral AI governance bills that apply to the private sector https://lnkd.in/ee4N-ckB

AI Governance Toolkits & Resources

AI Standards Hub [The Alan Turing Institute]

Online repository of 300+ AI standards https://lnkd.in/erVdP4g7

AI Risk Management Framework Playbook [National Institute of Standards and Technology (NIST)]

Playbook of recommended actions, resources and materials to support implementation of the NIST AI RMF https://lnkd.in/eTzpfbCi

Catalogue of Tools & Metrics for Trustworthy AI [OECD.AI]

Tools and metrics which help AI actors to build and deploy trustworthy AI systems https://lnkd.in/e_mnAbpZ

Portfolio of AI Assurance Techniques [Department for Science, Innovation and Technology]

The Portfolio showcases examples of AI assurance techniques being used in the real world to support the development of trustworthy AI. https://lnkd.in/eJ5V3uzb

EU AI Act has been passed by the EU Parliament

It feels like we’ve been hearing and talking about the EU AI Act for a very long time now. But on Wednesday 13th March 2024, the EU Parliament finally voted to approve the Act. While this is a major milestone, we haven’t crossed the finish line yet. A few steps remain, although these are minor and a normal part of the process.

The remaining timeline is:

- The EU AI Act will undergo final linguistic approval by lawyer-linguists in April. This is considered a formality.

- It will then be published in the Official Journal of the EU.

- It will enter into force on the twentieth day after publication (probably in May).

- The provisions on prohibited systems will apply six months after entry into force (probably by the end of 2024).

- All other provisions in the Act will apply in stages over the following 2-3 years.

If you haven’t already started evaluating the AI deployed in your organisation, now is the time to start. Prepare now and work out what changes, if any, you need to make. The penalties for non-compliance are hefty: for the most serious infringements, fines can reach €35 million or 7% of worldwide annual turnover, whichever is higher.
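As a rough illustration of how the headline penalty cap scales with company size, here is a minimal Python sketch. It assumes the "whichever is higher" rule for the €35 million / 7% tier; the function name is hypothetical, it ignores the lower penalty tiers and the final text's rule that SMEs and start-ups face whichever amount is lower, and actual fines are set case by case.

```python
def max_fine_eur(worldwide_turnover_eur: int,
                 fixed_cap_eur: int = 35_000_000,
                 turnover_percent: int = 7) -> int:
    """Hypothetical helper: upper bound of a fine in the most serious tier.

    Assumes the cap is the higher of a fixed amount and a percentage of
    worldwide annual turnover. Integer arithmetic avoids float rounding.
    """
    percentage_cap = worldwide_turnover_eur * turnover_percent // 100
    return max(fixed_cap_eur, percentage_cap)


# Hypothetical company with €2 billion turnover: 7% (€140 million) is higher.
print(max_fine_eur(2_000_000_000))  # 140000000
# Hypothetical company with €100 million turnover: €35 million is higher.
print(max_fine_eur(100_000_000))    # 35000000
```

The crossover sits at €500 million turnover, where 7% equals €35 million; above that, the percentage-based cap is the one that matters.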

The first thing to address is prohibited AI systems. The EU AI Act lists the following, and they will need to be addressed before the end of 2024:

- Manipulative and Deceptive Practices: systems that use subliminal techniques to materially distort a person’s decision-making capacity, leading to significant harm. This includes systems that manipulate behaviour or decisions in a way that the individual would not have otherwise made.

- Exploitation of Vulnerabilities: systems that target individuals or groups based on age, disability, or socio-economic status to distort behaviour in harmful ways.

- Biometric Categorisation: systems that categorise individuals based on biometric data to infer sensitive information like race, political opinions, or sexual orientation. This prohibition does not cover any labelling or filtering of lawfully acquired biometric datasets, such as images. There are also exceptions for law enforcement.

- Social Scoring: systems designed to evaluate individuals or groups over time based on their social behaviour or predicted personal characteristics, leading to detrimental treatment.

- Real-time Biometric Identification: The use of real-time remote biometric identification systems in publicly accessible spaces for law enforcement is heavily restricted, with allowances only under narrowly defined circumstances that require judicial or independent administrative approval.

- Risk Assessment in Criminal Offences: systems that assess the risk of individuals committing criminal offences based solely on profiling, except when supporting human assessment already based on factual evidence.

- Facial Recognition Databases: systems that create or expand facial recognition databases through untargeted scraping of images are prohibited.

- Emotion Inference in Workplaces and Educational Institutions: The use of AI to infer emotions in sensitive environments like workplaces and schools is banned, barring exceptions for medical or safety reasons.

In addition to the timeline given above, the following milestones apply:

- 12 months after entry into force: Obligations on providers of general purpose AI models go into effect. Appointment of member state competent authorities. Annual Commission review of and possible amendments to the list of prohibited AI.

- after 18 months: Commission implementing act on post-market monitoring

- after 24 months: Obligations on high-risk AI systems specifically listed in Annex III, which includes AI systems in biometrics, critical infrastructure, education, employment, access to essential public services, law enforcement, immigration and administration of justice. Member states to have implemented rules on penalties, including administrative fines. Member state authorities to have established at least one operational AI regulatory sandbox. Commission review and possible amendment of the list of high-risk AI systems.

- after 36 months: Obligations for high-risk AI systems that are not listed in Annex III but are intended to be used as a safety component of a product, or where the AI is itself a product, and the product is required to undergo a third-party conformity assessment under existing specific laws, for example, toys, radio equipment, in vitro diagnostic medical devices, civil aviation security and agricultural vehicles.
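To turn the "months after entry into force" milestones in the timelines above into calendar dates for planning, a small Python sketch can help. The entry-into-force date passed in is a hypothetical input, the milestone labels are condensed from the lists above, and the arithmetic simply adds calendar months; the Act itself sets the exact application dates, which may differ by a day from this simple calculation.

```python
import calendar
from datetime import date

# Months after entry into force, condensed from the timeline above.
MILESTONES = {
    6: "Prohibited AI practices",
    12: "General-purpose AI model obligations; national competent authorities",
    18: "Commission implementing act on post-market monitoring",
    24: "Annex III high-risk obligations; penalties; regulatory sandboxes",
    36: "High-risk AI in products needing third-party conformity assessment",
}


def add_months(d: date, months: int) -> date:
    """Add calendar months, clamping the day to the end of the target month."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(d.day, last_day))


def milestone_dates(entry_into_force: date) -> list[tuple[date, str]]:
    """Return (date, milestone) pairs in chronological order."""
    return [(add_months(entry_into_force, months), label)
            for months, label in sorted(MILESTONES.items())]


# Hypothetical entry-into-force date, for illustration only.
for when, what in milestone_dates(date(2024, 5, 31)):
    print(when.isoformat(), what)
```

Clamping to the month end means a hypothetical 31 May start maps to 30 November for the six-month milestone rather than raising an error.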

The EU has provided an official compliance check that helps identify which parts of the EU AI Act apply in a given use case.

EU AI Regulations: Common Questions and Answers

As the EU AI Regulations move to the next phase, following the political agreement reached in December 2023, the European Commission has published answers to 28 of the most common questions about the Act. These questions are:

- Why do we need to regulate the use of Artificial Intelligence?

- Which risks will the new AI rules address?

- To whom does the AI Act apply?

- What are the risk categories?

- How do I know whether an AI system is high-risk?

- What are the obligations for providers of high-risk AI systems?

- What are examples for high-risk use cases as defined in Annex III?

- How are general-purpose AI models being regulated?

- Why is 10^25 FLOPs an appropriate threshold for GPAI with systemic risks?

- Is the AI Act future-proof?

- How does the AI Act regulate biometric identification?

- Why are particular rules needed for remote biometric identification?

- How do the rules protect fundamental rights?

- What is a fundamental rights impact assessment? Who has to conduct such an assessment, and when?

- How does this regulation address racial and gender bias in AI?

- When will the AI Act be fully applicable?

- How will the AI Act be enforced?

- Why is a European Artificial Intelligence Board needed and what will it do?

- What are the tasks of the European AI Office?

- What is the difference between the AI Board, AI Office, Advisory Forum and Scientific Panel of independent experts?

- What are the penalties for infringement?

- What can individuals do that are affected by a rule violation?

- How do the voluntary codes of conduct for high-risk AI systems work?

- How do the codes of practice for general purpose AI models work?

- Does the AI Act contain provisions regarding environmental protection and sustainability?

- How can the new rules support innovation?

- Besides the AI Act, how will the EU facilitate and support innovation in AI?

- What is the international dimension of the EU’s approach?

This list of questions and answers is well worth reading and clearly addresses questions you may have seen discussed in other media outlets. Because the answers come directly from the Commission, they give a clear explanation of what is involved.

As well as the webpage containing these questions and answers, the Commission provides them as a PDF.

EU AI Act adopts OECD Definition of AI

Over recent months, the EU AI Act has been making its way through the various hoops in the EU. Various committees and working groups have examined different parts of the AI Act and how it will impact the wider population. Their recommendations have been added to the EU AI Act, which has now progressed to the next stage: ratification by the EU Parliament, which should happen in a few months’ time.

There are lots of terms within the EU AI Act that needed defining. The most crucial one is the definition of AI, as it underpins the entire Act and all the other definitions of terms throughout it. Back in March of this year, the various political groups working on the EU AI Act reached an agreement on the definition of AI (Artificial Intelligence). The EU AI Act adopts, or is based on, the OECD definition of AI.

“‘Artificial intelligence system’ (AI system) means a machine-based system that is designed to operate with varying levels of autonomy and that can, for explicit or implicit objectives, generate output such as predictions, recommendations, or decisions influencing physical or virtual environments”

The working groups wanted the AI definition to be closely aligned with the work of international organisations working on artificial intelligence to ensure legal certainty, harmonisation and wide acceptance. The reference to predictions includes content, to ensure that generative AI models like ChatGPT are covered by the regulation.

Other definitions include significant risk, biometric authentication and biometric identification.

“‘Significant risk’ means a risk that is significant in terms of its severity, intensity, probability of occurrence, duration of its effects, and its ability to affect an individual, a plurality of persons or to affect a particular group of persons,” the document specifies.

Remote biometric verification systems were defined as AI systems used to verify the identity of persons by comparing their biometric data against a reference database with their prior consent. This is distinguished from an authentication system, where the persons themselves ask to be authenticated.

On biometric categorisation, a practice recently added to the list of prohibited use cases, a reference was added to inferring personal characteristics and attributes like gender or health.

AI Liability Act

Over the past few weeks we have seen a number of new Artificial Intelligence (AI) Acts or Laws either being proposed or at an advanced stage of enactment. One of these is the EU AI Liability Act (also), which is intended to be enacted and to work hand-in-hand with the EU AI Act.

The two acts take different perspectives. The EU AI Act focuses on the technical side and on those who develop AI solutions. The EU AI Liability Act, on the other hand, takes the perspective of the end user or consumer.

The aim of the EU AI Liability Act is to create a framework for trust in AI technology and, when a person has been harmed by the use of AI, to provide a structure for claiming compensation. Just as other EU laws protect consumers from defective or harmful products, the AI Liability Act aims to do the same when a person is harmed in some way by the use or application of AI.

Most of the examples given of how AI might harm a person involve robotics, drones, and the use of AI in recruitment, where it automatically selects a candidate based on its algorithms. Other examples include data loss caused by tech products, smart-home systems, cyber security, and products where people are selected or excluded based on algorithms.

Harm can be difficult to define. Although the Act makes some attempt at a definition, more work is needed by those refining the Act to clarify it and to allow the definition to evolve after enactment, so that additional scenarios can be included without updating the Act, which can be a lengthy process. A similar approach is being taken with the list of high-risk AI in the EU AI Act, which is proposed to be maintained on a webpage.

Vice-president for values and transparency, Věra Jourová, said that for AI tech to thrive in the EU, it is important for people to trust digital innovation. She added that the new proposals would give customers “tools for remedies in case of damage caused by AI so that they have the same level of protection as with traditional technologies”.

Didier Reynders, the EU’s justice commissioner, says: “The new rules apply when a product that functions thanks to AI technology causes damage and that this damage is the result of an error made by manufacturers, developers or users of this technology.”

The EU defines “an error” in this case to include not just mistakes in how the A.I. is crafted, trained, deployed, or functions, but also if the “error” is the company failing to comply with a lot of the process and governance requirements stipulated in the bloc’s new A.I. Act. The new liability rules say that if an organization has not complied with their “duty of care” under the new A.I. Act—such as failing to conduct appropriate risk assessments, testing, and monitoring—and a liability claim later arises, there will be a presumption that the A.I. was at fault. This creates an additional way of forcing compliance with the EU AI Act.

The EU Liability Act says that a court can now order a company using a high-risk A.I. system to turn over evidence of how the software works. A balancing test will be applied to ensure that trade secrets and other confidential information are not needlessly disclosed. The EU warns that if a company or organization fails to comply with a court-ordered disclosure, the courts will be free to presume the entity using the A.I. software is liable.

The EU Liability Act will go through some changes and refinement, with the aim of enacting it at the same time as the EU AI Act. How long this will take is a little up in the air, considering the EU AI Act should have been adopted by now and we could already be in the two-year implementation period. But the EU AI Act is still working its way through the different groups in the EU. There have been some indications these might conclude in 2023, but let’s wait and see. If the EU Liability Act is only starting the process now, there could be some additional delays if the EU wants both Acts to take effect at the same time.

NATO AI Strategy

Over the past 18 months there has been a widespread push by many countries and geographic regions to examine how the creation and use of Artificial Intelligence (AI) can be regulated. I’ve written many blog posts about these. But it isn’t just governments or political alliances that are doing this; other types of organisations are doing so too.

NATO, the political and (mainly) military alliance, has also joined the club. They have released a summary version of their AI Strategy. This might seem a little strange for this type of organisation. But if you look a little closer, NATO also says its members work together in other areas such as Standardisation Agreements, Crisis Management, Disarmament, Energy Security, Climate/Environmental Change, Gender and Human Security, and Science and Technology.

In October 2021, NATO formally adopted its Artificial Intelligence (AI) Strategy (for defence). The strategy outlines how AI can be applied to defence and security in a protected and ethical way (interesting wording). Its aim is to position NATO as a leader in AI adoption, and it provides a common policy basis to support the adoption of AI systems in order to achieve the Alliance’s three core tasks of Collective Defence, Crisis Management and Cooperative Security. An important element of the AI Strategy is to ensure interoperability and standardisation. This is a little more interesting, and perhaps has a lesser focus on ethical use.

NATO’s AI Strategy contains the following principles of Responsible use of AI (in defence):

- Lawfulness: AI applications will be developed and used in accordance with national and international law, including international humanitarian law and human rights law, as applicable.

- Responsibility and Accountability: AI applications will be developed and used with appropriate levels of judgment and care; clear human responsibility shall apply in order to ensure accountability.

- Explainability and Traceability: AI applications will be appropriately understandable and transparent, including through the use of review methodologies, sources, and procedures. This includes verification, assessment and validation mechanisms at either a NATO and/or national level.

- Reliability: AI applications will have explicit, well-defined use cases. The safety, security, and robustness of such capabilities will be subject to testing and assurance within those use cases across their entire life cycle, including through established NATO and/or national certification procedures.

- Governability: AI applications will be developed and used according to their intended functions and will allow for: appropriate human-machine interaction; the ability to detect and avoid unintended consequences; and the ability to take steps, such as disengagement or deactivation of systems, when such systems demonstrate unintended behaviour.

- Bias Mitigation: Proactive steps will be taken to minimise any unintended bias in the development and use of AI applications and in data sets.

By acting collectively members of NATO will ensure a continued focus on interoperability and the development of common standards.

Some points of interest:

- Bias Mitigation efforts will be adopted with the aim of minimising discrimination against traits such as gender, ethnicity or personal attributes. However, the strategy does not say how bias will be tackled – which requires structural changes which go well beyond the use of appropriate training data.

- The strategy also recognises that in due course AI technologies are likely to become widely available, and may be put to malicious uses by both state and non-state actors. NATO’s strategy states that the alliance will aim to identify and safeguard against the threats from malicious use of AI, although again no detail is given on how this will be done.

- Running through the strategy is the idea of interoperability – the desire for different systems to be able to work with each other across NATO’s different forces and nations without any restrictions.

- What about autonomous weapon systems? Some members do not support a ban on this technology.

- Has similar wording to the principles adopted by the US Department of Defense for the ethical use of AI.

- Wants to make defence and security more attractive to private sector and academic AI developers/researchers.

- NATO principles have no coherent means of implementation or enforcement.

AI Sandboxes – EU AI Regulations

The EU AI Regulations provide a framework for placing AI systems on the market and putting them into service in the EU. One of the biggest challenges most organisations will face is how to innovate and develop new AI systems while ensuring they comply with the regulations. But at what point do you know you are compliant with these new regulations? This can be challenging and could limit or slow down the development and deployment of such systems.

The EU does not want to limit or slow down such innovation, and wants organisations to continually research, develop and deploy new AI. To facilitate this, the EU AI Regulations contain a structure under which this can be achieved.

Title V of the EU AI Regulations, containing Articles 53, 54 and 55, supports the development of new AI systems through the use of Sandboxes. We have already seen examples of these being introduced by the UK and Norwegian data protection authorities.

A Sandbox “provides a controlled environment that facilitates the development, testing and validation of innovative AI systems for a limited time before their placement on the market or putting into service pursuant to a specific plan.”

Sandboxes are standalone environments that allow the exploration and development of new AI solutions, which may or may not include some risky use of customer data or other potential AI outcomes that may not be allowed under the regulations. A sandbox becomes a controlled experimental lab in which the AI team can develop and test a potential AI system under real-world conditions. The Sandbox gives a “safe” environment for this experimental work.

Sandboxes are to be established by the Competent Authorities in each EU country. In Ireland the Competent Authority seems to be the Data Protection Commissioner, and this may be similar in other countries. As you can imagine, the current wording of the EU AI Regulations might present some challenges for both the Competent Authority and the companies looking to develop AI solutions. Firstly, does the Competent Authority need to provide sandboxes for all companies looking to develop AI, when each of these companies may have several AI projects? This is a massive overhead for the Competent Authority to provide and resource. Secondly, will companies be willing to set up a self-contained environment containing customer data, data insights, solutions with potential competitive advantage, etc. in a Sandbox provided by the Competent Authority? The technical infrastructure could be hosting many Sandboxes, with many competing companies using the same infrastructure at the same time. This is a big ask for both the companies and the Competent Authority.

Let’s see what really happens regarding the implementation of the Sandboxes over the coming years, and how this will be defined in the final draft of the Regulations.

Article 54 defines additional requirements for the processing of personal data within the Sandbox.

- Personal data, even if it was collected for other purposes, may be used only where it is required, that is, where the requirements cannot be effectively fulfilled by processing anonymised, synthetic or other non-personal data.

- Continuous monitoring is needed to identify any high risks to the fundamental rights of data subjects, along with a response mechanism to mitigate those risks.

- Any personal data to be processed is in a functionally separate, isolated and protected data processing environment under the control of the participants and only authorised persons have access to that data.

- Any personal data processed is not to be transmitted, transferred or otherwise accessed by other parties.

- Any processing of personal data does not lead to measures or decisions affecting the data subjects.

- All personal data is deleted once the participation in the sandbox is terminated or the personal data has reached the end of its retention period.

- Logs of the processing of personal data must be kept for the duration of sandbox participation and for 1 year after it terminates, and used only for accountability and documentation obligations.

- Documentation of the complete process and rationale behind the training, testing and validation of AI, along with test results as part of technical documentation. (see Annex IV)

- A short summary of the AI project, its objectives and expected results is published on the website of the Competent Authorities.

In addition, the Competent Authority is required to write an annual report and submit it to the EU AI Board. The report is to include details of the results of their scheme, good and bad practices, lessons learnt and recommendations on the setup and application of the Regulations within the Sandboxes.
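The deletion and retention rules in the list above lend themselves to simple date tracking. Here is a minimal Python sketch, assuming a hypothetical `SandboxParticipation` record and treating the one-year retention period as a calendar year from the date participation ends; it does not model personal data whose own retention period ends earlier.

```python
import calendar
from dataclasses import dataclass
from datetime import date


def add_years(d: date, years: int) -> date:
    """Add calendar years, mapping 29 February to 28 February if needed."""
    year = d.year + years
    day = min(d.day, calendar.monthrange(year, d.month)[1])
    return date(year, d.month, day)


@dataclass
class SandboxParticipation:
    """Hypothetical record of one AI project's time in a regulatory sandbox."""
    project: str
    start: date
    end: date  # date participation in the sandbox terminates

    def __post_init__(self) -> None:
        if self.end < self.start:
            raise ValueError("participation cannot end before it starts")

    def personal_data_deletion_due(self) -> date:
        # Personal data is deleted once participation in the sandbox ends.
        return self.end

    def processing_records_kept_until(self) -> date:
        # Records of the personal data processing are kept for 1 year after
        # termination, solely for accountability and documentation obligations.
        return add_years(self.end, 1)


pilot = SandboxParticipation("cv-screening-pilot", date(2023, 3, 1), date(2024, 2, 29))
print(pilot.personal_data_deletion_due(), pilot.processing_records_kept_until())
# 2024-02-29 2025-02-28
```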

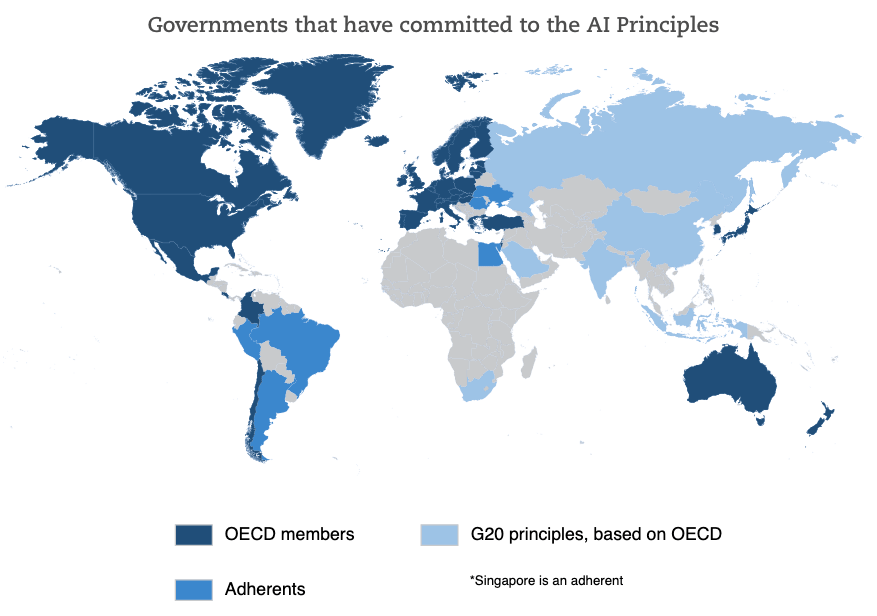

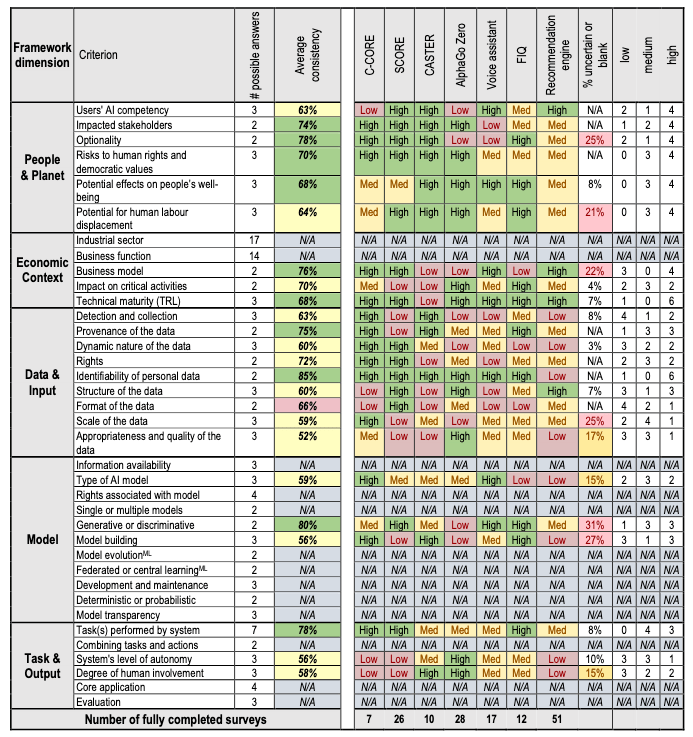

OECD Framework for the Classification of AI Systems

Over the past few months we have seen more and more countries looking at how they can support and regulate the use and development of AI within their geographic areas. For those in Europe, a lot of focus has been on the draft AI Regulations. At the time of writing this post there has been a lot of politics going on in relation to the EU AI Regulations. Some of this has been around the definition of AI, what will be included and excluded in their different categories, who will be policing and enforcing the regulations, among lots of other things. We could end up with a very different set of regulations to what was included in the draft (published April 2021). It also looks like the enactment of the EU AI Regulations will be delayed to the end of 2022, with some people suggesting it would be towards mid-2023 before something formal happens.

I mentioned above that one of the things that may or may not change is the definition of AI within the EU AI Regulations. Although the debate is primarily focused on the inclusion/exclusion of biometric aspects, other refinements are being proposed. When you look at what other geographic regions are doing, we start to see some common aspects in their definitions of AI, but also some differences. You can imagine the difficulties this will present in the global marketplace, given that AI touches many aspects of most businesses, their customers and their data.

Most of you will have heard of the OECD. In recent weeks it has been working across all member countries towards a definition of AI and a way of classifying different AI systems. They have called this the OECD Framework for the Classification of AI Systems.

The OECD Framework for the Classification of AI Systems is a tool for policy-makers, regulators, legislators and others, helping them assess the opportunities and risks that different types of AI systems present and inform their national AI strategies.

The Framework links the technical characteristics of AI with the policy implications set out in the OECD AI Principles, which include:

- Inclusive growth, sustainable development and well-being

- Human-centred values and fairness

- Transparency and explainability

- Robustness, security and safety

- Accountability

The framework looks at different aspects depending on whether the AI is still within the lab (sandbox) environment or is live in production or in use in the field.

The framework goes into more detail on the various aspects that need to be considered for each of these. The working group has applied the framework to a number of different AI systems to illustrate how it can be used.

Check out the framework document, which goes into more detail on each criterion for each dimension of the framework.

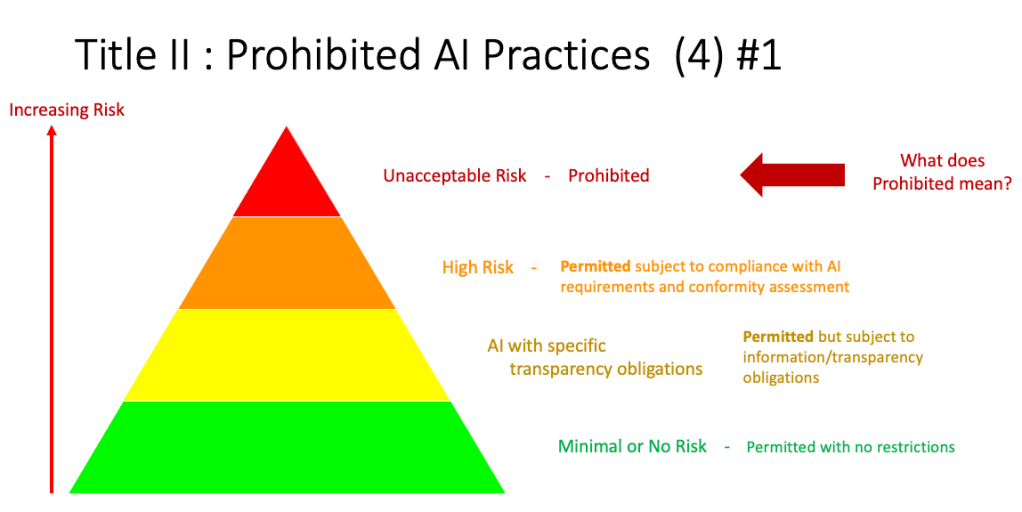

AI Categories in EU AI Regulations

The EU AI Regulations aim to provide a framework of obligations for the use of AI applications in the EU. These applications can be created, operated or procured by companies both inside and outside the EU, and applied to data/people within the EU. In a previous post I gave a fuller outline of the EU AI Regulations.

In this post I will look at the proposed categorisation of AI applications, what types of applications fall into each category, and what potential impact this may have on the operators of the AI application. The following diagram illustrates the categories detailed in the EU AI Regulations.

Let’s have a closer look at each of these categories.

Unacceptable Risk (Red section)

The proposed legislation sets out a regulatory structure that bans some uses of AI, heavily regulates high-risk uses and lightly regulates less risky AI systems. The regulations intend to prohibit certain uses of AI which are deemed unacceptable because of the risks they pose. These include deploying subliminal techniques or exploiting vulnerabilities of specific groups of persons due to their age or disability in order to materially distort a person’s behavior in a manner that causes physical or psychological harm; leading to ‘social scoring’ by public authorities; and conducting ‘real time’ biometric identification in publicly available spaces. A more detailed version of this is:

- Designed or used in a manner that manipulates human behavior, opinions or decisions through choice architectures or other elements of user interfaces, causing a person to behave, form an opinion or take a decision to their detriment.

- Designed or used in a manner that exploits information or prediction about a person or group of persons in order to target their vulnerabilities or special circumstances, causing a person to behave, form an opinion or take a decision to their detriment.

- Indiscriminate surveillance applied in a generalised manner to all natural persons without differentiation. The methods of surveillance may include large scale use of AI systems for monitoring or tracking of natural persons through direct interception or gaining access to communication, location, meta data or other personal data collected in digital and/or physical environments or through automated aggregation and analysis of such data from various sources.

- General purpose social scoring of natural persons, including online. General purpose social scoring consists in the large scale evaluation or classification of the trustworthiness of natural persons [over certain period of time] based on their social behavior in multiple contexts and/or known or predicted personality characteristics, with the social score leading to detrimental treatment to natural person or groups.

There are some exemptions to these when such practices are authorised by law and are carried out [by public authorities or on behalf of public authorities] in order to safeguard public security and are subject to appropriate safeguards for the rights and freedoms of third parties in compliance with Union law.

High Risk (Orange section)

AI systems identified as high-risk include AI technology used in:

- Critical infrastructures (e.g. transport), that could put the life and health of citizens at risk;

- Educational or vocational training, that may determine the access to education and professional course of someone’s life (e.g. scoring of exams);

- Safety components of products (e.g. AI application in robot-assisted surgery);

- Employment, workers management and access to self-employment (e.g. CV-sorting software for recruitment procedures);

- Essential private and public services (e.g. credit scoring denying citizens opportunity to obtain a loan);

- Law enforcement that may interfere with people’s fundamental rights (e.g. evaluation of the reliability of evidence);

- Migration, asylum and border control management (e.g. verification of authenticity of travel documents);

- Administration of justice and democratic processes (e.g. applying the law to a concrete set of facts).

All high-risk AI applications will be subject to strict obligations before they can be put on the market:

- Adequate risk assessment and mitigation systems;

- High quality of the datasets feeding the system to minimise risks and discriminatory outcomes;

- Logging of activity to ensure traceability of results;

- Detailed documentation providing all information necessary on the system and its purpose for authorities to assess its compliance;

- Clear and adequate information to the user;

- Appropriate human oversight measures to minimise risk;

- High level of robustness, security and accuracy.

These can also be categorised as (i) Risk management; (ii) Data governance; (iii) Technical documentation; (iv) Record keeping (traceability); (v) Transparency and provision of information to users; (vi) Human oversight; (vii) Accuracy; (viii) Robustness and cybersecurity.

There will be some exceptions to this when the AI application is required by governmental and law enforcement agencies in certain circumstances.

Limited Risk (Yellow section)

Providers of “non-high-risk” AI systems should be encouraged to develop codes of conduct intended to foster the voluntary application of the mandatory requirements applicable to high-risk AI systems.

AI applications within this Limited Risk category pose a limited risk, so transparency requirements are imposed. For example, AI systems intended to interact with natural persons must be designed and developed in such a way that users are informed they are interacting with an AI system, unless it is “obvious from the circumstances and the context of use.”

Minimal Risk (Green section)

The Minimal Risk category covers all other AI systems, which can be developed and used in the EU without legal obligations beyond existing legislation, for example AI-enabled video games or spam filters. Some commentators suggest that the vast majority of AI systems currently used in the EU fall into this category, representing minimal or no risk.
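To make the four-tier structure easier to picture, here is a minimal, hypothetical sketch that encodes the tiers as a lookup table. The tier names, the obligation strings and the example use cases (including the "customer-facing chatbot") are simplified paraphrases of this post, not the legal text, and the `obligations_for` helper is invented for illustration. Classifying a real system requires legal analysis, not a dictionary lookup.

```python
from enum import Enum

# Hypothetical, simplified encoding of the four risk tiers described above.
# Obligation strings paraphrase this post; they are NOT the legal text.
class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"

OBLIGATIONS: dict[RiskTier, list[str]] = {
    RiskTier.UNACCEPTABLE: ["prohibited (subject to narrow exemptions authorised by law)"],
    RiskTier.HIGH: [
        "risk management",
        "data governance",
        "technical documentation",
        "record keeping (traceability)",
        "transparency and provision of information to users",
        "human oversight",
        "accuracy",
        "robustness and cybersecurity",
    ],
    RiskTier.LIMITED: ["transparency: inform users they are interacting with an AI system"],
    RiskTier.MINIMAL: [],  # no obligations beyond existing legislation
}

# Hypothetical mapping of example use cases (drawn from this post) to tiers.
EXAMPLES: dict[str, RiskTier] = {
    "general purpose social scoring": RiskTier.UNACCEPTABLE,
    "CV-sorting software for recruitment": RiskTier.HIGH,
    "credit scoring for loans": RiskTier.HIGH,
    "customer-facing chatbot": RiskTier.LIMITED,
    "spam filter": RiskTier.MINIMAL,
    "AI-enabled video game": RiskTier.MINIMAL,
}

def obligations_for(use_case: str) -> list[str]:
    """Return the simplified obligations for a known example use case."""
    tier = EXAMPLES[use_case]  # raises KeyError for use cases not listed above
    return OBLIGATIONS[tier]

if __name__ == "__main__":
    for case, tier in EXAMPLES.items():
        print(f"{case}: {tier.value} -> {len(OBLIGATIONS[tier])} obligation(s)")
```

The design choice here is to attach obligations to the tier rather than to each use case, which mirrors how the regulation works: once a system is placed in a category, the obligations follow from the category.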

EU AI Regulations – An Introduction and Overview

In April this year (2021) the European Commission released a draft version of its Artificial Intelligence (AI) Regulations. It was released early to give all countries some time to consider it before more detailed discussions on refinements towards the end of 2021, with a planned enactment during 2022.

The regulatory proposal aims to provide AI developers, deployers and users with clear requirements and obligations regarding specific uses of AI. One of its primary aims is to ensure people can trust AI, and to provide a common framework for the categorisation, use and control of AI so that it is used safely.

The draft EU AI Regulations consist of 81 pages, including 18 pages of introduction and background material, 69 Articles and 92 Recitals (Recitals are the introductory statements in a written agreement or deed, generally appearing at the beginning and similar to a preamble. They set out a précis of the parties’ intentions: what the contract is for, who the parties are and so on). It isn’t an easy read.

One of the most interesting aspects of the EU AI Regulations, and the one likely to have the biggest and widest impact, is their definition of Artificial Intelligence.

‘Artificial Intelligence System’ (AI system) means software that is developed with one or more of the approaches and techniques listed in Annex I and can, for a given set of human-defined objectives, generate outputs such as content, predictions, recommendations, or decisions influencing the real or virtual environments they interact with. AI systems are designed to operate with varying levels of autonomy. An AI system can be used as a component of a product, also when not embedded therein, or on a stand-alone basis and its outputs may serve to partially or fully automate certain activities, including the provision of a service, the management of a process, the making of a decision or the taking of an action;

When you examine each part of this definition you will start to see how far-reaching these regulations are. Most people assume they only affect the IT or AI industry, but they go much further than that and affect nearly all industries. This becomes clearer when you look at the techniques listed in Annex I.

ARTIFICIAL INTELLIGENCE TECHNIQUES AND APPROACHES

(a) Machine learning approaches, including supervised, unsupervised and reinforcement learning, using a wide variety of methods including deep learning;

(b) Logic- and knowledge-based approaches, including knowledge representation, inductive (logic) programming, knowledge bases, inference/deductive engines, (symbolic) reasoning and expert systems;

(c) Statistical approaches, Bayesian estimation, search and optimization methods.

It is (c), statistical approaches to making decisions, that will cause the widest application of the regulations. Part of the problem is what is meant by statistical approaches. Could adding two numbers together be considered statistical, or performing some simple comparison? This part of the definition will need some clarification, and the regulations do say that this list may be expanded over time without needing to update the Articles. This needs to be carefully watched and monitored by all.
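To make the concern concrete, here is a deliberately trivial, hypothetical decision rule: it averages a few scores and compares the result with a fixed threshold. Nothing is learned and it is plain arithmetic, yet it does compute a summary statistic (the mean) to reach a decision. Whether something this simple counts as a "statistical approach" under Annex I (c) is exactly the open question. The `approve` function and the threshold value are invented for illustration; this is not a legal test.

```python
from statistics import mean

# Hypothetical rule: approve an application if the average of its scores
# meets a fixed threshold. No model is trained; it is a single mean and
# a single comparison.
APPROVAL_THRESHOLD = 60.0  # invented value for illustration only

def approve(scores: list[float], threshold: float = APPROVAL_THRESHOLD) -> bool:
    """Return True if the mean of the scores meets the threshold."""
    if not scores:
        raise ValueError("at least one score is required")
    return mean(scores) >= threshold

if __name__ == "__main__":
    print(approve([55.0, 70.0, 65.0]))  # mean is about 63.3, so True
    print(approve([40.0, 50.0]))        # mean is 45.0, so False
```

If a rule like this fed a decision in, say, credit scoring, whether it falls within the AI definition (and therefore potentially within the high-risk category) is the kind of question that needs clarifying.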

At a simple level the regulations give a framework for categorising AI systems and defining the controls that need to be put in place for each category. The image below is typically used to represent these categories.

The regulations will require companies to invest a lot of time and money in ensuring compliance. This will involve training, support, audits, oversight, assessments, etc., not just initially but on an annual basis, with some reports estimating a cost of several tens of thousands of euro per model per year. Again, we can expect some clarification of this, as the costs of compliance may far exceed the financial benefit of using the AI.

At the same time, many other countries are looking at introducing similar regulations or laws. Many of these are complementary to each other, and perhaps there is a degree of each country watching what the others are doing. This helps to ensure a level playing field around the globe, which in turn will make it easier for companies to assess their compliance, reduce their workload and ensure they are complying with all requirements.

Most countries within the EU are creating their own AI Strategies, to support development and job creation, all within the boundaries set by the EU AI Regulations. Here are details of Ireland’s AI Strategy.

Watch this space for more posts and details about the EU AI Regulations.

Ireland AI Strategy (2021)

Over the past year or more there has been a significant increase in publications, guidelines, regulations/laws and statements of intent relating to Artificial Intelligence (AI), which has been attracting a lot of attention. Most of this attention has focused on how to control the ways AI is used across a wide range of use cases. We have heard and read many stories of AI being used in questionable and unethical scenarios. These have, to a certain extent, given AI a bit of a bad name. Some of this is justified and some is not, but it does allow us to question the ethical use of these technologies. But AI, and the technologies underpinning it, are not all bad. Most have been developed for good purposes, and as these technologies mature they sometimes get used in scenarios that are less good.

We constantly need to develop new technologies and deploy these in real use scenarios. Ireland has a long history as a leader in the IT industry, with many of the top 100+ IT companies in the world having research and development operations in Ireland, as well as many service suppliers. The Irish government recently released the National AI Strategy (2021).

“The National AI Strategy will serve as a roadmap to an ethical, trustworthy and human-centric design, development, deployment and governance of AI to ensure Ireland can unleash the potential that AI can provide”. “Underpinning our Strategy are three core principles to best embrace the opportunities of AI – adopting a human-centric approach to the application of AI; staying open and adaptable to innovations; and ensuring good governance to build trust and confidence for innovation to flourish, because ultimately if AI is to be truly inclusive and have a positive impact on all of us, we need to be clear on its role in our society and ensure that trust is the ultimate marker of success.” Robert Troy, Minister of State for Trade Promotion, Digital and Company Regulation.

The Strategy identifies eight strands, each setting out how Ireland can be an international leader in using AI to benefit the economy and society.

- Building public trust in AI

  - Strand 1: AI and society

  - Strand 2: A governance ecosystem that promotes trustworthy AI

- Leveraging AI for economic and societal benefit

  - Strand 3: Driving adoption of AI in Irish enterprise

  - Strand 4: AI serving the public

- Enablers for AI

  - Strand 5: A strong AI innovation ecosystem

  - Strand 6: AI education, skills and talent

  - Strand 7: A supportive and secure infrastructure for AI

- Strand 8: Implementing the Strategy

Each strand sets out a clear list of objectives and the strategic actions for achieving them at national, EU and global level.

Check out the full document here.
