Artificial Intelligence

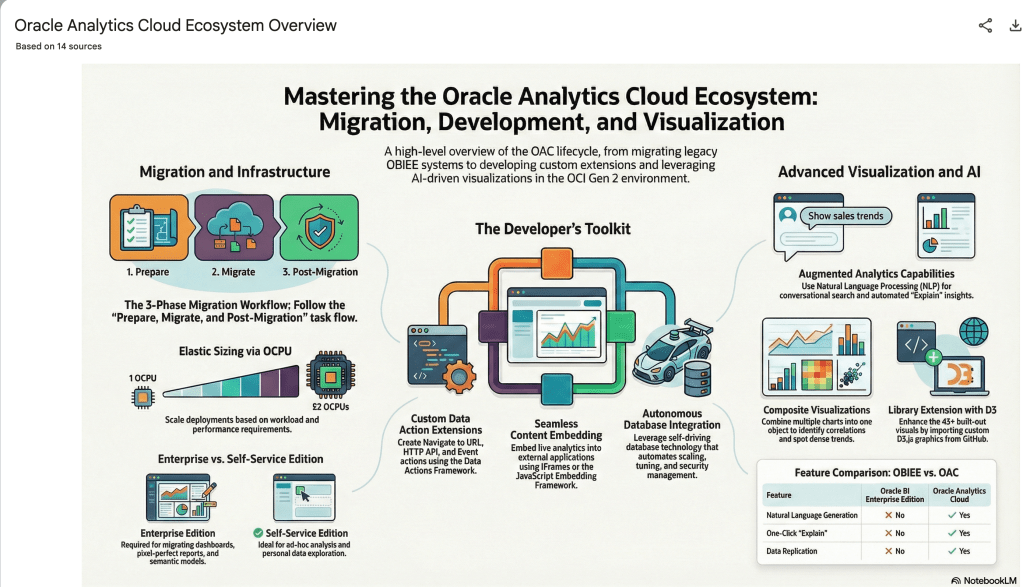

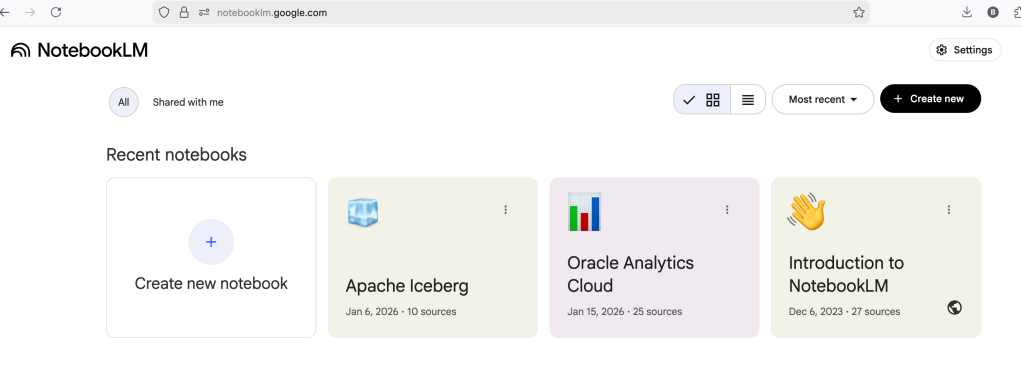

Using NotebookLM to help with understanding Oracle Analytics Cloud or any other product

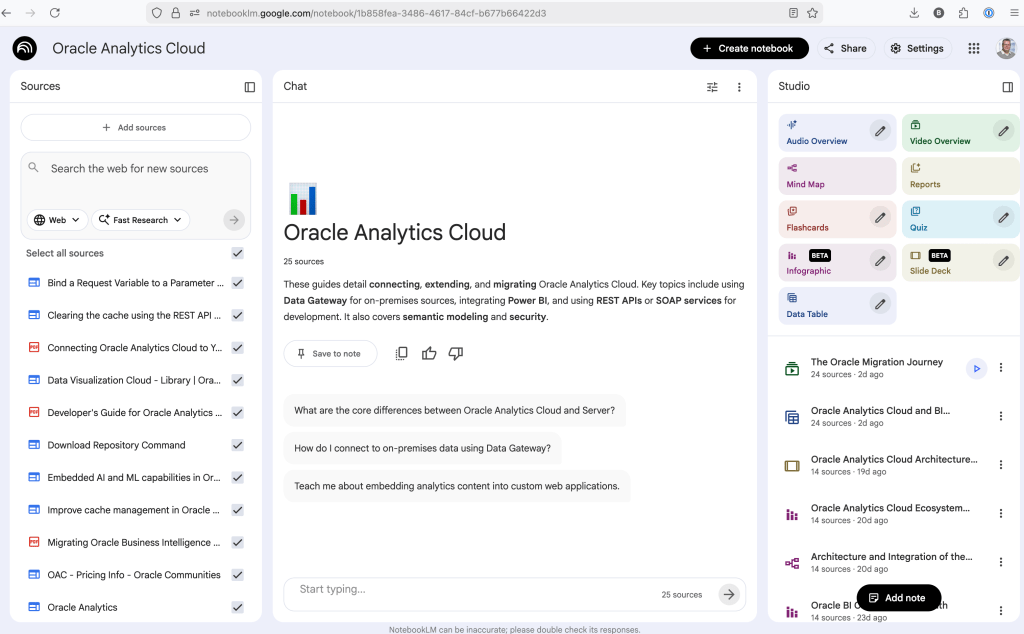

Over the past few months, we’ve seen a plethora of new LLM-related products and agents being released. One of these is NotebookLM from Google. The official description says “NotebookLM is an AI-powered research and note-taking tool from Google Labs that allows users to ground a large language model (like Gemini) in their own documents, such as PDFs, Google Docs, website URLs, or audio, acting as a personal, intelligent research assistant. It facilitates summarizing, analyzing, and querying information within these specific sources to create study guides, outlines, and, notably, “Audio Overviews” (podcast-style summaries)”.

Let’s have a look at using NotebookLM to help with answering questions and how it can help with understanding Oracle Analytics Cloud (OAC).

Yes, you’ll need a Google account, and Yes you need to be OK with uploading your documents to NotebookLM. Make sure you are not breaking any laws (IP, GDPR, etc). It’s really easy to create your first notebook. Simply click on ‘Create new notebook’.

When the notebook opens, you can add your documents and webpages to it. These can be PDFs, audio files, text files, etc. Currently, there seems to be a limit of 50 documents and webpages that can be added.

The main part of NotebookLM provides a chatbot where you can ask questions, and NotebookLM will search through the documents and webpages to formulate an answer. In addition to this, there are features that allow you to generate an Audio Overview, Video Overview, Mind Map, Reports, Flashcards, Quiz, Infographic, Slide Deck and a Data Table.

Before we look at some of these and what they have created for Oracle Analytics Cloud, there is a small warning. Some of these can take a long time to complete, that is, if they complete. I’ve had to run some of these features multiple times to get them to create. I’ve run all of the features, and the output from these can be seen on the right-hand side of the above image.

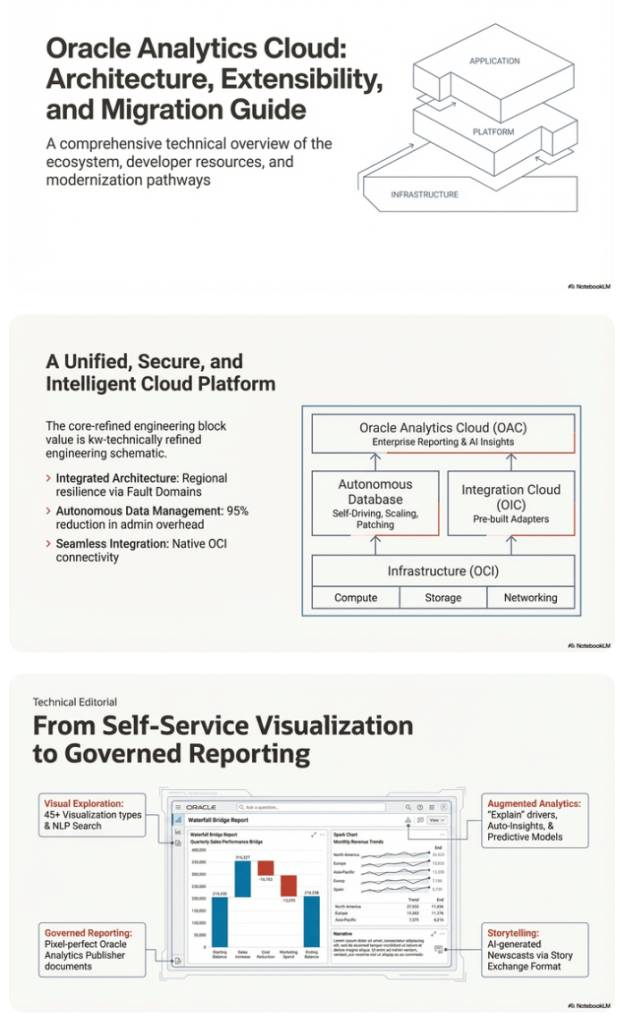

It created a 15-slide presentation on Oracle Analytics Cloud and its various features, and a five minute video on migrating OAC.

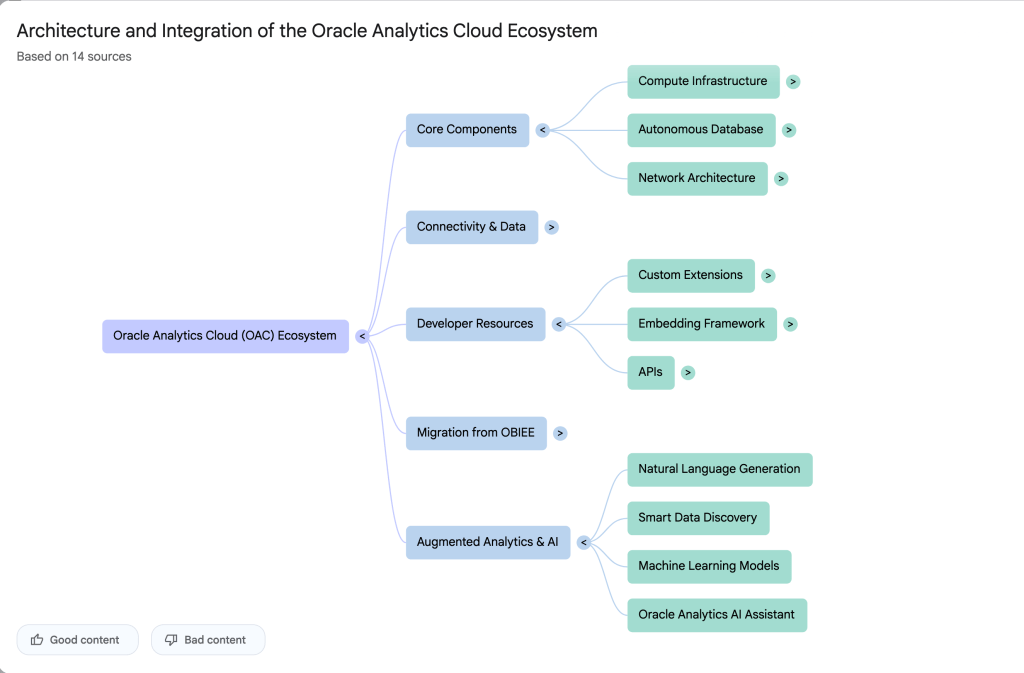

It also created a Mind-map, and an Infographic.

OCI Text to Speech example

In this post, I’ll walk through the steps to get a very simple example of Text-to-Speech working. This example builds upon my previous posts on OCI Language, OCI Speech and others, so make sure you check out those posts.

The first thing you need to be aware of, and to check before you proceed, is whether Text-to-Speech is available in your region. At the time of writing, this feature was only available in Phoenix, which is one of the cloud regions I have access to. There are plans to roll it out to other regions, but I’m not aware of the timeline for this. Although you might see Speech listed on your AI menu in OCI, that does not guarantee the Text-to-Speech feature is available. What it does mean is that the speech transcription feature is available.

So if Text-to-Speech is available in your region, the following will get you up and running.

The first thing you need to do is read in the Config file from the OS.

#initial setup, read Config file, create OCI Client

import oci

from oci.config import from_file

##########

from oci_ai_speech_realtime import RealtimeSpeechClient, RealtimeSpeechClientListener

from oci.ai_speech.models import RealtimeParameters

##########

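CONFIG_PROFILE = "DEFAULT"

# COMPARTMENT_ID is used in the calls below; replace this placeholder with your own compartment OCID
COMPARTMENT_ID = "<your-compartment-ocid>"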

config = oci.config.from_file('~/.oci/config', profile_name=CONFIG_PROFILE)

###

ai_speech_client = oci.ai_speech.AIServiceSpeechClient(config)

###

print(config)

### Update region to point to Phoenix

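config.update({'region':'us-phoenix-1'})

# Re-create the client so it uses the updated region
ai_speech_client = oci.ai_speech.AIServiceSpeechClient(config)

A simple little test to see if the Text-to-Speech feature is enabled for your region is to display the available list of voices.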

list_voices_response = ai_speech_client.list_voices(

compartment_id=COMPARTMENT_ID,

display_name="Text-to-Speech")

# opc_request_id="1GD0CV5QIIS1RFPFIOLF<unique_ID>")

# Get the data from response

print(list_voices_response.data)

This produces a long JSON object with many characteristics of the available voices. A simpler listing gives just the name, gender and language of each voice.

# Print one line per voice: name, gender and language
for voice in list_voices_response.data.items:
    print(voice.display_name + ' [' + voice.gender + ']\t' + voice.language_description)

------

Brian [MALE] English (United States)

Annabelle [FEMALE] English (United States)

Bob [MALE] English (United States)

Stacy [FEMALE] English (United States)

Phil [MALE] English (United States)

Cindy [FEMALE] English (United States)

Brad [MALE] English (United States)

Richard [MALE] English (United States)

Now let’s set up a Text-to-Speech example using the simple text, “Hello. My name is Brendan and this is an example of using Oracle OCI Speech service”. First, let’s define a function to save the audio to a file.

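def save_audi_response(data, filename='tts_output.mp3'):
    # filename was not defined in the original listing; this default name is a placeholder
    # Write the streamed audio to disk chunk by chunk (the with block closes the file)
    with open(filename, 'wb') as f:
        for b in data.iter_content():
            f.write(b)

We can now establish a connection, define the text, call the OCI Speech function to create the audio, and then save the audio file.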

import IPython.display as ipd

# Initialize service client with default config file

ai_speech_client = oci.ai_speech.AIServiceSpeechClient(config)

TEXT_DEMO = "Hello. My name is Brendan and this is an example of using Oracle OCI Speech service"

#speech_response = ai_speech_client.synthesize_speech(compartment_id=COMPARTMENT_ID)

speech_response = ai_speech_client.synthesize_speech(

synthesize_speech_details=oci.ai_speech.models.SynthesizeSpeechDetails(

text=TEXT_DEMO,

is_stream_enabled=True,

compartment_id=COMPARTMENT_ID,

configuration=oci.ai_speech.models.TtsOracleConfiguration(

model_family="ORACLE",

model_details=oci.ai_speech.models.TtsOracleTts2NaturalModelDetails(

model_name="TTS_2_NATURAL",

voice_id="Annabelle"),

speech_settings=oci.ai_speech.models.TtsOracleSpeechSettings(

text_type="SSML",

sample_rate_in_hz=18288,

output_format="MP3",

speech_mark_types=["WORD"])),

audio_config=oci.ai_speech.models.TtsBaseAudioConfig(config_type="BASE_AUDIO_CONFIG") #, save_path='I'm not sure what this should be')

) )

# Get the data from response

#print(speech_response.data)

save_audi_response(speech_response.data)

How to Create an Oracle Gen AI Agent

In this post, I’ll walk you through the steps needed to create a Gen AI Agent on Oracle Cloud. We have seen lots of solutions offered by many different providers for Gen AI Agents. This post focuses on just what is available on Oracle Cloud. You can create a Gen AI Agent manually. However, testing and fine-tuning based on various chunking strategies can take some time. With the automated options available on Oracle Cloud, you don’t have to worry about chunking. It handles all the steps automatically for you. This means you need to be careful when using it. Allocate some time for testing to ensure it meets your requirements. The steps below point out some checkboxes. You need to check them to ensure you generate a more complete knowledge base and outcome.
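To make "chunking strategy" concrete: when building an agent by hand, each document is split into pieces before indexing, and the piece size and overlap are the things you would tune. The following is a minimal sketch of one common strategy (fixed-size word windows with overlap). It is an illustration only and not how the Oracle service chunks documents; the function name and default sizes are assumptions.

```python
def chunk_words(text, chunk_size=200, overlap=40):
    """Split text into overlapping chunks of at most chunk_size words."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


sample = "To be or not to be that is the question"
for chunk in chunk_words(sample, chunk_size=4, overlap=1):
    print(chunk)
```

Each chunk repeats the last word of the previous one, which is the kind of overlap used to avoid cutting a passage in half at a chunk boundary.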

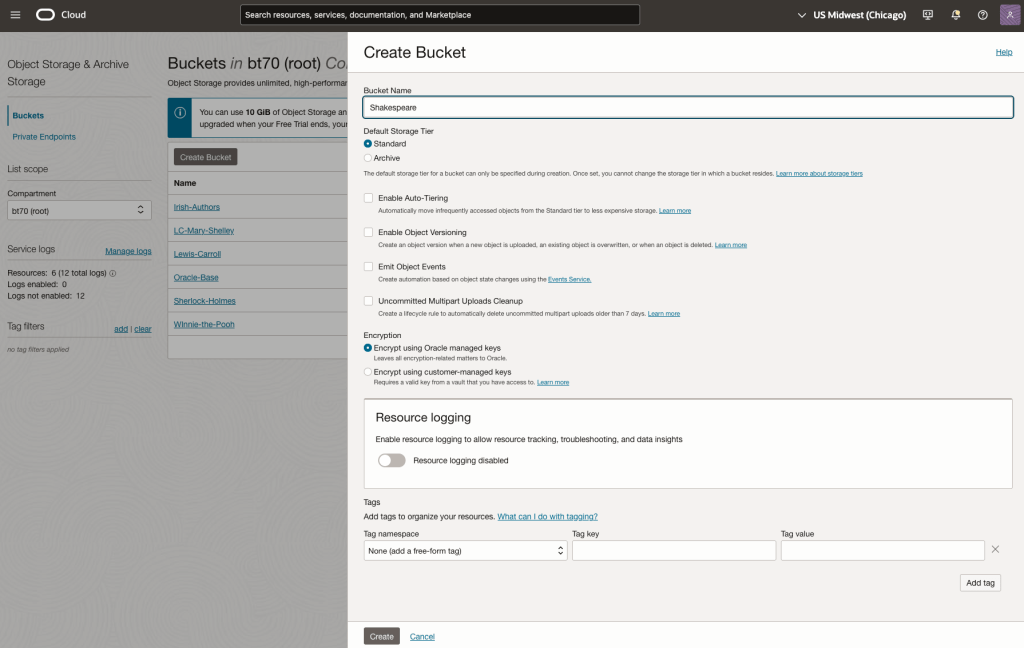

For my example scenario, I’m going to build a Gen AI Agent for some of the works by Shakespeare. I got the text of several plays from the Gutenberg Project website. The process for creating the Gen AI Agent is:

Step-1 Load Files to a Bucket on OCI

Create a bucket called Shakespeare.

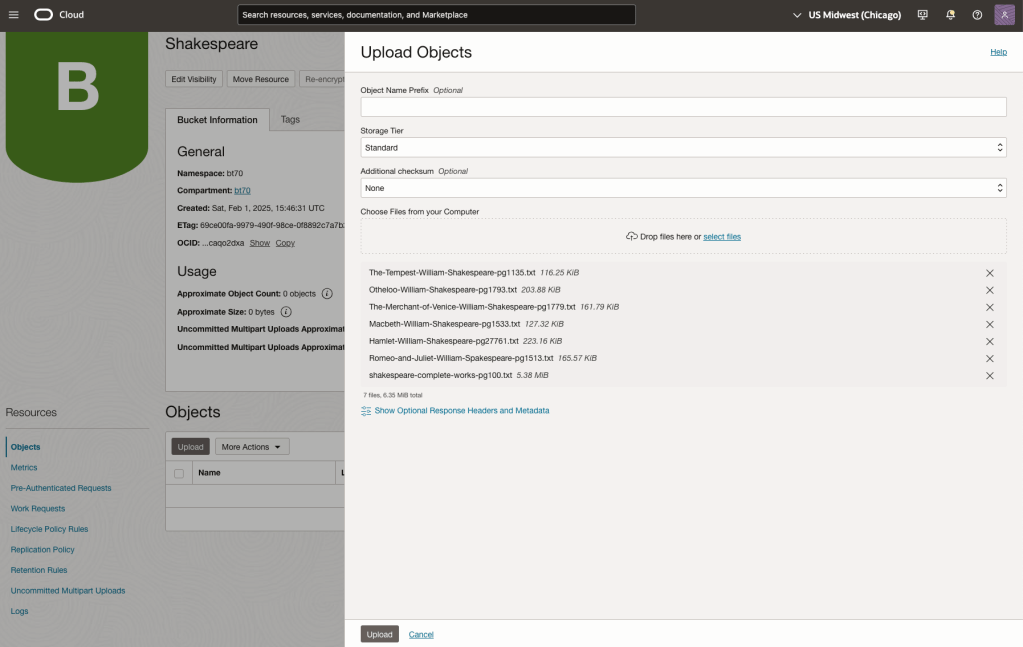

Load the files from your computer into the Bucket. These files were obtained from the Gutenberg Project site.

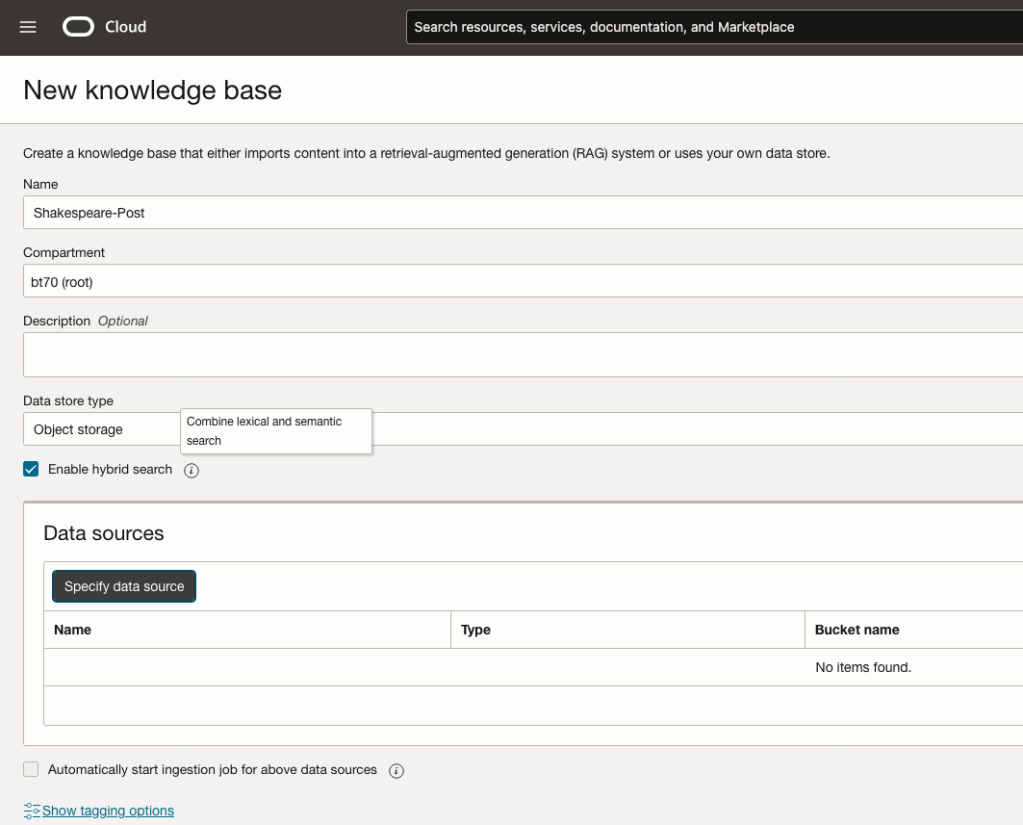
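The same upload can be scripted. The sketch below assumes an Object Storage client whose `put_object(namespace_name, bucket_name, object_name, put_object_body)` call matches the OCI Python SDK. The namespace value, the file contents and the `_RecordingClient` stand-in are hypothetical and are only there so the example runs without an OCI account.

```python
import tempfile
from pathlib import Path


def upload_folder(client, namespace, bucket, folder, pattern="*.txt"):
    """Upload every file in folder matching pattern; return the object names."""
    uploaded = []
    for path in sorted(Path(folder).glob(pattern)):
        with open(path, "rb") as body:
            client.put_object(namespace, bucket, path.name, body)
        uploaded.append(path.name)
    return uploaded


class _RecordingClient:
    """Hypothetical stand-in for oci.object_storage.ObjectStorageClient."""

    def __init__(self):
        self.objects = {}

    def put_object(self, namespace_name, bucket_name, object_name, put_object_body):
        self.objects[(bucket_name, object_name)] = put_object_body.read()


# Offline demonstration with two small made-up files
with tempfile.TemporaryDirectory() as folder:
    Path(folder, "hamlet.txt").write_text("To be, or not to be")
    Path(folder, "macbeth.txt").write_text("Is this a dagger")
    demo_client = _RecordingClient()
    uploaded_names = upload_folder(demo_client, "example-namespace", "Shakespeare", folder)

print(uploaded_names)
```

With the real SDK you would pass an `oci.object_storage.ObjectStorageClient(config)` object and your tenancy's Object Storage namespace instead of the stand-ins.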

Step-2 Define a Data Source (documents you want to use) & Create a Knowledge Base

Click on Create Knowledge Base and give it a name ‘Shakespeare’.

Check the ‘Enable Hybrid Search’ checkbox. This will enable both lexical and semantic search. [This is important]

Click on ‘Specify Data Source’

Select the Bucket from the drop-down list (Shakespeare bucket).

Check the ‘Enable multi-modal parsing’ checkbox.

Select the files to use or check the ‘Select all in bucket’ checkbox.

Click Create.

The Knowledge Base will be created. The files in the bucket will be parsed, and structured for search by the AI Agent. This step can take a few minutes as it needs to process all the files. This depends on the number of files to process, their format and the size of the contents in each file.

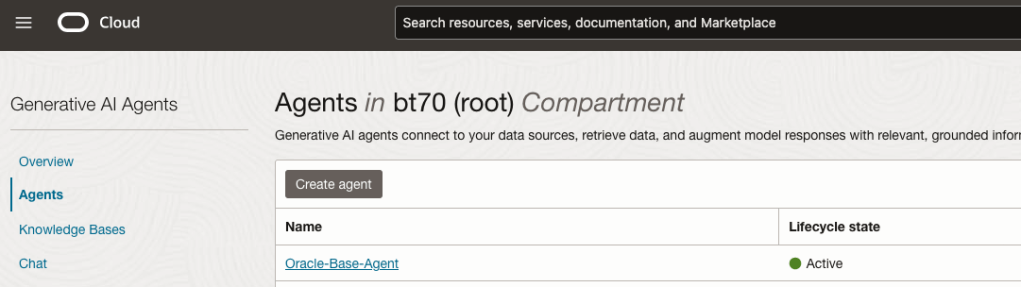
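As a rough intuition for what hybrid search does, the sketch below merges a lexical (keyword) ranking and a semantic (embedding) ranking using reciprocal rank fusion, one common way of combining result lists. This is an illustration under stated assumptions and not a description of how the Oracle service combines the two; the file names and rankings are made up.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Merge several ranked lists of document ids into one fused ranking."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken alphabetically for stable output
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


lexical = ["hamlet.txt", "macbeth.txt", "othello.txt"]     # keyword match order
semantic = ["macbeth.txt", "king_lear.txt", "hamlet.txt"]  # embedding similarity order
fused = reciprocal_rank_fusion([lexical, semantic])
print(fused)
```

Documents that rank well in both lists rise to the top, which is why enabling both kinds of search tends to give a more complete set of passages than either one alone.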

Step-3 Create Agent

Go back to the main Gen AI menu and select Agent and then Create Agent.

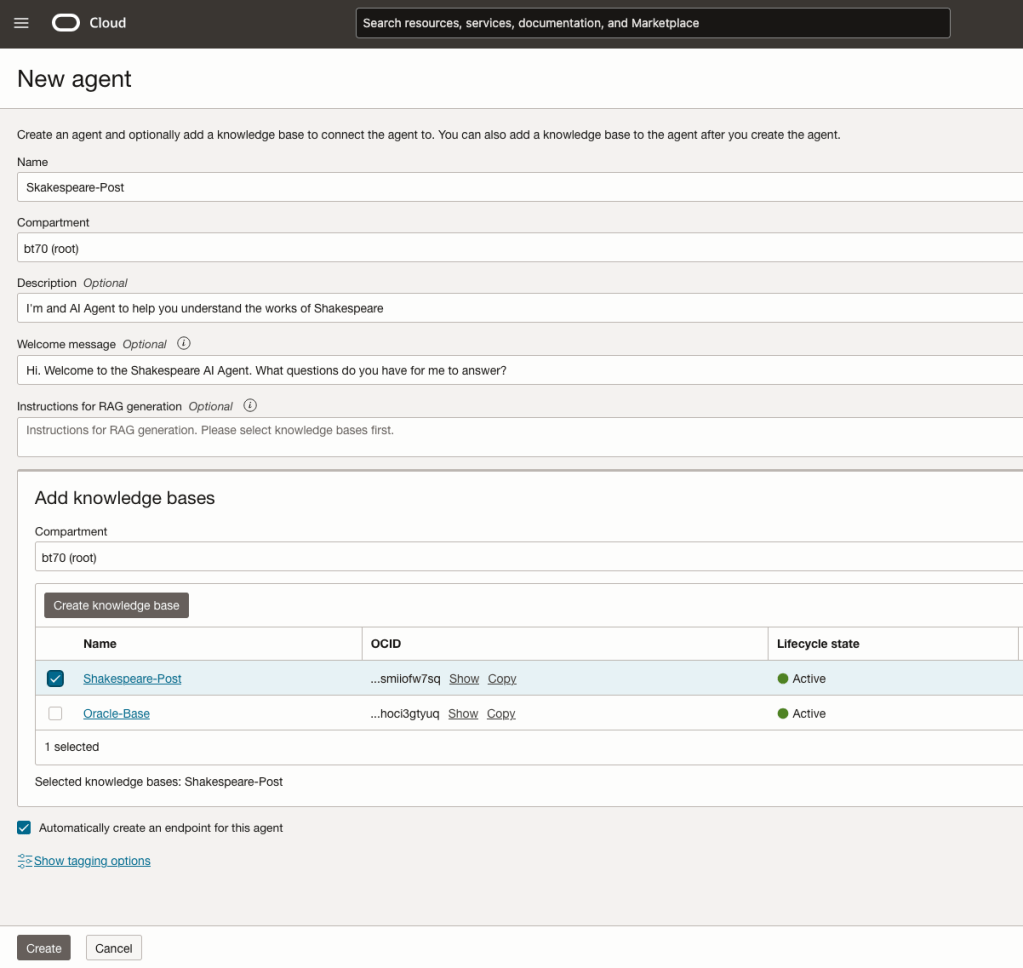

You can enter the following details:

- Name of the Agent

- Some descriptive information

- A Welcome message for people using the Agent

- Select the Knowledge Base from the list.

The checkbox for creating Endpoints should be checked.

Click Create.

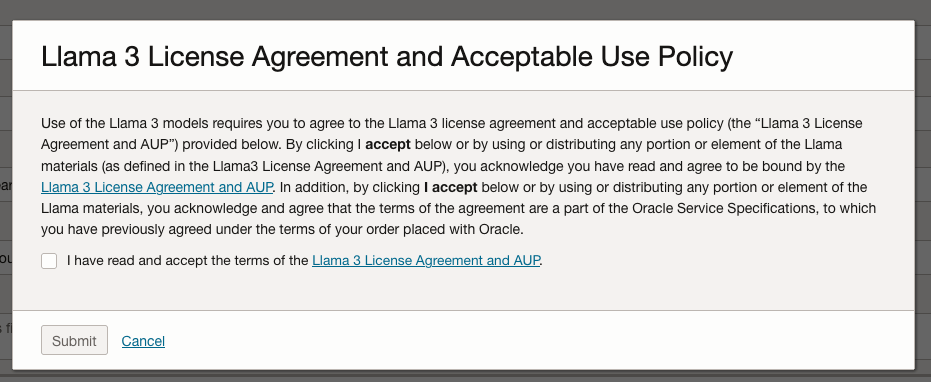

A pop-up window will appear asking you to agree to the Llama 3 License. Check this checkbox and click Submit.

After the agent has been created, check the status of the endpoints. These generally take a little longer to create, and you need these before you can test the Agent using the Chatbot.

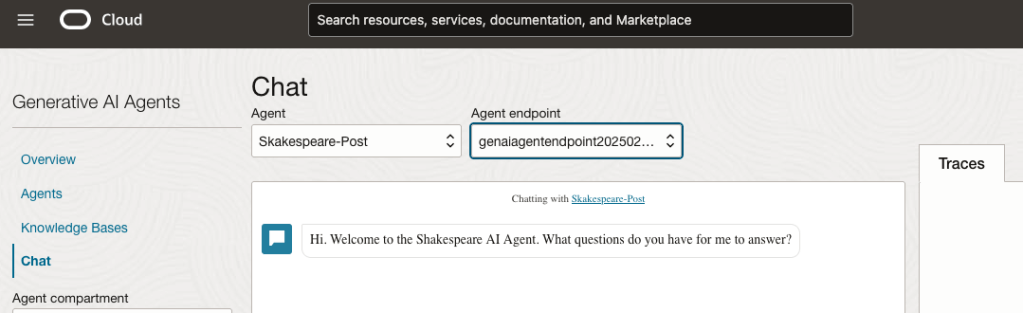
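If you script this check instead of refreshing the console, a small polling loop is enough. The sketch below is generic: `get_state` is any function that returns the endpoint's current lifecycle state as a string, and the state names `ACTIVE` and `FAILED` are assumptions about what the service reports.

```python
import time


def wait_until_active(get_state, timeout_s=600, interval_s=10, sleep=time.sleep):
    """Poll get_state() until it returns ACTIVE; raise on FAILED or timeout."""
    waited = 0
    while True:
        state = get_state()
        if state == "ACTIVE":
            return waited
        if state == "FAILED":
            raise RuntimeError("endpoint creation failed")
        if waited >= timeout_s:
            raise TimeoutError(f"endpoint still {state} after {waited}s")
        sleep(interval_s)
        waited += interval_s


# Simulated endpoint that becomes active on the third check (no real waiting)
states = iter(["CREATING", "CREATING", "ACTIVE"])
seconds_waited = wait_until_active(lambda: next(states), sleep=lambda s: None)
print(seconds_waited)
```

The `sleep` parameter is injectable only so the loop can be tried out without actually waiting.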

Step-4 Test using Chatbot

After verifying the endpoints have been created, you can open a Chatbot by clicking on ‘Chat’ from the menu on the left-hand side of the screen.

Select the name of the ‘Agent’ from the drop-down list e.g. Shakespeare-Post.

Select an end-point for the Agent.

After these have been selected you will see the ‘Welcome’ message. This was defined when creating the Agent.

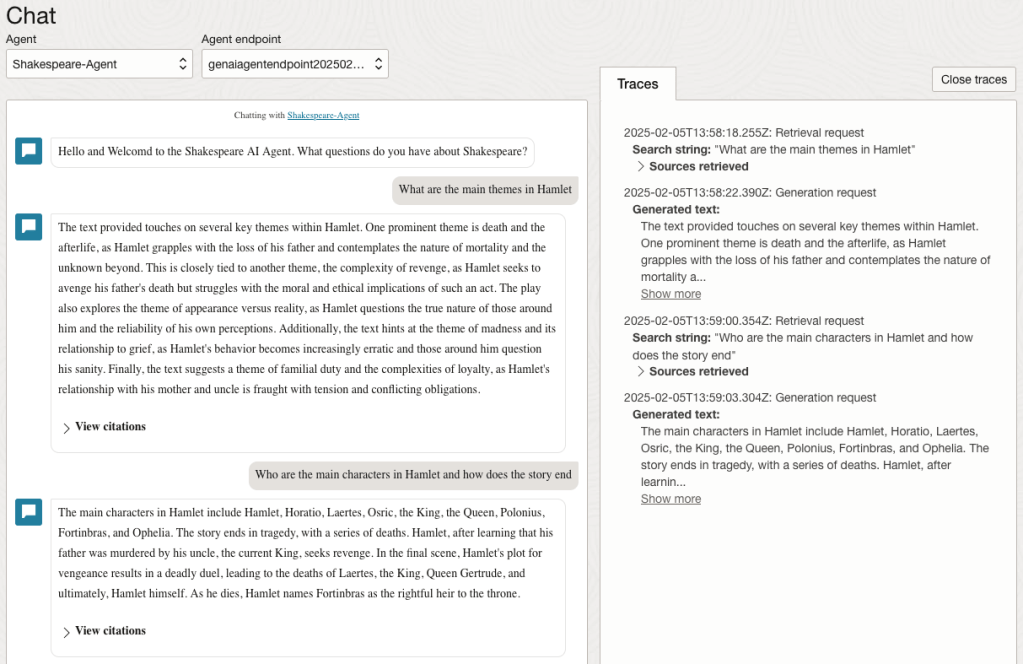

Here are a couple of examples of querying the works by Shakespeare.

In addition to giving a response to the questions, the Chatbot also lists the sections of the underlying documents and passages from those documents used to form the response/answer.

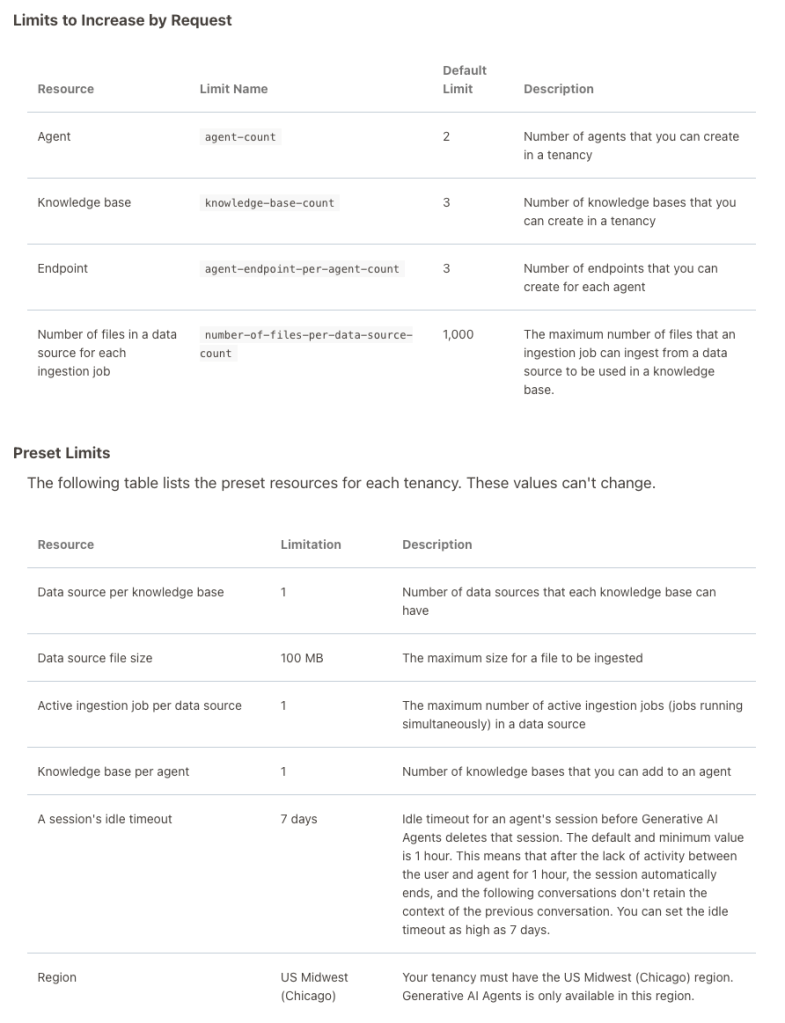

When creating Gen AI Agents, you need to be careful of two things. The first is the Cloud Region. Gen AI Agents are only available in certain Cloud Regions. If they aren’t available in your Region, you’ll need to request access to one of those or set up a new OCI account based in one of those regions. The second thing is the Resource Limits. At the time of writing this post, the following was allowed. Check out the documentation for more details. You might need to request that these limits be increased.

I’ll have another post showing how you can run the Chatbot on your computer or VM as a webpage.

AI Liability Act

Over the past few weeks we have seen a number of new Artificial Intelligence (AI) Acts or Laws either being proposed or reaching an advanced stage of enactment. One of these is the EU AI Liability Act, which is supposed to be enacted alongside, and work hand-in-hand with, the EU AI Act.

The two EU AI acts have different perspectives. The EU AI Act focuses on the technical perspective and on those who develop AI solutions. The EU AI Liability Act, on the other hand, takes the perspective of the end-user/consumer.

The aim of the EU AI Liability Act is to create a framework for trust in AI technology, and when a person has been harmed by the use of the AI, provides a structure to claim compensation. Just like other EU laws to protect the consumers from defective or harmful products, the AI Liability Act looks to do similar for when a person is harmed in some way by the use or application of AI.

Most of the examples given for how AI might harm a person include the use of robotics, drones, and AI in the recruitment process, where it automatically selects a candidate based on the AI algorithms. Other examples include data loss from or caused by tech products, smart-home systems, cyber security, and products where people are selected or excluded based on algorithms.

Harm can be difficult to define. Although some attempt has been made to define it in the Act, additional work is needed by the good people refining the Act to clarify the definition and how it can evolve post-enactment, so that additional scenarios can be included without needing updates to the Act, which can be a lengthy process. A similar task is being performed on the list of high-risk AI in the EU AI Act, where they are proposing to maintain a webpage listing these.

Vice-president for values and transparency, Věra Jourová, said that for AI tech to thrive in the EU, it is important for people to trust digital innovation. She added that the new proposals would give customers “tools for remedies in case of damage caused by AI so that they have the same level of protection as with traditional technologies”.

Didier Reynders, the EU’s justice commissioner, says, “The new rules apply when a product that functions thanks to AI technology causes damage and that this damage is the result of an error made by manufacturers, developers or users of this technology.”

The EU defines “an error” in this case to include not just mistakes in how the A.I. is crafted, trained, deployed, or functions, but also if the “error” is the company failing to comply with a lot of the process and governance requirements stipulated in the bloc’s new A.I. Act. The new liability rules say that if an organization has not complied with their “duty of care” under the new A.I. Act—such as failing to conduct appropriate risk assessments, testing, and monitoring—and a liability claim later arises, there will be a presumption that the A.I. was at fault. This creates an additional way of forcing compliance with the EU AI Act.

The EU Liability Act says that a court can now order a company using a high-risk A.I. system to turn over evidence of how the software works. A balancing test will be applied to ensure that trade secrets and other confidential information is not needlessly disclosed. The EU warns that if a company or organization fails to comply with a court-ordered disclosure, the courts will be free to presume the entity using the A.I. software is liable.

The EU Liability Act will go through some changes and refinement, with the aim of enacting it at the same time as the EU AI Act. How long this process will take is a little up in the air, considering the EU AI Act should have been adopted by now and we could already be in the two-year process for enactment. But the EU AI Act is still working its way through the different groups in the EU. There have been some indications these might conclude in 2023, but let’s wait and see. If the EU Liability Act is only starting the process now, there could be some additional delays if the EU wants both Acts to be effective at the same time.

OECD Framework for the Classification of AI Systems

Over the past few months we have seen more and more countries looking at how they can support and regulate the use and development of AI within their geographic areas. For those in Europe, a lot of focus has been on the draft AI Regulations. At the time of writing this post there has been a lot of politics going on in relation to the EU AI Regulations. Some of this has been around the definition of AI, what will be included and excluded in their different categories, who will be policing and enforcing the regulations, among lots of other things. We could end up with a very different set of regulations to what was included in the draft (published April 2021). It also looks like the enactment of the EU AI Regulations will be delayed to the end of 2022, with some people suggesting it would be towards mid-2023 before something formal happens.

I mentioned above that one of the things that may or may not change is the definition of AI within the EU AI Regulations. Although primarily focused on the inclusion/exclusion of biometric aspects, there are other refinements being proposed. When you look at what other geographic regions are doing, we start to see some common aspects in their definitions of AI, but we also see some differences. You can imagine the difficulties this will present in the global marketplace, given how AI touches upon all/many aspects of most businesses, their customers and their data.

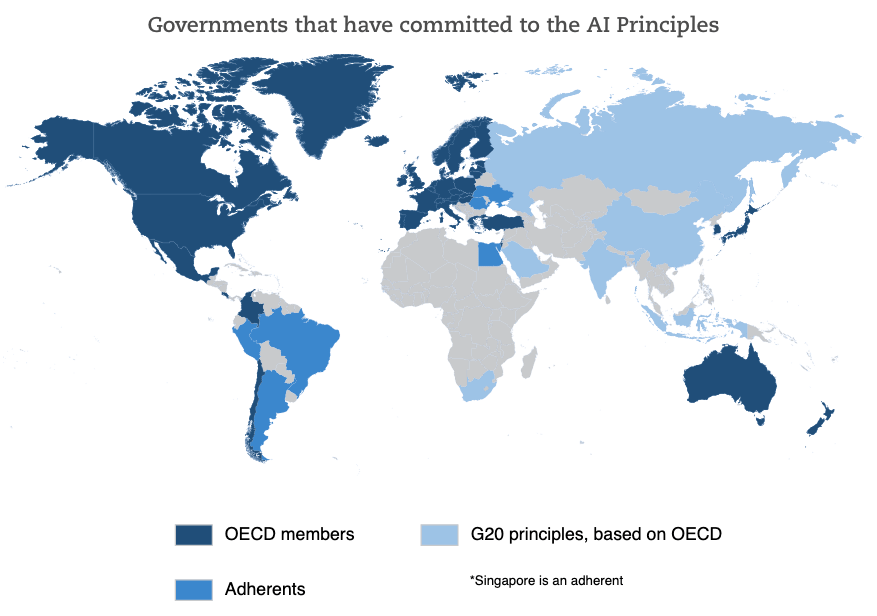

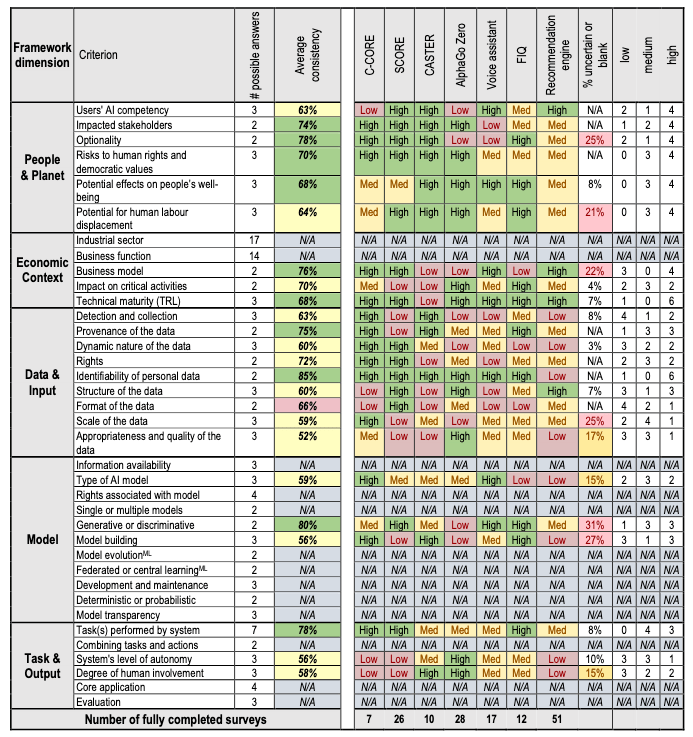

Most of you will have heard of the OECD. In recent weeks they have been working across all member countries towards a definition of AI and how different AI systems can be classified. They have called this the OECD Framework for the Classification of AI Systems.

The OECD Framework for the Classification of AI Systems is a tool for policy-makers, regulators, legislators and others so that they can assess the opportunities and risks that different types of AI systems present and to inform their national AI strategies.

The Framework links the technical characteristics of AI with the policy implications set out in the OECD AI Principles, which include:

- Inclusive growth, sustainable development and well-being

- Human-centred values and fairness

- Transparency and explainability

- Robustness, security and safety

- Accountability

The framework looks at different aspects depending on whether the AI is still within the lab (sandbox) environment or is live in production or in use in the field.

The framework goes into more detail on the various aspects that need to be considered for each of these. The working group has applied the framework to a number of different AI systems to illustrate how it can be used.

Check out the framework document, where it goes into more detail on each of the criteria listed above for each dimension of the framework.

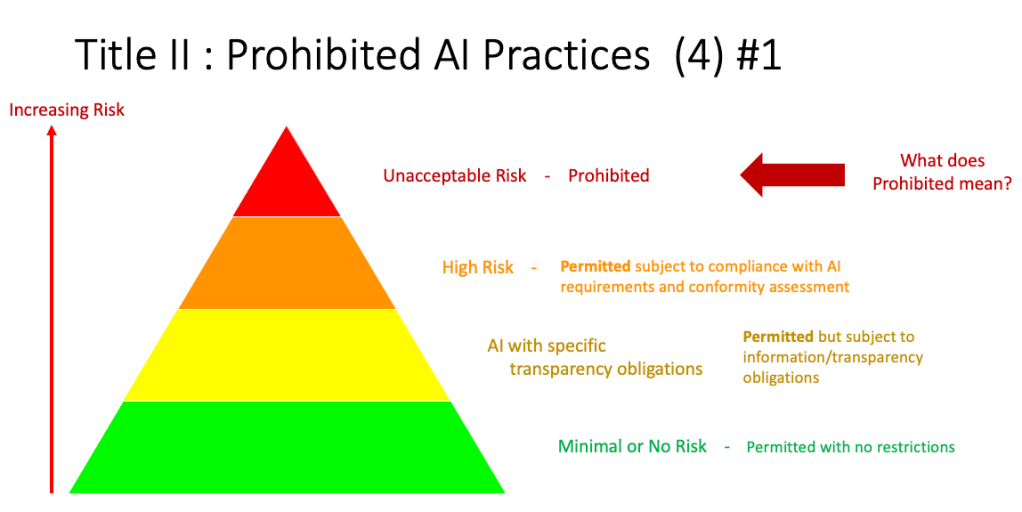

AI Categories in EU AI Regulations

The EU AI Regulations aim to provide a framework for addressing obligations for the use of AI applications in the EU. These applications can be created, operated by or procured by companies both inside the EU and outside the EU, on data/people within the EU. In a previous post I gave a fuller outline of the EU AI Regulations.

In this post I will look at the proposed categorisation of AI applications, what type of applications fall into each category and what potential impact this may have on the operators of the AI application. The following diagram illustrates the categories detailed in the EU AI Regulations. These will be detailed below.

Let’s have a closer look at each of these categories

Unacceptable Risk (Red section)

The proposed legislation sets out a regulatory structure that bans some uses of AI, heavily regulates high-risk uses and lightly regulates less risky AI systems. The regulations intend to prohibit certain uses of AI which are deemed to be unacceptable because of the risks they pose. These would include: deploying subliminal techniques or exploiting vulnerabilities of specific groups of persons due to their age or disability, in order to materially distort a person’s behavior in a manner that causes physical or psychological harm; ‘social scoring’ by public authorities; and ‘real time’ biometric identification in publicly accessible spaces. A more detailed version of this is:

- Designed or used in a manner that manipulates human behavior, opinions or decisions through choice architectures or other elements of user interfaces, causing a person to behave, form an opinion or take a decision to their detriment.

- Designed or used in a manner that exploits information or prediction about a person or group of persons in order to target their vulnerabilities or special circumstances, causing a person to behave, form an opinion or take a decision to their detriment.

- Indiscriminate surveillance applied in a generalised manner to all natural persons without differentiation. The methods of surveillance may include large scale use of AI systems for monitoring or tracking of natural persons through direct interception or gaining access to communication, location, meta data or other personal data collected in digital and/or physical environments or through automated aggregation and analysis of such data from various sources.

- General purpose social scoring of natural persons, including online. General purpose social scoring consists in the large scale evaluation or classification of the trustworthiness of natural persons [over certain period of time] based on their social behavior in multiple contexts and/or known or predicted personality characteristics, with the social score leading to detrimental treatment to natural person or groups.

There are some exemptions to these when such practices are authorised by law and are carried out [by public authorities or on behalf of public authorities] in order to safeguard public security and are subject to appropriate safeguards for the rights and freedoms of third parties in compliance with Union law.

High Risk (Orange section)

AI systems identified as high-risk include AI technology used in:

- Critical infrastructures (e.g. transport), that could put the life and health of citizens at risk;

- Educational or vocational training, that may determine the access to education and professional course of someone’s life (e.g. scoring of exams);

- Safety components of products (e.g. AI application in robot-assisted surgery);

- Employment, workers management and access to self-employment (e.g. CV-sorting software for recruitment procedures);

- Essential private and public services (e.g. credit scoring denying citizens opportunity to obtain a loan);

- Law enforcement that may interfere with people’s fundamental rights (e.g. evaluation of the reliability of evidence);

- Migration, asylum and border control management (e.g. verification of authenticity of travel documents);

- Administration of justice and democratic processes (e.g. applying the law to a concrete set of facts).

All high-risk AI applications will be subject to strict obligations before they can be put on the market:

- Adequate risk assessment and mitigation systems;

- High quality of the datasets feeding the system to minimise risks and discriminatory outcomes;

- Logging of activity to ensure traceability of results;

- Detailed documentation providing all information necessary on the system and its purpose for authorities to assess its compliance;

- Clear and adequate information to the user;

- Appropriate human oversight measures to minimise risk;

- High level of robustness, security and accuracy.

These can also be categorised as (i) Risk management; (ii) Data governance; (iii) Technical documentation; (iv) Record keeping (traceability); (v) Transparency and provision of information to users; (vi) Human oversight; (vii) Accuracy; (viii) Cybersecurity robustness.

There will be some exceptions to this when the AI application is required by governmental and law enforcement agencies in certain circumstances.

Limited Risk (Yellow section)

“non-high-risk” AI systems should be encouraged to develop codes of conduct intended to foster the voluntary application of the mandatory requirements applicable to high-risk AI systems.

AI applications within this Limited Risk category pose a limited risk, so transparency requirements are imposed. For example, AI systems which are intended to interact with natural persons must be designed and developed in such a way that users are informed they are interacting with an AI system, unless it is “obvious from the circumstances and the context of use.”

Minimal Risk (Green section)

The Minimal Risk category allows all other AI systems to be developed and used in the EU without legal obligations beyond existing legislation, for example AI-enabled video games or spam filters. Some discussions suggest the vast majority of AI systems currently used in the EU fall into this category, where they represent minimal or no risk.

Ireland AI Strategy (2021)

Over the past year or more there has been a significant increase in publications, guidelines, regulations/laws and various other intentions relating to these. Artificial Intelligence (AI) has been attracting a lot of attention. Most of this attention has been focused on how to put controls on how AI is used across a wide range of use cases. We have heard and read lots and lots of stories of how AI has been used in questionable and unethical scenarios. These have, to a certain extent, given the use of AI a bit of a bad label. Some of this is justified and some is not, but it does allow us to question the ethical use of these technologies. But not all AI, and the underpinning technologies, are bad. Most have been developed for good purposes, and as these technologies mature they sometimes get used in scenarios that are less good.

We constantly need to develop new technologies and deploy these in real use scenarios. Ireland has a long history as a leader in the IT industry, with many of the top 100+ IT companies in the world having research and development operations in Ireland, as well as many service suppliers. The Irish government recently released the National AI Strategy (2021).

“The National AI Strategy will serve as a roadmap to an ethical, trustworthy and human-centric design, development, deployment and governance of AI to ensure Ireland can unleash the potential that AI can provide”. “Underpinning our Strategy are three core principles to best embrace the opportunities of AI – adopting a human-centric approach to the application of AI; staying open and adaptable to innovations; and ensuring good governance to build trust and confidence for innovation to flourish, because ultimately if AI is to be truly inclusive and have a positive impact on all of us, we need to be clear on its role in our society and ensure that trust is the ultimate marker of success.” Robert Troy, Minister of State for Trade Promotion, Digital and Company Regulation.

Eight different strands are identified, and each sets out how Ireland can be an international leader in using AI to benefit the economy and society.

- Building public trust in AI

  - Strand 1: AI and society

  - Strand 2: A governance ecosystem that promotes trustworthy AI

- Leveraging AI for economic and societal benefit

  - Strand 3: Driving adoption of AI in Irish enterprise

  - Strand 4: AI serving the public

- Enablers for AI

  - Strand 5: A strong AI innovation ecosystem

  - Strand 6: AI education, skills and talent

  - Strand 7: A supportive and secure infrastructure for AI

- Strand 8: Implementing the Strategy

Each strand has a clear list of objectives and strategic actions for achieving it at national, EU and global level.

Check out the full document here.

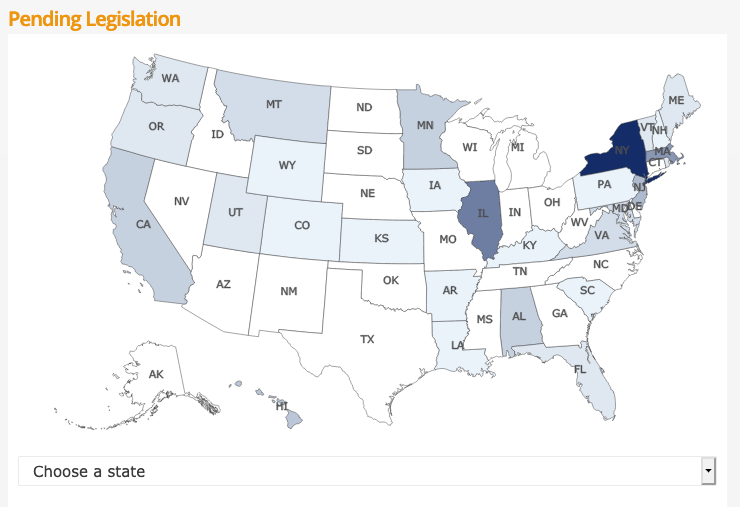

Regulating AI around the World

Continuing my series of blog posts on various ML and AI regulations and laws, this post will look at what some other countries are doing to regulate ML and AI, with a particular focus on facial recognition and more advanced applications of ML. Some of the examples listed below are work-in-progress, while others such as EU AI Regulations are at a more advanced stage with introduction of regulations and laws.

[Note: What is listed below is in addition to various data protection regulations each country or region has implemented in recent years, for example EU GDPR and similar]

Things are moving fast in this area, with more countries introducing regulations all the time. The following list is by no means exhaustive, but it gives you a feel for what is happening around the world and what will be coming to your country very soon. The EU and (parts of) the USA are leading in these areas, and it is important to know that these regulations and laws will impact most AI/ML applications and work around the world. If you are processing data about an individual in these geographic regions then these laws affect you and what you can do. It doesn’t matter where you live.

New Zealand

New Zealand, along with the World Economic Forum (WEF), is developing a governance framework for AI regulations. It is focusing on three areas:

- Inclusive national conversation on the use of AI

- Enhancing the understanding of AI and its application to inform policy making

- Mitigation of risks associated with AI applications

Singapore

The Personal Data Protection Commission has released a framework called the ‘Model AI Governance Framework’, to provide a model for addressing ethical and governance issues when deploying AI applications. It supports having explainable AI, allowing for clear and transparent communications on how the AI applications work. The idea is to build understanding and trust in these technological solutions. It consists of four principles:

- Internal Governance Structures and Measures

- Determining the Level of Human Involvement in AI-augmented Decision Making

- Operations Management, minimizing bias, explainability and robustness

- Stakeholder Interaction and Communication.

USA

Progress within the USA has been divided between local and state-level initiatives. California is one example, where different regions have implemented their own laws while there have also been attempts at laws at state level. But California is not alone, with almost half of the states introducing laws restricting the use of facial recognition and protecting personal data. In addition to what is happening at state level, there have been some orders and laws introduced at federal government level.

- Executive Order on Promoting the Use of Trustworthy Artificial Intelligence in the Federal Government

- This provides guidelines to help Federal Agencies with AI adoption and to foster public trust in the technology. It directs agencies to ensure the design, development, acquisition and use of AI is done in a manner that protects privacy, civil rights, and civil liberties. It includes the following actions:

- Principles for the Use of AI in Government

- Common Policy for Implementing Principles

- Catalogue of Agency Use Cases of AI

- Enhanced AI Implementation Expertise

- Government – Facial Recognition and Biometric Technology Moratorium Act of 2020. Limits the use of biometric surveillance systems such as facial recognition systems by federal and state government entities

USA – Washington State

Many of the States in the USA have enacted laws on Facial Recognition and the use of AI. There are too many to list here, but go to this website to explore what each State has done. Taking Washington State as an example, it has enacted a law prohibiting the use of facial recognition technology for ongoing surveillance and limiting its use to acquiring evidence of serious criminal offences following authorization of a search warrant.

Canada

The Privacy Commissioner of Canada introduced the Regulatory Framework for AI, and calls for legislation supporting the benefits of AI while upholding privacy of individuals. Recommendations include:

- allow personal information to be used for new purposes towards responsible AI innovation and for societal benefits

- authorize these uses within a rights-based framework that would entrench privacy as a human right and a necessary element for the exercise of other fundamental rights

- create a right to meaningful explanation for automated decisions and a right to contest those decisions to ensure they are made fairly and accurately

- strengthen accountability by requiring a demonstration of privacy compliance upon request by the regulator

- empower the OPC to issue binding orders and proportional financial penalties to incentivize compliance with the law

- require organizations to design AI systems from their conception in a way that protects privacy and human rights

The above list is just a sample of what is happening around the world, and we are sure to see lots more of this over the next few years. There are lots of pros and cons to these regulations and laws. One of the biggest challenges facing people working with AI and ML technologies is knowing what is and isn’t possible or allowed, as most solutions and applications will operate across many geographic regions.

Truth, Fairness & Equality in AI – US Federal Trade Commission

Over the past few months we have seen a growing level of communication, guidelines, regulations and legislation for the use of Machine Learning (ML) and Artificial Intelligence (AI). Here, Artificial Intelligence is treated as a superset covering all machine- or computer-generated or applied intelligence, that is, any logic that makes a decision or calculation.

Although the EU has been leading the charge in this area, other countries have been following suit with similar guidelines and legislation.

There have been several examples of this in the USA over the past couple of years. Some of this has been prefaced by the debates and issues around the use of facial recognition. Some states in the USA have introduced laws to control what can and cannot be done but, at the time of writing, there is no federal law governing the whole of the USA.

In April 2021, the US Federal Trade Commission published an article titled ‘Aiming for truth, fairness, and equity in your company’s use of AI’.

They provide guidelines on how to build AI applications while avoiding potential issues such as bias and unfair outcomes, and at the same time incorporating transparency. In addition to the recommendations in the report, they point to three laws (which have been around for some time) which are important for developers of AI applications. These include:

- Section 5 of the FTC Act: The FTC Act prohibits unfair or deceptive practices. That would include the sale or use of – for example – racially biased algorithms.

- Fair Credit Reporting Act: The FCRA comes into play in certain circumstances where an algorithm is used to deny people employment, housing, credit, insurance, or other benefits.

- Equal Credit Opportunity Act: The ECOA makes it illegal for a company to use a biased algorithm that results in credit discrimination on the basis of race, color, religion, national origin, sex, marital status, age, or because a person receives public assistance.

These guidelines aim for AI that is used truthfully, fairly and equitably, and they cover both the technical and non-technical sides of AI applications. The guidelines include:

- Start with the right direction: Get your data set right. What is missing? Is it balanced? Look at how to improve the data set and address any shortcomings, and note that this may limit how you use the model.

- Watch out for discriminatory outcomes: Are the outcomes biased? If the model works for your data set and scenario, will it work in others, e.g. applying the model in a different hospital? Regular and detailed testing is needed to ensure no discrimination gets included.

- Embrace transparency and independence: Think about how to incorporate transparency from the beginning of the AI project. Use international best practice and standards, have independent audits and publish the results, and open the data and source code to outside inspection.

- Don’t exaggerate what your algorithm can do or whether it can deliver fair or unbiased results: That kind of says it all really. Under the FTC Act, your statements to business customers and consumers must be truthful, non-deceptive and backed up by evidence. With the rush to introduce new technologies and products, there can be a tendency to exaggerate what they can do. Don’t do this.

- Tell the truth about how you use data: Be careful about what data you use and how you obtained it. For example, Facebook applied facial recognition software to users’ pictures by default, asking for permission but ignoring the answer, which misrepresented what the customer/consumer had been told.

- Do more good than harm: A practice is unfair if it causes more harm than good, for example making decisions based on race, color, religion, sex, etc. If the model causes or is likely to cause substantial injury to consumers that is not reasonably avoidable by consumers and not outweighed by countervailing benefits to consumers or to competition, the model is unfair.

- Hold yourself accountable: If you use AI, in any form, you will be held accountable for the algorithm’s performance.
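The first two guidelines (checking the data set and watching for discriminatory outcomes) can be partly illustrated with a few lines of Python. This is only a minimal sketch, not anything prescribed by the FTC: the record layout and the field names `group`, `income` and `approved` are made up for illustration, and comparing approval rates between groups is just one simple check among many that a real review would need.

```python
from collections import Counter, defaultdict

def missing_rate(rows, field):
    """Fraction of rows where `field` is absent or None."""
    if not rows:
        return 0.0
    missing = sum(1 for r in rows if r.get(field) is None)
    return missing / len(rows)

def group_share(rows, group_field):
    """Share of rows belonging to each group (is the data set balanced?)."""
    counts = Counter(r.get(group_field) for r in rows)
    total = len(rows)
    return {g: c / total for g, c in counts.items()}

def positive_rate_by_group(rows, group_field, outcome_field):
    """Fraction of positive (truthy) outcomes within each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for r in rows:
        g = r.get(group_field)
        totals[g] += 1
        if r.get(outcome_field):
            positives[g] += 1
    return {g: positives[g] / totals[g] for g in totals}

def rate_ratio(rates):
    """Lowest group rate divided by the highest; 1.0 means equal rates."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0

# Hypothetical records; the field names are assumptions for this sketch.
records = [
    {"group": "A", "income": 40, "approved": True},
    {"group": "A", "income": 55, "approved": True},
    {"group": "A", "income": None, "approved": False},
    {"group": "B", "income": 42, "approved": False},
    {"group": "B", "income": 61, "approved": True},
    {"group": "B", "income": 38, "approved": False},
]

rates = positive_rate_by_group(records, "group", "approved")
print("Group share:", group_share(records, "group"))
print("Missing income:", missing_rate(records, "income"))
print("Approval rate by group:", rates)
print("Lowest/highest rate ratio:", rate_ratio(rates))
```

A ratio well below 1.0 does not prove discrimination on its own, but it is the kind of signal that should trigger the regular and detailed testing the guidelines call for.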

Some of these guidelines build upon those from April 2020, on Using Artificial Intelligence and Algorithms, where there is a focus on fair use of data, transparency of data usage, algorithms and models, the ability to clearly explain how a decision was made, and ensuring all decisions made are fair and unbiased.

Working with AI products and applications can be challenging in many different ways. Most of the focus, information and examples are about building these. But that can be the easy part. With the growing number of legal aspects from different regions around the world, the task of managing AI products and applications is becoming more and more complicated.

The EU AI Regulations support the role of a person to oversee these different aspects, and this is something we will see job adverts for very soon, no matter what country or region you live in. The people in these roles will help steer and support companies through this difficult and evolving area, to ensure compliance with local as well as global legal requirements.

Responsible AI: Principles & Standards around the World

During 2019 there was an increased awareness of AI and the need for Responsible AI. During 2020 (and beyond) we will see more and more on this topic. To get you started on some of the details and some background reading, here are links to various Principles and Standards for Responsible AI from around the world.

| Standard/Principles | Description |
|---|---|
| EU AI Ethics Guidelines | The Ethics Guidelines for Trustworthy Artificial Intelligence, developed by the EU High-Level Expert Group on AI, highlight that trustworthy AI should be lawful, ethical and robust. They put forward seven key requirements that AI systems should meet in order to be deemed trustworthy, including, among others, diversity, non-discrimination, societal and environmental well-being, transparency and accountability. |
| OECD principles on Artificial Intelligence | OECD’s member countries, along with partner countries, adopted the first-ever set of intergovernmental policy guidelines on AI, agreeing to uphold international standards that aim to ensure AI systems are designed in a way that respects the rule of law, human rights, democratic values and diversity. They emphasize that AI should benefit people and the planet by driving inclusive growth, sustainable development and well-being. |
| CoE: Human Rights impacts of Algorithms | Council of Europe draft recommendation on the human rights impacts of algorithmic AI systems, released for consultation in August 2019 and to be adopted in early 2020. The document explicitly refers to the UN Guiding Principles on Business and Human Rights as guidance for due diligence processes and Human Rights Impact Assessments. |
| IEEE Global Initiative: Ethically Aligned Design | The Ethically Aligned Design (EAD) document was created to educate a broader public and to inspire academics, engineers, policy makers and manufacturers of autonomous and intelligent systems to take action on prioritizing ethical considerations. The general principles for AI design, manufacturing and use include: human rights, wellbeing, data agency, effectiveness, transparency, accountability, awareness of misuse, competence. The IEEE P7000 Standards series addresses specific issues at the intersection of technology and ethics and aims to empower innovation across borders and enable societal benefit. |
| UN Sustainable Development Goals | The work around the UN Sustainable Development Goals includes the annual AI for Good Global Summit, the leading UN platform for global and inclusive dialogue on how artificial intelligence could help accelerate progress towards the Global Goals. |
| UN Business and Human Rights | The UN Guiding Principles on Business and Human Rights (UNGPs) give a framework offering a roadmap to navigate responsibility-related challenges. With rapid technological disruption and rising inequality, business has a unique opportunity to implement human-centered innovation by taking into account the social, ethical and human rights implications of AI. |
| EU Collaborative Platforms and Social Learning | Several EU countries have articulated their ambitions related to artificial intelligence. It is of paramount importance to find your unique voice, track and join essential conversations, strategically engage in collective efforts and leave a meaningful digital footprint. |
