SAS

Migrating SAS files to CSV and database

Many organizations have been using SAS Software to analyse their data for many decades. As with most technologies organisations will move to alternative technologies are some point. Recently I’ve experienced this kind of move. In doing so any data stored in one format for the older technology needed to be moved or migrated to the newer technology. SAS Software can process data in a variety of format, one of which is their own internal formats. Thankfully Pandas in Python as a function to read such SAS files into a pandas dataframe, from which it can be saved into some alternative format such as CSV. The following code illustrates this conversion, where it will migrate all the SAS files in a particular directory, converts them to CSV and saves the new file in a destination directory. It also copies any existing CSV files to the new destination, while ignore any other files. The following code is helpful for batch processing of files.

import os

import pandas as pd

#define the Source and Destination directories

source_dir='/Users/brendan.tierney/Dropbox/4-Datasets/SAS_Data_Sets'

dest_dir=source_dir+'/csv'

#What file extensions to read and convert

file_ext='.sas7bdat'

#Create output directory if it does not already exist

if not os.path.exists(dest_dir):

os.mkdir(dest_dir)

else: #If directory exists, delete all files

for filename in os.listdir(source_dir):

os.remove(filename)

#Process each file

for filename in os.listdir(source_dir):

#Process the SAS file

if filename.endswith(file_ext):

print('.processing file :',filename)

print('...converting file to csv')

df=pd.read_sas(os.path.join(source_dir, filename))

df.to_csv(os.path.join(dest_dir, filename))

print('.....finished creating CSV file')

elif filename.endswith('csv'):

#Copy any CSV files to the Destination Directory

print('.copying CSV file')

cmd_copy='cp '+os.path.join(source_dir, filename)+' '+os.path.join(dest_dir, filename)

os.system(cmd_copy)

print('.....finished copying CSV file')

else:

#Ignore any other type of files

print('.ignoring file :',filename)

print('--Finished--')That’s it. All the files have now been converted and located in the destination directory.

For most, the above might be all you need, but sometimes you’ll need to move the the newer technology. In most cases the newer technology will easily use the CSV files. But in some instance your final destination might be a database. In my scenarios I use the CSV2DB app developed by Gerald Venzi. You can download the code from GitHub. You can use this to load CSV files into Oracle, MySQL, PostgreSQL, SQL Server and Db2 databases. Here’s and example of the command line to load into an Oracle Database.

csv2db load -f /Users/brendan.tierney/Dropbox/4-Datasets/SAS_Data_Sets/csv -t pva97nk -u analytics -p analytics -d PDB1

Creating and Reading SPSS and SAS data sets in R

Have you ever been faced with having to generate a data set in the format that is needed by another analytics tool? or having to generate a data set in a particular format but you don’t have the software that generates that format? For example, if you are submitting data to the FDA and other bodies, you may need to submit the data in a SAS formatted file. There are a few ways you can go about this.

One option is that you can use the Haven R package to generate your dataset in SAS and SPSS formats. But you can also read in SAS and SPSS formatted files. I have to deal with these formatted data files all the time, and it can be a challenge, but I’ve recently come across the Haven R package that has just made my life just a little bit/lots easier. Now I can easily generate SAS and SPSS formatted data sets for my data in my Oracle Database, using R and ORE. ORE we can now use the embedded feature to build the generation of these data sets into some of our end-user applications.

Let us have a look at Haven and what it can do.

Firstly there is very little if any documentation online for it. That is ok so we will have to rely on the documentation that comes with the R packages. Again there isn’t much to help and that is because the R package mainly consists of functions to Read in these data sets, functions to Write these data sets and some additional functions for preparing data.

For reading in data sets we have the following functions:

# SAS

read_sas("mtcars.sas7bdat")

# Stata

read_dta("mtcars.dta")

# SPSS

read_sav("mtcars.sav")

For writing data sets we have the following functions:

# SAS write_sas(mtcars, "mtcars.sas7bdat") # Stata write_dta(mtcars, "mtcars.dta") # SPSS write_sav(mtcars, "mtcars.sav")

Let us now work through an example of creating a SAS data set. We can use some of the sample data sets that come with the Oracle Database in the SH schema. I’m going to use the data in the CUSTOMER table to create a SAS data set. In the following code I’m using ORE to connect to the database but you can use your preferred method.

> library(ORE)

> # Create your connection to the schema in the DB

> ore.connect(user="sh", password="sh", host="localhost", service_name="PDB12C",

port=1521, all=TRUE)

> dim(CUSTOMERS)

[1] 55500 23

> names(CUSTOMERS)

[1] "CUST_ID" "CUST_FIRST_NAME" "CUST_LAST_NAME"

[4] "CUST_GENDER" "CUST_YEAR_OF_BIRTH" "CUST_MARITAL_STATUS"

[7] "CUST_STREET_ADDRESS" "CUST_POSTAL_CODE" "CUST_CITY"

[10] "CUST_CITY_ID" "CUST_STATE_PROVINCE" "CUST_STATE_PROVINCE_ID"

[13] "COUNTRY_ID" "CUST_MAIN_PHONE_NUMBER" "CUST_INCOME_LEVEL"

[16] "CUST_CREDIT_LIMIT" "CUST_EMAIL" "CUST_TOTAL"

[19] "CUST_TOTAL_ID" "CUST_SRC_ID" "CUST_EFF_FROM"

[22] "CUST_EFF_TO" "CUST_VALID"

Next we can prepare the data, take a subset of the data, reformat the data, etc. For me I just want to use the data as it is. All I need to do now is to pull the data from the database to my local R environment.

dat <- ore.pull(CUSTOMERS)

Then I need to load the Haven library and then create the SAS formatted file.

library(haven) write_sas(dat, "c:/app/my_customers.sas7bdat")

That’s it. Nice and simple.

But has it worked? Has it created the file correctly? Will it load into my SAS tool?

There is only one way to test this and that is to only it in SAS. I have an account on SAS OnDemand with access to several SAS products. I’m going to use SAS Studio.

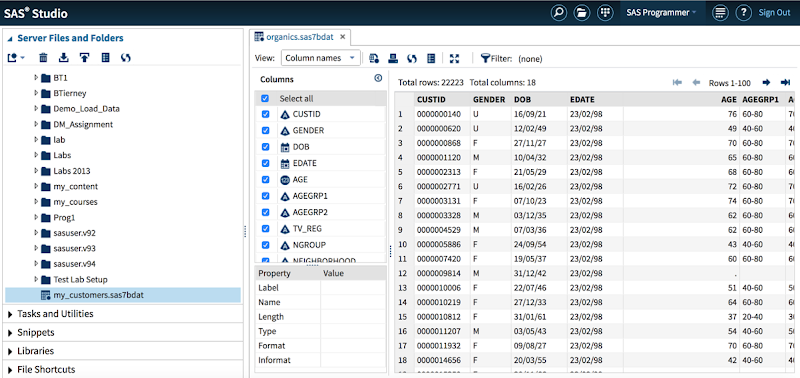

Well it works! The following image shows SAS Studio after I had loaded the data set with the variables and data shown.

WARNING: When you load the data set into SAS you may get a warning message saying that it isn’t a SAS data set. What this means is that it is not a data set generated by SAS. But as you can see in the image above all the data got loaded OK and you can work away with it as normal in your SAS tools.

The next step is to test the loading of a SAS data set into R. I’m going to use one of the standard SAS data sets called PVA97NK.SAS7BDAT. If you have worked with SAS products then you will have come across this data set.

When you use Haven to load in your SAS data set, it will create the data in tribble format. This is a slight varient of a data.frame. So if you want the typical format of a data.frmae then you will need to convert the loaded data, as shown in the following code.

> data_read dim(data_read) [1] 9686 28 > d class(data_read) [1] "tbl_df" "tbl" "data.frame" > class(d) [1] "data.frame" > head(d) TARGET_B ID TARGET_D GiftCnt36 GiftCntAll GiftCntCard36 GiftCntCardAll 1 0 00014974 NA 2 4 1 3 2 0 00006294 NA 1 8 0 3 3 1 00046110 4 6 41 3 20 ...

I think this package to going to make my life a little bit easier, and if you work with SPSS and SAS data sets then hopefully some of your tasks have become a little bit easier too.