Model Interchange

Pre-built Machine Learning Models

Machine learning has seen widespread adoption over the past few years. More recently we have seen examples of how the models created by machine learning algorithms can be shared. There have been various approaches to sharing these models using different model interchange languages. Some of these have become more or less popular over time. For example, a few years ago PMML was very popular, and more recently ONNX seems to be popular. Who knows what it will be next year or in a couple of years' time.

With the increased use of machine learning models and the ability to share them, we are now seeing other uses of them. Typically the sharing of models involved a company transferring a model developed by the data scientists in their lab environment to DevOps teams, who then deploy the model into the production environment. This has developed into a new area of expertise: MLOps or AIOps.

The languages and tools used by the data scientists in the lab environment are different to the languages used to deploy applications in production. The model interchange languages can be used to take the model parameters, algorithm type, data transformations, etc. and map these into the interchange language. The production environment would read this interchange object and apply it in the production language. In such situations the models will use the algorithms already coded in the production language.

For example, the lab environment could be using Python, but the production environment could be using C, Java, Go, etc. Python is an interpreted language and in a lot of cases is not suitable for real-time use in a production environment, due to speed and scalability issues. In this case the underlying algorithm of the production language will be used and not the algorithm used in the lab. In theory the algorithms should be the same. For example, a decision tree algorithm using the Gini Index in one language should function in the same way in another language.

We all know there can be a small to a very large difference between what happens in theory and how it works in practice. Different languages and different developers will do things slightly differently. This means there will be differences between the accuracy of the models developed in the lab versus the accuracy of the (same) model used in production. As long as everyone is aware of this, then everything will be ok. But it will be an important task for the data science team to have some measurements of these differences.
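To make the lab-versus-production gap a little more concrete, here is a minimal sketch in plain Python. Everything in it is an assumption made for illustration: the `toy-interchange` JSON document is a made-up format (it is not PMML or ONNX), the tree and the test rows are invented, and the "production" scorer deliberately uses `<` where the "lab" scorer uses `<=` to mimic the kind of small implementation difference described above. The point is only to show one simple way of measuring how often the two implementations agree.

```python
import json

# Hypothetical "lab" model: a tiny decision tree held as nested dicts.
# Leaf nodes carry a "label"; internal nodes carry a feature index,
# a threshold and two children.
lab_tree = {
    "feature": 0, "threshold": 2.5,
    "left": {"label": "A"},
    "right": {
        "feature": 1, "threshold": 1.0,
        "left": {"label": "B"},
        "right": {"label": "C"},
    },
}

def export_model(tree):
    """Serialise the model into a made-up interchange document."""
    return json.dumps({
        "format": "toy-interchange",   # invented name, not a real standard
        "algorithm": "decision_tree",
        "model": tree,
    })

def lab_predict(tree, row):
    """Lab-side scorer: recursive, sends value <= threshold to the left."""
    if "label" in tree:
        return tree["label"]
    branch = "left" if row[tree["feature"]] <= tree["threshold"] else "right"
    return lab_predict(tree[branch], row)

def production_predict(document, row):
    """Production-side scorer: an independent, iterative implementation
    that reads the interchange document. It uses < instead of <= on
    purpose, to mimic a small difference between implementations."""
    node = json.loads(document)["model"]
    while "label" not in node:
        go_left = row[node["feature"]] < node["threshold"]
        node = node["left"] if go_left else node["right"]
    return node["label"]

def agreement_rate(tree, document, rows):
    """Fraction of rows where both implementations give the same label."""
    matches = sum(
        lab_predict(tree, r) == production_predict(document, r) for r in rows
    )
    return matches / len(rows)

# Invented test rows; two of them sit exactly on a split threshold.
rows = [[1.0, 0.5], [2.5, 0.5], [3.0, 1.0], [3.0, 2.0]]
document = export_model(lab_tree)
rate = agreement_rate(lab_tree, document, rows)
print(f"lab/production agreement: {rate:.0%}")
```

In a real project the same idea would be applied to a held-out data set scored by both the lab model and the deployed model, and the agreement (or the difference in accuracy) tracked as part of the deployment checks.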

Moving on a little, we are now seeing some other developments in the building and sharing of machine learning models, and the use of open model interchange languages, like ONNX, makes this possible.

We are now seeing people making their machine learning models available to the wider community, instead of keeping them within their own team or organization.

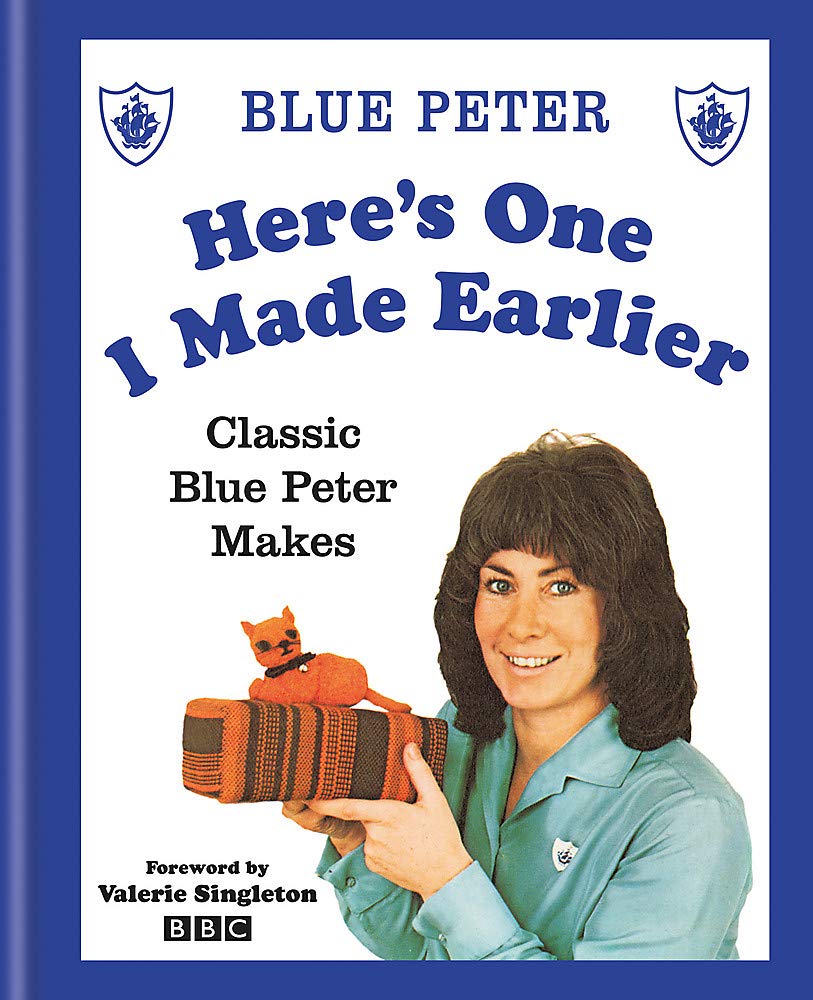

Why would someone do this? Why would they share their machine learning model? It’s a bit like the picture above, which comes from a very popular kids' programme on the BBC called Blue Peter. They would regularly show some craft projects for kids to work on at home. They would never show all the steps needed to finish the project and would end up showing us “one I made earlier”. It always looked perfect and nothing like what they tried to make in the studio and nothing like my attempt.

But having pre-made machine learning models is now a thing. There are lots of examples of these. For example, the ONNX website has several pre-trained models ready for you to use. These cover image classification, object detection, machine translation and comprehension, language modeling, speech and audio processing, etc. More are being added over time.

Most of these pre-trained models are based on defined data sets and problems. They allow others to see what the authors have done and to start building upon their work without the need to go through the training and validation phases.

Could we have something like this in the commercial world? Could we have pre-trained machine learning models being standardized and shared across different organizations? Many organizations within a domain use the same or similar applications for capturing, storing, processing and analyzing their data. In this case, could the sharing of machine learning models help everyone be more competitive or have better insights and discoveries from their data? Again, the difference between in-theory and in-practice applies.

Some might remember that in the early days of Data Warehousing we used to have industry (dimensional) models, and vendors and consulting companies would offer their custom-developed industry models along with guidance on how to populate them. In theory these were supposed to help companies speed up their time to data insights and save money. We have seen similar attempts at doing similar things over the decades. But the reality was that most projects ended up being way more expensive and took way too long to deploy, due to lots of technical difficulties and lots of differences in the business understanding, interpretation and deployment of the underlying applications. The pre-built DW model was generic and didn’t really fit in with the business needs.

We are seeing more and more pre-trained machine learning models appearing on the market, and many vendors are offering pre-trained solutions. But can these really work? Some of these pre-trained models are based on certain data preparation, using one particular machine learning model and only one particular evaluation metric. As with the custom DW models of twenty years ago, pre-trained ML models are of limited use.

Everyone is different, data is different, behavior is different, and the list goes on. Using the principle of the “No Free Lunch” theorem, although we might be using the same or similar applications for capturing, storing, processing and analysing our data, the underlying behavior of the data (and the transactions, customers, etc. that influence it) will be different, the marketing campaigns will be different, business semantics may be different, general operating models will be different, etc. Based on “No Free Lunch” we need to explore the data using a variety of different algorithms, to determine what works for our data at this point in time. The behavior of the data (and the business influences on it) keeps on changing and evolving on a daily, weekly, monthly, etc. basis. A great example of this, but at a more extreme and rapid rate of change, happened during the COVID pandemic. Most of the machine learning models developed over the preceding period no longer worked, the models developed during the pandemic have a very short life span, and it will take some time before “normal” returns and newer models can be built to represent the “new normal”.
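As a rough illustration of "explore the data using a variety of different algorithms", the sketch below scores three very simple classifiers on the same held-out rows and reports which one did best. It is only a sketch under stated assumptions: the data points and labels are invented (including one deliberately odd training row), the three candidate algorithms are toy implementations written for this example, and plain accuracy is used as the only metric. On a different data set, or the same data a few months later, the ranking could easily change, which is the whole point.

```python
from collections import Counter

def sq_dist(p, q):
    """Squared Euclidean distance between two points."""
    return sum((a - b) ** 2 for a, b in zip(p, q))

def majority_class(train):
    """Baseline: always predict the most common training label."""
    label = Counter(y for _, y in train).most_common(1)[0][0]
    return lambda x: label

def nearest_neighbour(train):
    """1-NN: copy the label of the closest training point."""
    return lambda x: min(train, key=lambda item: sq_dist(item[0], x))[1]

def nearest_centroid(train):
    """Predict the class whose mean point is closest."""
    sums, counts = {}, Counter()
    for x, y in train:
        acc = sums.setdefault(y, [0.0] * len(x))
        for i, v in enumerate(x):
            acc[i] += v
        counts[y] += 1
    centroids = {y: [v / counts[y] for v in acc] for y, acc in sums.items()}
    return lambda x: min(centroids, key=lambda y: sq_dist(centroids[y], x))

def accuracy(predict, rows):
    """Fraction of rows whose predicted label matches the true label."""
    return sum(predict(x) == y for x, y in rows) / len(rows)

# Invented data: (features, label). The last training row is an
# odd "no" sitting among the "yes" points.
train = [
    ([1.0, 1.0], "no"), ([1.5, 2.0], "no"), ([2.0, 1.0], "no"),
    ([6.0, 5.0], "yes"), ([7.0, 6.5], "yes"), ([5.2, 5.2], "no"),
]
holdout = [
    ([1.2, 1.5], "no"), ([6.5, 6.0], "yes"),
    ([2.2, 1.8], "no"), ([5.5, 5.5], "yes"),
]

candidates = {
    "majority_class": majority_class,
    "nearest_neighbour": nearest_neighbour,
    "nearest_centroid": nearest_centroid,
}

# Fit every candidate on the same training rows, score on the same holdout.
scores = {name: accuracy(fit(train), holdout) for name, fit in candidates.items()}
best = max(scores, key=scores.get)

for name, score in scores.items():
    print(f"{name}: {score:.2f}")
print("best on this data:", best)
```

Re-running this kind of comparison on fresh data at regular intervals is one simple way to notice when yesterday's best model has stopped being the best.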