Truth, Fairness & Equality in AI – US Federal Trade Commission

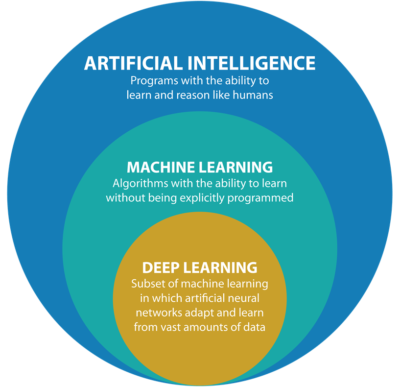

Over the past few months we have seen a growing volume of communications, guidelines, regulations and legislation on the use of Machine Learning (ML) and Artificial Intelligence (AI). Here, Artificial Intelligence is used as a superset covering any machine- or computer-generated or applied intelligence, that is, any logic that makes a decision or calculation.

Although the EU has been leading the charge in this area, other countries have been following suit with similar guidelines and legislation.

There have been several examples of this in the USA over the past couple of years. Some of this has been prompted by the debates and issues around the use of facial recognition. Some US states have introduced laws to control what can and cannot be done but, at the time of writing, there is no federal law governing the whole of the USA.

In April 2021, the US Federal Trade Commission published an article titled ‘Aiming for truth, fairness, and equity in your company’s use of AI’.

The article provides guidance on how to build AI applications that avoid problems such as bias and unfair outcomes while incorporating transparency. In addition to its recommendations, it points to three long-standing laws that are important for developers of AI applications:

- Section 5 of the FTC Act: The FTC Act prohibits unfair or deceptive practices. That would include, for example, the sale or use of racially biased algorithms.

- Fair Credit Reporting Act: The FCRA comes into play in certain circumstances where an algorithm is used to deny people employment, housing, credit, insurance, or other benefits.

- Equal Credit Opportunity Act: The ECOA makes it illegal for a company to use a biased algorithm that results in credit discrimination on the basis of race, color, religion, national origin, sex, marital status, age, or because a person receives public assistance.

The guidelines aim to help companies use AI truthfully, fairly and equitably, and they cover both the technical and non-technical sides of AI applications. They include:

- Start with the right foundation: Get your data set right. What is missing? Is it balanced? Look at how to improve the data set and address any shortcomings; in light of any gaps that remain, you may need to limit where or how you use the model.

- Watch out for discriminatory outcomes: Are the outcomes biased? If the model works for your data set and scenario, will it work in others, e.g. when applied in a different hospital? Regular and detailed testing is needed to ensure that no discrimination creeps in.

- Embrace transparency and independence: Think about how to incorporate transparency from the beginning of the AI project. Use international best practices and standards, have independent audits and publish the results, and open your data and source code to outside inspection.

- Don’t exaggerate what your algorithm can do or whether it can deliver fair or unbiased results: That kind of says it all, really. Under the FTC Act, your statements to business customers and consumers must be truthful, non-deceptive and backed up by evidence. In the rush to introduce new technologies and products, there can be a tendency to exaggerate what they can do. Don’t do this.

- Tell the truth about how you use data: Be careful about what data you use and how you obtained it. For example, Facebook applied facial recognition software to people's pictures by default while telling users it would ask for their permission, misrepresenting what customers and consumers had been told.

- Do more good than harm: Under the FTC Act, a practice is unfair if it causes more harm than good, for example when a model makes decisions based on race, color, religion, sex, etc. Put more formally, if your model causes or is likely to cause substantial injury to consumers that is not reasonably avoidable by consumers and not outweighed by countervailing benefits to consumers or to competition, it is unfair.

- Hold yourself accountable: If you use AI, in any form, you will be held accountable for the algorithm’s performance.
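The first two guidelines, checking whether the data set is balanced and testing for discriminatory outcomes, can be made a little more concrete with a simple check. The following is a minimal Python sketch under stated assumptions, not something the FTC article prescribes: the records are hypothetical toy data of (group, approved) pairs, and the helper names are illustrative. It reports each group's share of the data and its rate of favourable outcomes, then divides the lowest rate by the highest. The 0.8 threshold is an assumption borrowed from the "four-fifths" rule of thumb used in US employment-selection guidance; a low ratio is a signal to investigate, not proof of discrimination.

```python
from collections import Counter, defaultdict

# Hypothetical toy records: (group, approved) pairs. Not real data.
records = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", False),
    ("B", True), ("B", False),
]

# Assumed threshold, borrowed from the "four-fifths" rule of thumb.
REVIEW_THRESHOLD = 0.8


def group_shares(rows):
    """Share of the data set belonging to each group (a simple balance check)."""
    counts = Counter(group for group, _ in rows)
    total = sum(counts.values())
    return {group: n / total for group, n in counts.items()}


def selection_rates(rows):
    """Fraction of favourable outcomes (e.g. approvals) within each group."""
    totals = defaultdict(int)
    favourable = defaultdict(int)
    for group, outcome in rows:
        totals[group] += 1
        if outcome:
            favourable[group] += 1
    return {group: favourable[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest group rate divided by highest group rate (1.0 means equal rates)."""
    return min(rates.values()) / max(rates.values())


if __name__ == "__main__":
    shares = group_shares(records)
    rates = selection_rates(records)
    ratio = disparate_impact_ratio(rates)
    print("group shares:", shares)
    print("selection rates:", rates)
    print(f"min/max rate ratio: {ratio:.2f}")
    if ratio < REVIEW_THRESHOLD:
        print("flag for review: outcome rates differ substantially between groups")
```

In practice, checks like these would be run regularly, on each new data set and in each new deployment setting (such as a different hospital), and alongside other fairness measures, since no single number captures whether a model is fair.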

Some of these guidelines build upon those in the FTC's April 2020 guidance, ‘Using Artificial Intelligence and Algorithms’, which focuses on fair use of data; transparency about data usage, algorithms and models; the ability to clearly explain how a decision was made; and ensuring that all decisions are fair and unbiased.

Working with AI products and applications can be challenging in many different ways. Most of the focus, information and examples are about building them, but that can be the easy part. With a growing number of legal requirements from different regions around the world, the task of managing AI products and applications is becoming more and more complicated.

The EU AI Regulation supports having a person in a role that oversees these different aspects, and this is something we will see job adverts for very soon, no matter what country or region you live in. The people in these roles will help steer and support companies through this difficult and evolving area, ensuring compliance with both local and global legal requirements.