Digital Ethics

Ireland AI Strategy (2021)

Over the past year or more there has been a significant increase in publications, guidelines, regulations and laws relating to Artificial Intelligence (AI), along with various stated intentions to introduce more. AI has been attracting a lot of attention, and most of it has focused on how to put controls on the way AI is used across a wide range of use cases. We have heard and read many stories of AI being used in questionable and unethical scenarios. These have, to a certain extent, given AI a bit of a bad name. Some of this is justified and some is not, but it does allow us to question the ethical use of these technologies. Not all AI, and the underpinning technologies, are bad. Most have been developed for good purposes, and as these technologies mature they sometimes get used in scenarios that are less good.

We constantly need to develop new technologies and deploy these in real use scenarios. Ireland has a long history as a leader in the IT industry, with many of the top 100+ IT companies in the world having research and development operations in Ireland, as well as many service suppliers. The Irish government recently released the National AI Strategy (2021).

“The National AI Strategy will serve as a roadmap to an ethical, trustworthy and human-centric design, development, deployment and governance of AI to ensure Ireland can unleash the potential that AI can provide”. “Underpinning our Strategy are three core principles to best embrace the opportunities of AI – adopting a human-centric approach to the application of AI; staying open and adaptable to innovations; and ensuring good governance to build trust and confidence for innovation to flourish, because ultimately if AI is to be truly inclusive and have a positive impact on all of us, we need to be clear on its role in our society and ensure that trust is the ultimate marker of success.” Robert Troy, Minister of State for Trade Promotion, Digital and Company Regulation.

Eight strands are identified, and each sets out how Ireland can be an international leader in using AI to benefit the economy and society.

- Building public trust in AI

  - Strand 1: AI and society

  - Strand 2: A governance ecosystem that promotes trustworthy AI

- Leveraging AI for economic and societal benefit

  - Strand 3: Driving adoption of AI in Irish enterprise

  - Strand 4: AI serving the public

- Enablers for AI

  - Strand 5: A strong AI innovation ecosystem

  - Strand 6: AI education, skills and talent

  - Strand 7: A supportive and secure infrastructure for AI

  - Strand 8: Implementing the Strategy

Each strand has a clear list of objectives and strategic actions for achieving them at national, EU and global level.

Check out the full document here.

Australia New AI Regulations Framework

Over the past few weeks and months we have seen more and more countries addressing the potential issues and challenges with Artificial Intelligence (and its components of Statistical Analysis, Machine Learning, Deep Learning, etc). Each country has either adopted into law, or is proposing, controls on how and where these new technologies can be used. Many of these legal frameworks have implications beyond their geographic boundaries. This makes working with such technology an ever-increasing and very difficult challenge.

In this post, I’ll have a look at the new AI Regulations Framework recently published in Australia.

[I’ve written posts on what other countries have done. Make sure to check those out.]

The Australia AI Regulations Framework, available from tech.humanrights.gov.au, is a 240 page report giving 38 different recommendations. This framework does not present any new laws, but provides a set of recommendations for the government to address and enact in new legislation.

It should be noted that a large part of this framework is focused on Accessible Technology. It is great to see such recommendations. Apart from the section relating to Accessibility, the report contains 2 main sections addressing the use of Artificial Intelligence (AI) and how to support the implementation and regulation of any new laws with the appointment of an AI Safety Commissioner.

Focusing on the section on the use of Artificial Intelligence, the following is a summary of the 20 recommendations:

Chapter 5 – Legal Accountability for Government use of AI

Introduce legislation to require that a human rights impact assessment (HRIA) be undertaken before any department or agency uses an AI-informed decision-making system to make administrative decisions. When an AI-informed decision is made, measures are needed to improve transparency, including notification of the use of AI, strengthening the right to reasons or an explanation for AI-informed administrative decisions, and an independent review for all AI-informed administrative decisions.

Chapter 6 – Legal Accountability for Private use of AI

In a similar manner to governmental use of AI, human rights and accountability are also important when corporations and other non-government entities use AI to make decisions. Corporations and other non-government bodies are encouraged to undertake HRIAs before using AI-informed decision-making systems, and to notify individuals about the use of AI-informed decisions affecting them.

Chapter 7 – Encouraging Better AI Informed Decision Making

Complement self-regulation with legal regulation to create better AI-informed decision-making systems with standards and certification for the use of AI in decision making, creating ‘regulatory sandboxes’ that allow for experimentation and innovation, and rules for government procurement of decision-making tools and systems.

Chapter 8 – AI, Equality and Non-Discrimination (Bias)

Bias occurs when AI decision making produces outputs that result in unfairness or discrimination. Examples of AI bias have arisen in the criminal justice system, advertising, recruitment, healthcare, policing and elsewhere. The recommendation is to provide guidance for government and non-government bodies on complying with anti-discrimination law in the context of AI-informed decision making.

Chapter 9 – Biometric Surveillance, Facial Recognition and Privacy

There is a lot of concern around the use of biometric technology, especially Facial Recognition. The recommendations include law reform to provide better human rights and privacy protection regarding the development and use of these technologies through regulation of facial and biometric technology (Recommendations 19, 21), and a moratorium on the use of biometric technologies in high-risk decision making until such protections are in place (Recommendation 20).

In addition to the recommendations on the use of AI technologies, the framework also recommends the establishment of an AI Safety Commissioner to support ongoing efforts in building capacity and implementing regulations, to monitor and investigate the use of AI, and to support the government and private sector in complying with laws and ethical requirements on the use of AI.

Regulating AI around the World

Continuing my series of blog posts on various ML and AI regulations and laws, this post will look at what some other countries are doing to regulate ML and AI, with a particular focus on facial recognition and more advanced applications of ML. Some of the examples listed below are work-in-progress, while others such as EU AI Regulations are at a more advanced stage with introduction of regulations and laws.

[Note: What is listed below is in addition to various data protection regulations each country or region has implemented in recent years, for example EU GDPR and similar]

Things are moving fast in this area with more countries introducing regulations all the time. The following list is by no means exhaustive but it gives you a feel for what is happening around the world and what will be coming to your country very soon. The EU and (parts of) the USA are leading in these areas, and it is important to know that these regulations and laws will have an impact on most AI/ML applications and work around the world. If you are processing data about an individual in these geographic regions then these laws affect you and what you can do. It doesn’t matter where you live.

New Zealand

New Zealand, along with the World Economic Forum (WEF), is developing a governance framework for AI regulations. It is focusing on three areas:

- Inclusive national conversation on the use of AI

- Enhancing the understanding of AI and its application to inform policy making

- Mitigation of risks associated with AI applications

Singapore

The Personal Data Protection Commission has released a framework called the ‘Model AI Governance Framework’, to provide a model for addressing ethical and governance issues when deploying AI applications. It supports having explainable AI, allowing for clear and transparent communications on how the AI applications work. The idea is to build understanding and trust in these technological solutions. It consists of four principles:

- Internal Governance Structures and Measures

- Determining the Level of Human Involvement in AI-augmented Decision Making

- Operations Management, minimizing bias, explainability and robustness

- Stakeholder Interaction and Communication.

USA

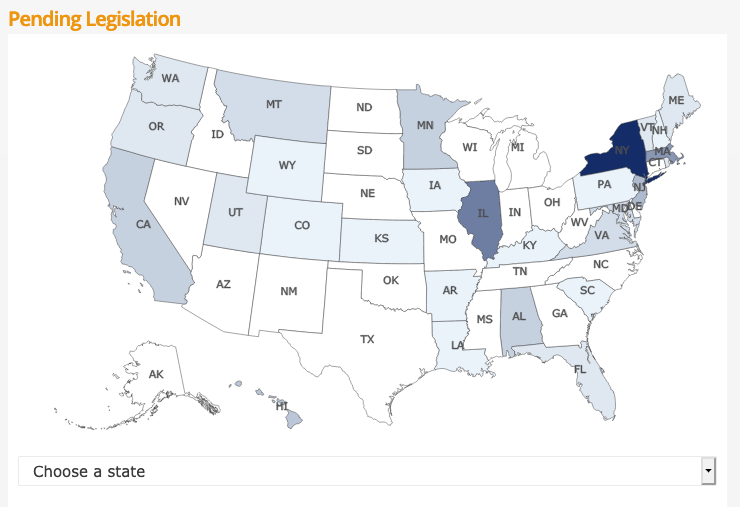

Progress within the USA has been divided between local initiatives, for example in California, where different regions have implemented their own laws, and state level initiatives, where there have been attempts at laws. But California is not alone, with almost half of the states introducing laws restricting the use of facial recognition and protecting personal data. In addition to what is happening at State level, there have been some orders and laws introduced at federal government level.

- Executive Order on Promoting the Use of Trustworthy Artificial Intelligence in the Federal Government

  - This provides guidelines to help Federal Agencies with AI adoption and to foster public trust in the technology. It directs agencies to ensure the design, development, acquisition and use of AI is done in a manner that protects privacy, civil rights, and civil liberties. It includes the following actions:

    - Principles for the Use of AI in Government

    - Common Policy for Implementing Principles

    - Catalogue of Agency Use Cases of AI

    - Enhanced AI Implementation Expertise

- Government – Facial Recognition and Biometric Technology Moratorium Act of 2020. Limits the use of biometric surveillance systems such as facial recognition systems by federal and state government entities.

USA – Washington State

Many of the States in the USA have enacted laws on Facial Recognition and the use of AI. There are too many to list here, but go to this website to explore what each State has done. Taking Washington State as an example, it has enacted a law prohibiting the use of facial recognition technology for ongoing surveillance and limiting its use to acquiring evidence of serious criminal offences following authorization of a search warrant.

Canada

The Privacy Commissioner of Canada introduced the Regulatory Framework for AI, and calls for legislation supporting the benefits of AI while upholding privacy of individuals. Recommendations include:

- allow personal information to be used for new purposes towards responsible AI innovation and for societal benefits

- authorize these uses within a rights-based framework that would entrench privacy as a human right and a necessary element for the exercise of other fundamental rights

- create a right to meaningful explanation for automated decisions and a right to contest those decisions to ensure they are made fairly and accurately

- strengthen accountability by requiring a demonstration of privacy compliance upon request by the regulator

- empower the OPC to issue binding orders and proportional financial penalties to incentivize compliance with the law

- require organizations to design AI systems from their conception in a way that protects privacy and human rights

The above list is just a sample of what is happening around the world, and we are sure to see lots more of this over the next few years. There are lots of pros and cons to these regulations and laws. One of the biggest challenges faced by people working with AI and ML technologies is knowing what is and isn’t possible or allowed, as most solutions and applications will be working across many geographic regions.

Truth, Fairness & Equality in AI – US Federal Trade Commission

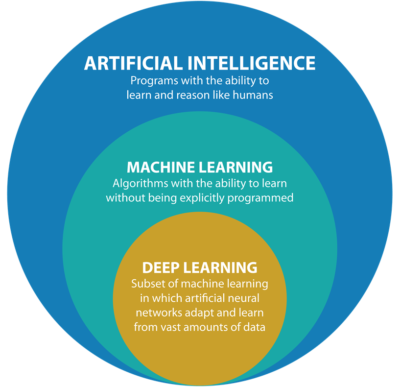

Over the past few months we have seen a growing level of communication, guidelines, regulations and legislation for the use of Machine Learning (ML) and Artificial Intelligence (AI). Here, Artificial Intelligence is the superset covering all machine or computer generated or applied intelligence, consisting of any logic that makes a decision or calculation.

Although the EU has been leading the charge in this area, other countries have been following suit with similar guidelines and legislation.

There have been several examples of this in the USA over the past couple of years. Some of this has been preceded by the debates and issues around the use of facial recognition. Some States in the USA have introduced laws to control what can and cannot be done but, at time of writing, there is no federal law governing the whole of the USA.

In April 2021, the US Federal Trade Commission published an article titled ‘Aiming for truth, fairness, and equity in your company’s use of AI’.

They provide guidelines on how to build AI applications while avoiding potential issues such as bias and unfair outcomes, and at the same time incorporating transparency. In addition to the recommendations in the report, they point to three laws (which have been around for some time) which are important for developers of AI applications. These include:

- Section 5 of the FTC Act: The FTC Act prohibits unfair or deceptive practices. That would include the sale or use of – for example – racially biased algorithms.

- Fair Credit Reporting Act: The FCRA comes into play in certain circumstances where an algorithm is used to deny people employment, housing, credit, insurance, or other benefits.

- Equal Credit Opportunity Act: The ECOA makes it illegal for a company to use a biased algorithm that results in credit discrimination on the basis of race, color, religion, national origin, sex, marital status, age, or because a person receives public assistance.

These guidelines aim for AI that is used truthfully, fairly and equitably, and they cover both the technical and non-technical sides of AI applications. The guidelines include:

- Start with the right direction: Get your data set right. What is missing, is it balanced, etc. Look at how to improve the data set and address any shortcomings, and be aware that this may limit how you use the model.

- Watch out for discriminatory outcomes: Are the outcomes biased? If it works for your data set and scenario, will it work in others, e.g. applying the model in a different hospital? Regular and detailed testing is needed to ensure no discrimination gets included.

- Embrace transparency and independence: Think about how to incorporate transparency from the beginning of the AI project. Use international best practice and standards, have independent audits and publish the results, and open the data and source code to outside inspection.

- Don’t exaggerate what your algorithm can do or whether it can deliver fair or unbiased results: That kind of says it all really. Under the FTC Act, your statements to business customers and consumers must be truthful, non-deceptive and backed up by evidence. Typically, with the rush to introduce new technologies and products, there can be a tendency to exaggerate what they can do. Don’t do this.

- Tell the truth about how you use data: Be careful about what data you use and how you got this data. For example, Facebook used facial recognition software on pictures by default, when they had asked for your permission but ignored what you said. This was a misrepresentation of what the customer/consumer was told.

- Do more good than harm: A practice is unfair if it causes more harm than good, for example making decisions based on race, color, religion, sex, etc. If the model causes more harm than good, that is, if it causes or is likely to cause substantial injury to consumers that is not reasonably avoidable by consumers and not outweighed by countervailing benefits to consumers or to competition, the model is unfair.

- Hold yourself accountable: If you use AI, in any form, you will be held accountable for the algorithm’s performance.
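The guideline on watching out for discriminatory outcomes can be made a little more concrete with a small check. The following is only a minimal sketch, assuming the model's decisions are available as simple records with a group label and a yes/no outcome. The FTC article does not prescribe any particular metric, and the function names, field names and sample data here are all hypothetical, made up for illustration.

```python
from collections import defaultdict


def selection_rates(records, group_key, outcome_key):
    """Return the share of positive outcomes for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for row in records:
        group = row[group_key]
        totals[group] += 1
        if row[outcome_key]:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def rate_ratio(rates):
    """Ratio of the lowest to the highest selection rate (1.0 means equal rates)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so the rates are equal
    return min(rates.values()) / highest


# Hypothetical model decisions, invented for illustration only.
decisions = [
    {"group": "A", "approved": True},
    {"group": "A", "approved": True},
    {"group": "A", "approved": False},
    {"group": "A", "approved": True},
    {"group": "B", "approved": True},
    {"group": "B", "approved": False},
    {"group": "B", "approved": False},
    {"group": "B", "approved": False},
]

rates = selection_rates(decisions, "group", "approved")
ratio = rate_ratio(rates)
print(rates)
print(round(ratio, 2))
```

A ratio well below 1.0 does not prove discrimination on its own, but it is a signal that the outcomes deserve a closer look. What counts as an acceptable ratio is a legal and policy question, not something the code can decide.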

Some of these guidelines build upon those from April 2020, on Using Artificial Intelligence and Algorithms, where there is a focus on fair use of data, transparency of data usage, algorithms and models, the ability to clearly explain how a decision was made, and ensuring all decisions made are fair and unbiased.

Working with AI products and applications can be challenging in many different ways. Most of the focus, information and examples are about building these. But that can be the easy part. With the growing number of legal requirements from different regions around the world, the task of managing AI products and applications is becoming more and more complicated.

The EU AI Regulations support the role of a person to oversee these different aspects, and this is something we will see job adverts for very soon, no matter what country or region you live in. The people in these roles will help steer and support companies through this difficult and evolving area, to ensure compliance with local as well as global legal requirements.

[New Book] 97 Things about Data Ethics in Data Science – Collective Wisdom from the Experts

Some months ago I was approached about contributing to a new book on Data Ethics for Data Science. It is now available to purchase on Amazon (and elsewhere), and this book becomes the sixth book that I’ve either solely written or co-written. Check out all my books here.

This is an area I’ve been working in for some time now, both in research and in assisting companies. I was able to make a couple of contributions to this book, alongside great contributions from (other) global experts in Data Science and Data Ethics. The book was edited by Bill Franks.

Most of the high-profile cases of real or perceived unethical activity in data science aren’t matters of bad intent. Rather, they occur because the ethics simply aren’t thought through well enough. Being ethical takes constant diligence, and in many situations identifying the right choice can be difficult.

In this in-depth book, contributors from top companies in technology, finance, and other industries share experiences and lessons learned from collecting, managing, and analyzing data ethically. Data science professionals, managers, and tech leaders will gain a better understanding of ethics through powerful, real-world best practices.

The book is available in paperback and Kindle formats and is published by O’Reilly Media.

You might be interested in my previous book on Data Science, part of the MIT Press Essentials Series. This book has been a Best Seller in 2018 and 2019 on Amazon.

Always watching, always listening. Be careful with your data

The saying ‘Big Brother is Watching’ has been around a long time and typically gets associated with government organisations. But over the past few years we have had a few new Big Brothers appearing. These are in the form of Google and Facebook and a few others.

These companies gather enormous amounts of data. This data will include details of your interactions with the companies through various websites, applications, etc. But some are gathering data in ways that you might not be aware of. For example, take the following video. Data is being gathered about what you do and where you go even if you have disconnected your phone.

Did you know this kind of data was being gathered about you?

Just think of what they could be doing with that data, that data you didn’t know they were gathering about you. Companies like these generate huge amounts of income from selling advertisements, and the more data they have about individuals the more they can understand what those individuals might be interested in. They generate customer profiles and sell expensive advertising based on having these very detailed customer profiles.

But it doesn’t stop there. Recently Google bought Fitbit. Just think about what they can do now, combining their existing profiles of you as a person with your activities throughout every day, week and month. Just think about how various health and insurance companies would love to have this data. Yes they would, and companies like Google would be able to charge these companies even more money for this level of detail on individuals/customers.

But it doesn’t stop there. There have been lots of reports of various apps sharing health and other related data with various companies, without their customers being aware this is happening.

What about Google Assistant? In a recent article by MIT Technology Review titled Inside Amazon’s plan for Alexa to run your entire life, they discuss how Alexa can be used to control virtually everything. In this article Alexa’s chief scientist says the “plan is for the voice assistant to move from passive to proactive interactions. Rather than wait for and respond to requests, Alexa will anticipate what the user might want. The idea is to turn Alexa into an omnipresent companion that actively shapes and orchestrates your life. This will require Alexa to get to know you better than ever before.” When combined with other products, “these new products let Alexa listen to and log data about a dramatically larger portion of your life”.

Just imagine if Google did the same with their Google Assistant! Big Brother isn’t just Watching, they are also Listening!

There have been some recent reports of Google looking to get into banking by offering checking accounts. The project, code-named Cache, is due to launch in 2020. Google has partnered with Citigroup and a credit union at Stanford University, which will administer the accounts. Users will be able to access their accounts through Google’s digital payment platform, Google Pay.

And there are the reports of Google having access to the health records of over 50 million people. In addition to this, Google has signed a deal with Ascension, the second-largest hospital system in the US, to collect and analyze millions of Americans’ personal health data. Ascension operates in 150 hospitals in 21 states.

What if they also had access to your banking details and spending habits? Google is looking at different options to extend financial products from Google Pay into more mainstream banking. There have been some recent reports of them looking at offering current accounts.

I won’t go into their attempts at Ethics and their various (failed) attempts at establishing an Ethics Advisory Board. This has been well documented elsewhere.

Things are getting a bit scary and the saying ‘Big Brother is Watching You’, is very, very true.

In the ever more connected world, all of us have a responsibility to know what data companies are gathering on us. We need to decide how comfortable we are with this, and if you aren’t then you need to take steps to protect yourself. Maybe part of this protection requires us to become less connected, stop using some apps, turn off more notifications, turn off updates, turn off tracking, etc.

Taking each product or offering individually, it may seem ok to us for Google and other companies to offer such services and to analyze our data to provide a better service. But for most people the issues arise when these products start to be combined. By doing this they get greater access to, and understanding of, our data and our behaviors. What role does (digital) ethics play in all of this? It is for the company and its employees to decide where things should stop. But when and how do you decide this? When do you/they know things have gone too far? How can you undo some of this work to go back to an acceptable level? What is an acceptable level and how do you define this?

As you can see there are lots of things to consider, and a vital component is the role of (digital) ethics. All organizations who process and analyze data need to have an ethics board, and ethics needs to be a core part of every project. To support this everyone needs more training and awareness of ethics and what is acceptable or not.
