oraclebigdata

Data Science Is Multidisciplinary

A few weeks ago I had a blog post called Domain Knowledge + Data Skills = Data Miner.

In that blog post I was saying that to be a Data Scientist all you needed was Domain Knowledge and some Data Skills, which included Data Mining.

The reality is that the skill set of a Data Scientist will be much larger. There is a saying ‘A jack of all trades and a master of none’. When it comes to being a data scientist you need to be a bit like this but perhaps a better saying would be ‘A jack of all trades and a master of some’.

I’ve put together the following diagram, which includes most of the skills, with an outer circle of more fundamental skills. It is this outer ring of skills that is fundamental to becoming a data scientist. Most people will have some experience in one or more of the skills in the inner part of the diagram. The other skills can be developed and learned over time, depending on the type of person you are.

Can we train someone to become a data scientist, or are they born to be a data scientist? It is a little bit of both really, but you need to have some of the fundamental skills and the right type of personality. Learning the other skills should be easy(ish).

What do you think? Are there skills that I’m missing?

Domain Knowledge + Data Skills = Data Miner

Over the past few weeks I have been talking to a lot of people who are looking at how data mining can be used in their organisation and for their projects, and to people who have been doing data mining for a long time.

What comes across from talking to the experienced people, and these people are not tied to a particular product, is that you need to concentrate on the business problem. Once you have this well defined then you can drill down to the deeper levels of the project. Some of these levels will include what data is needed (not what data you have), tools, algorithms, etc.

Statistics is only a very small part of a data mining project. Some people with PhDs in statistics who work in data mining say that they do not use, or only very rarely use, their statistics skills.

Some quotes that I like are:

“Focus hard on Business Question and the relevant target variable that captures the essence of the question.” Dean Abbott PAW Conf April 2012

“Find me something interesting in my data is a question from hell. Analysis should be guided by business goals.” Colin Shearer PAW Conf Oct 2011

There have been a lot of blog posts and articles on the key skills for a Data Miner and the more popular Data Scientist. What is very clear from all of these is that you will spend most of your time looking at, examining, integrating, manipulating, preparing, standardising and formatting the data. It has been quoted that these tasks can take up 70% to 85% of a Data Miner’s or Data Scientist’s time. All of these tasks are commonly performed by database developers, and in particular by the developers and architects involved in Data Warehousing projects. The rest of the time is spent running the data mining algorithms, examining the results, and yes, some stats too.

Very little time is spent developing algorithms! Why is this? Could it be that the algorithms are already developed (for a long time now, and they are well tuned) and available in all the data mining tools? We can almost treat these algorithms as a black box. So one of the key abilities of a data miner/data scientist is to know what the algorithms can do, what kinds of problems they can be used for, what kinds of outputs they produce, etc.

Domain knowledge is important, no matter how little it is, in preparing for and being involved in a data mining project. As we define our business problem, the domain expert can bring their knowledge to the problem and allow us to separate the domain related problems from the data related problems. So the domain expertise is critical at the start of a project, but it is also critical when we have the outputs from the data mining algorithms. We can use the domain knowledge to tie the outputs from the data mining algorithms back to the original business problem and bring real meaning to it.

So what is the formula of skill sets for a data miner or data scientist? Well, it is a little like the title of this blog post:

Domain Knowledge + Data Skills + Data Mining Skills + a little bit of Machine Learning + a little bit of Stats = a Data Miner / Data Scientist

2 Day Oracle Data Miner course material

Last week I managed to get my hands on the training material for the 2 Day Oracle Data Miner course. This course is run by Oracle University.

Many thanks to Michael O’Callaghan, who is a BI sales person here in Ireland, and to Oracle University for arranging this.

The 2 days are pretty packed with a mixture of lecture type material, lots of hands-on exercises and some time for open discussions. In particular, day 2 will be a very busy day.

Check out the course outline and published schedule – click here

You can have this course on site at your organisation. If this is something that interests you then contact your Oracle University account manager. There is also the traditional face-to-face delivery and the newer online delivery, where people from around the world come together for the online class.

Oracle Analytics Sessions at COLLABORATE12

There are a number of Oracle Advanced Analytics and related topics taking place this week at COLLABORATE12 in Las Vegas (http://collaborate12.com).

| Date | Time | Presentation | Presenter |
| --- | --- | --- | --- |
| Sun 22nd | 9:00-3pm | Oracle Business Intelligence Application Journey | |
| Mon 23rd | 9:45-10:45 | Managing Unstructured Data using Hadoop, Oracle 11g and Oracle Exadata Database Machine | Jim Steiner |
| Mon 23rd | 9:45-10:45 | Environmental Data Management and Analytics-a Real World Perspective | Angela Miller |
| Mon 23rd | 11-12 | Public Safety and Environmental Real-Time Analytics using Oracle Business Intelligence | Raghav Venkat, Therese Arguelles |
| Mon 23rd | 11-12 | BI is more than slice and dice | Peter Scott |
| Mon 23rd | 14:30-15:30 | In-Database Analytics: Predictive Analytics, Data Mining, Exadata & Business Intelligence | Jacek Myczkowski |
| Mon 23rd | 15:45-16:45 | Big Data Analytics, R you ready | Mark Hornick, Shyam Nath |
| Tues 24th | 10:45-11:45 | BI Analytics and Oracle NoSQL. The Future of Now | Manish Khera |
| Wed 25th | 8:15-9:15 | Oracle Data Mining – A Component of the Oracle Advanced Analytics Option-Hands-on Lab | Charlie Berger |
| Wed 25th | 9:30-10:30 | Oracle R Enterprise – A Component of the Oracle Advanced Analytics Option-Hands-on Lab | Mark Hornick |

Here are the abstracts from the two main Oracle Advanced Analytics presentations by Charlie Berger and Mark Hornick

Oracle Data Mining – A Component of the Oracle Advanced Analytics Option

This Hands-on Lab provides an introduction to Oracle Data Mining and the Oracle Data Miner GUI.

Oracle Data Mining (ODM), now part of Oracle Advanced Analytics, provides an extensive set of in-database data mining algorithms that solve a wide range of business problems. It can predict customer behavior, detect fraud, analyze market baskets, segment customers, and mine text to extract sentiments. ODM provides powerful data mining algorithms that run as native SQL functions for in-database model building and model deployment. There is no need for the time delays and security risks of data movement.

The free Oracle Data Miner GUI is an extension to Oracle SQL Developer 3.1 that enables data analysts to work directly with data inside the database, explore the data graphically, build and evaluate multiple data mining models, apply ODM models to new data, and deploy ODM’s predictions and insights throughout the enterprise. Oracle Data Miner work flows capture and document the user’s analytical methodology and can be saved and shared with others to automate advanced analytical methodologies.

Oracle R – A component of the Oracle Advanced Analytics Option

This Hands-on Lab provides an introduction to Oracle R Enterprise.

Oracle R Enterprise, a part of the Oracle Advanced Analytics Option, makes the open source R statistical programming language and environment ready for the enterprise by integrating R with Oracle Database. R users can interactively and transparently execute R scripts for statistical and graphical analyses on data stored in Oracle Database. R scripts can be executed in Oracle Database using potentially multiple database-managed R engines – resulting in data parallel execution. ORE also provides a rich set of statistical functions and advanced analytics techniques.

In this lab, attendees will be introduced to Oracle’s strategy for R, including the Oracle R Distribution, Oracle R Enterprise (ORE), and Oracle R Connector for Hadoop (ORCH). We will focus on Oracle R Enterprise with hands-on exercises exploring the transparency layer, embedded R execution, and statistics engine.

Data Visualization Videos & Resources

Here is a selection of videos and websites on Data Visualisations.

Hans Rosling videos of his TED talks

- World Population Growth

- Global Population Growth (TED)

- Asia’s Rise – How and When

- HIV: New facts and stunning data visuals

- Video for the BBC

Other videos

Useful Websites

Oracle Advanced Analytics Video by Charlie Berger

Charlie Berger (Sr. Director Product Management, Data Mining & Advanced Analytics) has produced a video based on a recent presentation called ‘Oracle Advanced Analytics: Oracle R Enterprise & Oracle Data Mining’.

This is a 1 hour video, including some demos, covering product background, product features, recent developments and new additions, examples of how Oracle is including Oracle Data Mining in their Fusion Applications, etc.

Oracle has 2 data mining products: the main in-database Oracle Data Mining, and the more recent extensions to R that give us Oracle R Enterprise.

Check out the video – Click here.

Check out Charlie’s blog at https://blogs.oracle.com/datamining/

Oracle University : 2 Day Oracle Data Mining training course

ODM–Attribute Importance using PL/SQL API

In a previous blog post I explained what attribute importance is and how it can be used in the Oracle Data Miner tool (click here to see blog post).

In this post I want to show you how to perform the same task using the ODM PL/SQL API.

The ODM tool makes extensive use of the Automatic Data Preparation (ADP) function. ADP performs some data transformations, such as binning, normalization and outlier treatment of the data, based on the requirements of each of the data mining algorithms. In addition to these transformations we can specify our own transformations. We do this by creating a settings table, which will contain the settings and transformations we want the data mining algorithm to perform on the data.

ADP is automatically turned on when using the ODM tool in SQL Developer. This is not the case when using the ODM PL/SQL API. So before we can run the Attribute Importance function we need to turn on ADP.

Step 1 – Create the setting table

CREATE TABLE Att_Import_Mode_Settings (

setting_name VARCHAR2(30),

setting_value VARCHAR2(30));

Step 2 – Turn on Automatic Data Preparation

BEGIN

INSERT INTO Att_Import_Mode_Settings (setting_name, setting_value)

VALUES (dbms_data_mining.prep_auto,dbms_data_mining.prep_auto_on);

COMMIT;

END;

Step 3 – Run Attribute Importance

BEGIN

DBMS_DATA_MINING.CREATE_MODEL(

model_name => 'Attribute_Importance_Test',

mining_function => DBMS_DATA_MINING.ATTRIBUTE_IMPORTANCE,

data_table_name => 'mining_data_build_v',

case_id_column_name => 'cust_id',

target_column_name => 'affinity_card',

settings_table_name => 'Att_Import_Mode_Settings');

END;

Step 4 – Select Attribute Importance results

SELECT *

FROM TABLE(DBMS_DATA_MINING.GET_MODEL_DETAILS_AI('Attribute_Importance_Test'))

ORDER BY RANK;

| ATTRIBUTE_NAME | IMPORTANCE_VALUE | RANK |
| --- | --- | --- |
| HOUSEHOLD_SIZE | .158945397 | 1 |
| CUST_MARITAL_STATUS | .158165841 | 2 |
| YRS_RESIDENCE | .094052102 | 3 |
| EDUCATION | .086260794 | 4 |
| AGE | .084903512 | 5 |
| OCCUPATION | .075209339 | 6 |
| Y_BOX_GAMES | .063039952 | 7 |
| HOME_THEATER_PACKAGE | .056458722 | 8 |
| CUST_GENDER | .035264741 | 9 |
| BOOKKEEPING_APPLICATION | .019204751 | 10 |
| CUST_INCOME_LEVEL | 0 | 11 |
| BULK_PACK_DISKETTES | 0 | 11 |
| OS_DOC_SET_KANJI | 0 | 11 |
| PRINTER_SUPPLIES | 0 | 11 |
| COUNTRY_NAME | 0 | 11 |
| FLAT_PANEL_MONITOR | 0 | 11 |

Update on Exalytics Pricing

In my previous blog post (Exalytics : How much will it cost me ?) I gave an outline of the pricing you might expect for an Exalytics machine.

The final pricing that I gave of approx $3+M was based on the per processor licencing.

Yesterday (24th Jan) a post by Manan on the Oracle Business Intelligence blog included the pricing based on the per user licences.

The following is a breakdown of the Exalytics pricing based on the minimum 100 user licencing.

Licence Costs (100 users)

Exalytics machine = $135,000

TimesTen = $300 x 100 users = $30,000

BI Foundation Suite = $3,675 x 100 users = $367,500

Giving a grand total of $532,500.

Support Costs (100 users)

But we need to add the annual support costs to this.

Exalytics machine support = $29,700.

TimesTen support = $66 x 100 users = $6,600

BI Foundations suite = $809 x 100 users = $80,900

Total support costs (100 users) = $117,200

First year & ongoing costs

Total first year cost for an Exalytics machine = $532,500 + $117,200 = $649,700

Plus ongoing annual support costs of $117,200 in year 2 and subsequent years.
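The per-user arithmetic above can be checked with a few lines of Python. This is only a sketch that uses the list prices quoted in this post; the variable and function names are mine and are not part of any Oracle tooling.

```python
# List prices quoted in this post (USD). Names are illustrative only.
USERS = 100                       # minimum named-user count
HARDWARE = 135_000                # Exalytics machine
LICENCE_PER_USER = {"TimesTen": 300, "BI Foundation Suite": 3_675}
HARDWARE_SUPPORT = 29_700         # annual support for the machine
SUPPORT_PER_USER = {"TimesTen": 66, "BI Foundation Suite": 809}


def total_with_users(fixed: int, per_user: dict[str, int], users: int) -> int:
    """Fixed cost plus each per-user price multiplied by the user count."""
    return fixed + sum(price * users for price in per_user.values())


licence_total = total_with_users(HARDWARE, LICENCE_PER_USER, USERS)
support_total = total_with_users(HARDWARE_SUPPORT, SUPPORT_PER_USER, USERS)
first_year = licence_total + support_total

print(f"Licences:   ${licence_total:,}")
print(f"Support:    ${support_total:,}")
print(f"First year: ${first_year:,}")
```

Changing `USERS` shows how quickly the software part of the bill grows compared with the fixed hardware cost.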

Discounted Costs

If you are one of the lucky customers who can negotiate a discount, and I use the same discounts as I did in my previous blog post (25% on hardware and 60% on software), we get:

Year 1 cost of : ($135,000*0.75) + ($397,500*0.40) = $260,250

So it might be possible to get an Exalytics machine for $260+K, plus annual support costs.
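If you want to try other discount levels, the same calculation can be sketched in Python. The 25% and 60% figures are the reported discounts used above, not guaranteed ones, and the function name is just for illustration. Integer percentages are used to avoid floating point rounding.

```python
HARDWARE = 135_000               # Exalytics machine list price (USD)
SOFTWARE = 30_000 + 367_500      # TimesTen + BI Foundation Suite, 100 users


def discounted(price: int, discount_pct: int) -> int:
    """Price after taking off a whole-number percentage discount."""
    return price * (100 - discount_pct) // 100


# Assumed discounts: 25% on hardware, 60% on software.
year_one = discounted(HARDWARE, 25) + discounted(SOFTWARE, 60)
print(f"Discounted year 1 cost: ${year_one:,}")
```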

Exalytics : How much will it cost me ?

Over the past couple of weeks the costing for the Oracle Exalytics machine has been made public by Oracle and there have been a number of articles about it. What I’ve done in this blog post is collate this information. I give what I understand to be the cost of purchasing an Exalytics machine and getting it set up and running.

The pricing structure starts at

Exalytics machine + cost of BI Foundation Suite + TimesTen licences

Exalytics machine = $135,000

TimesTen = $34,500 per processor licence or $300 per named user(min 100 users)

BI Foundation Suite = $450,000 per processor licence or $3,675 per named user (same number of users as for TimesTen = min 100 users)

Annual Support Costs

Exalytics machine = $29,700

TimesTen = 22% of software licence – $7,590 per processor licence or $66 per named user (min 100 users)

BI Foundation Suite = $99,000 per processor licence or $809 per named user(min 100 users)

The Exalytics machine consists of a single server with 1TB of RAM and 4 Intel Xeon E7-4800 processors, with 10 cores each.

So the total cost of an Exalytics machine based on the processor licence will be something approaching $10M. Now this is before the discounts that you can negotiate. There are reports of discounts ranging up to 25% on hardware and 60% on software. The size of the discount is dependent on your size etc. So this initial $10M cost could be reduced to $3M+.
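Here is a rough Python sketch of how a figure near $10M can be reached from the per processor prices. It rests on an assumption that is not stated above: that a core factor of 0.5 applies to these Intel Xeon processors, so the 40 cores count as 20 processor licences. Check the current Oracle core factor table before relying on this.

```python
HARDWARE = 135_000                # Exalytics machine (USD)
TIMESTEN_PER_PROC = 34_500
BI_FOUNDATION_PER_PROC = 450_000

CORES = 4 * 10                    # 4 processors with 10 cores each
CORE_FACTOR = 0.5                 # ASSUMPTION: not stated in this post
proc_licences = int(CORES * CORE_FACTOR)

software = proc_licences * (TIMESTEN_PER_PROC + BI_FOUNDATION_PER_PROC)
list_total = HARDWARE + software

# Reported maximum discounts: 25% on hardware, 60% on software.
discounted_total = HARDWARE * 75 // 100 + software * 40 // 100

print(f"Processor licences: {proc_licences}")
print(f"List price total:   ${list_total:,}")
print(f"After discounts:    ${discounted_total:,}")
```

Under that assumption the list price comes out just under $10M and the discounted price just under $4M, which is in line with the rough figures above. Annual support costs are not included.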

Please note that I may have gotten some or all of this pricing wrong. If I have then forgive me and let me know what is wrong. I can correct it to ensure that we have the correct costs.

ODM 11gR2–Real-time scoring of data

In my previous posts I gave sample code of how you can use your ODM model to score new data.

Applying an ODM Model to new data in Oracle – Part 2

Applying an ODM Model to new data in Oracle – Part 1

The examples given in these previous posts were based on the new data being in a table.

In some scenarios you may not have the data you want to score in a table. For example, you may want to score data as it is being recorded and before it gets committed to the database.

The format of the command to use is

prediction(ODM_MODEL_NAME USING <attribute values>)

prediction_probability(ODM_MODEL_NAME, Target Value USING <attribute values>)

So we can list the model attributes we want to use instead of using the USING * as we did in the previous blog posts

Using the same sample data that I used in my previous posts the command would be:

Select prediction(clas_decision_tree

USING

20 as age,

'NeverM' as cust_marital_status,

'HS-grad' as education,

1 as household_size,

2 as yrs_residence,

1 as y_box_games) as scored_value

from dual;

SCORED_VALUE

------------

0

Select prediction_probability(clas_decision_tree, 0

USING

20 as age,

'NeverM' as cust_marital_status,

'HS-grad' as education,

1 as household_size,

2 as yrs_residence,

1 as y_box_games) as probability_value

from dual;

PROBABILITY_VALUE

-----------------

1

So we get the same result as we got in our previous examples.

Depending on what data we have gathered, we may or may not have all the values for each of the attributes used in the model. In this case we can submit a subset of the values to the function and still get a result.

Select prediction(clas_decision_tree

USING

20 as age,

'NeverM' as cust_marital_status,

'HS-grad' as education) as scored_value2

from dual;

SCORED_VALUE2

-------------

0

Select prediction_probability(clas_decision_tree, 0

USING

20 as age,

'NeverM' as cust_marital_status,

'HS-grad' as education) as probability_value2

from dual;

PROBABILITY_VALUE2

------------------

1

Again we get the same results.

ODM 11gR2–Using different data sources for Build and Testing a Model

There are 2 ways to connect a data source to the Model build node in Oracle Data Miner.

The typical method is to use a single data source that contains the data for both the build and testing stages of the Model Build node. Using this method you can specify what percentage of the data in the data source to use for the Build step, and the remaining records will be used for testing the model. The default is a 50:50 split but you can change this to whatever percentage you think is appropriate (e.g. 60:40). The records will be split randomly into the Build and Test data sets.

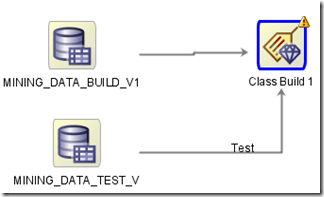
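To illustrate what a random percentage split means, here is a small Python sketch. It is not how Oracle Data Miner implements the split internally (that is not described here); the function name, the `seed` parameter and the record ids are all made up for the example.

```python
import random


def split_build_test(records, build_pct=50, seed=None):
    """Randomly split records into (build, test) lists by percentage."""
    rng = random.Random(seed)        # seeded so the split can be repeated
    shuffled = list(records)
    rng.shuffle(shuffled)
    cut = len(shuffled) * build_pct // 100
    return shuffled[:cut], shuffled[cut:]


# Hypothetical case ids 1..1000, split 60:40.
build, test = split_build_test(range(1, 1001), build_pct=60, seed=42)
print(len(build), len(test))
```

Every record ends up in exactly one of the two sets, which is the property you want from a Build/Test split.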

The second way to specify the data sources is to use a separate data source for the Build and a separate data source for the Testing of the model.

To do this you add a new data source (containing the test data set) to the Model Build node. ODM will assign a label (Test) to the connector for the second data source.

If the label was assigned incorrectly you can swap the data sources. To do this, right click on the Model Build node and select Swap Data Sources from the menu.

Oracle Analytics Update & Plan for 2012

On Friday 16th December, Charlie Berger (Sr. Director, Product Management, Data Mining & Advanced Analytics) posted the following on the Oracle Data Mining forum on OTN.

“… soon you’ll be able to use the new Oracle R Enterprise (ORE) functionality. ORE is currently in beta and is targeted to go General Availability in the near future. ORE brings additional functionality to the ODM Option, which will then be renamed to the Oracle Advanced Analytics Option to reflect the significant adv. analytical functionality enhancements. ORE will allow R users to write R scripts and run them inside the database and eliminate and/or minimize data movement in/out of the DB. ORE will provide R to SQL transparency for SQL push-down to in-DB SQL and and expanding library of Oracle in-DB statistical functions. Packages that cannot be pushed down will be run in embedded R mode while the DB manages all data flows to the multiple R engines running inside the DB.

In January, we’ll open up a new OTN discussion forum specifically for Oracle R Enterprise focused technical discussions. Stay tuned.”

I’m looking forward to getting my hands on the new Oracle R Enterprise in 2012. In particular I’m keen to see what additional functionality will be added to the Oracle Data Mining option in the DB.

So watch out for the rebranding to Oracle Advanced Analytics.

Charlie – any chance of an advance copy of ORE and related DB bits and bobs?
