Oracle Data Miner

How to speed up your Oracle Data Mining with in-memory and parallel

Have you found running a workflow in Oracle Data Miner slow, or running the scripts in the database slow?

No? Good, because I haven’t found it slow.

But (there is always a but) it really depends on the volume of data you are dealing with. The vast majority of us, who aren’t the size of Google, Amazon, etc., have data volumes that are not really that large, and a basic server can process many millions of records extremely quickly using Oracle Data Mining.

But what if we have a large volume of data? In one recent project I had a data set containing over 3.5 billion records. Now that is big data. All of this data was sitting in an Oracle Database.

So how can we process over 3.5 billion records in a couple of seconds, building 4 machine learning models in that time? Is that really possible with just using an Oracle Database? Yes is the answer and very easily. (Surely I needed Hadoop and Spark to process this data? Nope!)

The Oracle Data Miner (ODMr) tool comes with a new feature in SQL Developer 4 (and higher) that allows you to manage the use of Parallel execution and the in-memory DB features. These can be accessed on the ODMr Worksheet tool bar.

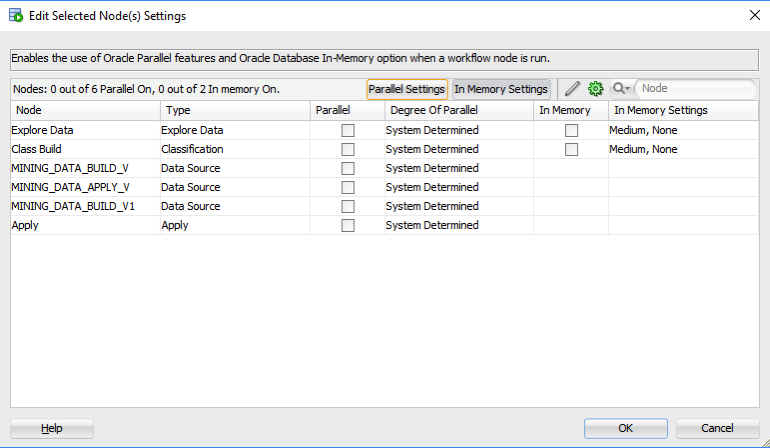

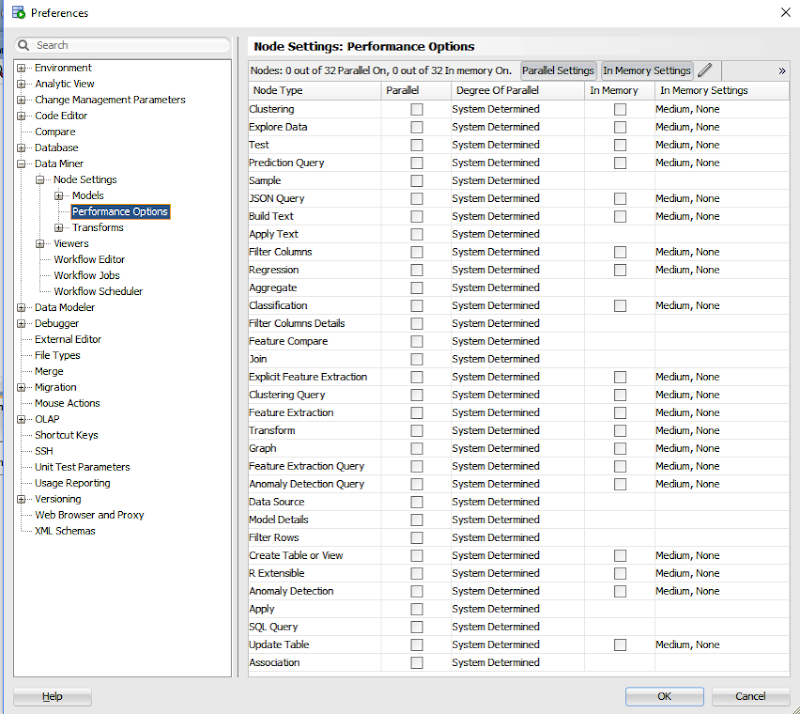

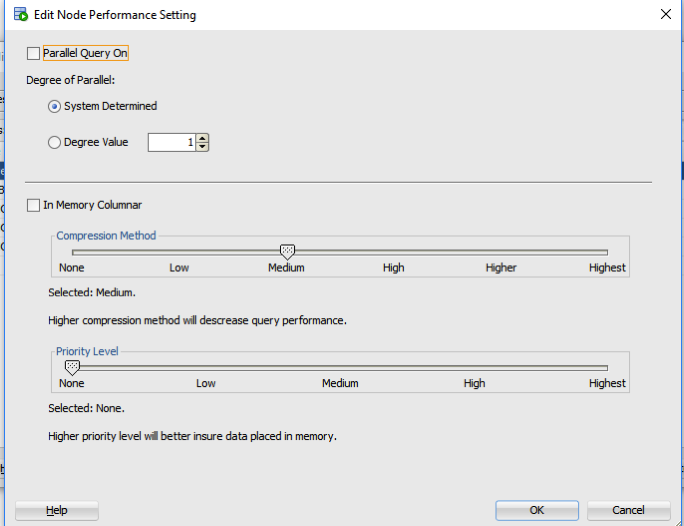

The best time to look at these settings is when you have created your workflow and are ready to run it for the first time. When you click on the ‘Performance Options’ link, you will get the following window. It will display the list of nodes you have in the workflow and will then indicate if the Degree of Parallel and the In-Memory options can be set for each of the nodes.

The default values are shown and you can change these. For example, in a lot of scenarios you might prefer to leave the Degree of Parallel as System Determined. This will then use whatever the default is for the database, as controlled by the DBA. If you want to specify a particular value then you can, for example setting the degree of parallel to 4 for the ‘Class Build’ node in the above image. Similarly, the in-memory option will only be available for nodes where it is applicable. This will be where there is a lot of data processing (preparing data, transforming data, performing specific statistics, etc) and for storing any data that is generated by Oracle Data Mining.
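For anyone curious what a degree of parallel means at the SQL level, here is a rough sketch of the standard Oracle ways of asking for one. This is not the code ODMr generates; the table name `case_data` is hypothetical, and whether a requested degree is honoured depends on how your DBA has configured the database.

```sql
-- Hypothetical table name: case_data. Illustration only, not ODMr-generated code.

-- Ask for a degree of parallelism of 4 for a single statement, using a hint.
SELECT /*+ PARALLEL(4) */ COUNT(*)
FROM   case_data;

-- Or set it for every query in the current session.
ALTER SESSION FORCE PARALLEL QUERY PARALLEL 4;

-- Or attach a default degree to the table itself.
ALTER TABLE case_data PARALLEL 4;

-- Leaving the number off lets the database work out the degree,
-- which is the closest match to 'System Determined' in the tool.
ALTER TABLE case_data PARALLEL;
```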

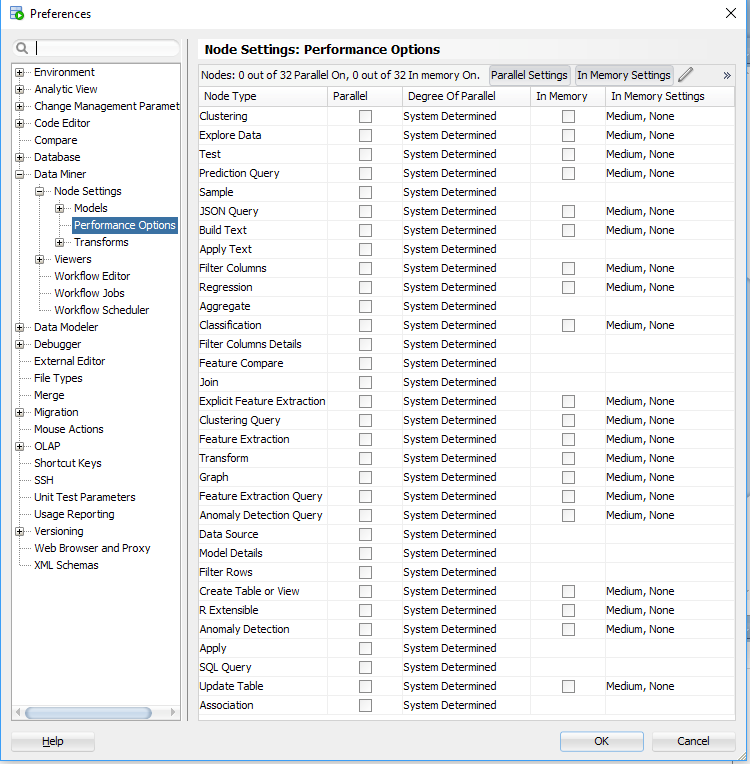

But what if you want to change the default values? You can change these at a global level within the SQL Developer Preferences. Here you can set the default to be used for each of the different types of Oracle Data Mining nodes.

I mentioned at the start that I’ve been able to build 4 machine learning models using Oracle Data Mining on a data set of over 3.5 billion records, all in a couple of seconds. In my scenario Parallel was set to 16 and we didn’t use in-memory as we didn’t have the licence for it. You can see that machine learning at lightning speed (ish) is possible. This timing is only for building the models, which is the step that consumes the most resources and time. When it comes to scoring the data, that is lightning fast. In my scenario, scoring over 300,000 records took less than a second, and I didn’t use parallel or anything else to speed things up, because we didn’t need to.
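Scoring with an in-database model is just a SQL query, which is a large part of why it is so quick. The sketch below uses the standard `PREDICTION` and `PREDICTION_PROBABILITY` SQL functions. The model name `churn_clas_svm`, the table `new_customers` and the column `cust_id` are hypothetical names used for illustration.

```sql
-- Hypothetical names: model churn_clas_svm, table new_customers, column cust_id.
-- USING * passes every column of the row to the model as a potential input.
SELECT cust_id,
       PREDICTION(churn_clas_svm USING *)             AS predicted_value,
       PREDICTION_PROBABILITY(churn_clas_svm USING *) AS prediction_probability
FROM   new_customers;
```

Because it is ordinary SQL, the same query can be wrapped in a view or used in an INSERT ... SELECT to store the scores.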

Go give it a try!

Scheduling ODMr Workflows in SQL Developer 4.2+

A new feature for Oracle Data Mining (ODM) (part of SQL Developer 4.2) is the ability to schedule an ODM workflow to run at a defined time or frequency.

This blog post will bring you through the steps needed to schedule an ODM workflow using this new feature.

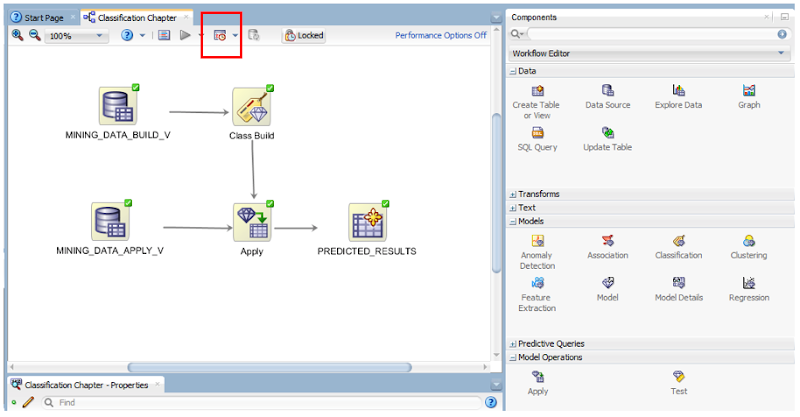

The first thing that you need is an ODMr workflow. The following image is a familiar looking one that I typically use to get a very quick demo of how easy it is to build a machine learning workflow.

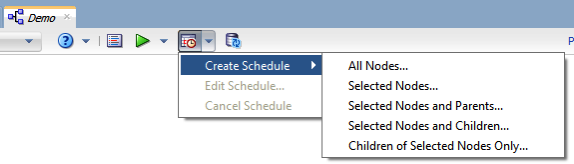

Just above the workflow worksheet we have a row of icon buttons. In the above image one of these is highlighted by a red box. This is the workflow scheduler. So go ahead and click on it.

In most cases you will want to run the entire workflow. The default option presented to you is ‘All Nodes’. If you would only like a subset of the nodes to run, you can select the node in the workflow and then click on the scheduler icon. In our example we are going to run the entire workflow, so select ‘All Nodes’ from the menu.

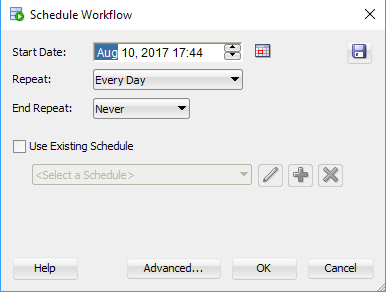

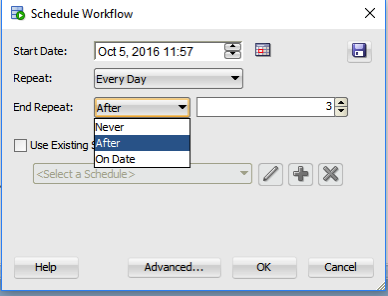

The main scheduler window will open. Here you can set the Start Date and time of the first run, the Repeat frequency (none, every day, every week or custom) and when to End the Repeat (Never, After, On Date). To schedule a once-off run of the workflow just set the Date and Time and set the Repeat to ‘None’; End Repeat should disappear in this instance. If Repeat is set to another value then you can set a value for End Repeat.

Go ahead and run the scheduler by clicking on the OK button.

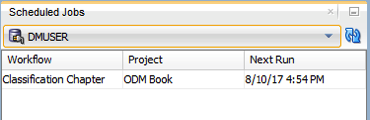

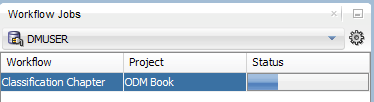

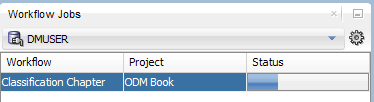

A Scheduled Jobs window should open that will display the details of the scheduled job. When this job is run in the database, this will be shown in the Workflow Jobs window. Here you can see and monitor the progress of the workflow.
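If you prefer a query to a window, the Oracle Scheduler dictionary views give similar information. The sketch below assumes that the scheduled workflow shows up as an Oracle Scheduler job owned by your schema. That is an assumption about how the tool implements scheduling, so check what job names actually appear in your own schema.

```sql
-- Assumes the ODMr scheduled workflow is an Oracle Scheduler job in your schema.

-- Jobs that are defined, with their state and next run time.
SELECT job_name, state, enabled, repeat_interval, next_run_date
FROM   user_scheduler_jobs
ORDER  BY next_run_date;

-- What happened on previous runs.
SELECT job_name, status, actual_start_date, run_duration
FROM   user_scheduler_job_run_details
ORDER  BY log_date DESC;
```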

And that’s it. Nice and simple.

But there is something you need to be WARNED about. When you schedule a workflow, Oracle Data Miner will lock the workflow. This is to ensure that no changes can be made to the scheduled workflow. This is indicated with the Locked button appearing on the icon menu. If you click on this button to unlock the workflow, it will also cancel your scheduled jobs associated with this workflow.

Also when the scheduled workflow is finished, the workflow will remain locked. So you will have to click on this Locked button to unlock the workflow.

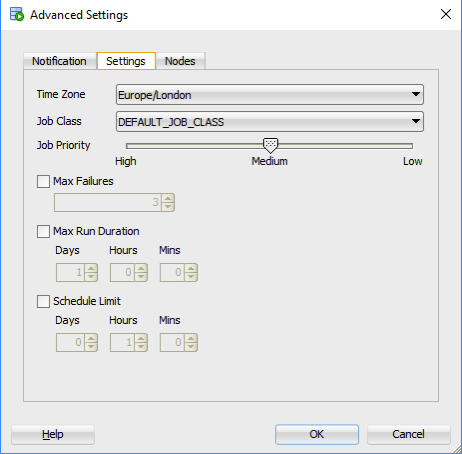

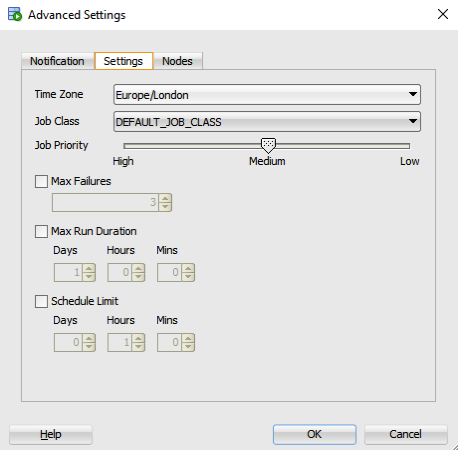

There are a few additional advanced features. These can be found by clicking on the ‘Advanced…’ button in the main scheduler window. The first tab displayed allows you to specify if you want an email sent for the different stages of the scheduled job. The second tab allows you to set the Job Priority, Max Failures, Max Run Duration and Schedule Limits.
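These advanced settings have close equivalents in the `DBMS_SCHEDULER` package, so the following is a sketch of how the same things could be set by hand on an ordinary Scheduler job. The job name `ODMR_DEMO_WF_JOB` and the email address are hypothetical, the values are arbitrary examples, and email notification only works if your DBA has configured the Scheduler email server.

```sql
-- Hypothetical job name and email address; values are examples only.
BEGIN
  -- Priority runs from 1 (highest) to 5 (lowest); the default is 3.
  DBMS_SCHEDULER.SET_ATTRIBUTE(
    name      => 'ODMR_DEMO_WF_JOB',
    attribute => 'job_priority',
    value     => 2);

  -- Stop rescheduling the job after this many consecutive failures.
  DBMS_SCHEDULER.SET_ATTRIBUTE(
    name      => 'ODMR_DEMO_WF_JOB',
    attribute => 'max_failures',
    value     => 3);

  -- Raise an event if a run takes longer than two hours.
  DBMS_SCHEDULER.SET_ATTRIBUTE(
    name      => 'ODMR_DEMO_WF_JOB',
    attribute => 'max_run_duration',
    value     => INTERVAL '2' HOUR);

  -- Send an email when the job succeeds or fails.
  DBMS_SCHEDULER.ADD_JOB_EMAIL_NOTIFICATION(
    job_name   => 'ODMR_DEMO_WF_JOB',
    recipients => 'analyst@example.com',
    events     => 'JOB_SUCCEEDED, JOB_FAILED');
END;
/
```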

Auditing Oracle Data Mining model usage

In a previous blog post I talked about how you can rename and comment your Oracle Data Mining models. This is to allow you to easily see and understand the intended use of the data mining model.

Another feature available to you is to audit the usage of the data mining models. As your data mining environment grows to many 10s or more typically 100s of models, you will need to have some way of tracking their usage. This can allow you to discover which models are being used frequently and which are being used infrequently. You can then use this information to investigate if there are any issues. Or in some companies I’ve seen an internal charging scheme in place for each time the models are used.

The following outlines the steps required to set up the auditing of your models and how to inspect the usage.

Note: You will need the AUDIT_ADMIN role to audit the models.

First create an audit policy for the data mining model in a particular schema.

CREATE AUDIT POLICY oaa_odm_audit_usage ACTIONS ALL ON MINING MODEL dmuser.high_value_churn_clas_svm;

This creates a policy that monitors all activity on the data mining model HIGH_VALUE_CHURN_CLAS_SVM in the DMUSER schema.

Now we need to enable the policy and allow it to track all activity on the model.

AUDIT POLICY oaa_odm_audit_usage BY oaa_model_user;

This will track all usage of the data mining model by the schema called OAA_MODEL_USER. We can then use the following query to search for the audit records for this model.

SELECT dbusername,
       action_name,
       system_privilege_used,
       return_code,
       object_schema,
       object_name,
       sql_text
FROM   unified_audit_trail
WHERE  object_name = 'HIGH_VALUE_CHURN_CLAS_SVM';
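To turn the raw audit records into the kind of usage tracking mentioned earlier (which models are used often, which are hardly used), the same view can be aggregated. This is a sketch that assumes the audited models live in the DMUSER schema, as in the example policy.

```sql
-- Usage count per model, user and action, with the most recent use.
-- Assumes the audited models are in the DMUSER schema.
SELECT object_name,
       dbusername,
       action_name,
       COUNT(*)             AS times_used,
       MAX(event_timestamp) AS last_used
FROM   unified_audit_trail
WHERE  object_schema = 'DMUSER'
GROUP  BY object_name, dbusername, action_name
ORDER  BY times_used DESC;
```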

But there is a little problem with using what I’ve just shown you above. The problem is that it will track all activity on the data mining model. Perhaps this isn’t what we really want. Perhaps we only want to track certain activity on the data mining model. Instead of creating the policy using ‘ACTIONS ALL’, we can list out the actions or operations we want to track. For example, we want to track when it is used in a SELECT. The following shows how you can set this up for just SELECT.

CREATE AUDIT POLICY oaa_odm_audit_select ACTIONS SELECT ON MINING MODEL dmuser.high_value_churn_clas_svm;
AUDIT POLICY oaa_odm_audit_select BY oaa_model_user;

The list of individual audit events you can use include:

- AUDIT

- COMMENT

- GRANT

- RENAME

- SELECT

A policy can be set up to track one or more of these events. For example, if we wanted a policy to track SELECT and GRANT, we would list each event, separated by a comma, after the ACTIONS keyword.

CREATE AUDIT POLICY oaa_odm_audit_select_grant ACTIONS SELECT ON MINING MODEL dmuser.high_value_churn_clas_svm, GRANT ON MINING MODEL dmuser.high_value_churn_clas_svm;
AUDIT POLICY oaa_odm_audit_select_grant BY oaa_model_user;
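Once a few policies exist it is handy to be able to see which ones are enabled and to tidy up the ones you no longer need. A minimal sketch, using the first policy created in this post as the example; as with creating the policies, this needs the AUDIT_ADMIN role.

```sql
-- Which of the policies from this post are currently enabled?
SELECT *
FROM   audit_unified_enabled_policies
WHERE  policy_name LIKE 'OAA_ODM_AUDIT%';

-- Stop auditing under a policy for the given user ...
NOAUDIT POLICY oaa_odm_audit_usage BY oaa_model_user;

-- ... and then remove the policy itself. A policy must be disabled before it can be dropped.
DROP AUDIT POLICY oaa_odm_audit_usage;
```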

Using the Identity column for Oracle Data Miner

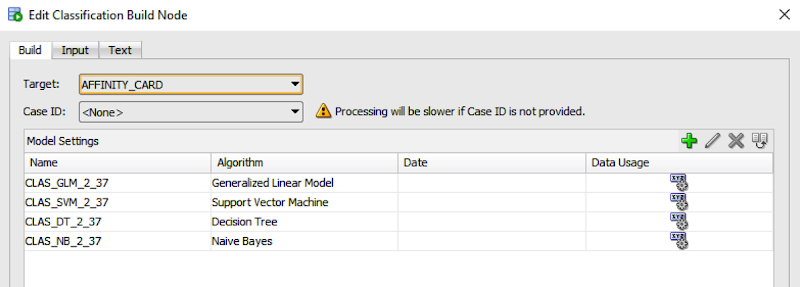

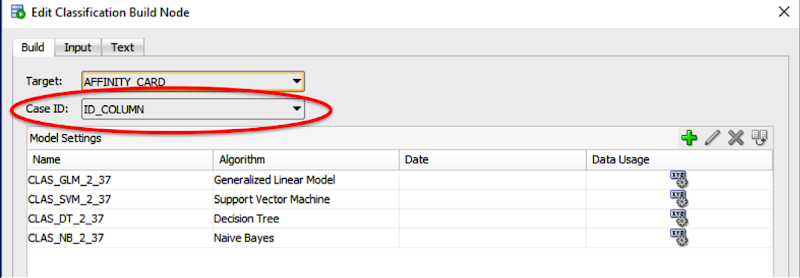

If you are a user of the Oracle Data Miner tool (the workflow data mining tool that is part of SQL Developer), then you will have noticed that for many of the algorithms you can specify a Case Id attribute along with, say, the target attribute.

The idea is that you have one attribute that is a unique identifier for each case record. This may or may not be the case in your data model and you may have a multiple attribute primary key or case record identifier.

But what is the Case Id field used for in Oracle Data Miner?

Based on the documentation this field does not need to have a value. But it is recommended that you do identify an attribute for the Case Id, as this will allow for reproducible results. What this means is that if we run our workflow today and again in a few days’ time, on the exact same data, we should get the same results. So the Case Id allows this to happen. But how? Well, it looks like the attribute specified for the Case Id is used as part of the hashing algorithm that partitions the data into train and test data sets for classification problems.
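To see why a stable Case Id gives a reproducible split, here is a sketch of hash-based partitioning using the standard `ORA_HASH` function. This is not the actual algorithm used by Oracle Data Miner, only an illustration of the idea. The table `customer_cases`, the column `case_id`, the 60/40 split and the seed value are all assumptions made up for the example.

```sql
-- Illustration of the idea only, not ODMr's own algorithm.
-- Hypothetical table customer_cases with a unique case_id column.
-- ORA_HASH(expr, 99, seed) maps each case_id to a bucket from 0 to 99.
-- The same case_id and seed always give the same bucket, so the split is repeatable.
SELECT case_id,
       CASE
         WHEN ORA_HASH(case_id, 99, 12345) < 60 THEN 'TRAIN'
         ELSE 'TEST'
       END AS data_set
FROM   customer_cases;
```

If the Case Id values change between runs (as can happen with ROWID), the buckets change too, and so do the records that land in each data set.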

So if you don’t have a single attribute case identifier in your data set, then you need to create one. There are a few options open to you to do this.

- Create one: write some code that will generate a unique identifier for each of your case records based on some defined rule.

- Use a sequence: create a sequence and update the records to use it.

- Use ROWID: use the unique row identifier value. You can write some code to populate this value into an attribute. Or create a view on the table containing the case records and add a new attribute that will use the ROWID. But if you move the data, then the next time you use the view you will be getting different ROWIDs and that in turn will mean we may have different case records going into our test and training data sets. So our workflows will generate different results. Not what we want.

- Use ROWNUM: This is kind of like using the ROWID. Again we can have a view that will select ROWNUM for each record. Again we may have the same issues, but if we have our data ordered in a way that ensures we get the records returned in the same order then this approach is OK to use.

- Use Identity Column: In Oracle 12c we have a new feature called Identity Column. This kind of acts like a sequence, but we can define an attribute in a table to be an Identity Column, and as records are inserted into the table (in our scenario our case table) this column will automatically generate a unique number for our data. Again if we need to repopulate the case table, you will need to drop and recreate the table to get the Identity Column to reset, otherwise the newly inserted records will start with the next number of the Identity Column.
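As a rough sketch of the sequence and ROWNUM options from the list above: the table `customer_data`, its columns `cust_key1` and `cust_key2`, and the other object names are hypothetical and only there for illustration.

```sql
-- Hypothetical table customer_data with a two-column key (cust_key1, cust_key2).

-- Sequence option: add a column and fill it from a sequence.
CREATE SEQUENCE case_id_seq;

ALTER TABLE customer_data ADD (case_id NUMBER);

UPDATE customer_data
SET    case_id = case_id_seq.NEXTVAL;

COMMIT;

-- ROWNUM option: number the rows in a view. The ORDER BY goes in an
-- inline view so that ROWNUM is assigned after the rows are sorted,
-- which keeps the numbering the same while the data is unchanged.
CREATE OR REPLACE VIEW customer_data_v AS
SELECT ROWNUM AS case_id_rn, t.*
FROM  (SELECT *
       FROM   customer_data
       ORDER  BY cust_key1, cust_key2) t;
```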

Here is an example of using the Identity Column in a case table.

CREATE TABLE case_table ( id_column NUMBER GENERATED ALWAYS AS IDENTITY, affinity_card NUMBER, age NUMBER, cust_gender VARCHAR2(5), country_name VARCHAR2(20) ... );

You can now use this Identity Column as the Case Id in your Oracle Data Miner workflows.

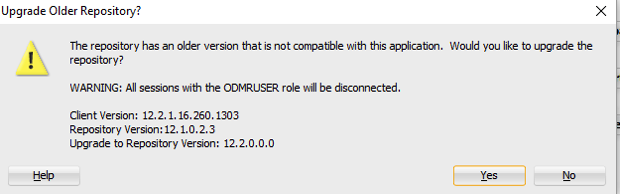

Oracle Data Miner (ODMr) 4.2 Repository Upgrade

With each new release of the Oracle Data Miner (ODMr) tool (part of SQL Developer) an upgrade of your ODMr Repository is needed. This is because of the numerous new features in the tool. This is particularly the case with ODMr (SQLDev) 4.2.

Now most of the new features for ODMr 4.2 will not be visible until you are running a 12.2 Database. But a small number of new features are available if you are running an earlier version of the DB. Check out my blog post on some of these.

Before upgrading the ODMr repository, just like with any upgrade, make sure to do your backups. Although there is some copying of objects done during the repository upgrade (long story, but a few versions ago my ODMr repository and work got wiped during an upgrade), you should always export and save your workflows. You will need to do this using your current version of ODMr/SQL Dev before you start using ODMr 4.2.

When you have saved your workflows etc you can then start using ODMr/SQLDev 4.2.

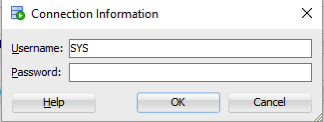

The easiest way to do the ODMr 4.2 Repository upgrade is to let the tool do it for you. You can do this by trying to open one of your ODMr connections.

IMPORTANT: You will need to have the SYS password for the ODMr upgrade, so have your DBA do this step for you or have them on standby to enter the password for you.

NOTE: This upgrade is being done on a CDB/PDB 12.2 DB.

When prompted enter the SYS password.

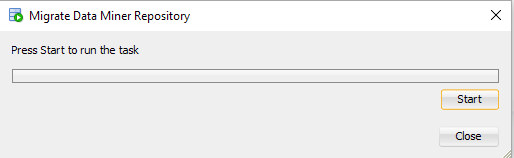

When promoted click on the Start button.

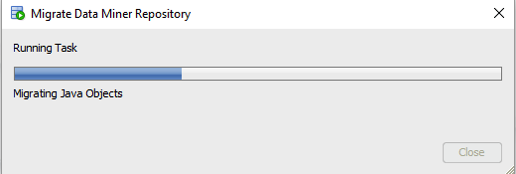

The progress bar will let you know things are going.

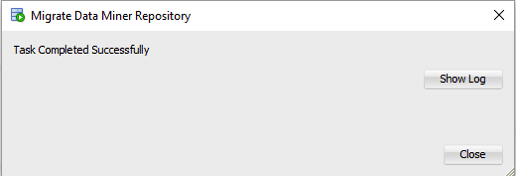

When complete you will get the following.

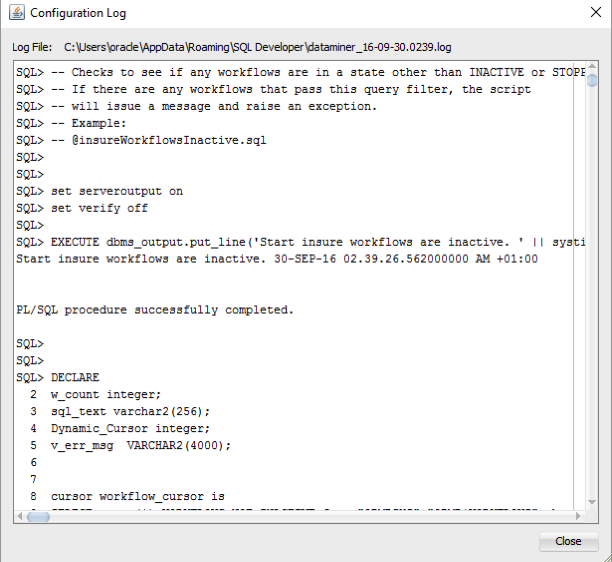

It is always good to check the Log file/report, especially if you encounter errors!

Job Done!

You can now start using all (well almost all) the new features of ODMr 4.2.

When the 12.2 Database is available you will get to see lots more features.

Oracle Data Miner 4.2 EA : New Features

A couple of weeks ago during the madness of Oracle Open World there were some new product releases and lots of updates to existing products.

One such product was SQL Developer. They released an Early Adopter version (EA1). This is where you can try out the new version of the product, but you need to be careful as it is not the GA/Production version. So it may have some “features”.

One component of SQL Developer is the Oracle Data Miner tool. This is a GUI, workflow-based tool built on the Oracle Advanced Analytics option. At OOW we got to hear about the various new Oracle Data Mining features that are coming with Oracle 12.2 Database. For Oracle Data Miner (ODMr) 4.2 (EA) there are a lot of new features but most of these are hidden and will only become available when you are using the Oracle 12.2 DB.

But if you are using a 12.1 (or earlier) database then there are some new features. I’ve been having a bit of a look around the EA1 release to see what is new and available to us now (while we wait for 12.2).

If you are on Oracle 12.1 DB or earlier there are two main new features. These are a new Workflow Scheduler and being able to specify in-memory options for ODMr objects. These can be easily found on the ODMr menu bar, and are highlighted in the following image.

Let us now have a quick look at these.

ODMr Workflow Scheduler

The Workflow Scheduler allows us to take an ODMr Workflow and schedule it to run in the Oracle Database at a defined time or on a defined schedule. Previously we would have to write the SQL and PL/SQL code to enable the scheduling. Plus the ODMr workflow was output as a number of SQL scripts. So it was a little bit of a challenge to get the workflow running on a regular basis.
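To give a feel for the hand-written approach, here is a minimal sketch of scheduling some PL/SQL with `DBMS_SCHEDULER.CREATE_JOB`. The procedure `run_churn_workflow` is hypothetical; it stands in for whatever wrapper you would have written around the SQL scripts generated from the workflow. The job name and timings are examples only.

```sql
-- run_churn_workflow is a hypothetical wrapper procedure, not part of ODMr.
BEGIN
  DBMS_SCHEDULER.CREATE_JOB(
    job_name        => 'RUN_CHURN_WORKFLOW_JOB',
    job_type        => 'PLSQL_BLOCK',
    job_action      => 'BEGIN run_churn_workflow; END;',
    start_date      => SYSTIMESTAMP + INTERVAL '1' HOUR,   -- first run in an hour
    repeat_interval => 'FREQ=DAILY; BYHOUR=2; BYMINUTE=0; BYSECOND=0',
    end_date        => NULL,                               -- never ends
    enabled         => TRUE,
    comments        => 'Daily run of the scripts generated from the workflow');
END;
/
```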

Now with the new in-built ODMr Scheduler we can quickly and easily do this without having to write a line of SQL or PL/SQL. The tool will look after the hard bit for us. We can schedule the entire workflow or certain parts of the workflow.

When setting up your schedule you can pick the Start Date, how frequently you would like it run (daily, weekly, monthly or some other custom frequency), when it should end (never, after X number of runs or on a specific date). You can also re-use an existing schedule.

For the advanced settings you can set up email notification, the job priority level, maximum run durations and limits, and the timezone to use.

ODMr In-memory Options

To access the in-memory options you can click on the ‘Performance Options’ button on the ODMr menu or you can access it via the menu (Tools -> Preferences) to get the complete list of in-memory settings.

When you use ODMr to build your data mining workflows, ODMr will create a number of objects for each of the nodes of the workflow. These are typically created as tables in your schema. The previous version of ODMr introduced the Performance Options, where you could set the degree of parallel to use for some Nodes and the underlying SQL and PL/SQL code that is generated.

Now we can specify if the tables created should be in-memory, and avail of the significantly better response times when you are using the data in these tables. This is particularly useful as we work with larger and larger data sets and we want lightning-fast response from some of our data mining tasks.

In addition to turning on the in-memory option for certain nodes, we can also specify the in-memory configuration settings such as the level of Columnar Compression to use and the Priority Level.
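For reference, this is roughly what those in-memory settings look like when written as SQL against a table. It is a sketch, not the code ODMr generates. The table name `odmr_demo_output` is hypothetical, the compression and priority levels are just examples, and the Database In-Memory option needs to be licensed and have memory allocated to it by your DBA.

```sql
-- Hypothetical table name; requires the Database In-Memory option.

-- Put the table in the in-memory column store with a chosen
-- compression level and population priority.
ALTER TABLE odmr_demo_output
  INMEMORY MEMCOMPRESS FOR QUERY LOW PRIORITY HIGH;

-- Check whether the table has been populated into memory.
-- (Access to V$ views may need to be granted by your DBA.)
SELECT segment_name, populate_status, inmemory_size, bytes_not_populated
FROM   v$im_segments
WHERE  segment_name = 'ODMR_DEMO_OUTPUT';

-- Take it back out of the column store.
ALTER TABLE odmr_demo_output NO INMEMORY;
```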

(I’ve been on the 12.2 beta so I’ve had a chance to try out many of the new features. There is some good stuff coming and I’ll have blog posts about these when 12.2 comes GA)

Oracle Data Miner (ODM 4.1) New Features

With the release of SQL Developer 4.1 we also get a number of new features with Oracle Data Miner (ODMr). These include:

- Data Source node can now include data sources that contain JSON data, generating JSON schema and has a JSON viewer

- Create Table can now create data in JSON

- JSON Query Node allows you to view, query and process JSON data, combine it with relational data, generate sub-group by, and nested columns to be part of input to algorithms

- New PL/SQL APIs for managing Data Miner projects and workflows. This includes run, cancel, rename, delete, import and export of workflows using PL/SQL.

- New ODMr Repository views that allow us to query and monitor our workflows.

- Transformation Node now allows you different ways of handling NULLS.

- Transformation Node now allows us to create Custom Bins, define bin labels and bin values

- Overall Workflow and ODMr environment improvements to allow for greater efficiency in workflow behaviour and interactions with the database. So using ODMr should feel quicker and more responsive.

Watch out for the Gotchas: Although support for JSON has been added to ODMr, as outlined above, you are still a bit limited in what else you can do with your JSON data. Based on the documentation you can use JSON data in the Association and Classification build nodes.

I’m not sure about the other nodes and this will need a bit of investigation to see what nodes can and cannot use JSON data. I’m sure this will all be sorted out in the next release.

Keep an eye out for some blog posts over the coming weeks on how to explore and use these new features of Oracle Data Miner.

SQL Developer 4.1 : ODM Repository upgrade

Earlier today (4th May) SQL Developer 4.1 was released 🙂

For those of you who use the Oracle Data Miner tool (that is part of SQL Developer) you will need to upgrade your repository. The following steps will walk you through the process.

1. Download SQL Developer (you do need to have Java 8 installed). This download does not come with the JRE built into it. The version with the JRE built in usually comes a few days after the release.

2. Unzip the downloaded file and copy the extracted directory to where you like to keep your applications etc.

3. Start up SQL Developer by running the sqldeveloper.exe file. This will be located in the extracted folder \sqldeveloper-4.1.0.19.07-no-jre\sqldeveloper

4. If you have a previous install of SQL Developer you will be asked if you want to migrate your current settings. Click on the Yes button and all your connections and settings will be migrated.

5. To upgrade your Oracle Data Miner (ODMr) repository, you will need to open one of your ODMr connections. When you do this ODMr will check to see if the repository in your database needs to be updated. If it does you will get the following window.

6. Enter the password for SYS.

7. When you get the following window you can click on the Start button to begin the Oracle Data Miner repository upgrade.

8. After a couple of minutes (depending on the number of ODM Workflows and ODM schemas you have) you will get the following window.

Congratulations. You have now upgraded your Oracle Data Miner repository.

If you do encounter any errors during the upgrade of the repository then you should get onto the OTN Forum for Oracle Data Miner and report the errors. The Oracle Data Miner team monitor this forum and will get back to you quickly with a response.

Evaluating Classification Models in ODM (Part 2)

In a previous blog post I talked about and showed some of the typical statistical methods to evaluate the classification models that you develop. Click to see this (first) blog post.

In this blog post I want to show you how you can go about evaluating your classification models that you develop using Oracle Data Miner (part of SQL Developer).

What I’m not going to show you here is how to develop classification models using Oracle Data Mining 😦 I’ve had several blog posts over the years on this topic. So you can go and search for those posts or alternatively this topic is covered in a lot more detail in my Oracle Data Miner book 🙂

After you have developed your ODM models in Oracle Data Miner you have 2 levels of detail available to you. The first of these is the Compare Test Results. You can find this by right clicking on the Classification node of your ODM Workflow, as shown below.

When you select the Compare Test Results a new (worksheet) tab will open. This will display summary statistics and graphics for each Oracle Data Mining model created. In the following image an ODM model was created for each In-Database Classification algorithm in the Oracle Database.

Here we get to see 2 of the statistical measures that I talked about in my previous blog post, the (average) Accuracy and the Overall Accuracy. We can look at and examine this in a bit more detail in a minute. A new measure that I haven’t mentioned before is the Predictive Confidence.

The Predictive Confidence measure provides an estimate of the overall goodness of the model. Predictive Confidence is a number between 0 and 1. Data Miner displays Predictive Confidence as a percent.

- If Predictive Confidence=0, then it indicates that the predictions of the model are no better than the predictions made by using the naive model.

- If Predictive Confidence=1, then it indicates that the predictions are perfect.

- If Predictive Confidence=0.5, then it indicates that the model has cut the error of a naive model by 50%.

So the higher the value for Predictive Confidence the better the model. Particularly when it is higher than 50%.
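A small worked example may help. The Oracle Data Miner documentation describes Predictive Confidence along the lines of MAX(1 - error of model / error of naive model, 0), where the model error is 1 minus the average accuracy and the naive model error is (number of target classes - 1) / (number of target classes). Treat that formula as an assumption to verify against the documentation for your version. The input values below are made up for illustration and are not from the models in this post.

```sql
-- Made-up inputs: average accuracy of 75% on a two-class target.
-- The formula is an assumption based on the ODMr documentation; verify before relying on it.
WITH inputs AS (
  SELECT 0.75 AS avg_accuracy,
         2    AS num_classes
  FROM   dual
)
SELECT GREATEST(
         1 - (1 - avg_accuracy) / ((num_classes - 1) / num_classes),
         0) AS predictive_confidence
FROM   inputs;
-- Model error is 0.25 and naive error is 0.5, so the result is 0.5:
-- the model has cut the error of the naive model by half.
```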

After evaluating these summary statistical measures you will want to drill down on these to see the lower level statistical measures, for example you will want to see the confusion matrix and the corresponding statistical measures. To view the confusion matrix all you need to do is to click on the Performance Matrix tab. Before you can really start evaluating the models you will need to click on the Display drop down and select ‘Show Detail’ from the drop down list. Another thing you will need to do is to click/check the ‘Show totals and codes’ check box on the lower part of the screen. This will give you some of the statistical measures that I outlined in my previous blog post.

When you examine the statistical measures displayed on the screen you will notice that some of the statistical measures I outlined in my previous blog post are missing. Some of these missing measures are ones that you will want to consider and use as part of your evaluation of your ODM models.

So how do you find out what these missing statistical measures are? Well, ODM does not display these so the only real option open to you is to go and calculate them yourself 😦 This is not ideal but these are relatively easy to calculate and you can do this on a piece of paper or you can open your spreadsheet software and let it calculate them for you (once you have defined the formula for each). Here is an example of the completed/extended confusion matrix based on the results from the CLAS_SVM_1_59 model shown in the above image.
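If you would rather let the database do the arithmetic than a spreadsheet, the calculations fit in a single query. The sketch below uses the standard textbook definitions of some commonly used measures; it may not match exactly the set of measures from the earlier post. The four counts are made-up numbers for illustration, not the results of the CLAS_SVM_1_59 model, so replace them with the values from your own confusion matrix.

```sql
-- Made-up confusion matrix counts; replace with your own.
-- tp = true positives, fp = false positives,
-- fn = false negatives, tn = true negatives.
WITH cm AS (
  SELECT 120 AS tp, 30 AS fp, 25 AS fn, 825 AS tn
  FROM   dual
)
SELECT ROUND((tp + tn) / (tp + fp + fn + tn), 4) AS accuracy,
       ROUND((fp + fn) / (tp + fp + fn + tn), 4) AS misclassification_rate,
       ROUND(tp / (tp + fp), 4)                  AS model_precision,
       ROUND(tp / (tp + fn), 4)                  AS recall_sensitivity,
       ROUND(tn / (tn + fp), 4)                  AS specificity,
       ROUND(2 * tp / (2 * tp + fp + fn), 4)     AS f1_score
FROM   cm;
```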

In my next blog post I will look at how you can evaluate a classification model that was developed using the in-database Oracle Data Mining algorithms (Oracle Data Miner GUI was not used). The evaluation criteria that I will show will be based on the statistical methods that I highlighted in my first blog post on this topic.

ODMr 4.1 EA1 Repository Upgrade

If you are downloading the EA1 of SQL Developer that includes Oracle Data Miner (ODMr), and you intend to use Oracle Data Miner then you will need to update the ODMr Repository.

You could do it the hard way and run the upgrade repository sql scripts that are located in the …\sqldeveloper-4.1.0.17.29-no-jre\sqldeveloper\dataminer\scripts directory.

Or you could do it the easy way and let the inbuilt functionality in Oracle Data Miner do it for you.

To do it the easy way all you need to do is to open the ODMr Connections window and then double click on one of your ODM connections.

ODMr will check the version of the repository you have installed and if needed it will prompt you about upgrading the repository. Select Yes and you will be prompted to enter the SYS password. So talk kindly with your DBA for them to enter the password for you. Then click on the Start button. This will kick off the ODMr Repository Upgrade scripts.

NB: Make sure you have a backup of your workflows before you do this. A little thing happened to me during the SQL Dev / ODMr 4.0 upgrade back in September 2013 where all my workflows disappeared. You can imagine how happy I was about that. Since then the ODMr team have added some functionality to ensure something like this doesn’t happen again. But you never know.

To backup your ODMr workflows use the Export Workflow option.

When the repository upgrade has finished you will get a ‘Task Complete Successfully’ message in the upgrade window. Click on the close button and away you go with this updated version.

Check out this blog post for details of what is new in ODMr 4.1.

Oracle Data Miner (SQL Dev) 4.1 EA1

A few days ago the first Early Adopter release of SQL Developer 4.1 (EA1) was made available. You can go ahead and download it from here and make sure to check out the blog post by Jeff Smith on some install and setup that is required around the latest version of Java.

I’ve been using SQL Developer since its very first release, so getting my hands on a new release is very exciting. There are lots and lots of new features in the tool. Again check out the blog posts by Jeff Smith and Kris Rice on some of these new features. I really like the new DBA screens 🙂 But this screen really needs some scroll bars and not everything fits on my screen. So Jeff and Kris if you are reading this, can you add some scroll bars.

In addition they have been working on “new” SQL*Plus that is called SDSQL. This is a new command line tool that is supposed to be bigger and better than SQL*Plus but still gives us a command line tool to run our scripts and demos. To download and install the tool go to here.

As you know I’m a bit of an Oracle Data Miner/Mining fan. There are no new in-database features, but there are a lot of new features in the GUI tool (aka ODMr) along with some improvements and bug fixes. Here is a list of the ODMr 4.1 EA1 new and updated features (taken from the ODMr Help in SQL Dev)

JSON Data Support for Oracle Database 12.1.0.2 and above

In response to the growing popularity of JSON data and its use in Big Data configurations, Data Miner now provides an easy to use JSON Query node. The JSON Query node allows you to select and aggregate JSON data without entering any SQL commands. The JSON Query node opens up using all of the existing Data Miner features with JSON data. The enhancements include:

Data Source Node

Automatically identifies columns containing JSON data by identifying those with the IS_JSON constraint.

Generates JSON schema for any selected column that contain JSON data.

Imports a JSON schema for a given column.

JSON schema viewer.

Create Table Node

Ability to select a column to be typed as JSON.

Generates JSON schema in the same manner as the Data Source node.

JSON Data Type

Columns can be specifically typed as JSON data.

JSON Query Node

Ability to utilize any of the selection and aggregation features without having to enter SQL commands.

Ability to select data from a graphical layout of the JSON schema, making data selection as easy as it is with scalar relational data columns.

Ability to partially select JSON data as standard relational scalar data while leaving other parts of the same JSON document as JSON data.

Ability to aggregate JSON data in combination with relational data. Includes the Sub-Group By option, used to generate nested data that can be passed into mining model build nodes.
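For context, this is roughly what the underlying database features look like in SQL on Oracle Database 12.1.0.2 and above: a column marked as JSON with an IS JSON check constraint, and a query that projects parts of the document as relational columns. It is a sketch of the database side only, not what the JSON Query node generates. The table, column and attribute names are hypothetical.

```sql
-- Hypothetical table and JSON attributes, for illustration only.

-- The IS JSON check constraint is how a column is identified as holding JSON.
CREATE TABLE customer_json (
  id  NUMBER PRIMARY KEY,
  doc CLOB CONSTRAINT customer_json_is_json CHECK (doc IS JSON)
);

INSERT INTO customer_json (id, doc)
VALUES (1, '{"cust_id": 101, "age": 42, "country": "Ireland"}');

COMMIT;

-- Project selected JSON attributes as ordinary relational columns.
SELECT jt.cust_id, jt.age, jt.country
FROM   customer_json c,
       JSON_TABLE(c.doc, '$'
         COLUMNS (cust_id NUMBER       PATH '$.cust_id',
                  age     NUMBER       PATH '$.age',
                  country VARCHAR2(30) PATH '$.country')) jt;
```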

General Improvements

Improved database session management resulting in less database sessions being generated and a more responsive user interface.

Filter Columns Node

Combined primary Editor and associated advanced panel to improve usability.

Explore Data Node

Allows multiple row selection to provide group chart display.

Classification Build Node

Automatically filters out rows where the Target column contains NULLs or all Spaces. Also, issues a warning to user but continues with Model build.

Workflow

Enhanced workflows to ensure that Loading, Reloading, Stopping, Saving operations no longer block the UI.

Online Help

Revised the Online Help to adhere to topic-based framework.

Selected Bug Fixes (does not include 4.0 patch release fixes)

GLM Model Algorithm Settings: Added GLM feature identification sampling option (Oracle Database 12.1 and above).

Filter Rows Node: Custom Expression Editor not showing all possible available columns.

WebEx Display Issues: Fixed problems affecting the display of the Data Miner UI through WebEx conferencing.

Denny Wong of the ODM team in Oracle has made available a tutorial on importing JSON data for use with ODMr. Check it out here.

I’ve been told there will be a couple of tutorials on the new features coming out (from the ODMr team) over the next few weeks. So keep an eye out for these.

Check out my blog post on what you need to do to get started/using ODMr 4.1 EA1.

ODMr : Graph Node: Zooming in on Graphs

When Oracle Data Miner (ODMr) 4.0 (which is part of SQL Developer) came out back in late 2013 there were a number of new features added to the tool. One of these was a Graph node that allows us to create various graphs and charts that include Line, Scatter, Bar, Histogram and Box plot.

I’ve been using this node recently to produce graphs and particularly scatter plots. I’ve been using the scatter plots to graph the Actual values in a data set against the Predicted values that were generated by ODMr. In this scenario I had a separate data set for training my ODM data mining models and another testing data set for, well, testing how well the model performed against an unseen data set.

In general the graphs produced by the Graph node look good and give you the information that you need. But what I found was that as you increased the size of the data set, the scatter plot can look messy. This was in part due to the size of the square used to represent a data point. As the volume of data increased then your scatter plot could just look like a coloured in area of blue squares. This is illustrated in the following image.

What I discovered today is that you can zoom in on this graph to explore different regions and data points on it. To do this you need to select an area that is within the x-axis and y-axis area. When you do this you will see a box form on your graph that selects the area that you indicate by moving your mouse. After you have finished selecting the area, the Graph Node will zoom into this part of the graph and show the data points. For example if I select the area from about 1000 on the x-axis and 1000 on the y-axis, I will get the following.

Again if I select a similar area of 350 on the x-axis and 400 on the y-axis I get the following zoomed area.

You can keep zooming in on various areas.

At some point you will have finished zooming in and you will want to return to the original graph. To zoom back outward all you need to do in the graph is to click on it. When you do this you will go back to the previous step or image of the graph. You can keep doing this until you get back to the original graph. Alternatively you can zoom in and out on various parts of the graph.

Hopefully you will find this feature useful.
