ODM 11g R2

Accepted for BIWA Summit–9th to 10th January

I received an email today to say that I had a presentation accepted for the BIWA Summit. This conference will be in the Sofitel Hotel beside the Oracle HQ in Redwood City.

The title of the presentation is “The Oracle Data Scientist” and the abstract is

Over the past 18 months we have seen a significant increase in the demand for Data Scientists. But how does someone become a data scientist? If we examine the requirements and job descriptions of this role we can see that being able to understand and process data are fundamental skills. So an Oracle developer is ideally suited to being a Data Scientist. The presentation will show how an Oracle developer can evolve into a data scientist through a number of stages, including BI developer, OBIEE developer, statistical analysis, data miner and data scientist. The tasks and tools will be discussed and explored through each of these roles. The second half of the presentation will focus on the data mining functionality available in SQL and PL/SQL. This will consist of a demonstration of an Analytics Development environment and how you can migrate (and use) your models in a Production environment.

For some reason Simon Cowell of XFactor fame kept on popping into my head and it now looks like he will be making an appearance in the presentation too. You will have to wait until the conference to find out what Simon Cowell and Being an Oracle Data Scientist have in common.

Check out the BIWA Summit website for more details and to register for the event.

I’ll see you there.

Oracle Advanced Analytics Option in Oracle 12c

At Oracle Open World a few weeks ago there were a large number of presentations on Big Data and Analytics. Most of these were marketing-type presentations, with a couple of presentations on using R and how it can now be integrated into the Oracle Database 11.2.

In addition to these there was one presentation that focused on the Oracle Advanced Analytics (OAA) Option.

The Oracle Advanced Analytics Option covers the Oracle Data Mining features and the Oracle R Enterprise features in the Database.

The purpose of this blog post is to outline and summarise what was mentioned at these presentations, including what changes are/may be coming in the “Next Release” of the database, i.e. Oracle 12c.

Health Warning: As with all the presentations at OOW that talked about what may or may not be in the next release, there is no guarantee that the features will actually be in the release version of the database. Here is the slide that gives the Safe Harbor statement.

- 12c will come with R embedded into it. So there will be no need for any configurations.

- Oracle R client will come as part of the server install.

- Oracle R client will be able to use the Analytics functions that exist in the database.

- Will be able to run R code in the database.

- The database (12c) will be able to spawn multiple R engines.

- Will be able to emulate map-reduce style algorithms.

- There will be a new PREDICTION function, replacing the existing (11g) functionality. This will combine a number of steps of building a model and applying it to the data to be scored into one function. But we will still need the functionality of the existing PREDICTION function that is in 11g. So it will be interesting to see how this functionality will be kept in addition to the new functionality being proposed in 12c.

- The Oracle Data Miner tool will still exist and will have many new features. It was also referred to as the ‘OAA Workflow’. So does this indicate a potential name change? We will have to wait and see.

- Oracle Data Miner will come with a new additional graphing feature. This will be in addition to the Explore Node and will allow us to produce more typical attribute related graphs. From what I could see these would be similar to the type of box plot, scatter, bar chart, etc. graphs that you can get from R.

- There will be a number of new algorithms too, including a useful One Class Support Vector Machine. This can be used when we have a data set with just one class value. This algorithm will work out which records/cases are more important than others.

- There will be a new SQL node. This will allow us to write our own data transformation code.

- There will be a new node to allow the calling of R code.

- The tool also comes with a slightly modified layout and colour scheme.

Again, the points that I have given above are just my observations. They may or may not appear in 12c, or maybe I misunderstood what was being said.

It certainly looks like we will have an integrated analytics environment in 12c with full integration of R and the ODM in-database features.

Extracting the rules from an ODM Decision Tree model

One of the most interesting and important aspects of a Decision Tree model is that we as users can get to see what rules the machine learning algorithm has generated for our data.

I’ve given a number of examples in various blog posts over the past few years on how to generate a number of classification models. An example of the workflow is below.

In the Class Build node we get four models being generated. These include a Generalised Linear Model, Support Vector Machine, Naive Bayes and a Decision Tree model.

We can explore the Decision Tree model by right clicking on the Class Build Node, selecting View Models and then the Decision Tree model, which will be labelled with a ‘DT’ in the name.

As we explore the nodes and branches of the Decision Tree we can see the rule that was generated for a node in the lower pane of the application. So by clicking on each node we get a different rule appearing in this pane.

Sometimes there is a need to extract these rules so that they can be presented to a number of different types of users, to explain to them what is going on.

How can we extract the Decision Tree rules?

To do this, you will need to complete the following steps:

- From the Models section of the Component Palette select the Model Details node.

- Click on the Workflow pane and the Model Details node will be created.

- Connect the Class Build node to the Model Details node. To do this right click on the Class Build node and select Connect. Then move the mouse to the Model Details node and click. The two nodes should now be connected.

- Edit the Model Details node, uncheck the Auto Settings, select Model Type to be Decision Tree, Output to be Full Tree and all the columns.

- Run the Model Details node. Right click on the node and select Run. When complete you will have the little green box with a tick mark on the top right hand corner.

- To view the details produced, right click on the Model Details node and select View Data.

- The rules for each node will now be displayed. You will need to scroll to the right of this pane to get to the rules and you will need to expand the columns for the rules to see the full details.
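If you prefer SQL to the workflow, the rules can also be pulled straight from the database. The following is a minimal sketch rather than the method used in the steps above: it uses DBMS_DATA_MINING.GET_MODEL_DETAILS_XML, which returns the tree (including the rule predicate for each node) as an XML document. The model name CLAS_DT_1_1 is a placeholder that I have made up for illustration, so look up the real name of your Decision Tree model first.

```sql
-- List the Decision Tree models in the current schema to find the real name.
SELECT model_name, algorithm, creation_date
FROM   user_mining_models
WHERE  algorithm = 'DECISION_TREE';

-- Return the full tree, including the rule for each node, as an XML document.
-- 'CLAS_DT_1_1' is a hypothetical model name; substitute your own.
SELECT DBMS_DATA_MINING.GET_MODEL_DETAILS_XML('CLAS_DT_1_1') AS dt_details
FROM   dual;
```

The XML output is less friendly than the Model Details node, but it is handy when the rules need to be extracted by a script instead of by hand.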

My Presentations on Oracle Advanced Analytics Option

I’ve recently compiled my list of presentations on the Oracle Analytics Option. All these presentations are for a 45 minute period.

I have two versions of the presentation ‘How to do Data Mining in SQL & PL/SQL’: one is for 45 minutes and the second version is for 2 hours.

I have given most of these presentations at conferences or SIGs.

Let me know if you are interested in having one of these presentations at your SIG or conference.

- Oracle Analytics Option – 12c New Features – available 2013

- Real-time prediction in SQL & Oracle Analytics Option – Using the 12c PREDICTION function – available 2013

- How to do Data Mining in SQL & PL/SQL

- From BIG Data to Small Data and Everything in Between

- Oracle R Enterprise : How to get started

- Oracle Analytics Option : R vs Oracle Data Mining

- Building Predictive Analytics into your Forms Applications

- Getting Real Business Value from OBIEE and Oracle Data Mining (This is a cut down and merged version of the following two presentations)

- Getting Real Business Value from OBIEE and Oracle Data Mining – Part 1 : The Oracle Data Miner part

- Getting Real Business Value from OBIEE and Oracle Data Mining – Part 2 : The OBIEE part

- How to Deploy and Use your Oracle Data Miner Models in Production

- Oracle Analytics Option 101

- From SQL Programmer to Data Scientist: evolving roles of an Oracle programmer

- Using an Oracle Data Mining Model in SQL & PL/SQL

- Getting Started with Oracle Data Mining

- You don’t need a PhD to do Data Mining

Check out the ‘My Presentations’ page for updates on new presentations.

Using ODM Regression for the Leaning Tower of Pisa tilt problem

This blog post will look at how you can use the Regression feature in Oracle Data Miner (ODM) to predict the lean/tilt of the Leaning Tower of Pisa in the future.

This is a well-known regression exercise, and it typically comes with a set of known values and the year for each of these values. There are lots of websites that contain the details of the problem. A summary of it is:

The following table gives measurements for the years 1975-1987 of the “lean” of the Leaning Tower of Pisa. The variable “lean” represents the difference between where a point on the tower would be if the tower were straight and where it actually is. The data is coded as tenths of a millimetre in excess of 2.9 metres, so that the 1975 lean, which was 2.9642 metres, appears in the table as 642.

Given the lean for the years 1975 to 1987, can you calculate the lean for a future date like 2000, 2009 or 2012?

Step 1 – Create the table

Connect to a schema that you have set up for use with Oracle Data Miner. Create a table (PISA) with 2 attributes, YEAR_MEASURED and TILT. Both of these attributes need to have the datatype of NUMBER. If they are VARCHAR, ODM will either ignore them or you might get an error.

CREATE TABLE PISA

(

YEAR_MEASURED NUMBER(4,0),

TILT NUMBER(9,4)

);

Step 2 – Insert the data

There are 2 sets of data that need to be inserted into this table. The first is the data from 1975 to 1987 with the known values of the lean/tilt of the tower. The second set of data is the future years where we do not know the lean/tilt and we want ODM to calculate the value based on the Regression model we want to create.

Insert into DMUSER.PISA (YEAR_MEASURED,TILT) values (1975,2.9642);

Insert into DMUSER.PISA (YEAR_MEASURED,TILT) values (1976,2.9644);

Insert into DMUSER.PISA (YEAR_MEASURED,TILT) values (1977,2.9656);

Insert into DMUSER.PISA (YEAR_MEASURED,TILT) values (1978,2.9667);

Insert into DMUSER.PISA (YEAR_MEASURED,TILT) values (1979,2.9673);

Insert into DMUSER.PISA (YEAR_MEASURED,TILT) values (1980,2.9688);

Insert into DMUSER.PISA (YEAR_MEASURED,TILT) values (1981,2.9696);

Insert into DMUSER.PISA (YEAR_MEASURED,TILT) values (1982,2.9698);

Insert into DMUSER.PISA (YEAR_MEASURED,TILT) values (1983,2.9713);

Insert into DMUSER.PISA (YEAR_MEASURED,TILT) values (1984,2.9717);

Insert into DMUSER.PISA (YEAR_MEASURED,TILT) values (1985,2.9725);

Insert into DMUSER.PISA (YEAR_MEASURED,TILT) values (1986,2.9742);

Insert into DMUSER.PISA (YEAR_MEASURED,TILT) values (1987,2.9757);

Insert into DMUSER.PISA (YEAR_MEASURED,TILT) values (1988,null);

Insert into DMUSER.PISA (YEAR_MEASURED,TILT) values (1989,null);

Insert into DMUSER.PISA (YEAR_MEASURED,TILT) values (1990,null);

Insert into DMUSER.PISA (YEAR_MEASURED,TILT) values (1995,null);

Insert into DMUSER.PISA (YEAR_MEASURED,TILT) values (2000,null);

Insert into DMUSER.PISA (YEAR_MEASURED,TILT) values (2005,null);

Insert into DMUSER.PISA (YEAR_MEASURED,TILT) values (2010,null);

Insert into DMUSER.PISA (YEAR_MEASURED,TILT) values (2009,null);

Step 3 – Start ODM and Prepare the data

Open SQL Developer and open the ODM Connections tab. Connect to the schema that you have created the PISA table in. Create a new Project or use an existing one and create a new Workflow for your PISA ODM work.

Create a Data Source node in the workspace and assign the PISA table to it. You can select all the attributes.

The table contains the data that we need to build our regression model (our training data set) and the data that we will use for predicting the future lean/tilt (our apply data set).

We need to apply a filter to the PISA data source to only look at the training data set. Select the Filter Rows node and drag it to the workspace. Connect the PISA data source to the Filter Rows node. Double click on the Filter Rows node and select the Expression Builder icon. Create the where clause to select only the rows where we know the lean/tilt, for example TILT IS NOT NULL.

Step 4 – Create the Regression model

Select the Regression Node from the Models component palette and drop it onto your workspace. Connect the Filter Rows node to the Regression Build Node.

Double click on the Regression Build node and set the Target to the TILT variable. You can leave the Case ID unset. You can also select if you want to build a GLM or SVM regression model or both of them. Set the AUTO check box to unchecked. By doing this Oracle will not try to do any data processing or attribute elimination.

You are now ready to create your regression models.

To do this right click the Regression Build node and select Run. When everything is finished you will get a little green tick on the top right hand corner of each node.

Step 5 – Predict the Lean/Tilt for future years

The PISA table that we used above also contains our apply data set.

We need to create a new Filter Rows node on our workspace. This will be used to only look at the rows in PISA where TILT is null. Connect the PISA data source node to the new filter node and edit the expression builder.

Next we need to create the Apply Node. This allows us to run the Regression model(s) against our Apply data set. Connect the second Filter Rows node to the Apply Node and the Regression Build node to the Apply Node.

Double click on the Apply Node. Under the Apply Columns we can see that we will have 4 attributes created in the output. 3 of these attributes will be for the GLM model and 1 will be for the SVM model.

Click on the Data Columns tab and edit the data columns so that we get the YEAR_MEASURED attribute to appear in the final output.

Now run the Apply node by right clicking on it and selecting Run.

Step 6 – Viewing the results

When we get the little green tick on the Apply node we know that everything has run and completed successfully.

To view the predictions right click on the Apply Node and select View Data from the menu.

We can see that the GLM model gives the results we would expect but the SVM does not.
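One way to sanity check the predictions is to fit an ordinary least squares line in plain SQL and compare the numbers with what the Apply node produced. This is a rough sketch and not part of the ODM workflow: it uses the standard REGR_SLOPE and REGR_INTERCEPT aggregate functions instead of an ODM model, and it assumes the PISA table from Step 1 is in the schema you are connected to.

```sql
-- Fit a straight line to the known measurements, then apply it to the
-- rows where TILT is null. REGR_SLOPE(y, x) takes the dependent value first.
WITH fit AS (
  SELECT REGR_SLOPE(tilt, year_measured)     AS slope,
         REGR_INTERCEPT(tilt, year_measured) AS intercept
  FROM   pisa
  WHERE  tilt IS NOT NULL          -- training rows
)
SELECT p.year_measured,
       ROUND(f.intercept + f.slope * p.year_measured, 4) AS predicted_tilt
FROM   pisa p
       CROSS JOIN fit f
WHERE  p.tilt IS NULL              -- apply rows
ORDER  BY p.year_measured;
```

If the GLM model was built with the AUTO box unchecked, I would expect its predictions to be close to these values, since both are fitting a line to the same points. A large difference would be a hint to look again at the model settings.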

2 Day Oracle Data Miner course material

Last week I managed to get my hands on the training material for the 2 Day Oracle Data Miner course. This course is run by Oracle University.

Many thanks to Michael O’Callaghan, who is a BI Sales person here in Ireland, and to Oracle University for arranging this.

The 2 days are pretty packed with a mixture of lecture type material, lots of hands on exercises and some time for open discussions. In particular, day 2 will be a very busy day.

Check out the course outline and published schedule – click here

You can have this course on site at your organisation. If this is something that interests you then contact your Oracle University account manager. There is also the traditional face-to-face delivery and the newer online delivery, where people from around the world come together for the online class.

2 Day Oracle Data Miner training course by Oracle University

In the past few days Oracle University has advertised a new 2 Day instructor led training course on Oracle Data Miner.

There are no advertised dates or locations for this course yet. I suppose it will depend on the level of interest in the product.

Here is the overview from the Oracle University webpage:

“In this course, students review the basic concepts of data mining and learn how leverage the predictive analytical power of the Oracle Database Data Mining option by using Oracle Data Miner 11g Release 2. The Oracle Data Miner GUI is an extension to Oracle SQL Developer 3.0 that enables data analysts to work directly with data inside the database.

The Data Miner GUI provides intuitive tools that help you to explore the data graphically, build and evaluate multiple data mining models, apply Oracle Data Mining models to new data, and deploy Oracle Data Mining’s predictions and insights throughout the enterprise. Oracle Data Miner’s SQL APIs automatically mine Oracle data and deploy results in real-time. Because the data, models, and results remain in the Oracle Database, data movement is eliminated, security is maximized and information latency is minimized”

Click on the following link to access the details of the training course

http://education.oracle.com/pls/web_prod-plq-dad/db_pages.getCourseDesc?dc=D73528GC10

To view a PDF of the course details – click here

ODM–Attribute Importance using PL/SQL API

In a previous blog post I explained what attribute importance is and how it can be used in the Oracle Data Miner tool (click here to see blog post).

In this post I want to show you how to perform the same task using the ODM PL/SQL API.

The ODM tool makes extensive use of the Automatic Data Preparation (ADP) function. ADP performs some data transformations such as binning, normalization and outlier treatment of the data based on the requirements of each of the data mining algorithms. In addition to these transformations we can specify our own transformations. We do this by creating a settings table which will contain the settings and transformations we want the data mining algorithm to perform on the data.

ADP is automatically turned on when using the ODM tool in SQL Developer. This is not the case when using the ODM PL/SQL API. So before we can run the Attribute Importance function we need to turn on ADP.

Step 1 – Create the setting table

CREATE TABLE Att_Import_Mode_Settings (

setting_name VARCHAR2(30),

setting_value VARCHAR2(30));

Step 2 – Turn on Automatic Data Preparation

BEGIN

INSERT INTO Att_Import_Mode_Settings (setting_name, setting_value)

VALUES (dbms_data_mining.prep_auto,dbms_data_mining.prep_auto_on);

COMMIT;

END;

Step 3 – Run Attribute Importance

BEGIN

DBMS_DATA_MINING.CREATE_MODEL(

model_name => 'Attribute_Importance_Test',

mining_function => DBMS_DATA_MINING.ATTRIBUTE_IMPORTANCE,

data_table_name => 'mining_data_build_v',

case_id_column_name => 'cust_id',

target_column_name => 'affinity_card',

settings_table_name => 'Att_Import_Mode_Settings');

END;
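Before looking at the results it can be worth confirming that the model picked up the ADP setting. The query below is a small sketch using the USER_MINING_MODEL_SETTINGS dictionary view. I am assuming the model was created in the current schema and that its name was stored in upper case, which is the default behaviour for unquoted names.

```sql
-- Show the settings recorded for the new model. SETTING_TYPE indicates
-- whether a value came from the settings table (INPUT) or is a default.
SELECT setting_name, setting_value, setting_type
FROM   user_mining_model_settings
WHERE  model_name = 'ATTRIBUTE_IMPORTANCE_TEST'
ORDER  BY setting_name;
```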

Step 4 – Select Attribute Importance results

SELECT *

FROM TABLE(DBMS_DATA_MINING.GET_MODEL_DETAILS_AI('Attribute_Importance_Test'))

ORDER BY RANK;

| ATTRIBUTE_NAME | IMPORTANCE_VALUE | RANK |
|---|---|---|
| HOUSEHOLD_SIZE | .158945397 | 1 |
| CUST_MARITAL_STATUS | .158165841 | 2 |
| YRS_RESIDENCE | .094052102 | 3 |
| EDUCATION | .086260794 | 4 |
| AGE | .084903512 | 5 |
| OCCUPATION | .075209339 | 6 |
| Y_BOX_GAMES | .063039952 | 7 |
| HOME_THEATER_PACKAGE | .056458722 | 8 |
| CUST_GENDER | .035264741 | 9 |
| BOOKKEEPING_APPLICATION | .019204751 | 10 |
| CUST_INCOME_LEVEL | 0 | 11 |
| BULK_PACK_DISKETTES | 0 | 11 |
| OS_DOC_SET_KANJI | 0 | 11 |
| PRINTER_SUPPLIES | 0 | 11 |
| COUNTRY_NAME | 0 | 11 |
| FLAT_PANEL_MONITOR | 0 | 11 |
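The attributes with an importance of zero are the ones you would typically drop before building a model. A small variation on the Step 4 query, shown here as a sketch, returns only the attributes that the algorithm found useful. It assumes the Attribute_Importance_Test model from Step 3 exists in your schema.

```sql
-- Keep only the attributes that contribute to predicting the target.
SELECT attribute_name, importance_value, rank
FROM   TABLE(DBMS_DATA_MINING.GET_MODEL_DETAILS_AI('Attribute_Importance_Test'))
WHERE  importance_value > 0
ORDER  BY rank;
```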

ODM 11gR2–Attribute Importance

I had a previous blog post on Data Exploration using Oracle Data Miner 11gR2. This blog post builds on the steps illustrated in that blog post.

After we have explored the data we can identify some attributes/features that have just one value or mainly one value, etc. In most of these cases we know that these attributes will not contribute to the model build process.

In our example data set we have a small number of attributes. So it is easy to work through the data and get a good understanding of some of the underlying information that exists in the data. Some of these were pointed out in my previous blog post.

The reality is that our data sets can have a large number of attributes/features. So it will be very difficult or nearly impossible to work through all of these to get a good understanding of what is a good attribute to use, and keep in our data set, or what attribute does not contribute and should be removed from the data set.

Plus as our data evolves over time, the importance of the attributes will evolve with some becoming less important and some becoming more important.

The Attribute Importance node in Oracle Data Miner allows us to automate this work and can save us many hours, or even days, of work on this task.

The Attribute Importance node uses the Minimum Description Length algorithm.

The following steps build on our work in my previous post and show how we can perform Attribute Importance on our data.

1. In the Component Palette, select Filter Columns from the Transforms list

2. Click on the workflow beside the data node.

3. Link the Data Node to the Filter Columns node. Right-click on the data node, select Connect, move the mouse to the Filter Columns node and click. The link will be created.

4. Now we can configure the Attribute Importance settings. Click on the Filter Columns node. In the Property Inspector, click on the Filters tab.

– Click on the Attribute Importance Checkbox

– Set the Target Attribute from the drop down list. In our data set this is Affinity Card

5. Right click the Filter Columns node and select Run from the menu

After everything has run, we get the little green box with the tick mark on the Filter Columns node. To view the results we right click on the Filter Columns node and select View Data from the menu. We get the list of attributes listed in order of importance, along with their Importance measure.

We see that there are a number of attributes that have a zero value. The algorithm has worked out that these attributes would not be used in the model build step. If we look back to the previous blog post, some of the attributes we identified in it have also been listed here with a zero value.

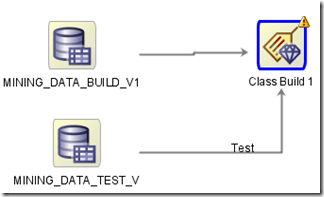

ODM 11gR2–Using different data sources for Build and Testing a Model

There are 2 ways to connect a data source to the Model build node in Oracle Data Miner.

The typical method is to use a single data source that contains the data for the build and testing stages of the Model Build node. Using this method you can specify what percentage of the data in the data source to use for the Build step, and the remaining records will be used for testing the model. The default is a 50:50 split but you can change this to whatever percentage you think is appropriate (e.g. 60:40). The records will be split randomly into the Build and Test data sets.

The second way to specify the data sources is to use a separate data source for the Build and a separate data source for the Testing of the model.

To do this you add a new data source (containing the test data set) to the Model Build node. ODM will assign a label (Test) to the connector for the second data source.

If the label was assigned incorrectly you can swap the data sources. To do this right click on the Model Build node and select Swap Data Sources from the menu.
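If you do not already have separate build and test tables, one way to create them is with two views over the same source. The sketch below is an assumption on my part and not something ODM does for you: it uses ORA_HASH on the case identifier to give a repeatable split of roughly 60:40. The view names are made up for illustration, and the source view and CUST_ID column are the sample data used in my other posts.

```sql
-- ORA_HASH(cust_id, 99) maps each case to a bucket from 0 to 99, so the
-- same customer always lands in the same data set on every run.
CREATE OR REPLACE VIEW mining_build_60_v AS
SELECT *
FROM   mining_data_build_v
WHERE  ORA_HASH(cust_id, 99) < 60;     -- roughly 60% of the cases

CREATE OR REPLACE VIEW mining_test_40_v AS
SELECT *
FROM   mining_data_build_v
WHERE  ORA_HASH(cust_id, 99) >= 60;    -- the remaining cases
```

Each view can then be added to the workflow as its own Data Source node and connected to the Model Build node as described above.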

Updating your ODM (11g R2) model in production

In my previous blog posts on creating an ODM model, I gave the details of how you can do this using the ODM PL/SQL API.

But at some point you will have a fairly stable environment. What this means is that you will know what type of algorithm and its corresponding settings work best for your data.

At this point you should be able to re-create your ODM model in the production database. How often you do this depends on the number of new cases that you have, so you might need to update your ODM model daily, weekly, monthly, etc.

To update your model you will need to:

– Create a settings table for your model

– Create a new ODM model

– Rename your new ODM model to the production name

The following examples are based on the example data, model names, etc that I’ve used in my previous post.

Creating a Settings Table

The first step is to create a settings table for your algorithm. This will contain all the parameter settings needed to create the new model. You will have worked out these settings from your previous attempts at creating your models and you will know what parameters and their values work best.

-- Create the settings table

CREATE TABLE decision_tree_model_settings (

setting_name VARCHAR2(30),

setting_value VARCHAR2(30));

-- Populate the settings table

-- Specify DT. By default, Naive Bayes is used for classification.

-- Specify ADP. By default, ADP is not used.

BEGIN

INSERT INTO decision_tree_model_settings (setting_name, setting_value)

VALUES (dbms_data_mining.algo_name,

dbms_data_mining.algo_decision_tree);

INSERT INTO decision_tree_model_settings (setting_name, setting_value)

VALUES (dbms_data_mining.prep_auto,dbms_data_mining.prep_auto_on);

COMMIT;

END;

Create a new ODM Model

We will need to use the DBMS_DATA_MINING.CREATE_MODEL procedure. In our example we will want to create a Decision Tree based on our sample data, which contains the previously generated cases and the new cases since the last model rebuild.

BEGIN

DBMS_DATA_MINING.CREATE_MODEL(

model_name => 'Decision_Tree_Method2',

mining_function => dbms_data_mining.classification,

data_table_name => 'mining_data_build_v',

case_id_column_name => 'cust_id',

target_column_name => 'affinity_card',

settings_table_name => 'decision_tree_model_settings');

END;

Rename your ODM model to production name

The model we have created above does not have the name that is used in our production software. So we will need to rename it to our production name.

But we need to be careful about when we do this. If you drop a model or rename a model when it is being used then you can end up with indeterminate results.

What I suggest you do is pick a time of the day when your production software is not doing any data mining. You should drop the existing model (or rename it) and then rename the new model to the production model name.

EXEC DBMS_DATA_MINING.DROP_MODEL('CLAS_DECISION_TREE');

and then

EXEC DBMS_DATA_MINING.RENAME_MODEL('Decision_Tree_Method2', 'CLAS_DECISION_TREE');
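To keep the window where no production model exists as short as possible, the two calls can be run together in one PL/SQL block and then followed by a quick check. This is a sketch under the assumptions used in this post: the model names are the ones from the examples above, and the scoring query uses the build view purely as a smoke test.

```sql
-- Swap the models in a single block so the gap between drop and rename is small.
BEGIN
  DBMS_DATA_MINING.DROP_MODEL('CLAS_DECISION_TREE');
  DBMS_DATA_MINING.RENAME_MODEL('Decision_Tree_Method2', 'CLAS_DECISION_TREE');
END;
/

-- Confirm the production name now points at the freshly built model.
SELECT model_name, mining_function, algorithm, creation_date
FROM   user_mining_models
WHERE  model_name = 'CLAS_DECISION_TREE';

-- Smoke test: score a few rows with the renamed model.
SELECT cust_id,
       PREDICTION(CLAS_DECISION_TREE USING *)             AS predicted_value,
       PREDICTION_PROBABILITY(CLAS_DECISION_TREE USING *) AS probability
FROM   mining_data_build_v
WHERE  ROWNUM <= 10;
```

Running the block does not remove the need to pick a quiet time. Any scoring query that runs between the drop and the rename will still fail because the model does not exist at that moment.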

Oracle Analytics Update & Plan for 2012

On Friday 16th December, Charlie Berger (Sr. Director, Product Management, Data Mining & Advanced Analytics) posted the following on the Oracle Data Mining forum on OTN.

“… soon you’ll be able to use the new Oracle R Enterprise (ORE) functionality. ORE is currently in beta and is targeted to go General Availability in the near future. ORE brings additional functionality to the ODM Option, which will then be renamed to the Oracle Advanced Analytics Option to reflect the significant adv. analytical functionality enhancements. ORE will allow R users to write R scripts and run them inside the database and eliminate and/or minimize data movement in/out of the DB. ORE will provide R to SQL transparency for SQL push-down to in-DB SQL and and expanding library of Oracle in-DB statistical functions. Packages that cannot be pushed down will be run in embedded R mode while the DB manages all data flows to the multiple R engines running inside the DB.

In January, we’ll open up a new OTN discussion forum specifically for Oracle R Enterprise focused technical discussions. Stay tuned.”

I’m looking forward to getting my hands on the new Oracle R Enterprise, in 2012. In particular I’m keen to see what additional functionality will be added to the Oracle Data Mining option in the DB.

So watch out for the rebranding to Oracle Advanced Analytics.

Charlie – Any chance of an advanced copy of ORE and related DB bits and bobs?
