
Oracle Machine Learning Users on ADWC

One of the new features of the Autonomous Data Warehouse Cloud (ADWC) service is Oracle Machine Learning. This is a Zeppelin-based notebook for doing machine learning on ADWC. Check out my previous blog post about this.

To use this new product and the in-database machine learning in ADWC, your database user needs certain privileges. The first step is to create a typical user for accessing ADWC and grant it the necessary OML privileges.

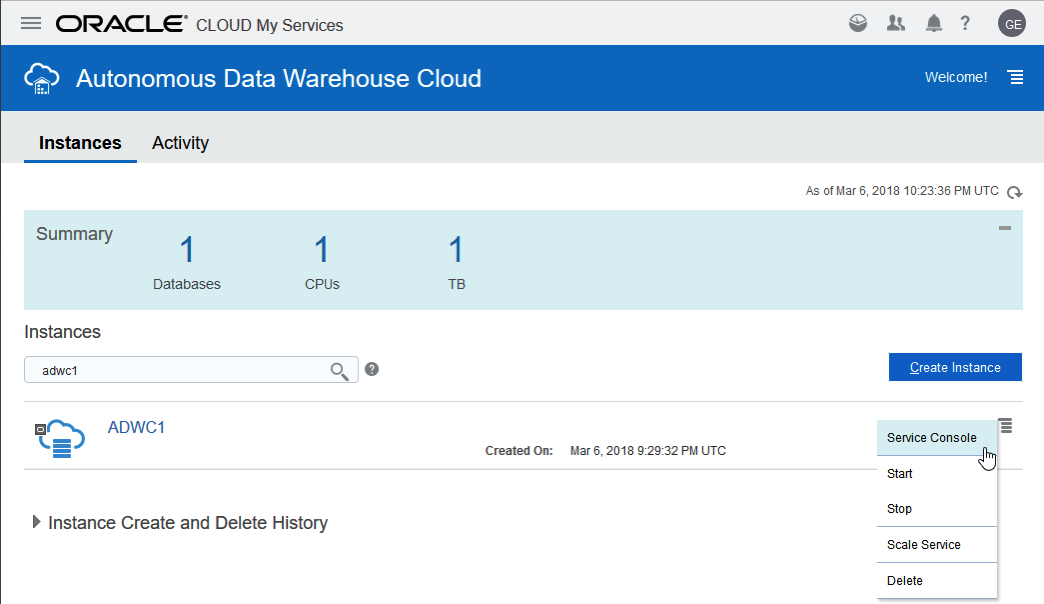

To do this, open the ADWC console and then open the Service Console.

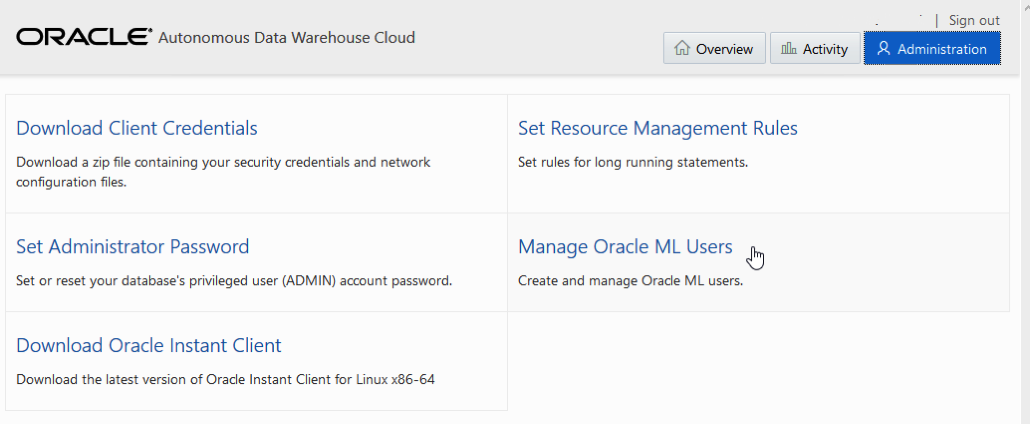

This opens a new admin page that contains a link for ‘Manage Oracle ML User’. Click on this link.

You can then enter the Username, Password and other details for the user, and then click Create.

This creates a new user specifically for Oracle Machine Learning. The new user is granted the DWROLE role, which contains the basic schema privileges and the privileges required to run the in-database machine learning algorithms. Those who are familiar with the Oracle Data Mining/Oracle Advanced Analytics option in the Enterprise Edition of the Oracle database will see that these privileges are very similar.

You can examine the privileges granted to DWROLE by querying the database as an administrator. When you do, you will see the following:

- CREATE ANALYTIC VIEW

- CREATE ATTRIBUTE DIMENSION

- ALTER SESSION

- CREATE HIERARCHY

- CREATE JOB

- CREATE MINING MODEL

- CREATE PROCEDURE

- CREATE SEQUENCE

- CREATE SESSION

- CREATE SYNONYM

- CREATE TABLE

- CREATE TRIGGER

- CREATE TYPE

- CREATE VIEW

- READ, WRITE on directory DATA_PUMP_DIR

- EXECUTE privilege on the PL/SQL package DBMS_CLOUD
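If you prefer to check these privileges from Python (see the cx_Oracle post further down this page), a minimal sketch like the following could list them. It assumes you are connected as an administrator who can read the DBA_SYS_PRIVS and DBA_TAB_PRIVS data dictionary views; the function name list_role_privileges is hypothetical and not part of any Oracle library.

```python
# Minimal sketch (hypothetical helper): list the system and object privileges
# granted to a role such as DWROLE. Assumes a DB-API cursor (e.g. from
# cx_Oracle) opened by an administrator who can read DBA_SYS_PRIVS and
# DBA_TAB_PRIVS.

SYS_PRIVS_SQL = """
    select privilege
    from   dba_sys_privs
    where  grantee = :role
    order  by privilege"""

TAB_PRIVS_SQL = """
    select privilege, owner, table_name
    from   dba_tab_privs
    where  grantee = :role
    order  by table_name, privilege"""


def list_role_privileges(cursor, role="DWROLE"):
    """Return (system_privileges, object_privileges) for the given role.

    system_privileges: privilege names, e.g. 'CREATE MINING MODEL'.
    object_privileges: strings of the form 'PRIVILEGE ON OWNER.OBJECT_NAME'.
    """
    # unquoted role names are stored in upper case in the data dictionary
    binds = {"role": role.upper()}

    cursor.execute(SYS_PRIVS_SQL, binds)
    system_privileges = [priv for (priv,) in cursor.fetchall()]

    cursor.execute(TAB_PRIVS_SQL, binds)
    object_privileges = [
        f"{priv} ON {owner}.{name}" for (priv, owner, name) in cursor.fetchall()
    ]
    return system_privileges, object_privileges
```

With a connection `con`, calling `list_role_privileges(con.cursor())` would return the two lists. Using a bind variable for the role name, rather than pasting it into the SQL text, keeps the query reusable for other roles.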

Predicting IBS in Dogs using Oracle 18c in the Cloud

Over the past 6-8 months I’ve been working on a project with the Veterinary School of Medicine, part of the University of Dublin. The project focused on using Machine Learning to find patterns in blood tests and x-rays from dogs suffering from Irritable Bowel Syndrome (IBS).

Over the past five years the Veterinary School of Medicine has built up a very large data set of blood samples, x-rays, the family history of each dog, the dogs’ eating habits, etc.

The project has now been completed, and only now can I share with you some of the results and lessons we learned during it.

The first part of the project was getting all this historical data loaded into an Oracle 12.2c database. This was a relatively simple task, but it did take a bit of coding to get the data model correct and to perform the necessary data transformations and integration, as illustrated in the following diagram.

Once the data was loaded into the database, we could start using the in-database Machine Learning algorithms to find patterns that indicated whether a dog was suffering from IBS, and ideally whether it was in the early stages of IBS, as treatment has a higher success rate when the condition is detected early.

We began using the GUI Oracle Data Miner tool, part of SQL Developer, to build the predictive model workflows.

But the results we were getting were very disappointing. We were getting an average predictive accuracy of 56% across all the models. This is not good, and not much better than flipping a coin.

We were a bit stuck at this point about what to do next, and then Oracle’s Cloud Engagement team heard about our troubles and suggested that we join the Oracle 18c beta. They got us set up and running with a beta Oracle 18c cloud instance in no time, and within a couple of days we were generating new machine learning models.

Oracle 18c has a number of new features that Oracle thought would be useful to us. Firstly, there was a new and improved Neural Networks algorithm that had better accuracy when working with images stored as BLOBs, plus there were a number of new SQL analytic functions. One particular function was TO_DOG_YEAR(). A year in a dog’s life is not 365 days; the length of a dog year changes with the age and the breed of the dog. Some recent research indicates that the geographic location and origin of the dog also play a part.

The syntax of this function is:

```sql
TO_DOG_YEAR (
    DOB       DATE,
    FEMALE    BOOLEAN,
    NLS_BREED STRING )
```

If you do a bit of googling you will find other blog posts that discuss this new function. Some of these can be found here and here.

Oracle has been working with veterinary schools around the world on this problem, hence the introduction of this new SQL analytic function in Oracle 18c. The function accepts the DOB of the dog (DATE), whether the dog is female, and the breed of the dog. It then calculates the appropriate age of the dog down to the nearest day. We built this into our machine learning workflow and were very surprised by the outcomes. The predictive accuracy of the models went from 56% to 93%. That is an amazing jump. Perhaps too amazing. But after a few days of extra validation we concluded that the difference was down to the new TO_DOG_YEAR() function and its ability to calculate the age of the dog so accurately.

In the last few weeks, after the latest Oracle 18c patch was automatically applied, we have noticed that this function now has an additional parameter, OWN_SMOKE, which seems to indicate whether the dog is owned by someone who smokes. This will indeed affect the age of the animal. We haven’t had a chance to try this new parameter yet, but hope to soon.

The following diagram shows the updated workflow along with the transformation node that uses the TO_DOG_YEAR() function.

If you do a bit of googling you will find lots of research by various veterinary schools around the world, who spend so much time researching the various aspects that apply to this calculation. It was this research and Oracle’s involvement in previous research that resulted in the TO_DOG_YEAR() function being included in Oracle 18c.

A more detailed research paper is going to be published in the International Journal of Veterinary Science and Medicine in June 2018 (Volume 6, Issue 1). This paper will explain in more detail the effects that age, breed and sex have on the accuracy of the machine learning models.

We have also been asked to submit our project to Oracle Open World, and it is currently being considered for early selection. This will allow OOW to include this project in their promotional material.

Oracle Machine Learning notebooks

With the recent release of Oracle’s Autonomous Data Warehouse Cloud (ADWC), Oracle has given data scientists a new tool for data discovery and machine learning on the ADWC. Oracle Machine Learning is based on Apache Zeppelin and gives us a new machine learning tool for accessing the in-database machine learning algorithms and in-database statistical functions.

Oracle Machine Learning (OML) SQL notebooks provide easy access to Oracle’s parallelized, scalable in-database implementations of a library of Oracle Advanced Analytics’ machine learning algorithms (classification, regression, anomaly detection, clustering, associations, attribute importance, feature extraction, time series, etc.), SQL, PL/SQL and Oracle’s statistical and analytical SQL functions. Oracle Machine Learning SQL notebooks and Oracle Advanced Analytics’ library of machine learning SQL functions, combined with PL/SQL, allow companies to automate their discovery of new insights, generate predictions and add “AI” to data viz dashboards and enterprise applications.

The key features of Oracle Machine Learning include:

- Collaborative SQL notebook UI for data scientists

- Packaged with Oracle Autonomous Data Warehouse Cloud

- Easy access to shared notebooks, templates, permissions, scheduler, etc.

- Access to 30+ parallel, scalable in-database implementations of machine learning algorithms

- SQL and PL/SQL scripting language supported

- Enables and Supports Deployments of Enterprise Machine Learning Methodologies in ADWC

Here is a list of key resources for Oracle Machine Learning:

- Oracle Machine Learning Notebooks

- Video overview of Oracle Machine Learning

- Download sample Oracle Machine Learning notebooks

- Quick Start Tutorial for getting started with Oracle Machine Learning

- Documentation: Using Oracle Machine Learning

Oracle and Python setup with cx_Oracle

Is Python the new R?

Maybe, maybe not, but what I’m finding in recent months is that more companies are asking me to use Python instead of R for some of my work.

In this blog post I will walk through the steps of setting up the Oracle driver for Python, called cx_Oracle. The documentation for this driver is good and detailed, with plenty of examples available on GitHub. Hopefully there isn’t anything new in this post, but it describes my experiences and what I did.

1. Install Oracle Client

The Python driver requires Oracle Client software to be installed. Go here, download and install. It’s a straightforward install. Make sure the directories are added to the search path.

2. Download and install cx_Oracle

You can use pip3 to do this.

```
pip3 install cx_Oracle
Collecting cx_Oracle
  Downloading cx_Oracle-6.1.tar.gz (232kB)
    100% |████████████████████████████████| 235kB 679kB/s
Building wheels for collected packages: cx-Oracle
  Running setup.py bdist_wheel for cx-Oracle ... done
  Stored in directory: /Users/brendan.tierney/Library/Caches/pip/wheels/0d/c4/b5/5a4d976432f3b045c3f019cbf6b5ba202b1cc4a36406c6c453
Successfully built cx-Oracle
Installing collected packages: cx-Oracle
Successfully installed cx-Oracle-6.1
```

3. Create a connection in Python

Now we can create a connection. Replace the empty strings below with the details for your schema and database server.

```python
# import the Oracle Python library
import cx_Oracle

# define the login details
p_username = ""
p_password = ""
p_host = ""
p_service = ""
p_port = "1521"

# create the connection; an Easy Connect dsn has the form host:port/service_name
con = cx_Oracle.connect(user=p_username, password=p_password, dsn=p_host + ":" + p_port + "/" + p_service)

# an alternative way to create the connection, using a single "user/password@dsn" string
# con = cx_Oracle.connect(p_username + "/" + p_password + "@" + p_host + ":" + p_port + "/" + p_service)

# print some details about the connection and the library
print("Database version:", con.version)
print("Oracle Python version:", cx_Oracle.version)
```

```
Database version: 12.1.0.1.0
Oracle Python version: 6.1
```
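The dsn argument is an Easy Connect string of the form host:port/service_name, and it is easy to get the pieces in the wrong order. A small helper like the one below keeps them straight; make_easy_connect is a hypothetical name, not part of cx_Oracle. The driver's own cx_Oracle.makedsn(host, port, service_name=...) is an alternative that builds a full connect descriptor instead.

```python
def make_easy_connect(host, service_name, port=1521):
    """Build an Oracle Easy Connect string: host:port/service_name.

    Hypothetical helper; the result can be passed as the dsn argument
    of cx_Oracle.connect().
    """
    if not host or not service_name:
        raise ValueError("host and service_name are required")
    # int() rejects a non-numeric port early, e.g. a typo such as "15z1"
    return f"{host}:{int(port)}/{service_name}"
```

For example: `con = cx_Oracle.connect(user=p_username, password=p_password, dsn=make_easy_connect(p_host, p_service, p_port))`.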

4. Query some data and return results to Python

In this example the query returns the list of tables in the schema.

```python
# define a cursor to use with the connection
cur = con.cursor()

# execute a query returning the results to the cursor
cur.execute('select table_name from user_tables')

# for each row returned to the cursor, print the record
for row in cur:
    print("Table: ", row)
```

```
Table: ('DECISION_TREE_MODEL_SETTINGS',)
Table: ('INSUR_CUST_LTV_SAMPLE',)
Table: ('ODMR_CARS_DATA',)
```

Now list the Views available in the schema.

```python
# define a second cursor
cur2 = con.cursor()

# return the list of Views in the schema to the cursor
cur2.execute('select view_name from user_views')

# display the list of Views
for result_name in cur2:
    print("View: ", result_name)
```

```
View: ('MINING_DATA_APPLY_V',)
View: ('MINING_DATA_BUILD_V',)
View: ('MINING_DATA_TEST_V',)
View: ('MINING_DATA_TEXT_APPLY_V',)
View: ('MINING_DATA_TEXT_BUILD_V',)
View: ('MINING_DATA_TEXT_TEST_V',)
```
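Each row comes back as a one-element tuple such as ('MINING_DATA_APPLY_V',). If you just want the names as plain strings, a small helper along these lines could unpack them. It also shows passing a named bind variable (the :name style that cx_Oracle supports) instead of building the SQL string by hand. fetch_column is a hypothetical name used only for illustration.

```python
def fetch_column(cursor, sql, binds=None):
    """Run a query and return the first column of each row as a plain list.

    Hypothetical helper. binds is an optional dict of named bind values,
    e.g. {"pattern": "MINING_DATA%"} for a :pattern placeholder.
    """
    if binds is None:
        cursor.execute(sql)
    else:
        cursor.execute(sql, binds)
    # each row is a tuple; keep only its first element
    return [row[0] for row in cursor]
```

For example, `fetch_column(cur2, "select view_name from user_views where view_name like :pattern", {"pattern": "MINING_DATA%"})` would return just the matching view names.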

5. Query some data and return it to a pandas DataFrame in Python

pandas is commonly used for storing, structuring and processing data in Python, using a data frame format. The following code runs a query and stores the results in a pandas DataFrame.

```python
# in this example the results of a query are loaded into a pandas DataFrame
# load the pandas library
import pandas as pd

# execute the query and return the results into the DataFrame called df
df = pd.read_sql_query("SELECT * from INSUR_CUST_LTV_SAMPLE", con)

# print the first records returned by the query and stored in the DataFrame
print(df.head())
```

```
CUSTOMER_ID LAST FIRST STATE REGION SEX PROFESSION \
0 CU13388 LEIF ARNOLD MI Midwest M PROF-2
1 CU13386 ALVA VERNON OK Midwest M PROF-18
2 CU6607 HECTOR SUMMERS MI Midwest M Veterinarian
3 CU7331 PATRICK GARRETT CA West M PROF-46
4 CU2624 CAITLYN LOVE NY NorthEast F Clerical

BUY_INSURANCE AGE HAS_CHILDREN ... MONTHLY_CHECKS_WRITTEN \
0 No 70 0 ... 0
1 No 24 0 ... 9
2 No 30 1 ... 2
3 No 43 0 ... 4
4 No 27 1 ... 4

MORTGAGE_AMOUNT N_TRANS_ATM N_MORTGAGES N_TRANS_TELLER \
0 0 3 0 0
1 3000 4 1 1
2 980 4 1 3
3 0 2 0 1
4 5000 4 1 2

CREDIT_CARD_LIMITS N_TRANS_KIOSK N_TRANS_WEB_BANK LTV LTV_BIN
0 2500 1 0 17621.00 MEDIUM
1 2500 1 450 22183.00 HIGH
2 500 1 250 18805.25 MEDIUM
3 800 1 0 22574.75 HIGH
4 3000 2 1500 17217.25 MEDIUM

[5 rows x 31 columns]
```
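pd.read_sql_query() works here, but recent versions of pandas issue a UserWarning when given a raw DB-API connection other than sqlite3, recommending a SQLAlchemy engine instead. A minimal alternative, assuming only the standard DB-API cursor interface (PEP 249), builds the DataFrame directly from the cursor. cursor_to_dataframe is a hypothetical helper name.

```python
import pandas as pd


def cursor_to_dataframe(cursor, sql, binds=None):
    """Execute a query and load the whole result set into a pandas DataFrame.

    Hypothetical helper. Column names come from cursor.description, which
    PEP 249 defines as a sequence of 7-item sequences whose first item is
    the column name.
    """
    if binds is None:
        cursor.execute(sql)
    else:
        cursor.execute(sql, binds)
    columns = [col[0] for col in cursor.description]
    # fetchall() pulls every row into memory, so this suits moderate result sets
    return pd.DataFrame(cursor.fetchall(), columns=columns)
```

For example, `df = cursor_to_dataframe(con.cursor(), "SELECT * from INSUR_CUST_LTV_SAMPLE")`. For very large tables you would want to fetch in batches (cursor.fetchmany()) rather than all at once.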

6. Wrapping it up and closing things

Finally, we need to wrap things up and close our cursors and our connection to the database.

```python
# close the cursors
cur2.close()
cur.close()

# close the connection to the database
con.close()
```
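If a query fails part way through, the close() calls above may never run. A minimal sketch of one way to guarantee the cleanup uses contextlib.closing from the Python standard library, which calls close() when the with-block exits. run_and_close is a hypothetical helper; it assumes you pass in a zero-argument function that opens the connection, for example `lambda: cx_Oracle.connect(user=p_username, password=p_password, dsn=p_host + ":" + p_port + "/" + p_service)`.

```python
from contextlib import closing


def run_and_close(open_connection, sql):
    """Open a connection, run one query and return all of its rows.

    open_connection is any zero-argument callable returning a DB-API
    connection. closing() calls close() on the cursor and then on the
    connection as each with-block exits, even if execute() raises.
    """
    with closing(open_connection()) as con:
        with closing(con.cursor()) as cur:
            cur.execute(sql)
            return cur.fetchall()
```

Nesting the two with-blocks means the cursor is always closed before the connection, mirroring the order used above.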


Watch out for more blog posts on using Python with Oracle, Oracle Data Mining and Oracle R Enterprise.

Make SQL Great Again baseball cap


Let me know if you would like to order one.

They cost €15 + P&P

My Oracle Open World 2017 Presentations

Oracle Open World 2017 will be happening very soon (1st-5th October). Still lots to do before I can get on that plane to San Francisco.

This year I’ll be giving two presentations (see the table below). The first is on the Sunday, during the User Group Sunday sessions, where I’ve been accepted on the EMEA track. I then get a few days off to enjoy and experience OOW until Thursday, when I give my second presentation, which is part of JavaOne (I think!).

My OOW kicks off on Friday 29th September with the ACE Director briefing at Oracle HQ, after flying to SFO on Thursday 28th. This year it is only for one day instead of two days. I really enjoy this event as we get to learn and see what Oracle will be announcing at OOW as well as some things that will be coming out during the following few months.


| Day | Time | Presentation | Location |
|---|---|---|---|
| Sunday | 13:45-14:30 | SQL: One Language to Rule All Your Data [OOW SUN1238] | Marriott Marquis (Golden Gate Level) – Golden Gate C1/C2 |
| Thursday | 13:45-14:30 | Is SQL the Best Language for Statistics and Machine Learning? [OOW and JavaOne CON7350] | Marriott Marquis (Golden Gate Level) – Golden Gate C3 |

SQL: One Language to Rule All Your Data [OOW SUN1238]

SQL is a very powerful language that has been in use for almost 40 years. SQL comes with many powerful techniques for analyzing your data, and you can analyze data outside the database using SQL as well. Using the new Oracle Big Data SQL, it is now possible to analyze data that is stored in a database, in Hadoop, and in NoSQL all at the same time. This session explores the capabilities in Oracle Database that allow you to work with all your data. Discover how SQL really is the unified language for processing all your data, allowing you to analyze, process, run machine learning on, and protect all your data. Hopefully this presentation will be a bit of fun! Those of us who have been working with the database for a long time can sometimes forget what it can really do, and those starting out in their careers may not realise what the database can do. The presentation delivers an important message while having a laugh or two (probably at me).

Is SQL the Best Language for Statistics and Machine Learning? [OOW and JavaOne CON7350]

Did you know that Oracle Database comes with more than 300 statistical functions? And that most of these statistical functions are available in all versions of Oracle Database? Most people do not seem to know this. When we hear about people performing statistical analytics, we hear them talking about Excel and R, but what if we could do statistical analysis in the database without having to extract any data onto client machines? This presentation explores the various statistical areas available in Oracle Database and gives several demonstrations. We can also greatly expand our statistical capabilities by using Oracle R Enterprise with the embedded capabilities in SQL. This presentation is just one of the 14 presentations that are scheduled for the Thursday! I believe this session is already fully booked, but you can still add yourself to the wait list.

My flights and hotel have been paid for by OTN as part of the Oracle ACE Director program. Yes, this costs a lot of money and there is no way I’d be able to pay these costs myself. Thank you.

My diary for OOW is really full. No, it is completely overbooked. It is just mental. Between attending conference sessions, meeting with various product teams (we only get to meet at OOW), attending various community meet-ups, attending some events for OUG leaders this year (representing UKOUG), spending some time on the EMEA User Group booth, various meetings with people to discuss how they can help or contribute to the UKOUG, then there is Oak Table World, trying to check out the exhibition hall, spending some time at the OTN/ODC hangout area, getting a few OTN t-shirts, doing some book promotions at the Oracle Press shop, etc., etc., etc. I’m exhausted just thinking about it. Most days start at 7am and finish around 10pm.

I’ll need a holiday when I get home! but it will be straight back to work 😦

If you are at OOW and want to chat then contact me via DM on Twitter or WhatsApp (these two are best) or via email (this will be the slowest way).

I’ll have another blog post listing the presentations from various people and partners from the Republic of Ireland who are speaking at OOW.

Part 5 – The right to be forgotten (EU GDPR)

This is the fifth part of a series of blog posts on ‘How the EU GDPR will affect the use of Machine Learning’.

Article 17, titled ‘Right to erasure (right to be forgotten)’, allows a person to obtain from the data controller the erasure of their personal data without undue delay.

This means it is not enough to simply flag their data for non-contact, as I’ve seen done in many companies, only for some of these people to be contacted anyway every so often.

It will also allow people to choose not to take part in data profiling.

Click back to ‘How the EU GDPR will affect the use of Machine Learning – Part 1’ for links to all the blog posts in this series.

Part 4b – (Article 22: Profiling) Why me? and how Oracle 12c saves the day

This is the fourth part of a series of blog posts on ‘How the EU GDPR will affect the use of Machine Learning’.

In this blog post (Part 4b) I will examine some of the more technical aspects and how the in-database machine learning functions save the day!

In most cases where machine learning has been used and/or deployed in your company to analyse, profile and predict customers, it is likely that some form of black-box machine learning has been used.

Typical black-box machine learning includes algorithms like Neural Networks, but within the context of the EU GDPR requirements this extends to other algorithms such as SVMs, GLMs, etc. Additionally, most companies don’t use just one algorithm to make a decision about a customer. Many algorithms and rules-based decision making can be combined, using some sort of voting system, to determine whether a customer is targeted in a certain way.

None of these approaches really supports the requirements of the EU GDPR.

In most cases we need to go back to basics, to simpler approaches to machine learning for customer profiling and prediction. For now, this means no more ensemble models, unless you can explain why a customer was selected. It means using simple algorithms like Decision Trees, at a push Naive Bayes, and some well-defined rules-based methods. All of these approaches allow us to see and understand why a customer was selected and, as Article 22 requires, to explain why.

But there is some hope. Some of the commercial machine learning vendors already have some prediction insights built into their software. Very few, if any, open source solutions have this capability.

For example, Oracle introduced a new function called PREDICTION_DETAILS in Oracle 12.1c and this was expanded in Oracle 12.2c to cover all their in-database machine learning algorithms.

The following is an example of using this function with an SVM model. When you examine the boxes in the following image, you can see that a slightly different set of attributes, and the values of these attributes, is listed for each customer. Each box corresponds to a different customer. This means we can give an explanation of why a customer was selected. Oracle 12c saves the day.

```sql
select cust_id,
       prediction(clas_svm_1_27 using *) pred_value,
       prediction_probability(clas_svm_1_27 using *) pred_prob,
       prediction_details(clas_svm_1_27 using *) pred_details
from   mining_data_apply_v;
```
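The pred_details column comes back as XML. As a minimal sketch of turning one of these values into something you could show a customer, the Python below parses it, assuming the layout shown in Oracle's documentation examples: a Details element with one Attribute child per contributing attribute, each carrying name, actualValue, weight and rank. Check this against your own output. The function names are hypothetical, and depending on your driver the XMLType value may first need converting to text in the query (for example with XMLSERIALIZE).

```python
import xml.etree.ElementTree as ET


def parse_prediction_details(details_xml):
    """Parse one PREDICTION_DETAILS value (as text) into a dict.

    Assumes the layout shown in Oracle's documentation examples:
    <Details algorithm="..." class="...">
      <Attribute name="..." actualValue="..." weight="..." rank="..."/>
    </Details>
    """
    root = ET.fromstring(details_xml)
    attributes = [
        {
            "name": attr.get("name"),
            "actual_value": attr.get("actualValue"),
            "weight": float(attr.get("weight")),
            "rank": int(attr.get("rank")),
        }
        for attr in root.findall("Attribute")
    ]
    attributes.sort(key=lambda a: a["rank"])  # assumed: rank 1 = strongest contribution
    return {
        "algorithm": root.get("algorithm"),
        "class": root.get("class"),
        "attributes": attributes,
    }


def explain(details):
    """Format the parsed details as one readable line per attribute."""
    return [
        f"{a['rank']}. {a['name']} = {a['actual_value']} (weight {a['weight']:+.3f})"
        for a in details["attributes"]
    ]
```

Each line of explain() names an attribute, the customer's value for it and its weight, which is the kind of per-customer explanation Article 22 is asking for.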

If you look at other commercial machine learning solutions, you will find that some give similar functionality, or will do soon. Can we get the same level of detail from open source solutions? Not really, unless you are using Decision Trees and maybe Naive Bayes. This means that companies that have gone down the pure open source route for their machine learning may have to look at alternative software and may have to fork out some hard-earned dollars/euros to make sure that they are compliant with Article 22 of the EU GDPR.

Click back to ‘How the EU GDPR will affect the use of Machine Learning – Part 1’ for links to all the blog posts in this series.

Part 4a – (Article 22: Profiling) Why me? and how Oracle 12c saves the day

This is the fourth part of a series of blog posts on ‘How the EU GDPR will affect the use of Machine Learning’.

In this blog post (Part 4a) I will discuss the specific issues relating to the use of machine learning algorithms and models. In the next blog post (Part 4b) I will examine some of the more technical aspects and how the in-database machine learning functions save the day!

The EU GDPR has some rules that will affect the use of machine learning models for predicting customers.

As with all the other sections of the EU GDPR, the rules on the use of machine learning and the profiling of individuals do not only affect organisations based within Europe; they affect all organisations around the globe that use these methods and the associated data.

Article 22 of the EU GDPR deals with the “Automated individual decision-making, including profiling” and effectively creates a “right to explanation”. This means that an individual is entitled to an explanation of the decisions made by automated decision making models or profiling that has resulted in a decision being made about them. These new regulations present many challenges for organisations and their teams of data scientists.

To be able to explain a decision made by a machine learning model or by profiling, the underlying models and their associated algorithms must be able to give details of the model processing and of how the decision about the individual was obtained. For most machine learning models and algorithms this is generally not possible. For a limited set of algorithms, for example decision trees, it is possible, but for other algorithms such as support vector machines, some regression models, and in particular neural networks, giving these explanations is not possible. Some of these can be considered black-box modelling (neural networks) and the others grey-box modelling. But these algorithms are in widespread use in many organisations and are core to their predictive analytics solutions. This presents many challenges for organisations, as they will need to look at alternative algorithms that may not have the same degree of predictive accuracy. With the recent rise of deep learning using neural networks, it is extremely difficult to explain a multilayer neural net with its various learned weights between the nodes at each layer.

Ensemble machine learning methods like Random Forests are also a challenge. Although the underlying machine learning algorithm is explainable, the output of Random Forests and other similar ensemble methods results from an aggregation, averaging or voting process. Additionally, scenarios where machine learning models are combined with multiple other models, along with rules-based solutions, and the predicted outcome is based on the aggregation or voting of all methods, may no longer be usable. It may not be possible to explain a predicted outcome produced by ensemble methods, and this will affect their continued use for predictive analytics.

In addition to the requirements of Article 22, Articles 13 and 14 state that a person has a right to meaningful information about the logic involved in profiling them.

Over the past few years many of the commercially available machine learning solutions have been preparing for the changes required to meet the EU GDPR. Some vendors have been able to add greater model explanation features as well as specific explanations for each individual prediction. Many other vendors are still working on adding the required level of explanation, and some of these features may not be available in time for when the EU GDPR comes into effect in May 2018. This will present many challenges for organisations around the world that use data gathered within the EU region.

For machine learning based on open source languages and tools, the EU GDPR presents a very different challenge. While a small number of these come with some simple explanations for some of the more basic machine learning algorithms, there seems to be little information available on what work is currently being done to update these languages and tools. The limiting factor in making the required updates in the open source community is that there is no commercial push to do so. As a result of these limitations, many organisations may be forced into using commercial machine learning products, but for many other organisations the cost of doing so will be prohibitive.

It is clear that the task of building machine learning models has become significantly more complex with the introduction of the new EU GDPR. This complexity applies to the selection of what data can be used, to ensuring there is no inherent discrimination in the machine learning models, and to the ability of these models to explain how the predicted outcome was determined. Companies around the world need to address these issues and, in doing so, may limit which software and algorithms can be used for customer profiling and predictive analytics. Although some of the commercially available machine learning languages and products can give the required insights, more product enhancements are required. The open source machine learning community faces many challenges, with many research groups only starting in recent months to look at how their languages, packages and tools can be enhanced to meet the requirements of the EU GDPR.

Click back to ‘How the EU GDPR will affect the use of Machine Learning – Part 1’ for links to all the blog posts in this series.

Part 3 – Ensuring there is no Discrimination in the Data and machine learning models

This is the third part of a series of blog posts on ‘How the EU GDPR will affect the use of Machine Learning’.

The new EU GDPR has some new requirements that will affect what data can be used, to ensure there is no discrimination. Additionally, the machine learning models need to ensure that there is no discrimination in the predictions they make. There is an underlying assumption that the organisation has the right to use the data about individuals and that this data has been legitimately obtained. The following outlines the areas relating to discrimination:

- Discrimination based on the unfair treatment of an individual using certain variables that may be inherently discriminatory, for example race, gender, etc., and any decisions, whether based on machine learning methods or not, that are based on an individual belonging to one or more of these groups. This is particularly challenging for data scientists, and it can limit some of the data points that can be included in their data sets.

- All data mining models need to be tested to ensure that there is no discrimination built into them. Even if the data scientist has removed any obvious variables that may cause discrimination, the machine learning models may still have picked up some bias or discrimination from the patterns they have discovered in the data.

- In the text preceding the articles of the EU GDPR, paragraph 71 (Recital 71) details the requirements for data controllers to “implement appropriate technical and organizational measures” that “prevent, inter alia, discriminatory effects” based on sensitive data. Paragraph 71 and Article 22 paragraph 4 address discrimination based on profiling (using machine learning and other methods) that uses sensitive data. Care is needed to also remove any data that is correlated with this sensitive data.

- If one group of people is under-represented in a training data set then, depending on the type of prediction being made, the model may unknowingly discriminate against this group when it comes to making a prediction. The training data sets will need to be carefully partitioned, and separate machine learning models built on each partition, to ensure that such discrimination does not occur.
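As a rough illustration of the last two points, the sketch below checks how much of the training data each group makes up and compares the rate of positive predictions across groups. The ratio of the lowest to the highest rate is often called the disparate impact ratio, and values below 0.8 (the "four-fifths" rule of thumb from US employment practice) are commonly treated as a warning sign. Neither that threshold nor the 10% minimum share used here comes from the EU GDPR, and all the function names are hypothetical.

```python
from collections import Counter


def group_shares(groups):
    """Fraction of the training rows that belongs to each group."""
    counts = Counter(groups)
    total = sum(counts.values())
    return {g: n / total for g, n in counts.items()}


def under_represented(groups, min_share=0.10):
    """Groups whose share of the rows falls below min_share (an assumed threshold)."""
    return sorted(g for g, share in group_shares(groups).items() if share < min_share)


def positive_rate_by_group(groups, predictions):
    """Fraction of rows with a positive (truthy) prediction, per group."""
    if len(groups) != len(predictions):
        raise ValueError("groups and predictions must be the same length")
    totals = Counter(groups)
    positives = Counter(g for g, p in zip(groups, predictions) if p)
    return {g: positives[g] / totals[g] for g in totals}


def disparate_impact_ratio(groups, predictions):
    """Lowest group positive rate divided by the highest (1.0 means equal rates)."""
    rates = positive_rate_by_group(groups, predictions)
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0
```

Here groups would be the value of a sensitive attribute for each row and predictions the model's predicted class. A low ratio does not prove discrimination, but it is a cheap signal to look more closely at the model and its training data before deployment.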

In the next blog post I will look at the issues relating to Article 22, on the right to an explanation of the outcomes of automated individual decision-making, including profiling using machine learning and other methods.

Click back to ‘How the EU GDPR will affect the use of Machine Learning – Part 1’ for links to all the blog posts in this series.

How the EU GDPR will affect the use of Machine Learning – Part 1

On 5 December 2015, the European Parliament, the Council and the Commission reached agreement on the new data protection rules, establishing a modern and harmonised data protection framework across the EU. Then on 14th April 2016 the Regulations and Directives were adopted by the European Parliament.

The EU GDPR comes into effect on the 25th May, 2018.

Are you ready?

The EU GDPR will affect every country around the world. As long as you capture and use/analyse data captured within the EU or from citizens in the EU, you have to comply with the EU GDPR.

Over the past few months we have seen an increase in the number of blog posts, articles, presentations, conferences, seminars, etc. on how the EU GDPR will affect you. Basically, if your company has not been working on implementing processes and procedures and on ensuring it complies with the regulations, then you are a bit behind and a lot of work is ahead of you.

As I said, a lot has been published and discussed regarding the EU GDPR. Most of this is about the core aspects of the regulations on protecting and securing your data. But very little, if anything, is being discussed regarding the use of machine learning and customer profiling.

Do you use machine learning to profile, analyse and predict customers? Then the EU GDPRs affect you.

Article 22 of the EU GDPR outlines some basic requirements regarding machine learning, as do Articles 13, 14, 19 and 21.

Over the coming weeks I will have the following blog posts. Each of these address a separate issue, within the EU GDPR, relating to the use of machine learning.

- Part 2 – Do I have permissions to use the data for data profiling?

- Part 3 – Ensuring there is no Discrimination in the Data and machine learning models.

- Part 4 – (Article 22: Profiling) Why me? and how Oracle 12c saves the day
