2024 Leaving Certificate Results – Inline

The 2024 Leaving Certificate results are out. Up, down and across the country there have been tears of joy and some muted celebrations. But there have been some disappointments too. Although the government has been talking about how the marks have been increased, with post mark adjustments, this doesn’t help students come to terms with their results.

In previous years, I’ve looked at the profile of marks across some (not all) of the Leaving Certificate subjects. The tools I used included an Oracle Database and Oracle Analytics Cloud for some of the analysis, along with other tools. Check out these previous posts:

- Leaving Certificate 2023 – Inline or more adjustments

- CAO Points 2023

- Leaving Certificate 2022 – Inflation, deflation or in-line

- CAO Points 2022

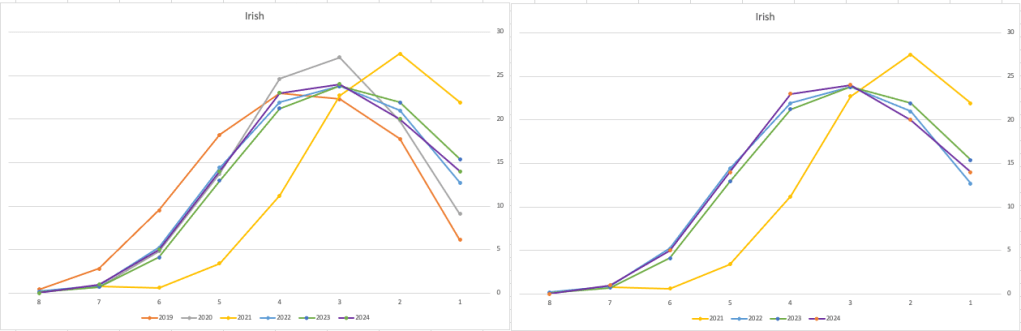

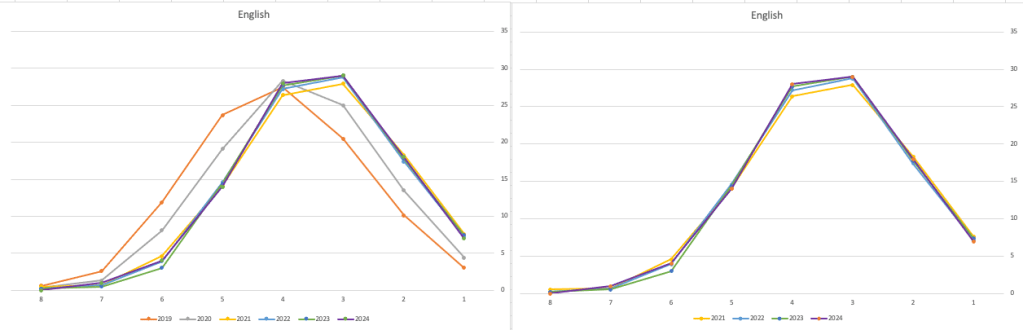

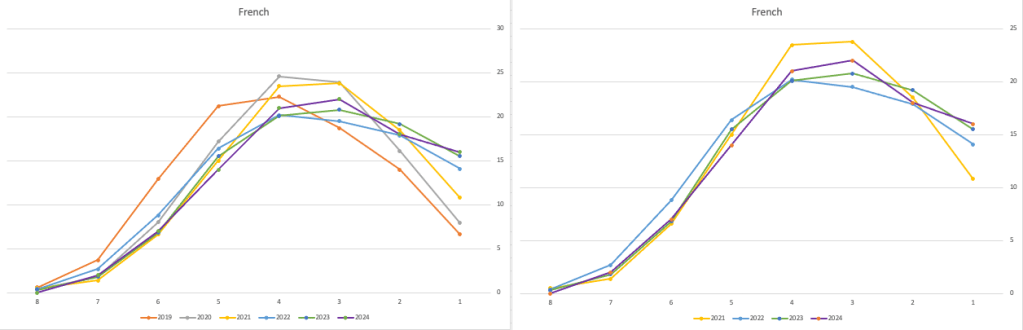

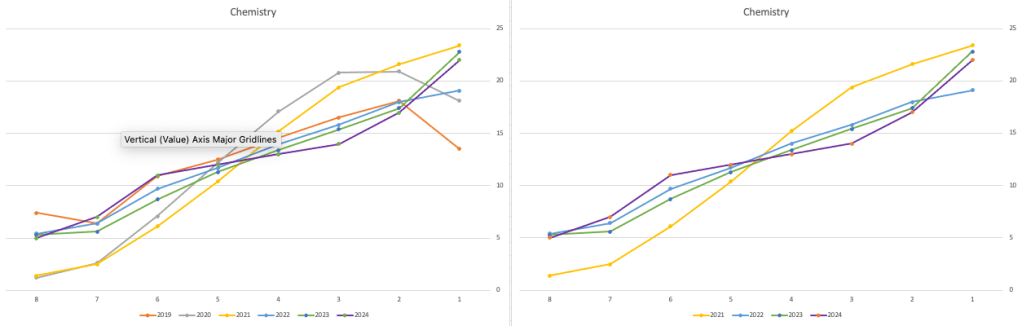

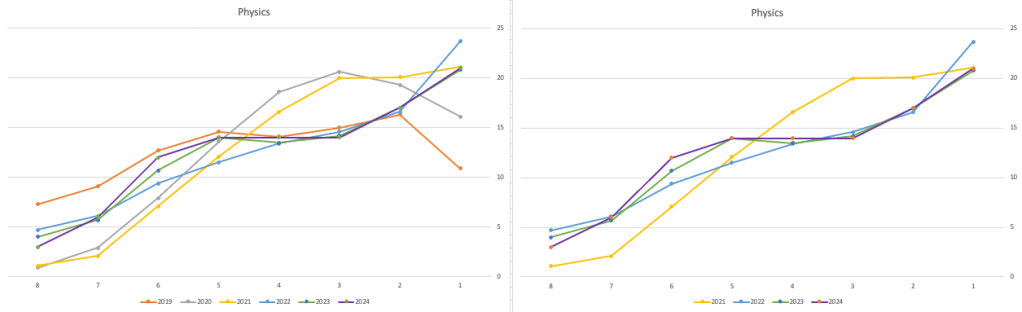

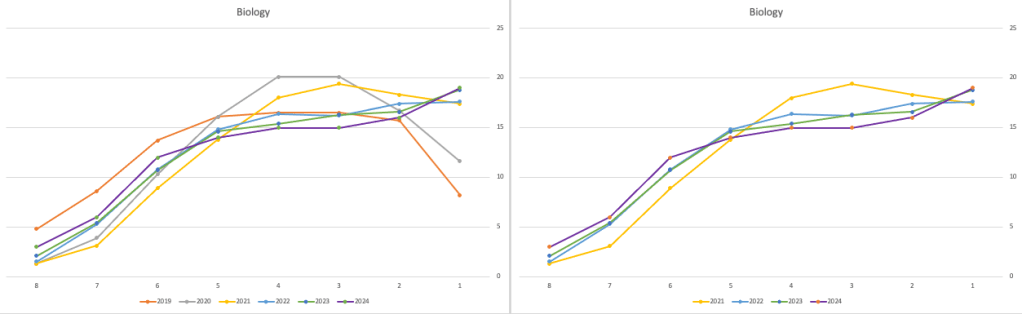

From analysing the results for 2024, both numerically and graphically, we can see the results this year are broadly in line with last year. That news might bring joy to some students but will be slightly disappointing for others. You can see this for yourself from the graphics below. In some subjects there appear to be some minor (yes, very minor) changes in the profiles, with a slight shift to the left, indicating a slight decrease in the higher grades and a corresponding increase in lower grades. But across many subjects, we have seen a slight increase in those achieving a H1 grade. The impact of these slight changes will be seen in the CAO points needed for courses in the 550+ points range. For courses below 500 points, we might not see much of a change in CAO points, although there might be some minor fluctuations based on demand for certain courses, which is typical most years.

I’ll have another post looking at the CAO points after they are released, so look out for that post.

The charts below give the profile of marks for each year between 2019 (pre-Covid) and 2024. The chart on the left includes all years, and the chart on the right is for the last four years. This allows us to see if there have been any adjustments in the profiles over those years. For most subjects, we can see a marked reduction in marks since the exceptionally high marks of 2021. While some subjects are almost back to matching the 2019 profile (science subjects, Irish), for others the stepback needed is small. Based on the messaging from the government, the stepping back will commence in 2025.

Back in April, an Irish Times article discussed the changes coming from 2025, where there will be a gradual return to “normal” (pre-Covid) profile of marks. Looking at the profile of marks over the past couple of years we can clearly see there has been some stepping back in the profile of marks. Some subjects are back to pre-Covid 2019 profiles. In some subjects, this is more evident than in others. They’ve used the phrase “on aggregate” to hide the stepping back in some subjects and less so in others.

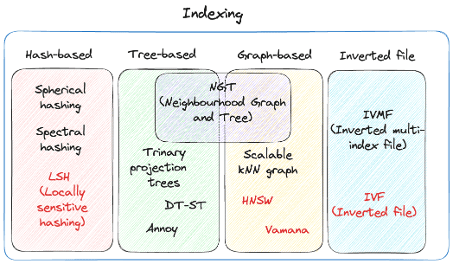

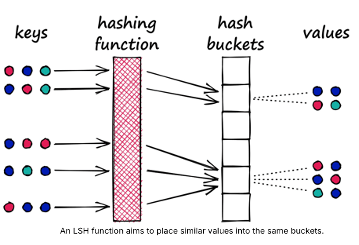

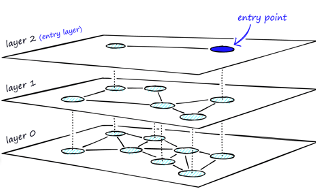

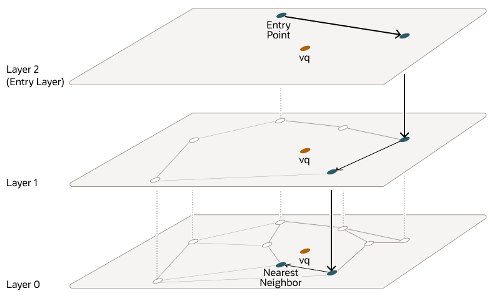

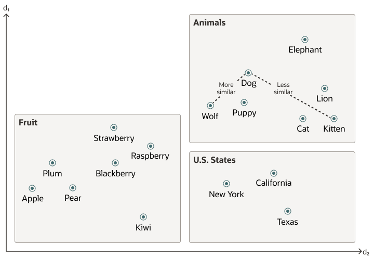

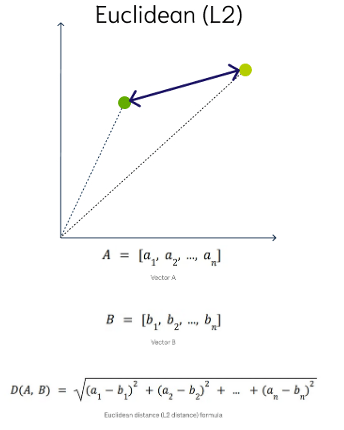

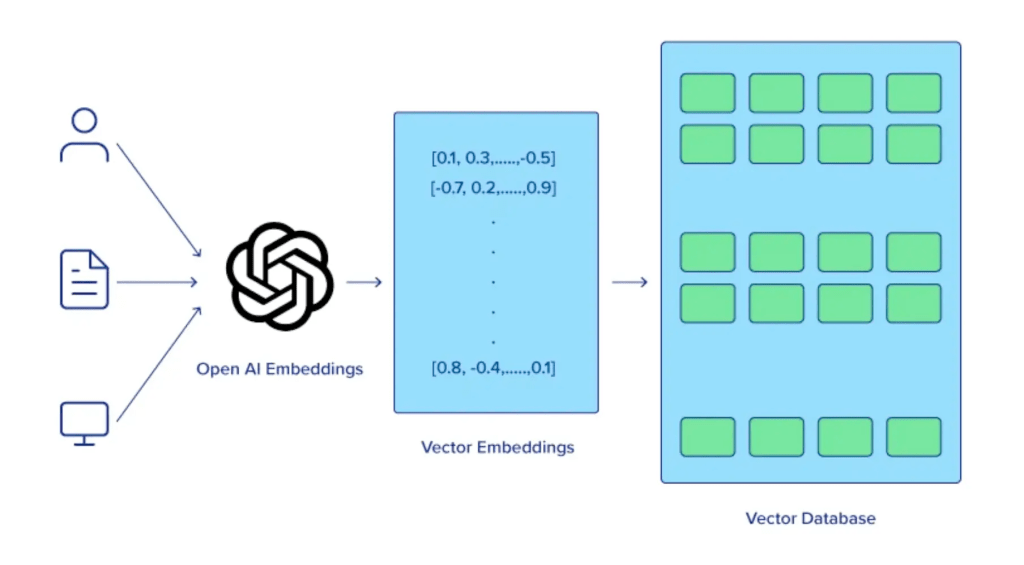

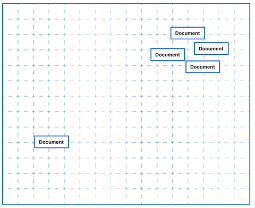

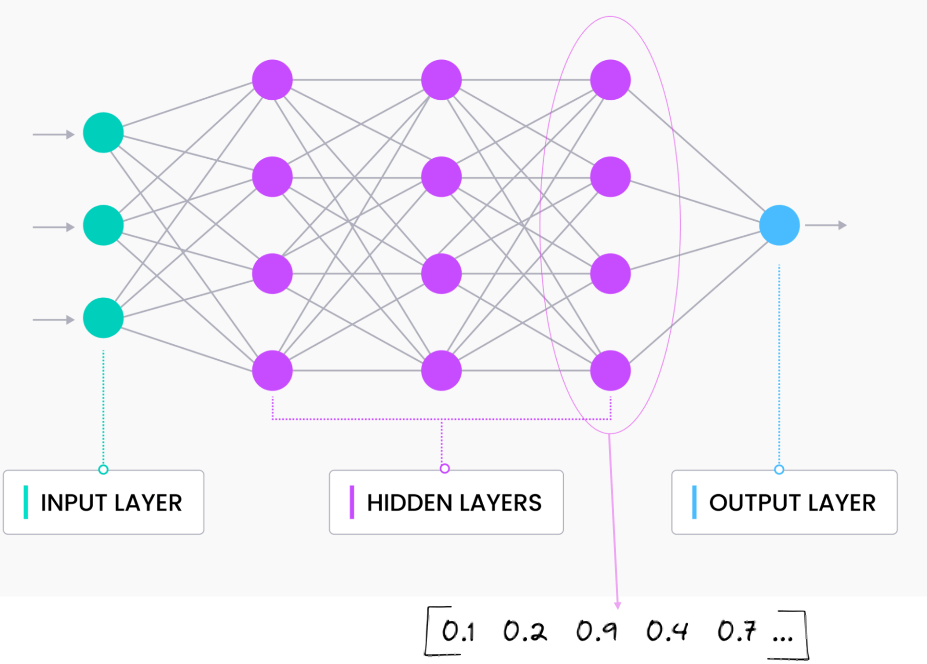

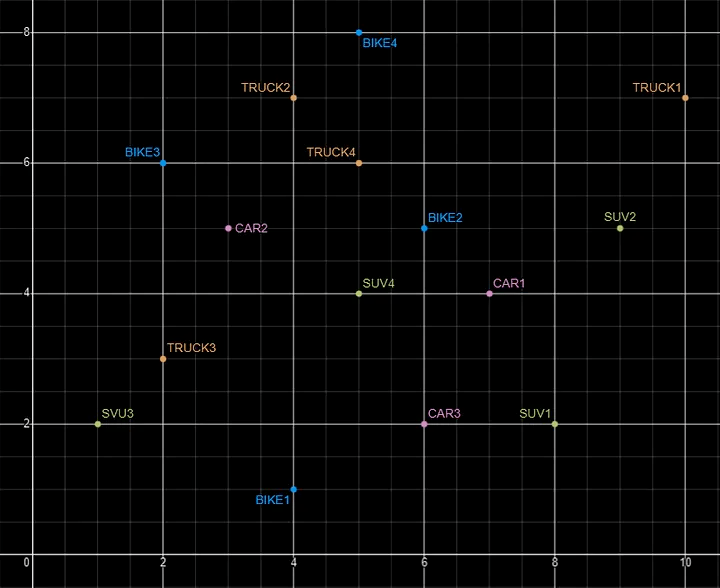

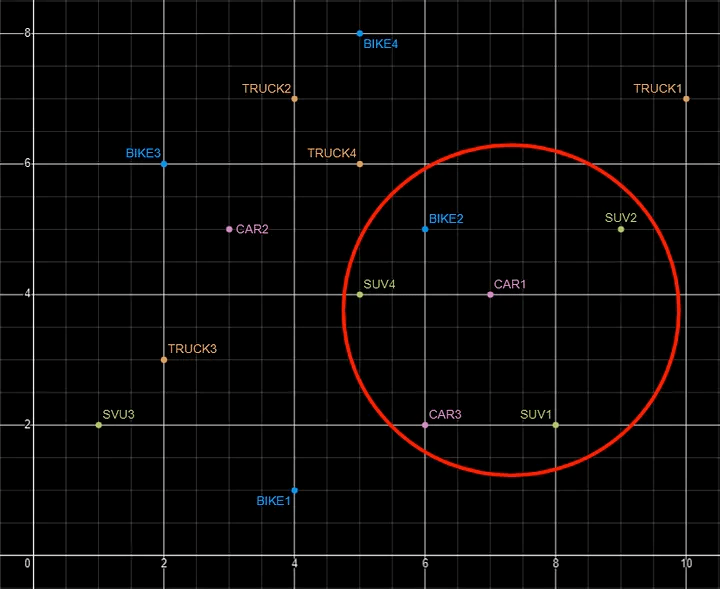

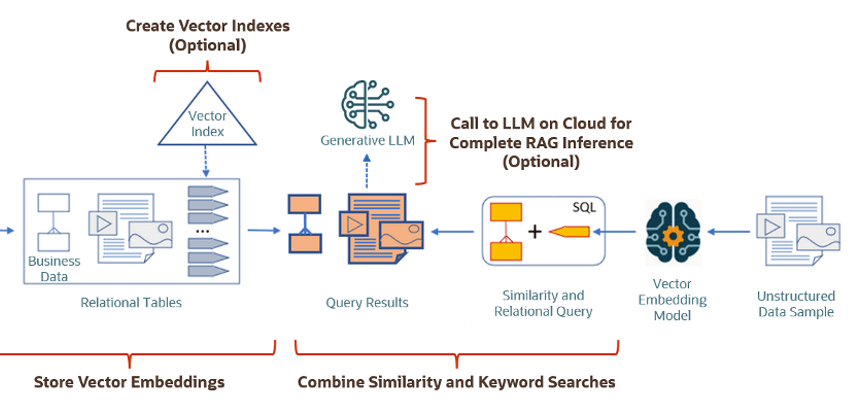

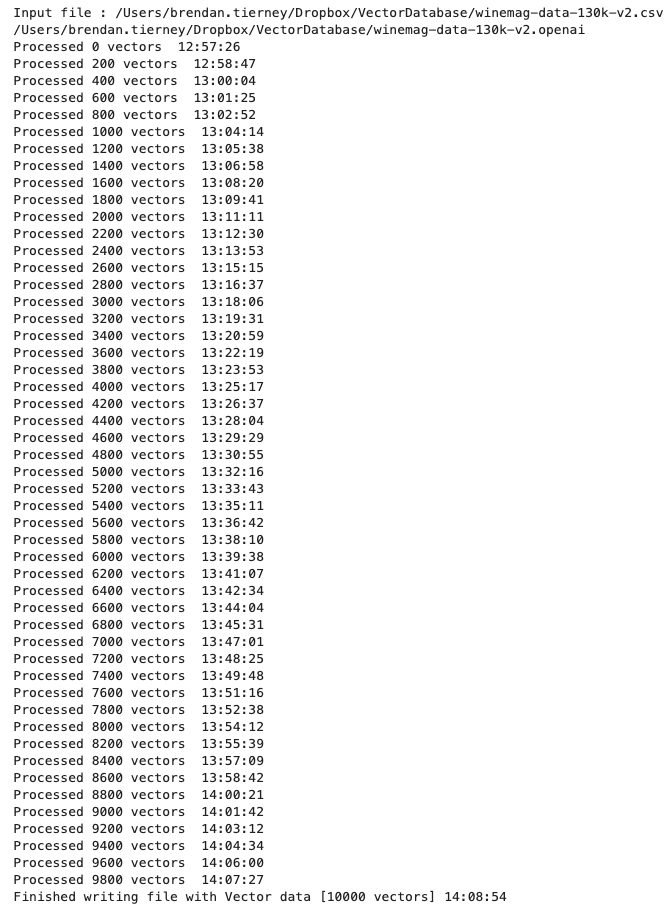

Using Cohere to Generate Vector Embeddings

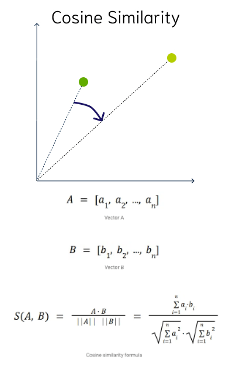

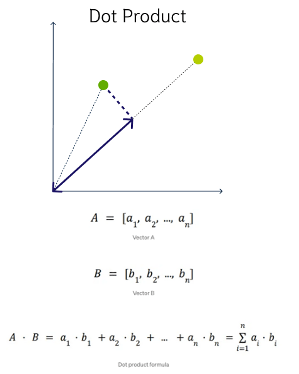

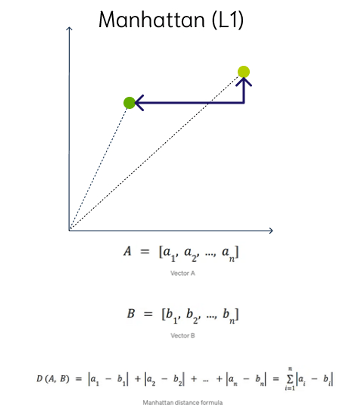

When using Vector Databases or Vector Embedding features in most (modern, multi-model) Databases, you can gain additional insights. The Vector Embeddings can be utilised for semantic searches, where you can find related data or information based on the values of the Vectors. One of the challenges is generating suitable vectors. There are a lot of options available for generating these Vector Embeddings. In this post, I’ll illustrate how to generate these using Cohere, and in a future post, I’ll illustrate using OpenAI. There are advantages and disadvantages to using these solutions. I’ll point out some of these for each solution (Cohere and OpenAI), using their Python API library.
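To make the idea of "related data based on the values of the Vectors" a little more concrete, here is a minimal sketch of ranking items by cosine similarity, one common way of comparing embeddings. The function names and the tiny 3-dimensional vectors are made up for illustration; real embeddings have hundreds or thousands of dimensions, and a database with vector support would normally do this comparison for you.

```python
import math

def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same number of dimensions")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)

def most_similar(query, candidates, top_n=3):
    """Rank (label, vector) pairs by similarity to the query vector."""
    scored = [(label, cosine_similarity(query, vec)) for label, vec in candidates]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:top_n]

# Made-up 3-dimensional vectors, purely for illustration
toy_vectors = [
    ("review A", [0.9, 0.1, 0.0]),
    ("review B", [0.0, 1.0, 0.0]),
    ("review C", [0.7, 0.3, 0.1]),
]
ranking = most_similar([1.0, 0.0, 0.0], toy_vectors, top_n=2)
print([label for label, score in ranking])   # review A first, then review C
```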

The first step is to install the Cohere Python library:

pip install cohere

Next, you need to create an account with Cohere. This will allow you to get an API key. You can get a Trial API key, but as with most free or trial keys, you are restricted in the number of calls you can make. This is commonly referred to as Rate Limiting. The trial key for the embedding models allows up to 40 calls per minute. This is very limited, and each call is very slow. (I’ll discuss related issues about OpenAI rate limiting in another post.)
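One simple way to stay under a per-minute limit is to space the calls out evenly. The sketch below is not part of the Cohere library; `Throttle` is a hypothetical helper, and the `clock` and `sleep` parameters exist only so it can be tested without really waiting.

```python
import time

class Throttle:
    """Space out calls so that no more than `calls_per_minute` are started."""

    def __init__(self, calls_per_minute, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = 60.0 / calls_per_minute
        self._clock = clock
        self._sleep = sleep
        self._last_call = None

    def wait(self):
        """Block until enough time has passed since the previous call."""
        now = self._clock()
        if self._last_call is not None:
            remaining = self.min_interval - (now - self._last_call)
            if remaining > 0:
                self._sleep(remaining)
                now = self._clock()
        self._last_call = now

throttle = Throttle(calls_per_minute=40)   # 40 is the trial limit quoted above
print(throttle.min_interval)               # 1.5 seconds between calls
```

Calling `throttle.wait()` immediately before each API call would then keep the loop at or below the stated limit, at the cost of making the overall run slower.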

The dataset I’ll be using is the Wine Reviews 130K (dropbox link). This is widely available on many sites. I want to create Vector Embeddings for the ‘Description’ field in this dataset which contains a review of each wine. There are some columns with no values, and these need to be handled. For each wine review, I’ll create a SQL INSERT statement and print this out to a file. This file will contain an INSERT statement for each wine review, including the vector embedding.

Here’s the code (you’ll need to enter an API key and change the directory for the data file).

import os
import time
import pandas as pd
import cohere

co = cohere.Client(api_key="...")

data_file = ".../VectorDatabase/winemag-data-130k-v2.csv"
df = pd.read_csv(data_file)

print_every = 200
rate_limit = 1000 #Number of rows to process (Cohere trial keys are limited to 40 API calls per minute)

print("Input file :", data_file)
v_file = os.path.splitext(data_file)[0]+'.cohere'
print(v_file)

#Open file with write (over-writes previous file)
f=open(v_file,"w")

for index,row in df.head(rate_limit).iterrows():
    #co.embed expects a list of strings, so wrap the single description in a list
    #(list(row['description']) would split the text into individual characters)
    phrases=[str(row['description'])]

    model="embed-english-v3.0"
    #Cohere documents "search_document" as the input_type for text being stored for search
    input_type="search_query"
    #####
    res = co.embed(texts=phrases,
                   model=model,
                   input_type=input_type) #,
    #              embedding_types=['float'])

    v_embedding = str(res.embeddings[0])

    tab_insert="INSERT into WINE_REVIEWS_130K VALUES ("+str(row["Seq"])+"," \
               +'"'+str(row["description"])+'",' \
               +'"'+str(row["designation"])+'",' \
               +str(row["points"])+"," \
               +'"'+str(row["province"])+'",' \
               +str(row["price"])+"," \
               +'"'+str(row["region_1"])+'",' \
               +'"'+str(row["region_2"])+'",' \
               +'"'+str(row["taster_name"])+'",' \
               +'"'+str(row["taster_twitter_handle"])+'",' \
               +'"'+str(row["title"])+'",' \
               +'"'+str(row["variety"])+'",' \
               +'"'+str(row["winery"])+'",' \
               +"'"+v_embedding+"'"+");\n"

    f.write(tab_insert)
    if (index%print_every == 0):
        print(f'Processed {index} vectors ', time.strftime("%H:%M:%S", time.localtime()))

#Close vector file
f.close()
print(f"Finished writing file with Vector data [{index+1} vectors]", time.strftime("%H:%M:%S", time.localtime()))

The vector generated has 1024 dimensions. At this time there isn’t a parameter to change/reduce the number of dimensions.

The output file can now be run in your database, assuming you’ve created a table called WINE_REVIEWS_130K that has a column with the appropriate data type (e.g. VECTOR).
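Two things can trip up generated INSERT statements: empty CSV cells (which pandas reads as NaN and `str()` turns into the text `nan`) and quote characters inside the review text. The sketch below shows one possible way of handling both. `sql_literal` and `build_insert` are hypothetical helpers, the sample row is made up, and the doubling of single quotes follows standard SQL string-literal rules; for anything beyond a one-off load, bind variables are the safer route.

```python
import math
import numbers

def sql_literal(value):
    """Render a Python value as a SQL literal, using NULL for missing values."""
    if value is None:
        return "NULL"
    if isinstance(value, numbers.Real):
        # pandas represents empty CSV cells as NaN
        return "NULL" if math.isnan(value) else str(value)
    # Standard SQL escapes a single quote inside a string by doubling it
    return "'" + str(value).replace("'", "''") + "'"

def build_insert(table, values):
    """Build a single INSERT statement from a list of column values."""
    return f"INSERT INTO {table} VALUES (" + ", ".join(sql_literal(v) for v in values) + ");"

# Made-up row, purely for illustration
row = [7, "Dry, with a hint of O'Brien's orchard", float("nan"), 87, None, 24.0]
print(build_insert("WINE_REVIEWS_130K", row))
```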

Warnings: When using the Cohere API with a trial key you are limited to a maximum of 40 calls per minute. I’ve found this to be incorrect and it was more like 38 calls (for me). I also found the ‘per minute’ to be incorrect. I had to wait several minutes, and sometimes up to five minutes, before I could attempt another run.

In an attempt to overcome this, I created a production API key. This involved giving some payment details, and this in theory should remove the ‘per minute’ rate limit, among other things. Unfortunately, for me this was not a good experience, as I had to make multiple attempts to run for 1000 records before I could have a successful outcome. I experienced multiple Server 500 errors and other errors that related to Cohere server problems.

I wasn’t able to process more than 600 records before the errors occurred and I wasn’t able to generate for a larger percentage of the dataset.
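For intermittent server errors like these, a common approach is to retry the failing call after a pause that grows with each attempt. The sketch below is a generic illustration and not something I ran against Cohere; `call_with_retry` is a hypothetical helper, and `flaky_call` simply simulates a call that fails twice before succeeding.

```python
import random
import time

def call_with_retry(fn, max_attempts=5, base_delay=2.0, sleep=time.sleep):
    """Call fn(); on an exception wait and retry, doubling the delay each time."""
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except Exception as exc:  # narrow this to the client's error types in real use
            if attempt == max_attempts:
                raise
            delay = base_delay * (2 ** (attempt - 1))
            delay += random.uniform(0, 0.1 * delay)  # small random jitter
            print(f"Attempt {attempt} failed ({exc}); retrying in {delay:.2f}s")
            sleep(delay)

# Stand-in for an API call that fails twice before succeeding
attempts = {"count": 0}

def flaky_call():
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise RuntimeError("simulated server 500")
    return "ok"

result = call_with_retry(flaky_call, base_delay=0.01)
print(result, attempts["count"])
```

In the embedding loop, the call to `co.embed` could be wrapped in a small function and passed to a helper like this, so that one failed request does not end the whole run.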

An additional issue is with the response time from Cohere. It was taking approx. 5 minutes to process 200 API calls.
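One option I did not try here is sending several descriptions in each call, since `co.embed` takes a list of texts. That would cut the number of API calls considerably. The sketch below shows the batching logic only. `chunked` and `embed_in_batches` are hypothetical helpers, `fake_embed` stands in for the real API call, and the batch size of 96 is my understanding of Cohere’s per-call maximum, so check the current documentation before relying on it.

```python
def chunked(items, size):
    """Yield successive lists of at most `size` items."""
    if size < 1:
        raise ValueError("size must be at least 1")
    for start in range(0, len(items), size):
        yield items[start:start + size]

def embed_in_batches(texts, embed_fn, batch_size=96):
    """Call embed_fn once per batch and return one vector per input text."""
    vectors = []
    for batch in chunked(texts, batch_size):
        batch_vectors = embed_fn(batch)
        if len(batch_vectors) != len(batch):
            raise ValueError("embed_fn returned a different number of vectors")
        vectors.extend(batch_vectors)
    return vectors

# Stand-in embedder: returns a 1-dimensional "vector" holding the text length
calls = []

def fake_embed(batch):
    calls.append(len(batch))
    return [[float(len(text))] for text in batch]

texts = [f"review {i}" for i in range(200)]
vectors = embed_in_batches(texts, fake_embed)
print(len(vectors), calls)   # 200 vectors from 3 calls: [96, 96, 8]
```

With the real client, `embed_fn` could be a small function that calls `co.embed(texts=batch, model=model, input_type=input_type)` and returns its `embeddings`.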

So overall a rather poor experience. I then switched to OpenAI and had a slightly different experience. Check out that post for more details.

EU AI Act: Key Dates and Impact on AI Developers

The official text of the EU AI Act has been published in the EU Journal. This is another landmark point for the EU AI Act, as these regulations are set to enter into force on 1st August 2024. If you haven’t started your preparations for this, you really need to start now. See the timeline for the different stages of the EU AI Act below.

The EU AI Act is a landmark piece of legislation and similar legislation is being drafted/enacted in various geographic regions around the world. The EU AI Act is considered the most extensive legal framework for AI developers, deployers, importers, etc and aims to ensure AI systems introduced or currently being used in the EU internal market (even if they are developed and located outside of the EU) are secure, compliant with existing and new laws on fundamental rights and align with EU principles.

The key dates are:

- 2 February 2025: Prohibitions on Unacceptable Risk AI

- 2 August 2025: Obligations come into effect for providers of general purpose AI models. Appointment of member state competent authorities. Annual Commission review of and possible legislative amendments to the list of prohibited AI.

- 2 February 2026: Commission implementing act on post-market monitoring

- 2 August 2026: Obligations go into effect for high-risk AI systems specifically listed in Annex III, including systems in biometrics, critical infrastructure, education, employment, access to essential public services, law enforcement, immigration and administration of justice. Member states to have implemented rules on penalties, including administrative fines. Member state authorities to have established at least one operational AI regulatory sandbox. Commission review, and possible amendment of, the list of high-risk AI systems.

- 2 August 2027: Obligations go into effect for high-risk AI systems not prescribed in Annex III but intended to be used as a safety component of a product. Obligations go into effect for high-risk AI systems in which the AI itself is a product and the product is required to undergo a third-party conformity assessment under existing specific EU laws, for example, toys, radio equipment, in vitro diagnostic medical devices, civil aviation security and agricultural vehicles.

- By End of 2030: Obligations go into effect for certain AI systems that are components of the large-scale information technology systems established by EU law in the areas of freedom, security and justice, such as the Schengen Information System.

Here is the link to the official text in the EU Journal publication.

Select AI – OpenAI changes

A few weeks ago I wrote a few blog posts about using SelectAI. These illustrated integrating and using Cohere and OpenAI with SQL commands in your Oracle Cloud Database. See these links below.

- SelectAI – the beginning of a journey

- SelectAI – Doing something useful

- SelectAI – Can metadata help

- SelectAI – the APEX version

The constantly changing world of APIs has impacted the steps I outlined in those posts, particularly if you are using the OpenAI APIs. Two things have changed since writing those posts a few weeks ago. The first is with creating the OpenAI API keys. When creating a new key you need to define a project. For now, just select ‘Default Project’. This is a minor change, but it has caused some confusion for those following my steps in this blog post. I’ve updated that post to reflect the current setup in defining a new key in OpenAI. Oh, and remember to put a few dollars into your OpenAI account for your key to work. I put an initial $10 into my account and a few minutes later the API key was working for me from my Oracle (OCI) Database.

The second change is related to how the OpenAI API is called from Oracle (OCI) Databases. The API is now expecting a model name. From talking to the Oracle PMs, they will be implementing a fix in their Cloud Databases where the default model will be ‘gpt-3.5-turbo’, but in the meantime, you have to explicitly define the model when creating your OpenAI profile.

BEGIN
   --Profile and credential names here are placeholders; use the ones from your own setup
   --DBMS_CLOUD_AI.drop_profile(profile_name => 'OPENAI_AI');
   DBMS_CLOUD_AI.create_profile(
      profile_name => 'OPENAI_AI',
      attributes => '{"provider": "openai",
                      "credential_name": "OPENAI_CRED",
                      "object_list": [{"owner": "SH", "name": "customers"},
                                      {"owner": "SH", "name": "sales"},
                                      {"owner": "SH", "name": "products"},
                                      {"owner": "SH", "name": "countries"},
                                      {"owner": "SH", "name": "channels"},
                                      {"owner": "SH", "name": "promotions"},
                                      {"owner": "SH", "name": "times"}],
                      "model":"gpt-3.5-turbo"
                     }');
END;

Other model names you could use include gpt-4 or gpt-4o.

Oracle Object Storage – Parallel Downloading

In previous posts, I’ve given example Python code (and functions) for processing files into and out of OCI Object and Bucket Storage. One of these previous posts includes code and a demonstration of uploading files to an OCI Bucket using the multiprocessing package in Python.

Building upon these previous examples, the code below will download a Bucket using parallel processing. Like my last example, this code is based on the example code I gave in an earlier post on functions within a Jupyter Notebook.

Here’s the code.

import oci
import os
import argparse
from multiprocessing import Process
from glob import glob
import time

####
def upload_file(config, NAMESPACE, b, f, num):
    #Not used by this download script; carried over from the upload example
    file_exists = os.path.isfile(f)
    if file_exists == True:
        try:
            start_time = time.time()
            object_storage_client = oci.object_storage.ObjectStorageClient(config)
            #Open the file in a with block so it is closed after the upload
            with open(f,'rb') as file_data:
                object_storage_client.put_object(NAMESPACE, b, os.path.basename(f), file_data)
            print(f'. Finished {num} uploading {f} in {round(time.time()-start_time,2)} seconds')
        except Exception as e:
            print(f'Error uploading file {num}. Try again.')
            print(e)
    else:
        print(f'... File {f} does not exist or cannot be found. Check file name and full path')

####
def check_bucket_exists(config, NAMESPACE, b_name):
    #check if Bucket exists
    is_there = False
    object_storage_client = oci.object_storage.ObjectStorageClient(config)
    l_b = object_storage_client.list_buckets(NAMESPACE, config.get("tenancy")).data
    for bucket in l_b:
        if bucket.name == b_name:
            is_there = True

    if is_there == True:
        print(f'Bucket {b_name} exists.')
    else:
        print(f'Bucket {b_name} does not exist.')
    return is_there

####
def download_bucket_file(config, NAMESPACE, b, d, f, num):
    print(f'..Starting Download File ({num}):',f, ' from Bucket', b, ' at ', time.strftime("%H:%M:%S"))
    try:
        start_time = time.time()
        object_storage_client = oci.object_storage.ObjectStorageClient(config)
        get_obj = object_storage_client.get_object(NAMESPACE, b, f)
        #Use a separate name for the file handle so the object name in f is not overwritten
        with open(os.path.join(d, f), 'wb') as out_file:
            for chunk in get_obj.data.raw.stream(1024 * 1024, decode_content=False):
                out_file.write(chunk)
        print(f'..Finished Download ({num}) in ', round(time.time()-start_time,2), 'seconds.')
    except Exception as e:
        print(f'Error trying to download file {f}. Check parameters and try again')
        print(e)

####
if __name__ == "__main__":
    #setup for OCI
    config = oci.config.from_file()
    object_storage = oci.object_storage.ObjectStorageClient(config)
    NAMESPACE = object_storage.get_namespace().data

    ####
    description = "\n".join(["Download files in parallel from OCI storage.",
                             "All objects in <bucket_name> will be downloaded to <directory>.",
                             "",
                             "<bucket_name> must already exist."])
    parser = argparse.ArgumentParser(description=description,
                                     formatter_class=argparse.RawDescriptionHelpFormatter)
    parser.add_argument(dest='bucket_name',
                        help="Name of object storage bucket")
    parser.add_argument(dest='directory',
                        help="Path to local directory where the files will be downloaded.")
    args = parser.parse_args()

    ####
    bucket_name = args.bucket_name
    directory = args.directory

    if not os.path.isdir(directory):
        #print_usage() is the argparse method; parser.usage is an attribute, not a function
        parser.print_usage()
    else:
        start_time = time.time()
        print('Starting Downloading Bucket - Parallel:', bucket_name, ' at ', time.strftime("%H:%M:%S"))
        object_storage_client = oci.object_storage.ObjectStorageClient(config)
        object_list = object_storage_client.list_objects(NAMESPACE, bucket_name).data

        count = 0
        for i in object_list.objects:
            count+=1
        print(f'... {count} files to download')

        #Start one process per object in the Bucket
        proc_list = []
        num=0
        for o in object_list.objects:
            p = Process(target=download_bucket_file, args=(config, NAMESPACE, bucket_name, directory, o.name, num))
            p.start()
            num+=1
            proc_list.append(p)

        #Wait for all downloads to finish
        for job in proc_list:
            job.join()

        print('---')
        print(f'Download Finished in {round(time.time()-start_time,2)} seconds.({time.strftime("%H:%M:%S")})')
#### the end ####
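The script starts one process per object, which is fine for a small Bucket but could become a problem with hundreds or thousands of objects. A possible variation, sketched below, caps the number of concurrent downloads using a worker pool. Note that this swaps in threads from `concurrent.futures` rather than the `multiprocessing` package used above, on the assumption that downloads are mostly waiting on the network. `download_one` is a stand-in that sleeps instead of calling OCI, and `download_all` and `max_workers=4` are illustrative choices.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed
import time

def download_one(name):
    """Stand-in for a single download; sleeps instead of calling OCI."""
    time.sleep(0.01)
    return name

def download_all(names, worker, max_workers=4):
    """Run worker(name) for every name with at most max_workers in flight."""
    finished, failed = [], []
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {pool.submit(worker, name): name for name in names}
        for future in as_completed(futures):
            name = futures[future]
            try:
                future.result()
                finished.append(name)
            except Exception as exc:
                failed.append((name, exc))
    return finished, failed

names = [f"file_{i}.jpg" for i in range(13)]
finished, failed = download_all(names, download_one)
print(f"{len(finished)} finished, {len(failed)} failed")
```

In real use, the worker would wrap a call to a function like `download_bucket_file`, and the list of failures gives you something to retry or report at the end.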

I’ve saved the download script to a file called bucket_parallel_download.py.

To call this, I run the following using the same DEMO_Bucket and directory of files I used in my previous posts.

python bucket_parallel_download.py DEMO_Bucket /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/

This creates the following output. It took between 3.6 and 4.4 seconds to download the 13 files, based on my connection.

[16:30~/Dropbox]> python bucket_parallel_download.py DEMO_Bucket /Users/brendan.tierney/DEMO_BUCKET

Starting Downloading Bucket - Parallel: DEMO_Bucket at 16:30:05

... 13 files to download

..Starting Download File (0): 2017-08-31 19.46.42.jpg from Bucket DEMO_Bucket at 16:30:08

..Starting Download File (1): 2017-10-16 13.13.20.jpg from Bucket DEMO_Bucket at 16:30:08

..Starting Download File (2): 2017-11-22 20.18.58.jpg from Bucket DEMO_Bucket at 16:30:08

..Starting Download File (3): 2018-12-03 11.04.57.jpg from Bucket DEMO_Bucket at 16:30:08

..Starting Download File (11): thumbnail_IMG_2333.jpg from Bucket DEMO_Bucket at 16:30:08

..Starting Download File (5): IMG_2347.jpg from Bucket DEMO_Bucket at 16:30:08

..Starting Download File (9): thumbnail_IMG_1711.jpg from Bucket DEMO_Bucket at 16:30:08

..Starting Download File (4): 347397087_620984963239631_2131524631626484429_n.jpg from Bucket DEMO_Bucket at 16:30:08

..Starting Download File (10): thumbnail_IMG_1712.jpg from Bucket DEMO_Bucket at 16:30:08

..Starting Download File (8): thumbnail_IMG_1710.jpg from Bucket DEMO_Bucket at 16:30:08

..Starting Download File (7): oug_ire18_1.jpg from Bucket DEMO_Bucket at 16:30:08

..Starting Download File (6): IMG_6779.jpg from Bucket DEMO_Bucket at 16:30:08

..Starting Download File (12): thumbnail_IMG_2336.jpg from Bucket DEMO_Bucket at 16:30:08

..Finished Download (9) in 0.67 seconds.

..Finished Download (11) in 0.74 seconds.

..Finished Download (10) in 0.7 seconds.

..Finished Download (5) in 0.8 seconds.

..Finished Download (7) in 0.7 seconds.

..Finished Download (1) in 1.0 seconds.

..Finished Download (12) in 0.81 seconds.

..Finished Download (4) in 1.02 seconds.

..Finished Download (6) in 0.97 seconds.

..Finished Download (2) in 1.25 seconds.

..Finished Download (8) in 1.16 seconds.

..Finished Download (0) in 1.47 seconds.

..Finished Download (3) in 1.47 seconds.

---

Download Finished in 4.09 seconds.(16:30:09)

Oracle Object Storage – Parallel Uploading

In my previous posts on using Python to work with OCI Object Storage, I gave code examples and illustrated how to create Buckets, explore Buckets, upload files, download files and delete files and buckets, all using Python and files on your computer.

- Oracle Object Storage – Setup and Explore

- Oracle Object Storage – Buckets & Loading files

- Oracle Object Storage – Downloading and Deleting

- Oracle Object Storage – Parallel Uploading

Building upon the code I’ve given for uploading files, which did so sequentially, in this post I’ve taken that code and expanded it to allow the files to be uploaded in parallel to an OCI Bucket. This is achieved using the Python multiprocessing library.

Here’s the code.

import oci
import os
import argparse
from multiprocessing import Process
from glob import glob
import time

####
def upload_file(config, NAMESPACE, b, f, num):
    file_exists = os.path.isfile(f)
    if file_exists == True:
        try:
            start_time = time.time()
            object_storage_client = oci.object_storage.ObjectStorageClient(config)
            #Open the file in a with block so it is closed after the upload
            with open(f,'rb') as file_data:
                object_storage_client.put_object(NAMESPACE, b, os.path.basename(f), file_data)
            print(f'. Finished {num} uploading {f} in {round(time.time()-start_time,2)} seconds')
        except Exception as e:
            print(f'Error uploading file {num}. Try again.')
            print(e)
    else:
        print(f'... File {f} does not exist or cannot be found. Check file name and full path')

####
def check_bucket_exists(config, NAMESPACE, b_name):
    #check if Bucket exists
    is_there = False
    object_storage_client = oci.object_storage.ObjectStorageClient(config)
    l_b = object_storage_client.list_buckets(NAMESPACE, config.get("tenancy")).data
    for bucket in l_b:
        if bucket.name == b_name:
            is_there = True

    if is_there == True:
        print(f'Bucket {b_name} exists.')
    else:
        print(f'Bucket {b_name} does not exist.')
    return is_there

####
if __name__ == "__main__":
    #setup for OCI
    config = oci.config.from_file()
    object_storage = oci.object_storage.ObjectStorageClient(config)
    NAMESPACE = object_storage.get_namespace().data

    ####
    description = "\n".join(["Upload files in parallel to OCI storage.",
                             "All files in <directory> will be uploaded. Include '/' at end.",
                             "",
                             "<bucket_name> must already exist."])
    parser = argparse.ArgumentParser(description=description,
                                     formatter_class=argparse.RawDescriptionHelpFormatter)
    parser.add_argument(dest='bucket_name',
                        help="Name of object storage bucket")
    parser.add_argument(dest='directory',
                        help="Path to local directory containing files to upload.")
    args = parser.parse_args()

    ####
    bucket_name = args.bucket_name
    directory = args.directory

    if not os.path.isdir(directory):
        #print_usage() is the argparse method; parser.usage is an attribute, not a function
        parser.print_usage()
    else:
        dir_pattern = directory + os.path.sep + "*"

        #### Check if Bucket Exists ####
        b_exists = check_bucket_exists(config, NAMESPACE, bucket_name)
        if b_exists == True:
            proc_list = []
            num=0
            start_time = time.time()
            try:
                #### Start uploading files ####
                for file_path in glob(dir_pattern):
                    print(f"Starting {num} upload for {file_path}")
                    p = Process(target=upload_file, args=(config, NAMESPACE, bucket_name, file_path, num))
                    p.start()
                    num+=1
                    proc_list.append(p)
            except Exception as e:
                print(f'Error uploading file ({num}). Try again.')
                print(e)

            #Wait for all uploads to finish (only runs when the Bucket exists)
            for job in proc_list:
                job.join()

            print('---')
            print(f'Finished uploading all files ({num}) in {round(time.time()-start_time,2)} seconds')
        else:
            print('... Create Bucket before uploading Directory.')
#### the end ####

I’ve saved the code to a file called bucket_parallel.py.

To call this, I run the following using the same DEMO_Bucket and directory of files I used in my previous posts.

python bucket_parallel.py DEMO_Bucket /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/

This creates the following output. It took between 3.3 and 4.6 seconds to upload the 13 files, based on my connection.

[15:29~/Dropbox]> python bucket_parallel.py DEMO_Bucket /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/

Bucket DEMO_Bucket exists.

Starting 0 upload for /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/thumbnail_IMG_2336.jpg

Starting 1 upload for /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/2017-08-31 19.46.42.jpg

Starting 2 upload for /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/thumbnail_IMG_2333.jpg

Starting 3 upload for /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/347397087_620984963239631_2131524631626484429_n.jpg

Starting 4 upload for /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/thumbnail_IMG_1712.jpg

Starting 5 upload for /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/thumbnail_IMG_1711.jpg

Starting 6 upload for /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/2017-11-22 20.18.58.jpg

Starting 7 upload for /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/thumbnail_IMG_1710.jpg

Starting 8 upload for /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/2018-12-03 11.04.57.jpg

Starting 9 upload for /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/IMG_6779.jpg

Starting 10 upload for /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/oug_ire18_1.jpg

Starting 11 upload for /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/2017-10-16 13.13.20.jpg

Starting 12 upload for /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/IMG_2347.jpg

. Finished 2 uploading /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/thumbnail_IMG_2333.jpg in 0.752561092376709 seconds

. Finished 5 uploading /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/thumbnail_IMG_1711.jpg in 0.7750208377838135 seconds

. Finished 4 uploading /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/thumbnail_IMG_1712.jpg in 0.7535321712493896 seconds

. Finished 0 uploading /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/thumbnail_IMG_2336.jpg in 0.8419861793518066 seconds

. Finished 7 uploading /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/thumbnail_IMG_1710.jpg in 0.7582859992980957 seconds

. Finished 10 uploading /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/oug_ire18_1.jpg in 0.8714470863342285 seconds

. Finished 12 uploading /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/IMG_2347.jpg in 0.8753311634063721 seconds

. Finished 1 uploading /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/2017-08-31 19.46.42.jpg in 1.2201581001281738 seconds

. Finished 11 uploading /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/2017-10-16 13.13.20.jpg in 1.2848408222198486 seconds

. Finished 3 uploading /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/347397087_620984963239631_2131524631626484429_n.jpg in 1.325110912322998 seconds

. Finished 9 uploading /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/IMG_6779.jpg in 1.6633048057556152 seconds

. Finished 8 uploading /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/2018-12-03 11.04.57.jpg in 1.8549730777740479 seconds

. Finished 6 uploading /Users/brendan.tierney/Dropbox/OCI-Vision-Images/Blue-Peter/2017-11-22 20.18.58.jpg in 2.018144130706787 seconds

---

Finished uploading all files (13) in 3.9126579761505127 seconds

Oracle Object Storage – Downloading and Deleting

In my previous posts on using Object Storage I illustrated what you needed to do to set up your connection, explore Object Storage, create Buckets and add files. In this post, I’ll show you how to download files from a Bucket, and how to delete Buckets.

- Oracle Object Storage – Setup and Explore

- Oracle Object Storage – Buckets & Loading files

- Oracle Object Storage – Downloading and Deleting

- Oracle Object Storage – Parallel Uploading

Let’s start with downloading the files in a Bucket. In my previous post, I gave some Python code and functions for creating Buckets and loading files. The Python function below downloads every object in a Bucket to a local directory, creating the directory if it does not already exist. (Deleting a Bucket is covered at the end of this post. A Bucket needs to be empty before it can be deleted, so that function checks for files and, if any exist, deletes them before proceeding with deleting the Bucket.)

Namespace needs to be defined, and you can see how that is defined by looking at my earlier posts on this topic.

def download_bucket(b, d):
    #Create the download directory if it does not already exist
    if os.path.exists(d) == True:
        print(f'{d} already exists.')
    else:
        print(f'Creating {d}')
        os.makedirs(d)

    print('Downloading Bucket:',b)
    object_list = object_storage_client.list_objects(NAMESPACE, b).data
    count = 0
    for i in object_list.objects:
        count+=1
    print(f'... {count} files')

    #Download each object, writing it to disk in 1MB chunks
    for o in object_list.objects:
        print(f'Downloading object {o.name}')
        get_obj = object_storage_client.get_object(NAMESPACE, b, o.name)
        with open(os.path.join(d,o.name), 'wb') as f:
            for chunk in get_obj.data.raw.stream(1024 * 1024, decode_content=False):
                f.write(chunk)

    print('Download Finished.')

Here’s an example of this working.

download_dir = '/Users/brendan.tierney/DEMO_BUCKET'

download_bucket(BUCKET_NAME, download_dir)

/Users/brendan.tierney/DEMO_BUCKET already exists.

Downloading Bucket: DEMO_Bucket

... 14 files

Downloading object .DS_Store

Downloading object 2017-08-31 19.46.42.jpg

Downloading object 2017-10-16 13.13.20.jpg

Downloading object 2017-11-22 20.18.58.jpg

Downloading object 2018-12-03 11.04.57.jpg

Downloading object 347397087_620984963239631_2131524631626484429_n.jpg

Downloading object IMG_2347.jpg

Downloading object IMG_6779.jpg

Downloading object oug_ire18_1.jpg

Downloading object thumbnail_IMG_1710.jpg

Downloading object thumbnail_IMG_1711.jpg

Downloading object thumbnail_IMG_1712.jpg

Downloading object thumbnail_IMG_2333.jpg

Downloading object thumbnail_IMG_2336.jpg

Download Finished.

We can also download individual files. Here’s a function to do that. It’s a simplified version of the previous function.

def download_bucket_file(b, d, f):
    print('Downloading File:',f, ' from Bucket', b)
    try:
        get_obj = object_storage_client.get_object(NAMESPACE, b, f)
        #Use a separate name for the file handle so the object name in f is not overwritten
        with open(os.path.join(d, f), 'wb') as out_file:
            for chunk in get_obj.data.raw.stream(1024 * 1024, decode_content=False):
                out_file.write(chunk)
        print('Download Finished.')
    except Exception as e:
        print('Error trying to download file. Check parameters and try again')
        print(e)

download_dir = '/Users/brendan.tierney/DEMO_BUCKET'

file_download = 'oug_ire18_1.jpg'

download_bucket_file(BUCKET_NAME, download_dir, file_download)

Downloading File: oug_ire18_1.jpg from Bucket DEMO_Bucket

Download Finished.

The final function is to delete a Bucket from your OCI account.

def delete_bucket(b_name):
    bucket_exists = check_bucket_exists(b_name)
    objects_exist = False
    if bucket_exists == True:
        print('Starting - Deleting Bucket '+b_name)
        print('... checking if objects exist in Bucket (bucket needs to be empty)')
        try:
            object_list = object_storage_client.list_objects(NAMESPACE, b_name).data
            objects_exist = True
        except Exception as e:
            objects_exist = False

        if objects_exist == True:
            print('... ... Objects exists in Bucket. Deleting these objects.')
            count = 0
            #Delete every object so the Bucket is empty
            for o in object_list.objects:
                count+=1
                object_storage_client.delete_object(NAMESPACE, b_name, o.name)

            if count > 0:
                print(f'... ... Deleted {count} objects in {b_name}')
            else:
                print(f'... ... Bucket is empty. No objects to delete.')
        else:
            print(f'... No objects to delete, Bucket {b_name} is empty')

        print(f'... Deleting bucket {b_name}')
        response = object_storage_client.delete_bucket(NAMESPACE, b_name)
        print(f'Deleted bucket {b_name}')

Before running this function, let’s do a quick check to see what Buckets I have in my OCI account.

list_bucket_counts()

Bucket name: ADW_Bucket

... num of objects : 2

Bucket name: Cats-and-Dogs-Small-Dataset

... num of objects : 100

Bucket name: DEMO_Bucket

... num of objects : 14

Bucket name: Demo

... num of objects : 210

Bucket name: Finding-Widlake-Bucket

... num of objects : 424

Bucket name: Planes-in-Satellites

... num of objects : 89

Bucket name: Vision-Demo-1

... num of objects : 10

Bucket name: root-bucket

... num of objects : 2

I’ve been using DEMO_Bucket in my previous examples and posts. We’ll use this to demonstrate the deleting of a Bucket.

delete_bucket(BUCKET_NAME)

Bucket DEMO_Bucket exists.

Starting - Deleting Bucket DEMO_Bucket

... checking if objects exist in Bucket (bucket needs to be empty)

... ... Objects exists in Bucket. Deleting these objects.

... ... Deleted 14 objects in DEMO_Bucket

... Deleting bucket DEMO_Bucket

Deleted bucket DEMO_Bucket
