Data Scientist

Data Profiling in Python

With every data analytics and data science project, one of the first tasks that everyone needs to do is to profile the data sets. Data profiling gives you an initial picture of the data set, including data distributions and relationships. It also shows what kind of data cleaning and data transformations are necessary.

Most data analytics tools and languages have some functionality available to help you. In particular, the various data science/machine learning products have this functionality built in and can do a lot of the data profiling automatically for you. But if you don’t use these tools/products, then you are probably using R and/or Python to profile your data.

With Python you will be working with the data set loaded into a Pandas data frame. From there you will use various statistical and graphing functions (and libraries) to create a data profile, and then probably a data profile report.

But one of the challenges with doing this in Python is having different coding for handling numeric and character based attributes/features. The describe function in Pandas (similar to the summary function in R) gives some statistical summaries for numeric attributes/features. A different set of functions is needed for character based attributes. The Python Package Index (https://pypi.org/) contains over 200K projects. But which ones are really useful and will help with your data science projects, especially with new projects and libraries being released on a continual basis? Knowing what is new and useful is a major challenge.

For example, the following shows loading the Titanic data set into a Pandas data frame, creating a subset and using the describe function.

import pandas as pd

df = pd.read_csv("/Users/brendan.tierney/Dropbox/4-Datasets/titanic/train.csv")

df.head(5)

df2 = df.iloc[:,[1,2,4,5,6,7,8,10,11]]

df2.head(5)

df2.describe()

You will notice the describe function has only looked at the numeric attributes.
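As a rough illustration of that split, the sketch below builds a tiny made-up data frame (the column names and values are placeholders, not the real Titanic file) and calls describe separately for the numeric and the character based columns. It assumes pandas and numpy are installed.

```python
import numpy as np
import pandas as pd

# Hypothetical stand-in for the Titanic subset: two numeric and two character columns.
toy = pd.DataFrame({
    "Age": [22.0, 38.0, 26.0, 35.0, None],
    "Fare": [7.25, 71.28, 7.92, 53.10, 8.05],
    "Sex": ["male", "female", "female", "female", "male"],
    "Embarked": ["S", "C", "S", "S", None],
})

# Default behaviour: only the numeric columns are summarised.
numeric_summary = toy.describe()

# Excluding numeric types summarises the character columns instead
# (count, unique, top and freq rather than mean, std and quartiles).
character_summary = toy.describe(exclude=[np.number])

print(numeric_summary)
print(character_summary)
```

Passing include="all" to describe returns both sets of statistics in one table, with NaN wherever a statistic does not apply to a column.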

One of those 200K+ Python libraries is called pandas_profiling. This will create a data audit report for both numeric and character based attributes. This must be good, right? Let’s take a look at what it does.

For each column the following statistics – if relevant for the column type – are presented in an interactive HTML report:

- Essentials: type, unique values, missing values

- Quantile statistics like minimum value, Q1, median, Q3, maximum, range, interquartile range

- Descriptive statistics like mean, mode, standard deviation, sum, median absolute deviation, coefficient of variation, kurtosis, skewness

- Most frequent values

- Histogram

- Correlations highlighting of highly correlated variables, Spearman, Pearson and Kendall matrices

- Missing values matrix, count, heatmap and dendrogram of missing values

The first step is to install the library. The pandas-profiling package has since been renamed to ydata-profiling, so install it under its new name.

pip3 install ydata-profiling

Now run the profile report for the same data frame (df2) created above. Importing the package adds a profile_report method to Pandas data frames.

import ydata_profiling

df2.profile_report()

The following images show screen shots of each part of the report. Click and zoom into these to see more details.

Automatic Analytics is So main stream. Not something new.

Everyone is doing advanced analytics. Right? Hmm

Everyone is talking about advanced analytics? Yes that is true.

Everyone is an expert in advanced analytics? This is so not true. Watch out for these Great Pretenders. You know what I mean! You know who I mean! Maybe you know some of them already? If not, watch out for these Great Pretenders!!!

Some people are going around talking about data mining, predictive analytics, advanced analytics, machine learning etc as if this is some new topic. Well it isn’t. It isn’t anything new and most of the techniques have been about for 10, 20, 30+ years.

Some people are saying you should only use language X or tool Y, because everything else is basically rubbish.

What we do have is a wider understanding of how to use these techniques on our various data sources.

What we have is a lot more tools that allow us to perform these tasks a lot easier, at greater speed, with more functionality and without the need to fully understand the hard core maths that is going on behind the scenes.

What we have is a lot more languages to perform these tasks and to support the vast amount of work that goes into understanding the data and preparing the data.

Something for all of us to watch out for, when we read about these topics, is what kind of problem area they are addressing. The following table illustrates the three main types or categories of Analytics. These categories are Descriptive Analytics, Predictive Analytics and Prescriptive Analytics. I think most people would agree that the Descriptive and Predictive Analytics categories are very mature at this stage. With Prescriptive Analytics we are perhaps still evolving, and a lot more work needs to be done before it becomes widespread.

Some people talk as if Predictive Analytics is some new and exciting topic. But it isn’t all that new. It has been around for the past 30+ years. If you go back over the Gartner Hype Cycle that comes out every September, Predictive Analytics is no longer being shown on this graph. The last time it appeared on the Gartner Hype Cycle was back in 2013 and it was positioned on the far right of the graph in the section called Plateau of Productivity.

So Predictive Analytics is very mature and main stream. Part of the reason that it is main stream is that Predictive Analytics has allowed for a new category of Analytics to evolve and this is Automatic Analytics.

Automatic Analytics is where Advanced and Predictive Analytics have been built into the day to day applications that are used to run our business. We do not need the hard core type of data scientists to perform various analytics on our data. Instead these tasks, once they have been defined, can be added to our applications to process, evaluate and make decisions all automatically. This is where we need the data scientists to be able to communicate with the business and work with them to solve real world business projects. This is a different type of data scientist to the “hard core” data scientist who delves into the various statistical methods, machine learning methods, data management methods, etc.

The following table extends the table given above to include Automatic Analytics, and is my own take on how and where Automatic Analytics fits.

Every time we get an insurance quote or a health insurance quote, get a “random” call from our Telco offering a free upgrade, get our loyalty card statements, get a loan from the bank, or look at or buy a book on Amazon (the list could go on and on), we are seeing examples of how predictive analytics has been automated into our everyday business applications.

But this is nothing new. When I first got into data mining/predictive analytics over 16 years ago, it was considered a common thing that certain types of companies did. What has happened in the time since and particularly in the past few years is that a lot more people are seeing the value in using it.

Before I finish off this post we can have a quick look at what Oracle has been doing in this area. They have their Advanced Analytics Option and Real-Time Decisions tools to allow data scientists to do their magic. But over the past X years (nobody can give me an exact number) they have been very, very active in building lots and lots of predictive analytics into their various business applications, particularly Fusion Apps and BI Apps.

A recent quote from Oracle highlights their aim with this:

“… products designed to close the gap between data scientists and businesses.”

Now with Oracle making a big push to the cloud, they are busy adding more and more Automatic (Predictive) Analytics into their Cloud Applications. What we need from Oracle is a clearer identification of where they have done this. Plus, with the migration of their Apps to the cloud, their Advanced Analytics Option is a core part of their Cloud platform. As they upgrade or add new features to their Cloud Apps, you will be able to get the benefit of these Automatic (Predictive) Analytics as they become available.

Part 3–Getting started with Statistics for Oracle Data Science projects

This is the Part 3 blog post on getting started with Statistics for Oracle Data Science projects.

- The first blog post in the series looked at the DBMS_STAT_FUNCS PL/SQL package, what it can be used for and I give some sample code on how to use it in your data science projects. I also give some sample code that I typically run to gather some additional stats.

- The second blog post will look at some of the other statistical functions that exist in SQL that you will/may use regularly in your data science projects.

- The third blog post will provide a summary of the other statistical functions that exist in the database.

The table below is a collection of most of the statistical functions in Oracle 11.2. The links in the table bring you to the relevant section of the Oracle documentation where you will find a description of each function, the syntax and some examples of each.

The list above may not be complete (I’m sure it is not), but it will cover most of what you will need to use in your Oracle projects.

If you come across or know of other useful statistical functions in Oracle let me know the details and I will update the table above to include them.

Part 2–Getting started with Statistics for Oracle Data Science projects

This is the second blog on getting started with Statistics for Oracle Data Science projects.

- The first blog post in the series looked at the DBMS_STAT_FUNCS PL/SQL package, what it can be used for and I give some sample code on how to use it in your data science projects. I also give some sample code that I typically run to gather some additional stats.

- The second blog post will look at some of the other statistical functions that exist in SQL that you will/may use regularly in your data science projects.

- The third blog post will provide a summary of the other statistical functions that exist in the database.

In this blog post I will look at 3 more useful statistical functions that are available in the Oracle database. Remember these come as standard with the database. The first function I will look at is the WIDTH_BUCKET function, which can be used to create histograms of the data. A common task in analytics projects is to produce cross tabs of the data, and for this Oracle has the STATS_CROSSTAB function. Finally I will look at the different ways you can sample the data.

Histograms using WIDTH_BUCKET

When exploring your data it is useful to group values together into a number of buckets. Typically you might want to define the width of each bucket yourself before passing the data into your data mining tools, but before you can decide what these are you need to do some exploring using a variety of widths. A good way to do this is to use the WIDTH_BUCKET function. This takes the following inputs:

Expression: This is the expression or attribute on which you want to build the histogram.

Min Value: This is the lower or starting value of the first bucket

Max Value: This is the last or highest value for the last bucket

Num Buckets: This is the number of buckets you want created.

Typically the Min Value and the Max Value can be calculated using the MIN and MAX functions. As a starting point you generally would select 10 for the number of buckets. This is the number you will change, downwards as well as upwards, to see if a particular pattern exists in the attribute.

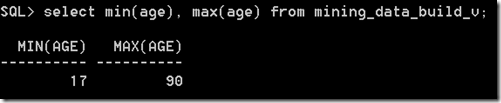

Using the example scenario that I used in the first blog post, let us start by calculating the MIN and MAX for the AGE attribute.

Let’s say that we wanted to create 10 buckets. With a minimum AGE of 17 and a maximum of 90, this would create a bucket width of (90 - 17) / 10 = 7.3 for each bucket, giving us the following.

Bucket 1: 17-24.3

Bucket 2: 24.3-31.6

Bucket 3: 31.6-38.9

Bucket 4: 38.9-46.2

Bucket 5: 46.2-53.5

Bucket 6: 53.5-60.8

Bucket 7: 60.8-68.1

Bucket 8: 68.1-75.4

Bucket 9: 75.4-82.7

Bucket 10: 82.7-90
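The bucket arithmetic can be checked outside the database with a few lines of Python. This is only a sketch of equal-width bucketing in the style of WIDTH_BUCKET, not Oracle's implementation; the helper names width_bucket and bucket_edges are my own, and the 17 to 90 range is taken from the list above.

```python
import math

def width_bucket(value, low, high, num_buckets):
    """Equal-width bucket number, mimicking WIDTH_BUCKET for low < high.

    Values below `low` land in bucket 0 and values at or above `high`
    land in the overflow bucket `num_buckets + 1`.
    """
    if value < low:
        return 0
    if value >= high:
        return num_buckets + 1
    return math.floor((value - low) * num_buckets / (high - low)) + 1

def bucket_edges(low, high, num_buckets):
    """Lower edge of every bucket plus the final upper edge, rounded to 1 decimal."""
    width = (high - low) / num_buckets
    return [round(low + i * width, 1) for i in range(num_buckets + 1)]

edges = bucket_edges(17, 90, 10)
print(edges)

# The maximum value itself falls into the overflow bucket unless the
# upper bound is nudged upwards, for example by adding 1 to it.
print(width_bucket(90, 17, 90, 10))
print(width_bucket(90, 17, 91, 10))
```

Changing num_buckets and re-running is a quick way to try out different bucket widths before settling on one.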

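These are approximately the buckets that the WIDTH_BUCKET function gives us in the following query. Note that the query passes max(age)+1 as the upper bound, because WIDTH_BUCKET puts any value equal to or greater than the upper bound into an overflow bucket (number of buckets + 1). Adding 1 keeps the maximum age in bucket 10, at the cost of making each bucket marginally wider.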

SELECT cust_id,

age,

width_bucket(age,

(SELECT min(age) from mining_data_build_v),

(select max(age)+1 from mining_data_build_v),

10) bucket

from mining_data_build_v

where rownum <=12

group by cust_id, age

To plot the histogram for AGE we need one additional level of detail: we need to aggregate all the records by bucket.

select intvl, count(*) freq

from (select width_bucket(age,

(select min(age) from mining_data_build_v),

(select max(age)+1 from mining_data_build_v), 10) intvl

from mining_data_build_v)

group by intvl

order by intvl;
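The same aggregation, a bucket number per record followed by a count per bucket, can be sketched in Python. The ages below are made-up values, and bucket_frequencies is a hypothetical helper that copies the max+1 upper bound used in the query.

```python
import math
from collections import Counter

def bucket_frequencies(values, num_buckets=10):
    """Count how many values fall into each equal-width bucket."""
    low = min(values)
    high = max(values) + 1  # same idea as max(...)+1 in the SQL above
    counts = Counter(
        math.floor((v - low) * num_buckets / (high - low)) + 1 for v in values
    )
    # Sort by bucket number, like ORDER BY intvl.
    return dict(sorted(counts.items()))

ages = [17, 20, 25, 40, 90]  # made-up sample values
frequencies = bucket_frequencies(ages)
for bucket, freq in frequencies.items():
    print(bucket, freq)
```

As with the GROUP BY in the query, buckets that contain no records simply do not appear in the result.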

We can take this code and embed it into the GATHER_DATA_STATS procedure that I gave in my Part 1 blog post.

Cross Tabs using STATS_CROSSTAB

Cross tabulation (or crosstabs for short) is a statistical process that summarises categorical data to create a contingency table. It provides a basic picture of the interrelation between two variables and can help find interactions between them.

Because Crosstabs creates a row for each value in one variable and a column for each value in the other, the procedure is not suitable for continuous variables that assume many values.

In Oracle we can perform crosstabs using one of their reporting tools. But if you don’t have one of these you will need to use the in-database function STATS_CROSSTAB. This function takes three parameters: the first two are the attributes you want to compare and the third is the test you want to perform. The tests available include:

- CHISQ_OBS: Observed value of chi-squared

- CHISQ_SIG: Significance of observed chi-squared

- CHISQ_DF: Degree of freedom for chi-squared

- PHI_COEFFICIENT: Phi coefficient

- CRAMERS_V: Cramer’s V statistic

- CONT_COEFFICIENT: Contingency coefficient

- COHENS_K: Cohen’s kappa

CHISQ_SIG is the default.
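To make some of the statistics in that list concrete, here is a small Python sketch that builds a contingency table from pairs of categorical values and computes the observed chi-squared, its degrees of freedom, the phi coefficient and Cramer's V using the textbook formulas. It is not how Oracle computes them internally, the data is invented, crosstab_stats is a hypothetical helper, and it assumes each variable has at least two distinct values.

```python
import math
from collections import Counter

def crosstab_stats(pairs):
    """Contingency table and chi-squared based statistics for (a, b) pairs."""
    counts = Counter(pairs)
    rows = sorted({a for a, _ in pairs})
    cols = sorted({b for _, b in pairs})
    n = len(pairs)
    row_totals = {r: sum(counts[(r, c)] for c in cols) for r in rows}
    col_totals = {c: sum(counts[(r, c)] for r in rows) for c in cols}

    # Observed chi-squared: sum of (observed - expected)^2 / expected over all cells.
    chisq = 0.0
    for r in rows:
        for c in cols:
            expected = row_totals[r] * col_totals[c] / n
            chisq += (counts[(r, c)] - expected) ** 2 / expected

    smaller_dim = min(len(rows), len(cols)) - 1
    return {
        "table": {r: [counts[(r, c)] for c in cols] for r in rows},
        "chisq_obs": chisq,
        "chisq_df": (len(rows) - 1) * (len(cols) - 1),
        "phi_coefficient": math.sqrt(chisq / n),
        "cramers_v": math.sqrt(chisq / (n * smaller_dim)),
    }

# Invented data: gender against a yes/no flag, 100 records in total.
pairs = ([("F", "N")] * 10 + [("F", "Y")] * 20 +
         [("M", "N")] * 30 + [("M", "Y")] * 40)
result = crosstab_stats(pairs)
print(result["table"])
print(round(result["chisq_obs"], 4), result["chisq_df"])
```

For a 2 x 2 table like this one, the phi coefficient and Cramer's V are the same number.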

Now let us look at some examples using our same data set.

Sampling Data

When our data sets are relatively small, consisting of a few hundred thousand records, we can explore the data in a relatively short period of time. But if your data sets are larger than that you may need to explore the data by taking a sample of it. Sampling takes a “random” selection of records from our data set, up to the number of records we have specified for the sample.

In Oracle the SAMPLE clause takes a percentage figure. This is the percentage of the entire data set you want to have in the sampled result.

There is also a variant called SAMPLE BLOCK, which samples random data blocks rather than random rows; the figure given is then the percentage of blocks to select.

Each time you use SAMPLE without a seed, Oracle will generate a random seed number for it. If you omit the Seed number (like in the above examples), you will get a different result set in each case and the result set will have a slightly different number of records. If you run the sample code above over and over again you will see that the number of records returned varies by a small amount.

If you would like to have the same Sample data set returned each time then you will need to specify a Seed value. The Seed must be an integer between 0 and 4294967295.

In this case because we have specified the Seed we get the same “random” records being returned with each execution.
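The behaviour described in this section, a row count that varies from run to run without a seed and identical rows with one, can be mimicked in Python. This is a sketch of simple per-row random sampling, not Oracle's actual algorithm; sample_rows and the seed value 124 are my own placeholders.

```python
import random

def sample_rows(rows, percent, seed=None):
    """Keep each row independently with probability percent/100."""
    rng = random.Random(seed)  # seed=None picks a fresh seed on every call
    return [row for row in rows if rng.random() * 100 < percent]

rows = list(range(10_000))  # stand-in for the records in a table

# Without a seed: roughly 20% of the rows, but a different set each time.
unseeded_a = sample_rows(rows, 20)
unseeded_b = sample_rows(rows, 20)
print(len(unseeded_a), len(unseeded_b))

# With a seed: the same "random" rows on every execution.
seeded_a = sample_rows(rows, 20, seed=124)
seeded_b = sample_rows(rows, 20, seed=124)
print(seeded_a == seeded_b)
```

Because every row is kept or dropped independently, the sample size is only approximately the requested percentage, which matches the small variation in record counts described above.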

Part 1–Getting started with Statistics for Oracle Data Science projects

With all analytics or data science projects one of the first steps typically involves the extraction of data from various sources, merging the data and then performing various statistics.

The extraction and merging of the data is well covered by lots of other people blogging about how to use Oracle Data Integration (ODI), Oracle Warehouse Builder (OWB), among many others.

What I’m going to look at in this series of blog posts is what statistical functions you might look at using in the Oracle database and how to use them.

- This, the first blog post in the series, will look at the DBMS_STAT_FUNCS PL/SQL package, what it can be used for and I give some sample code on how to use it in your data science projects. I also give some sample code that I typically run to gather some additional stats.

- The second blog post will look at some of the other statistical functions that exist in SQL that you will/may use regularly in your data science projects.

- The third blog post will provide a summary of the other statistical functions that exist in the database.

These statistical functions can be grouped into 2 main types. The first is the descriptive statistics that are available by using the DBMS_STAT_FUNCS PL/SQL package and then there is the extensive list of other SQL stats functions. It is worth mentioning at this point that all these stats packages and functions come as standard in the database (i.e. they are FREE, you do not have to pay for an add on option for the database to use them). So once you have your Oracle database installed you can start using them. There is no need to spend money buying another stats package to do stats. All you need to know is some SQL and what the stats functions are.

DBMS_STAT_FUNCS

One of the stats functions that I use a lot is the SUMMARY function. This is part of the DBMS_STAT_FUNCS PL/SQL package. It calculates a number of common statistics for an attribute in a table. Yes that’s correct, it only gathers statistics for one attribute at a time. So you will have to run it for each of the numeric attributes in the table.

For those people who are familiar with the Oracle Data Miner tool, the Explore Data node produces a lot of the statistics that the SUMMARY function produces. See below for details of how to produce the Histograms.

The SUMMARY function has the following parameters

Although you will probably be running this function on the data in your own schema, you still have to give the schema name. The table name is the name of the table where the data exists, the column name is the name of the column that contains the actual data you want to analyse, the sigma value (3, the default, in the example that follows) is used when identifying extreme values, and the ‘s’ is the record returned by the SUMMARY function that contains all the summary information.

An example of the basic script to run the SUMMARY function is given below. It will use the data that is available in the sample schemas and the views that were set up for the Oracle Data Mining sample schemas. The table (or in this case the view) that we are going to use is the MINING_DATA_BUILD_V. What we are going to do is to replicate some of what the Explore Node does in the Oracle Data Miner tool.

set serveroutput on

declare

s DBMS_STAT_FUNCS.SummaryType;

begin

DBMS_STAT_FUNCS.SUMMARY('DMUSER', 'MINING_DATA_BUILD_V', 'AGE', 3, s);

dbms_output.put_line('SUMMARY STATISTICS');

dbms_output.put_line('Count : '||s.count);

dbms_output.put_line('Min : '||s.min);

dbms_output.put_line('Max : '||s.max);

dbms_output.put_line('Range : '||s.range);

dbms_output.put_line('Mean : '||round(s.mean));

dbms_output.put_line('Mode Count : '||s.cmode.count);

dbms_output.put_line('Mode : '||s.cmode(1));

dbms_output.put_line('Variance : '||round(s.variance));

dbms_output.put_line('Stddev : '||round(s.stddev));

dbms_output.put_line('Quantile 5 : '||s.quantile_5);

dbms_output.put_line('Quantile 25 : '||s.quantile_25);

dbms_output.put_line('Median : '||s.median);

dbms_output.put_line('Quantile 75 : '||s.quantile_75);

dbms_output.put_line('Quantile 95 : '||s.quantile_95);

dbms_output.put_line('Extreme Count : '||s.extreme_values.count);

dbms_output.put_line('Extremes : '||s.extreme_values(1));

dbms_output.put_line('Top 5 : '||s.top_5_values(1)||','||

s.top_5_values(2)||','||

s.top_5_values(3)||','||

s.top_5_values(4)||','||

s.top_5_values(5));

dbms_output.put_line('Bottom 5 : '||s.bottom_5_values(5)||','||

s.bottom_5_values(4)||','||

s.bottom_5_values(3)||','||

s.bottom_5_values(2)||','||

s.bottom_5_values(1));

end;

/

We can compare this to what is produced by the Explore Node in ODM

We can see that the Explore Node gives us more statistics to help us with understanding the data.

What Statistics does the Explore Node produce

We can see the actual SQL code that the Explore Node runs to get the statistics that are displayed in the Explore Node View Data window. To do this, right-click on the Explore Node and move the mouse down to the Deploy option. When the submenu opens, select ‘SQL to Clipboard’ from the list. Open a text editor and paste the code. You will need to tidy up some of this code to point it at the actual data source you want. You will get the following:

SELECT /*+ inline */ ATTR,

DATA_TYPE,

NULL_PERCENT,

DISTINCT_CNT,

DISTINCT_PERCENT,

MODE_VALUE,

AVG,

MIN,

MAX,

STD,

VAR,

SKEWNESS,

KURTOSIS,

HISTOGRAMS

FROM OUTPUT_1_23;

Here OUTPUT_1_23 is a working table that ODM has created to store intermediate results from some of its processing, in this case for the Explore Node. You will need to change this to the ODM working table in your schema.

This query does not perform any of the statistics gathering. It just presents the results.

Creating our own Statistics gathering script – Part 1

The attribute names in the above SQL query tell us which statistics functions within Oracle were used. We can replicate this statistics gathering task using the following script. There are four parts to this script. The first part gathers most of the common statistics for the attribute. The second and third parts calculate the Skewness and Kurtosis for the attribute. The final (fourth) part combines the first three parts and lists the outputs.

The one statistic function that we are not including at this point is the Histogram information. I will cover this in the next (second) blog post on statistics.

The following script has the data source table/view name included (MINING_DATA_BUILD_V) and the attribute we are going to use (AGE). You will need to modify this script to run it for each attribute.

WITH

basic_statistics AS (select (sum(CASE WHEN age IS NULL THEN 1 ELSE 0 END)/COUNT(*))*100 null_percent,

count(*) num_value,

count(distinct age) distinct_count,

(count(distinct age)/count(*))*100 distinct_percent,

avg(age) avg_value,

min(age) min_value,

max(age) max_value,

stddev(age) std_value,

stats_mode(age) mode_value,

variance(age) var_value

from mining_data_build_v),

skewness AS (select avg(SV) S_value

from (select power((age - avg(age) over ())/stddev(age) over (), 3) SV

from mining_data_build_v) ),

kurtosis AS (select avg(KV) K_value

from (select power((age - avg(age) over ())/stddev(age) over (), 4) KV

from mining_data_build_v) )

SELECT null_percent,

num_value,

distinct_percent,

avg_value,

min_value,

max_value,

std_value,

mode_value,

var_value,

S_value,

K_value

from basic_statistics,

skewness,

kurtosis;
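The skewness and kurtosis parts of the script boil down to averaging the third and fourth powers of the standardised values. A small Python sketch of that same calculation is given below for checking results by hand. It uses the sample standard deviation to match STDDEV, the function name is my own, and other tools may use slightly different (bias-corrected) formulas and so give different numbers.

```python
import statistics

def skewness_and_kurtosis(values):
    """Average of z^3 and z^4, where z = (value - mean) / sample std dev."""
    mean = statistics.fmean(values)
    std = statistics.stdev(values)  # sample standard deviation (n - 1)
    z_scores = [(v - mean) / std for v in values]
    skewness = statistics.fmean(z ** 3 for z in z_scores)
    kurtosis = statistics.fmean(z ** 4 for z in z_scores)
    return skewness, kurtosis

# A perfectly symmetric made-up sample, so the skewness should be zero.
s_value, k_value = skewness_and_kurtosis([1, 2, 3, 4, 5])
print(round(s_value, 4), round(k_value, 4))
```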

Part 2 – Let’s do it for all the attributes in a table

In the code above I’ve shown how you can gather the statistics for one particular attribute of one table. But with an analytics project you will want to gather the statistics on all the attributes.

What we can do is to take the code above and put it into a procedure. This procedure accepts a table name as input, loops through the attributes for this table and calculates the various statistics. The statistics are saved in a table called DATA_STATS (see below).

drop table data_stats;

create table DATA_STATS (

table_name VARCHAR2(30) NOT NULL,

column_name VARCHAR2(30) NOT NULL,

data_type VARCHAR2(106) NOT NULL,

data_length NUMBER,

data_precision NUMBER,

data_scale NUMBER,

num_records NUMBER,

null_percent NUMBER,

distinct_count NUMBER,

distinct_percent NUMBER,

avg_value NUMBER,

min_value NUMBER,

max_value NUMBER,

std_value NUMBER,

mode_value VARCHAR2(1000),

var_value NUMBER,

s_value NUMBER,

k_value NUMBER,

PRIMARY KEY (table_name, column_name));

This is one of the first things that I do when I start on a new project. I create the DATA_STATS table and run my procedure GATHER_DATA_STATS for each table that we will be using. Doing this gives me a permanent record of the stats for each attribute and saves me time in having to rerun various stats at different points of the project. I can also use these stats to produce some additional stats or to produce some graphs.

Here is the code for the GATHER_DATA_STATS procedure.

CREATE OR REPLACE PROCEDURE gather_data_stats(p_table_name IN varchar2) AS

cursor c_attributes (c_table_name varchar2)

is SELECT table_name,

column_name,

data_type,

data_length,

data_precision,

data_scale

FROM user_tab_columns

WHERE table_name = upper(c_table_name);

v_sql NUMBER;

v_rows NUMBER;

BEGIN

dbms_output.put_line('Starting to gather statistics for '||upper(p_table_name)||' at '||to_char(sysdate,'DD-MON-YY HH24:MI:SS'));

FOR r_att in c_attributes(p_table_name) LOOP

--

-- remove any previously generated stats

--

v_sql := DBMS_SQL.OPEN_CURSOR;

DBMS_SQL.PARSE(v_sql, 'delete from DATA_STATS where table_name = '''||r_att.table_name||''' and column_name = '''||r_att.column_name||'''', DBMS_SQL.NATIVE);

v_rows := DBMS_SQL.EXECUTE(v_sql);

-- close each cursor once it has been executed so they do not accumulate

DBMS_SQL.CLOSE_CURSOR(v_sql);

--dbms_output.put_line('delete from DATA_STATS where table_name = '''||r_att.table_name||''' and column_name = '''||r_att.column_name||'''');

IF r_att.data_type = 'NUMBER' THEN

dbms_output.put_line(r_att.table_name||' : '||r_att.column_name||' : '||r_att.data_type);

--

-- setup the insert statement and execute

--

v_sql := DBMS_SQL.OPEN_CURSOR;

DBMS_SQL.PARSE(v_sql, 'insert into data_stats select '''||r_att.table_name||''', '''||r_att.column_name||''', '''||r_att.data_type||''', '||r_att.data_length||', '||nvl(r_att.data_precision,0)||', '||nvl(r_att.data_scale,0)||', count(*) num_value, (sum(CASE WHEN '||r_att.column_name||' IS NULL THEN 1 ELSE 0 END)/COUNT(*))*100 null_percent, count(distinct '||r_att.column_name||') distinct_count, (count(distinct '||r_att.column_name||')/count(*))*100 distinct_percent, avg('||r_att.column_name||') avg_value, min('||r_att.column_name||') min_value, max('||r_att.column_name||') max_value, stddev('||r_att.column_name||') std_value, stats_mode('||r_att.column_name||') mode_value, variance('||r_att.column_name||') var_value, null, null from '|| r_att.table_name, DBMS_SQL.NATIVE);

v_rows := DBMS_SQL.EXECUTE(v_sql);

DBMS_SQL.CLOSE_CURSOR(v_sql);

ELSIF r_att.data_type IN ('CHAR', 'VARCHAR', 'VARCHAR2') THEN

dbms_output.put_line(r_att.table_name||' : '||r_att.column_name||' : '||r_att.data_type);

--

-- We need to gather a smaller number of stats for the character attributes

--

v_sql := DBMS_SQL.OPEN_CURSOR;

begin

DBMS_SQL.PARSE(v_sql, 'insert into data_stats select '''||r_att.table_name||''', '''||r_att.column_name||''', '''||r_att.data_type||''', '||r_att.data_length||', '||nvl(r_att.data_precision,0)||', '||nvl(r_att.data_scale,0)||', count(*) num_value, (sum(CASE WHEN '||r_att.column_name||' IS NULL THEN 1 ELSE 0 END)/COUNT(*))*100 null_percent, count(distinct '||r_att.column_name||') distinct_count, (count(distinct '||r_att.column_name||')/count(*))*100 distinct_percent, null, null, null, null, stats_mode('||r_att.column_name||') mode_value, null, null, null from '|| r_att.table_name, DBMS_SQL.NATIVE);

v_rows := DBMS_SQL.EXECUTE(v_sql);

-- dbms_output.put_line('insert into data_stats select '''||r_att.table_name||''', '''||r_att.column_name||''', '''||r_att.data_type||''', '||r_att.data_length||', '||nvl(r_att.data_precision,0)||', '||nvl(r_att.data_scale,0)||', count(*) num_value, (sum(CASE WHEN '||r_att.column_name||' IS NULL THEN 1 ELSE 0 END)/COUNT(*))*100 null_percent, count(distinct '||r_att.column_name||') distinct_count, (count(distinct '||r_att.column_name||')/count(*))*100 distinct_percent, null, null, null, null, stats_mode('||r_att.column_name||') mode_value, null, null, null from '|| r_att.table_name);

exception

when others then

-- report which column failed and why, then carry on with the next one

dbms_output.put_line('Error gathering statistics for '||r_att.column_name||' : '||SQLERRM);

end;

DBMS_SQL.CLOSE_CURSOR(v_sql);

ELSE

dbms_output.put_line('Unable to gather statistics for '||r_att.column_name||' with data type of '||r_att.data_type||'.');

END IF;

END LOOP;

dbms_output.put_line('Finished gathering statistics for '||upper(p_table_name)||' at '||to_char(sysdate,'DD-MON-YY HH24:MI:SS'));

commit;

END;

/

Then to run it for a table:

exec gather_data_stats('mining_data_build_v');

We can view the contents of the DATA_STATS table by executing the following in SQL*Plus or SQL Developer:

select * from DATA_STATS;
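For readers who profile their data in Python rather than in the database, the same loop-over-every-attribute idea can be sketched as follows. This is only an illustration of the approach and not a port of GATHER_DATA_STATS: profile_columns is a hypothetical helper, the rows are invented, and the statistic names are borrowed from the DATA_STATS columns.

```python
import statistics

def profile_columns(rows):
    """rows: list of dicts that share the same keys. Returns {column: stats}."""
    stats = {}
    for column in rows[0]:
        values = [row[column] for row in rows]
        present = [v for v in values if v is not None]
        entry = {
            "num_records": len(values),
            "null_percent": 100 * (len(values) - len(present)) / len(values),
            "distinct_count": len(set(present)),
            "distinct_percent": 100 * len(set(present)) / len(values),
            "mode_value": statistics.mode(present) if present else None,
        }
        # Numeric attributes get the extra statistics; character ones do not.
        if present and all(isinstance(v, (int, float)) for v in present):
            entry["avg_value"] = statistics.fmean(present)
            entry["min_value"] = min(present)
            entry["max_value"] = max(present)
            if len(present) > 1:
                entry["std_value"] = statistics.stdev(present)
                entry["var_value"] = statistics.variance(present)
        stats[column] = entry
    return stats

# Invented records with one numeric and one character attribute.
rows = [
    {"age": 30, "gender": "F"},
    {"age": 40, "gender": "M"},
    {"age": None, "gender": "F"},
    {"age": 30, "gender": None},
]
data_stats = profile_columns(rows)
for column, entry in data_stats.items():
    print(column, entry)
```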

Type I and Type II Data Scientists

Over the past 18 months we have seen a significant increase in the use of the term Data Scientist. Maybe it is because the HBR and many other publications have been promoting it.

Yes, the areas of statistics and predictive analytics have evolved to include a lot more techniques and technologies.

Unfortunately the term Data Scientist has been overused and a lot of people have joined in with the Marketing hype. There are reports of organisations hiring a data scientist only to fire them within a few months because they did not deliver anything useful. Data Science is not some silver bullet for an organization’s problems and data science may not deliver anything useful, but in the vast majority of cases it will.

One thing that has been emerging over the past few weeks is that there seem to be two main types of Data Scientist. There are the Data Scientists who perform certain tasks or are focused on specific technologies. Then there are the Data Scientists who are not as technical as the previous group but are focused on how they can use the technologies to deliver business benefit. I like to call these Type I and Type II Data Scientists.

The Type I Data Scientist

This is perhaps the most common type of Data Scientist we see around, or the most common type of person who is calling themselves a Data Scientist. These are people who know a lot about and are really good at a technique or technology that is associated with Data Science. Some of these would be the “old school” type of people and include:

- Statisticians

- Data Miners

- Predictive Modellers

- Machine Learning

- Data Warehousing

- Business Intelligence & Visualization

- Big Data

- R / Oracle / SAS / SPSS / etc.

The people in each of these have a deep knowledge of their topic and can tell/show you lots of detail about how best to explore data in their given field.

Yes, you don’t have to have a Stats background to call yourself a Data Scientist, but some knowledge of Stats would be useful (you don’t need a PhD or Master’s).

The Type II Data Scientist

A Type II Data Scientist is a slightly different breed of person. They would have a little bit of knowledge of some or all of the areas listed under the Type I Data Scientist, but would not have the depth of knowledge of a topic that a Type I Data Scientist would have.

The Type II Data Scientist approaches the types of problems that organisations are facing in a different way. They will concentrate on the business goals and business problems that the organisation is facing. Based on these they will identify what the data science project will focus on, ensuring that there is a measurable outcome and business goal. The Type II Data Scientist will be a good communicator, being able to translate between the business problem and the technical environment necessary to deliver what is needed. During the project the data science team will discover various insights about the data. The Type II Data Scientist will prioritise these and feed them back to the various business units. These insights can range from something new, to verifying business knowledge or beliefs, to areas where better data capture is needed, improvements in applications, etc.

The Type II Data Scientist would be the Data Science team leader within the organisation that manages the Type I Data Scientists, keeping them focused on the key deliverables of delivering measurable business benefits.

I really like the following phrase that I have come across recently:

“We haven’t learned how to handle small data well, let alone throw big data on there.”

Data Science is not about Big Data. There is much more an organization can do with Data Science without having to get involved with Big Data. This is where the skills of the Type II Data Scientist are important, as they can direct the managers of an organization to focus on their real data problems and not get carried away with some of the marketing hype. When the time is right they will look at incorporating typical big data problems within their existing analytical environment.

One thing is for sure. The definition of “what is a” Data Scientist is still evolving. But there does seem to be some consensus that corresponds to the separation of the Type I and Type II Data Scientist roles.
