Formatting results from ORE script in a SELECT statement

This blog post looks at how to format the output returned from an Oracle R Enterprise (ORE) user defined R function that is run using a SELECT statement in SQL.

Sometimes this can be a bit of a challenge to work out, but it can be relatively easy once you have figured out how to do it. The following examples work through some scenarios of different result sets from a user defined R function that is stored in the Oracle Database.

To run that user defined R function using a SELECT statement I can use one of the following ORE SQL functions.

- rqEval

- rqTableEval

- “rqGroupEval“

- rqRowEval

For simplicity we will just use the first of these ORE SQL functions to illustrate the problem and how to go about solving it. The rqEval ORE SQL function is a general purpose function to call a user defined R script stored in the database. The function does not require any input data set, but it will return some data. You could use this to generate some dummy/test data or to find some information in the database. Here is a noddy example that returns my name.

BEGIN

--sys.rqScriptDrop('GET_NAME');

sys.rqScriptCreate('GET_NAME',

'function() {

res<-data.frame("Brendan")

res

} ');

END;

To call this user defined R function I can use the following SQL.

select *

from table(rqEval(null,

'select cast(''a'' as varchar2(50)) from dual',

'GET_NAME') );

For text strings returned you need to cast the returned value giving a size.

If a numeric value is being returned we don’t have to use the cast and can instead use ‘1’, as shown in the following example. This second example extends our user defined R function to return my name and a number.

BEGIN

sys.rqScriptDrop('GET_NAME');

sys.rqScriptCreate('GET_NAME',

'function() {

res<-data.frame(NAME="Brendan", YEAR=2017)

res

} ');

END;

To call the updated GET_NAME function we now have to process two returned columns. The first is the character string and the second is a numeric.

select *

from table(rqEval(null,

'select cast(''a'' as varchar2(50)) as "NAME", 1 AS YEAR from dual',

'GET_NAME') );

These examples illustrate how you can process character strings and numerics being returned by the user defined R script.

The key to setting up the format of the returned values is knowing the structure of the data frame being returned by the user defined R script. Once you know that the rest is (in theory) easy.
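If you do not yet know the structure of the data frame, one way to inspect it is to ask rqEval for XML output instead of describing the columns. This is only a minimal sketch, assuming the GET_NAME script from the examples above already exists. Passing 'XML' as the second argument returns the result as an XML document rather than as typed columns.

```sql
-- Return whatever data frame GET_NAME produces as XML,
-- so no column list or CAST has to be supplied up front
select *
from table(rqEval(null,
                  'XML',
                  'GET_NAME'));
```

Once you can see the column names and types in the XML, you can write the matching 'select ... from dual' definition to get the result back as ordinary columns.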

Explicit Semantic Analysis setup using SQL and PL/SQL

In my previous blog post I introduced the new Explicit Semantic Analysis (ESA) algorithm and gave an example of how you can build an ESA model and use it. Check out this link for that blog post.

In this blog post I will show you how you can manually create an ESA model. The reason that I’m showing you this way is that the workflow (in ODMr and its scheduler) may not be for everyone. You may want to automate the creation or recreation of the ESA model from time to time based on certain business requirements.

In my previous blog post I showed how you can set up a training data set. This comes with ODMr 4.2 but you may need to expand this data set or use an alternative data set that is more in keeping with your domain.

Setup the ODM Settings table

As with all ODM algorithms we need to create a settings table. This settings table allows us to store the various parameters, and their values, that will be used by the algorithm.

-- Create the settings table

CREATE TABLE ESA_settings (

setting_name VARCHAR2(30),

setting_value VARCHAR2(30));

-- Populate the settings table

-- Specify ESA. By default, Naive Bayes is used for classification.

-- Specify ADP. By default, ADP is not used. Need to turn this on.

BEGIN

INSERT INTO ESA_settings (setting_name, setting_value)

VALUES (dbms_data_mining.algo_name,

dbms_data_mining.algo_explicit_semantic_analys);

INSERT INTO ESA_settings (setting_name, setting_value)

VALUES (dbms_data_mining.prep_auto,dbms_data_mining.prep_auto_on);

INSERT INTO ESA_settings (setting_name, setting_value)

VALUES (dbms_data_mining.odms_sampling, dbms_data_mining.odms_sampling_disable);

commit;

END;

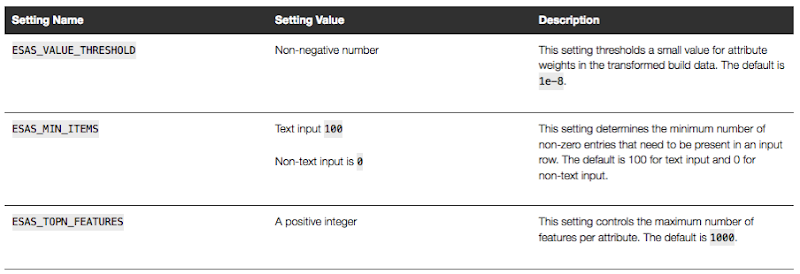

These are the minimum parameter settings needed to run the ESA algorithm. Other ESA algorithm settings are also available.

Setup the Oracle Text Policy

You also need to set up an Oracle Text Policy and a lexer for the stopwords.

DECLARE

v_policy_name varchar2(30);

v_lexer_name varchar2(30);

v_stoplist_name varchar2(100);

BEGIN

v_policy_name := 'ESA_TEXT_POLICY';

v_lexer_name := 'ESA_LEXER';

ctx_ddl.create_preference(v_lexer_name, 'BASIC_LEXER');

v_stoplist_name := 'CTXSYS.DEFAULT_STOPLIST'; -- default stop list

ctx_ddl.create_policy(policy_name => v_policy_name, lexer => v_lexer_name, stoplist => v_stoplist_name);

END;

Create the ESA model

Once we have the settings table created with the parameter values set for the algorithm and the Oracle Text policy created, we can now create the model.

To ensure that the Oracle Text Policy is applied to the text we want to analyse we need to create a transformation list and add the Text Policy to it.

We can then pass the text transformation list as a parameter to the CREATE_MODEL procedure.

DECLARE

v_xlst dbms_data_mining_transform.TRANSFORM_LIST;

v_policy_name VARCHAR2(130) := 'ESA_TEXT_POLICY';

v_model_name varchar2(50) := 'ESA_MODEL_DEMO_2';

BEGIN

v_xlst := dbms_data_mining_transform.TRANSFORM_LIST();

DBMS_DATA_MINING_TRANSFORM.SET_TRANSFORM(v_xlst, '"TEXT"', NULL, '"TEXT"', '"TEXT"', 'TEXT(POLICY_NAME:'||v_policy_name||')(MAX_FEATURES:3000)(MIN_DOCUMENTS:1)(TOKEN_TYPE:NORMAL)');

DBMS_DATA_MINING.DROP_MODEL(v_model_name, TRUE);

DBMS_DATA_MINING.CREATE_MODEL(

model_name => v_model_name,

mining_function => DBMS_DATA_MINING.FEATURE_EXTRACTION,

data_table_name => 'WIKISAMPLE',

case_id_column_name => 'TITLE',

target_column_name => NULL,

settings_table_name => 'ESA_SETTINGS',

xform_list => v_xlst);

END;

NOTE: Yes we could have merged all of the above code into one PL/SQL block.
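After the block completes, it can be worth checking that the model was created and which settings it picked up. The following is a small sketch using the standard data dictionary views for mining models. It assumes the model name ESA_MODEL_DEMO_2 used above and that you are connected as the schema that owns the model.

```sql
-- Confirm the model exists and which algorithm it was built with
SELECT model_name, mining_function, algorithm, creation_date
FROM   user_mining_models
WHERE  model_name = 'ESA_MODEL_DEMO_2';

-- List the settings that were applied to the model,
-- including any defaults that were not in the settings table
SELECT setting_name, setting_value, setting_type
FROM   user_mining_model_settings
WHERE  model_name = 'ESA_MODEL_DEMO_2'
ORDER  BY setting_name;
```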

Use the ESA model

We can now use the FEATURE_COMPARE function to use the model we just created, just like I did in my previous blog post.

SELECT FEATURE_COMPARE(ESA_MODEL_DEMO_2

USING 'Oracle Database is the best available for managing your data' text

AND USING 'The SQL language is the one language that all databases have in common' text) similarity

FROM DUAL;

Go give the ESA algorithm a go and see where you could apply it within your applications.

Next OUG Ireland Meet-up on 12th January

Our next OUG Ireland Meet-up will be on Thursday 12th January, 2017.

The theme for this meet up is DevOps and How to Migrate to the Cloud.

Come along on the night to hear about these topics and how companies in Ireland are doing these things.

Venue : Bank of Ireland, Grand Canal Dock, Dublin.

The agenda for the meet-up is:

18:00-18:20 Sign-in, meet and greet, networking, grab some refreshments, etc

18:20-18:30 : Introductions & Welcome, Agenda, what is OUG Ireland, etc.

18:30-19:00 : Dev Ops and Oracle PL/SQL development – Alan McClean

Abstract

In recent years the need to deliver changes to production as soon as possible has led to the rise of continuous delivery; continuous integration and continuous deployment. These issues have become standards in the application development, particularly for code developed in languages such as Java. However, database development has lagged behind in supporting this paradigm. There are a number of steps that can be taken to address this. This presentation examines how database changes can be delivered in a similar manner to other languages. The presentation will look at unit testing frameworks, code reviews and code quality as well as tools for managing database deployment.

19:00-19:30 : Simplifying the journey to Oracle Cloud : Decision makers across Managers, DBAs and Cloud Architects who need to progress an Oracle Cloud Engagement in the organization – Ken MacMahon, Head of Oracle Cloud Services at Version1

Abstract

The presentation will cover the 5 steps that Version 1 uses to help customers with Oracle Cloud adoption in the organisation. By attending you will hear how to deal with cloud adoption concerns, how to choose candidates for cloud migration, how to design the cloud architecture, how to use automation and agility in your Cloud adoption plans, and finally how to manage your Cloud environment.

This event is open to all. You don’t have to be a member of the user group and, best of all, it is a free event. So spread the word to all your Oracle developers, DBAs, architects, data warehousing and data visualisation people, etc.

We hope you can make it, and don’t forget to register for the event.

Oracle Data Miner 4.2 New Features

Oracle Data Miner 4.2 (part of SQL Developer 4.2) was released as an Early Adopter (EA) version a few weeks ago.

With the new/updated Oracle Data Miner (ODMr) there are a number of new features. These can be categorised as 1) features all ODMr users can use now, 2) new features that are only usable when using an Oracle 12.2 Database, and 3) updates to existing algorithms that have been exposed via the ODMr tool.

The following is a round up of the main new features you can enjoy as part of ODMr 4.2 (mainly covering points 1 and 2 above)

- You can now schedule workflows to run based on a defined schedule

- Support for additional data types (RAW, ROWID, UROWID, URITYPE)

- Better support for processing JSON data in the JSON Query node

- Additional insights are displayed as part of the Model Details View

- Additional alert monitoring and reporting

- Better support for processing in-memory data

- A new R Model node that allows you to include in-database ORE user defined R functions to support model build, model test and model apply.

- New Explicit Semantic Analysis node (Explicit Feature Extraction)

- New Feature Compare and Test nodes

- New workflow status profiling performance improvements

- Refresh the input data definition in nodes

- Attribute Filter node now allows for unsupervised attribute importance ranking

- The ability to build Partitioned data mining models

Look out for the blog posts on most of these new features over the coming months.

WARNING: Most of these new features require an Oracle 12.2 Database.

2016: A review of the year

As 2016 draws to a close I like to look back at what I have achieved over the year. Most of the following achievements are based on my work with the Oracle User Group community. I have some other achievements that are related to the day jobs (yes, I have multiple day jobs), but I won’t go into those here.

As you can see from the following, 2016 was another busy year. There was lots of writing, which I really enjoy and which I’ll be continuing with in 2017. As they say, watch this space for writing news in 2017.

Books

Yes, 2016 was a busy year for writing, and most of the latter half of 2015 and the first half of 2016 was taken up writing two books. Yes, two books. One of the books was on Oracle R Enterprise and this book complements my previously published book on Oracle Data Mining. I now have books that cover both components of the Oracle Advanced Analytics Option.

I also co-wrote a book with legends of the Oracle community. These were Arup Nanda, Martin Widlake, Heli Helskyaho and Alex Nuijten.

More news coming in 2017.

Blog Posts

One of the things I really enjoy doing is playing with various features of Oracle and then writing some blog posts about them. When writing the books I had to cut back on writing blog posts. I was lucky to be part of the 12.2 Database beta this year and over the past few weeks I’ve been playing with 12.2 in the cloud. I’ve already written a blog post or two on this and I also have an OTN article on this coming out soon. There will be more 12.2 analytics related blog posts in 2017.

In 2016 I have written 55 blog posts (including this one). This number is a little bit less when compared with previous years. I’ll blame the book writing for this. But more posts are in the works for 2017.

Articles

In 2016 I’ve written articles for OTN and for Toad World. These included:

OTN

- Oracle Advanced Analytics : Kicking the Tires/Tyres

- Kicking the Tyres of Oracle Advanced Analytics Option – Using SQL and PL/SQL to Build an Oracle Data Mining Classification Model

- Kicking the Tyres of Oracle Advanced Analytics Option – Overview of Oracle Data Miner and Build your First Workflow

- Kicking the Tyres of Oracle Advanced Analytics Option – Using SQL to score/label new data using Oracle Data Mining Models

- Setting up and configuring RStudio on the Oracle 12.2 Database Cloud Service

ToadWorld

- Introduction to Oracle R Enterprise

- ORE 1.5 – User Defined R Scripts

Conferences

- January – Yes SQL Summit, NoCOUG Winter Conference, Redwood City, CA, USA **

- January – BIWA Summit, Oracle HQ, Redwood City, CA, USA **

- March – OUG Ireland, Dublin, Ireland

- June – KScope, Chicago, USA (3 presentations)

- September – Oracle Open World (part of EMEA ACEs session) **

- December – UKOUG Tech16 & APPs16

** for these conferences the Oracle ACE Director programme funded the flights and hotels. All other expenses and other conferences I paid for out of my own pocket.

OUG Activities

I’m involved in many different roles in the user group. The UKOUG also covers Ireland (incorporating OUG Ireland), and my activities within the UKOUG included the following during 2016:

- Editor of Oracle Scene: We produced 4 editions in 2016. Thank you to all who contributed and wrote articles.

- Created the OUG Ireland Meetup. We had our first meeting in October. Our next meetup will be in January.

- OUG Ireland Committee member of TECH SIG and BI & BA SIG.

- Committee member of the OUG Ireland 2 day Conference 2016.

- Committee member of the OUG Ireland conference 2017.

- KScope17 committee member for the Data Visualization & Advanced Analytics track.

I’m sure I’ve forgotten a few things, I usually do. But it gives you a taste of some of what I got up to in 2016.

Auditing Oracle Data Mining model usage

In a previous blog post I talked about how you can rename and comment your Oracle Data Mining models. This allows you to easily see and understand the intended use of the data mining model.

Another feature available to you is to audit the usage of the data mining models. As your data mining environment grows to many tens or, more typically, hundreds of models, you will need some way of tracking their usage. This allows you to discover which models are frequently used and which are used infrequently. You can then use this information to investigate if there are any issues. In some companies I’ve seen an internal charging scheme in place for each time the models are used.

The following outlines the steps required to set up the auditing of your models and how to inspect the usage.

Note: You will need the AUDIT_ADMIN role to audit the models.

First create an audit policy for the data mining model in a particular schema.

CREATE AUDIT POLICY oaa_odm_audit_usage ACTIONS ALL ON MINING MODEL dmuser.high_value_churn_clas_svm;

This creates a policy that monitors all activity on the data mining model HIGH_VALUE_CHURN_CLAS_SVM in the DMUSER schema.

Now we need to enable the policy and allow it to track all activity on the model.

AUDIT POLICY oaa_odm_audit_usage BY oaa_model_user;

This will track all usage of the data mining model by the schema called OAA_MODEL_USER. We can then use the following query to search for the audit records for the OAA_MODEL_USER schema.

SELECT dbusername,

action_name,

system_privilege_used,

return_code,

object_schema,

object_name,

sql_text

FROM unified_audit_trail

WHERE object_name = 'HIGH_VALUE_CHURN_CLAS_SVM';
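To get from individual audit records to a picture of how often a model is used (for example, for the internal charging idea mentioned earlier), the same view can be summarised. This is a sketch rather than a finished report. It assumes the DMUSER schema and model name used above, and the column aliases are just illustrative.

```sql
-- Count audited operations on the model per user and action,
-- and show when each combination was last seen
SELECT dbusername,
       action_name,
       COUNT(*)             AS times_used,
       MAX(event_timestamp) AS last_used
FROM   unified_audit_trail
WHERE  object_schema = 'DMUSER'
AND    object_name   = 'HIGH_VALUE_CHURN_CLAS_SVM'
GROUP  BY dbusername, action_name
ORDER  BY times_used DESC;
```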

But there is a little problem with using what I’ve just shown you above. The problem is that it will track all activity on the data mining model. Perhaps this isn’t what we really want. Perhaps we want to track only certain activity on the data mining model. Instead of creating the policy using ‘ACTIONS ALL’, we can list out the actions or operations we want to track. For example, we might want to track when the model is used in a SELECT. The following shows how you can set this up for just SELECT.

CREATE AUDIT POLICY oaa_odm_audit_select ACTIONS SELECT ON MINING MODEL dmuser.high_value_churn_clas_svm;

AUDIT POLICY oaa_odm_audit_select BY oaa_model_user;

The list of individual audit events you can use include:

- AUDIT

- COMMENT

- GRANT

- RENAME

- SELECT

A policy can be set up to track one or more of these events. For example, if we wanted a policy to track SELECT and GRANT, we would list each event separated by a comma.

CREATE AUDIT POLICY oaa_odm_audit_select_grant ACTIONS SELECT ON MINING MODEL dmuser.high_value_churn_clas_svm, GRANT ON MINING MODEL dmuser.high_value_churn_clas_svm;

AUDIT POLICY oaa_odm_audit_select_grant BY oaa_model_user;
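If you later want to replace a broad policy with a narrower one, the broad policy has to be disabled before it can be dropped. The following sketch assumes the first policy created in this post (oaa_odm_audit_usage) is the one being retired and that it was enabled for OAA_MODEL_USER as shown earlier.

```sql
-- Stop auditing under this policy for the user it was enabled for
NOAUDIT POLICY oaa_odm_audit_usage BY oaa_model_user;

-- Remove the policy definition once it is no longer enabled
DROP AUDIT POLICY oaa_odm_audit_usage;
```

Audit records that were already written remain in the unified audit trail after the policy is dropped.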

Renaming & Commenting Oracle Data Mining Models

As your company evolves with their data mining projects, the number of models produced and in use in production will increase dramatically.

Care needs to be taken when it comes to managing these. This includes using meaningful names, adding descriptions of what the model is about or for, and being able to track their usage, etc.

I will look at tracking the usage of the models in another blog post, but the following gives examples of how to rename Oracle Data Mining models and how to add comments or descriptions to these models. This is particularly useful when your data analytics teams have a constant turnover, or when it has been many months since you last worked on a model and you want a quick idea of what the purpose of the model was.

If you have been using the Oracle Data Miner tool (part of SQL Developer) you will see your models being created with some sort of sequence number. For example, for a Support Vector Machine (SVM) classification model you might see it labelled:

CLAS_SVM_5_22

While you are working on this project you will know and understand what it was about and why it is being used. But afterward you may forget as you will be dealing with many hundreds of models. Yes you could check your documentation for the purpose of this model but that can take some time.

What if you could run a SQL query to find out?

But first we need to rename the model.

BEGIN DBMS_DATA_MINING.RENAME_MODEL('CLAS_SVM_5_22', 'HIGH_VALUE_CHURN_CLAS_SVM'); END;

Next we will want to add a longer description of what the model is about. We can do this by adding a comment to the model.

COMMENT ON MINING MODEL high_value_churn_clas_svm IS 'Classification Model to Predict High Value Customers most likely to Churn';

We can now see these updated details when we query the Oracle Data Mining models in a user schema.

SELECT model_name, mining_function, algorithm, comments FROM user_mining_models;

These are two very useful commands.

12.2 DBaaS (Extreme Edition) possible bug/issue with the DB install/setup

A few weeks ago the 12.2 Oracle Database was released on the cloud. I immediately set up an account and got my 12.2 DBaaS set up. This was a relatively painless and quick process.

I wanted to test out all the new Oracle Advanced Analytics features and the new features in SQL Developer 4.2 that only become visible when you are using the 12.2 Database.

When you go to use the Oracle Data Miner (GUI tool) in SQL Developer 4.2, it will check to see if the ODMr repository is installed in the database. If it isn’t then you will be prompted for the SYS password.

This is the problem. In previous versions of the DBaaS (12.1, etc) this was not an issue.

When you go to create your DBaaS you are asked for a password that will be used for the admin accounts of the database.

But when I entered the password for SYS, I got an error saying invalid password.

After using ssh to create a terminal connection to the DBaaS I was able to connect to the container using

sqlplus / as sysdba

and also using

sqlplus sys/ as sysdba

Those worked fine. But when I tried to connect to the PDB1 I got the invalid username and/or password error.

sqlplus sys/@pdb1 as sysdba

I reconnected as follows

sqlplus / as sysdba

and then changed the password for SYS with container=all

This command completed without errors, but when I tried using the new password to connect to PDB1 I got the same error.

After 2 weeks working with Oracle Support they eventually pointed me to the issue: the password file for PDB1 was missing. They claim this is due to an error when I was creating/installing the database.

But this was a DBaaS and I didn’t install the database. This is a problem with how Oracle have configured the installation.

The answer was to create a password file for the PDB1 using the following

Evaluating Cluster Dispersion in Oracle Data Mining

When working with the Clustering algorithms, and particularly k-Means, in the Oracle Data Miner tool there is no way of seeing how compact or dispersed the data is within a cluster.

There are a number of measures typically used in various tools and algorithms, but with Oracle Data Miner we are not presented with any of this information.

But if we flip from using the Oracle Data Miner tool to using SQL we can get to see some more details of the clusters produced by the k-Means algorithm along with some additional and useful information.

As I said there are a number of different measures used to evaluate clusters. The one that Oracle uses is called Dispersion. Now there are a few different definitions of what this could be and I haven’t been able to locate what is Oracle’s own definition of it in any of the documentation.

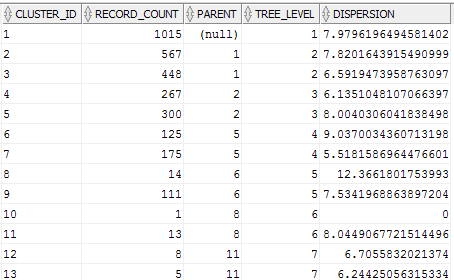

We can use the Dispersion value as a measure of how compact or how spread out the data is within a cluster. The Dispersion value is a number greater than 0. The lower the value, the more compact the cluster is, i.e. the data points are close to the centroid of the cluster. The larger the value, the more dispersed or spread out the data points are.

The DBMS_DATA_MINING PL/SQL package comes with a function called GET_MODEL_DETAILS_KM. This function returns a record of the form DM_CLUSTERS.

(id NUMBER, cluster_id VARCHAR2(4000), record_count NUMBER, parent NUMBER, tree_level NUMBER, dispersion NUMBER, split_predicate DM_PREDICATES, child DM_CHILDREN, centroid DM_CENTROIDS, histogram DM_HISTOGRAMS, rule DM_RULE)

We can now use the following query to get the Dispersion value for each of the clusters from an ODM cluster model.

SELECT cluster_id,

record_count,

parent,

tree_level,

dispersion

FROM table(dbms_data_mining.get_model_details_km('CLUS_KM_3_2'));
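To compare clusters quickly, the same function call can be sorted by the Dispersion value so the most compact clusters appear first. This is just a small variation on the query above, assuming the same CLUS_KM_3_2 model. Note that the result includes the parent clusters in the hierarchy as well as the final leaf clusters.

```sql
-- Most compact clusters first, most spread out last
SELECT cluster_id,
       record_count,
       tree_level,
       dispersion
FROM   table(dbms_data_mining.get_model_details_km('CLUS_KM_3_2'))
ORDER  BY dispersion;
```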

UKOUG Conference 1999 : Who was there & what they were talking about

Yes you read the title of this blog post correctly!

Recently I was doing a bit of a clear out and I came across a CD of the UKOUG Conference proceedings from 1999. That was my second UKOUG conference and how times have changed.

The CD contained all the conference proceedings consisting of slides and papers.

Here are some familiar names from back in 1999. Some you may find presenting at this year’s conference, some you might remember as regular presenters, and some are still presenting but not at this year’s conference.

- Jonathan Lewis

- Carl Dudley

- Fiona Martin

- Peter Robson

- Duncan Mills

- Kent Graziano

- John King

- Toby Price

- Doug Burns

- Dan Hotka

- Joel Goodman

In 1999 Ralph Fiennes did the Keynote speech. I queued up afterwards to get a signed book but they ran out with three people ahead of me 😦

The agenda grid was a bit smaller back then compared to now.

I’ll see you again in Birmingham this year, in a few days’ time 🙂

Using the Identity column for Oracle Data Miner

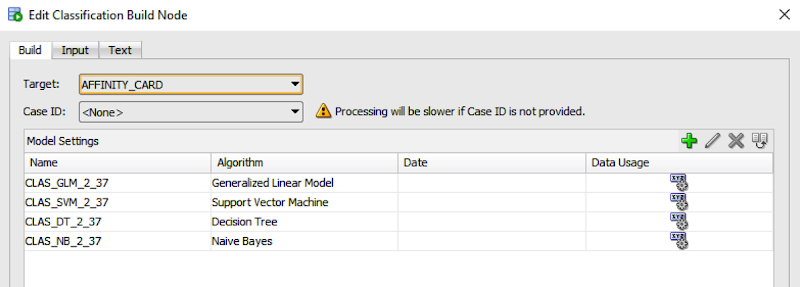

If you are a user of the Oracle Data Miner tool (the workflow data mining tool that is part of SQL Developer), then you will have noticed that for many of the algorithms you can specify a Case Id attribute along with, say, the target attribute.

The idea is that you have one attribute that is a unique identifier for each case record. This may or may not be the case in your data model and you may have a multiple attribute primary key or case record identifier.

But what is the Case Id field used for in Oracle Data Miner?

Based on the documentation this field does not need to have a value. But it is recommended that you do identify an attribute for the Case Id, as this will allow for reproducible results. What this means is that if we run our workflow today and again in a few days’ time, on the exact same data, we should get the same results. So the Case Id allows this to happen. But how? Well, it looks like the attribute specified for the Case Id is used as part of the hashing algorithm that partitions the data into train and test data sets for classification problems.

So if you don’t have a single attribute case identifier in your data set, then you need to create one. There are a few options open to you to do this.

- Create one: write some code that will generate a unique identifier for each of your case records based on some defined rule.

- Use a sequence: and update the records to use this sequence.

- Use ROWID: use the unique row identifier value. You can write some code to populate this value into an attribute. Or create a view on the table containing the case records and add a new attribute that will use the ROWID. But if you move the data, then the next time you use the view then you will be getting different ROWIDs and that in turn will mean we may have different case records going into our test and training data sets. So our workflows will generate different results. Not what we want.

- Use ROWNUM: This is kind of like using the ROWID. Again we can have a view that will select ROWNUM for each record. Again we may have the same issues but if we have our data ordered in a way that ensures we get the records returned in the same order then this approach is OK to use.

- Use Identity Column: In Oracle 12c we have a new feature called Identity Column. This acts a bit like a sequence, but we can define an attribute in a table to be an Identity Column, and as records are inserted into the table (in our scenario our case table) this column will automatically generate a unique number for each record. If we need to repopulate the case table, we will need to drop and recreate the table to get the Identity Column to reset, otherwise the newly inserted records will start with the next number of the Identity Column.

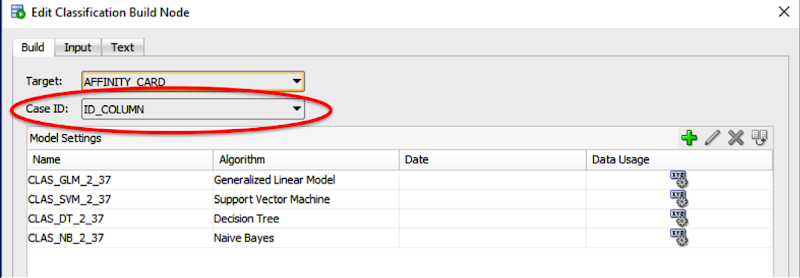

Here is an example of using the Identity Column in a case table.

CREATE TABLE case_table ( id_column NUMBER GENERATED ALWAYS AS IDENTITY, affinity_card NUMBER, age NUMBER, cust_gender VARCHAR2(5), country_name VARCHAR2(20) ... );

You can now use this Identity Column as the Case Id in your Oracle Data Miner workflows.
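If you cannot change the table, the ROWNUM option from the list above could look something like the following. This is a sketch only: case_source and case_table_v are hypothetical names, and the column list simply reuses the columns from the example table. The inner query orders by every selected column so that the numbering is repeatable for the same data.

```sql
-- Hypothetical view that adds a repeatable case identifier.
-- The inner ORDER BY fixes the row order before ROWNUM is assigned.
CREATE OR REPLACE VIEW case_table_v AS
SELECT ROWNUM AS case_id,
       t.*
FROM  (SELECT affinity_card,
              age,
              cust_gender,
              country_name
       FROM   case_source
       ORDER  BY country_name, cust_gender, age, affinity_card) t;
```

Any rows that tie on the ORDER BY columns are identical in every selected column, so it does not matter which of them gets which number. If the underlying data changes, the numbering changes too, which is the same caveat as for ROWID.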

New OAA features in Oracle 12.2 Database

The Oracle 12.2 Database has been released and is currently available as a Cloud Service. The on-site version should be with us soon.

A few weeks ago I listed some of the new features that you will find in the Oracle Data Miner GUI tool (check out that blog post). I’ll have another blog post soon that looks a bit closer at how the new OAA features are exposed in this tool.

In this blog post I will list most of the new database related features in Oracle 12.2. There are a lot of new features and a lot of updated features. Over the next few months (yes, it will take that long) I’ll have blog posts on most of these.

The Oracle Advanced Analytics Option new features include:

- The first new feature is one that you cannot see. Yes, that sounds a bit odd. But the underlying architecture of OAA has been rebuilt to allow the algorithms to scale significantly. This is also future proofing OAA for new features coming in future releases of the database.

- Explicit Semantic Analysis. This is a new algorithm that allows us to perform text similarity comparison. This is a great new addition and is much, much easier now compared to what we may have had to do previously.

- Using R models using SQL. Although we have been able to do this in the previous version of the database, the framework and supports have been extended to allow for greater and easier usage of user defined R scripts and R models with the in-database environment.

- Partitioned Models. We can now build partitioned mining models. This is where you can specify an attribute and a separate model will be created based on each value in the attribute.

- Partitioned scoring. Similarly we can now dynamically score the data based on a partition attribute.

- Extensions to Association Rules. Over the past few releases of the database, additional insights into the workings and decision making of the algorithms have been included. In 12.2 we now have some additional insights for the Association Rules algorithm, where we can now see the calculation of values associated with rules.

- DBMS_DATA_MINING package extended. This PL/SQL package has been extended to include the functionality for the new features listed above. Additionally, it can now process R algorithms and models.

- SQL Function changes: Changes to the following ODM related SQL functions to allow for partitioned models. CLUSTER_DETAILS, CLUSTER_DISTANCE, CLUSTER_ID, CLUSTER_PROBABILITY, CLUSTER_SET, FEATURE_COMPARE, FEATURE_DETAILS, FEATURE_ID, FEATURE_SET, FEATURE_VALUE, ORA_DM_PARTITION_NAME, PREDICTION, PREDICTION_BOUNDS, PREDICTION_COST, PREDICTION_DETAILS, PREDICTION_PROBABILITY, PREDICTION_SET

- New SQL Hint for ODM models. We have had hints in SQL for many, many versions now, but with 12.2 we now have a hint for partitioned models, called the GROUPING hint.

- New CREATE_MODEL function. With the existing CREATE_MODEL function the input data set for the function needed to be defined in a table or accessed using a view. Basically the data needed to reside somewhere. With CREATE_MODEL2 you can now define the input data set based on a SELECT statement.
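As a rough illustration of the last point, a CREATE_MODEL2 call might look like the following. This is a sketch under assumptions: the model name, the query, and the CASE_TABLE columns are hypothetical, and the settings are passed in a SETTING_LIST collection instead of a settings table.

```sql
DECLARE
   -- Settings are held in an associative array keyed by setting name
   v_setlst DBMS_DATA_MINING.SETTING_LIST;
BEGIN
   v_setlst(dbms_data_mining.algo_name) := dbms_data_mining.algo_decision_tree;
   v_setlst(dbms_data_mining.prep_auto) := dbms_data_mining.prep_auto_on;

   DBMS_DATA_MINING.CREATE_MODEL2(
      model_name          => 'DEMO_DT_MODEL2',   -- hypothetical model name
      mining_function     => DBMS_DATA_MINING.CLASSIFICATION,
      -- The input data is defined by a query, not a table or view name
      data_query          => 'SELECT * FROM case_table WHERE age > 18',
      set_list            => v_setlst,
      case_id_column_name => 'ID_COLUMN',
      target_column_name  => 'AFFINITY_CARD');
END;
```

The main difference from CREATE_MODEL is the data_query parameter, so filters or joins can be applied without first creating a view.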

In addition to all of these changes there are also some new interesting DB, SQL and PL/SQL new features that are of particular interest for your data science, machine learning, advanced analytics (or whatever the current favourite marketing term is today) projects.

It is going to be a busy few months ahead, working through all of these new features and writing blog posts on how to use each of them.
