OCI:Vision – AI for image processing – the Basics

Every cloud provider offers some form of AI service. These range from very basic features up to a mediocre level, and only a few deliver advanced AI services in a useful way.

Oracle AI Services have been around for about a year now, and as with all new products or cloud services, a little time is needed to let them develop from an MVP (minimum viable product) to something that’s more mature, feature-rich, stable and reliable. Oracle’s OCI AI Services come with some pre-trained models and the ability to create your own custom models based on your own training datasets.

Oracle’s OCI AI Services include:

- Digital Assistant

- Language

- Speech

- Vision

- Document Understanding

- Anomaly Detection

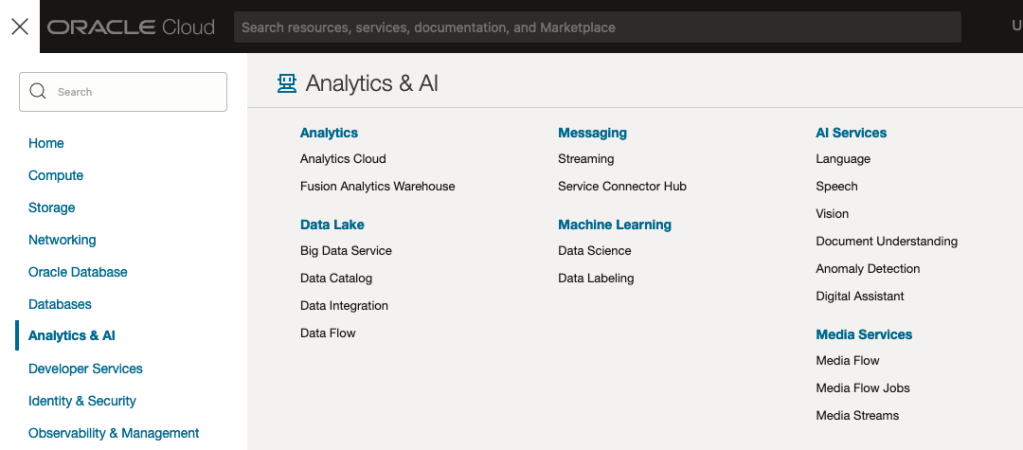

- Forecasting

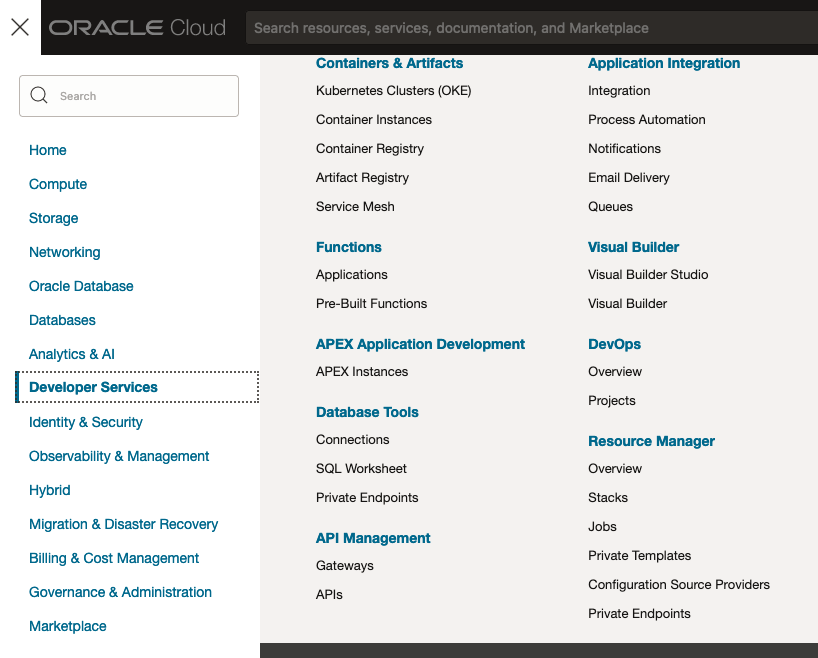

In this post, we’ll explore OCI Vision and the capabilities available with its pre-trained models. To demonstrate this, the online web application will be used to show what it does and what it identifies. You can access the Vision AI Services from the menu as shown in the following image.

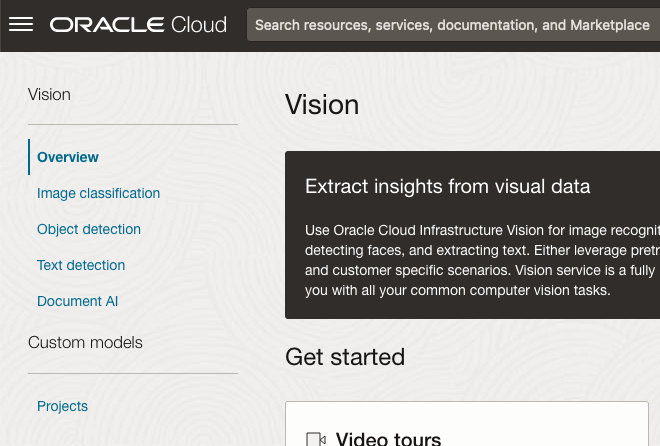

From the main AI Vision webpage, the menu (on the left-hand side of the page) shows three main Vision-related options: Image Classification, Object Detection and Document AI. These are pre-trained models that perform slightly different tasks.

Let’s start with Image Classification and explore what is possible. Just click on the link.

Note: The Document AI feature will be moving to its own cloud service in 2024, so it will disappear from the menu but will appear as a new service on the main Analytics & AI webpage (shown above).

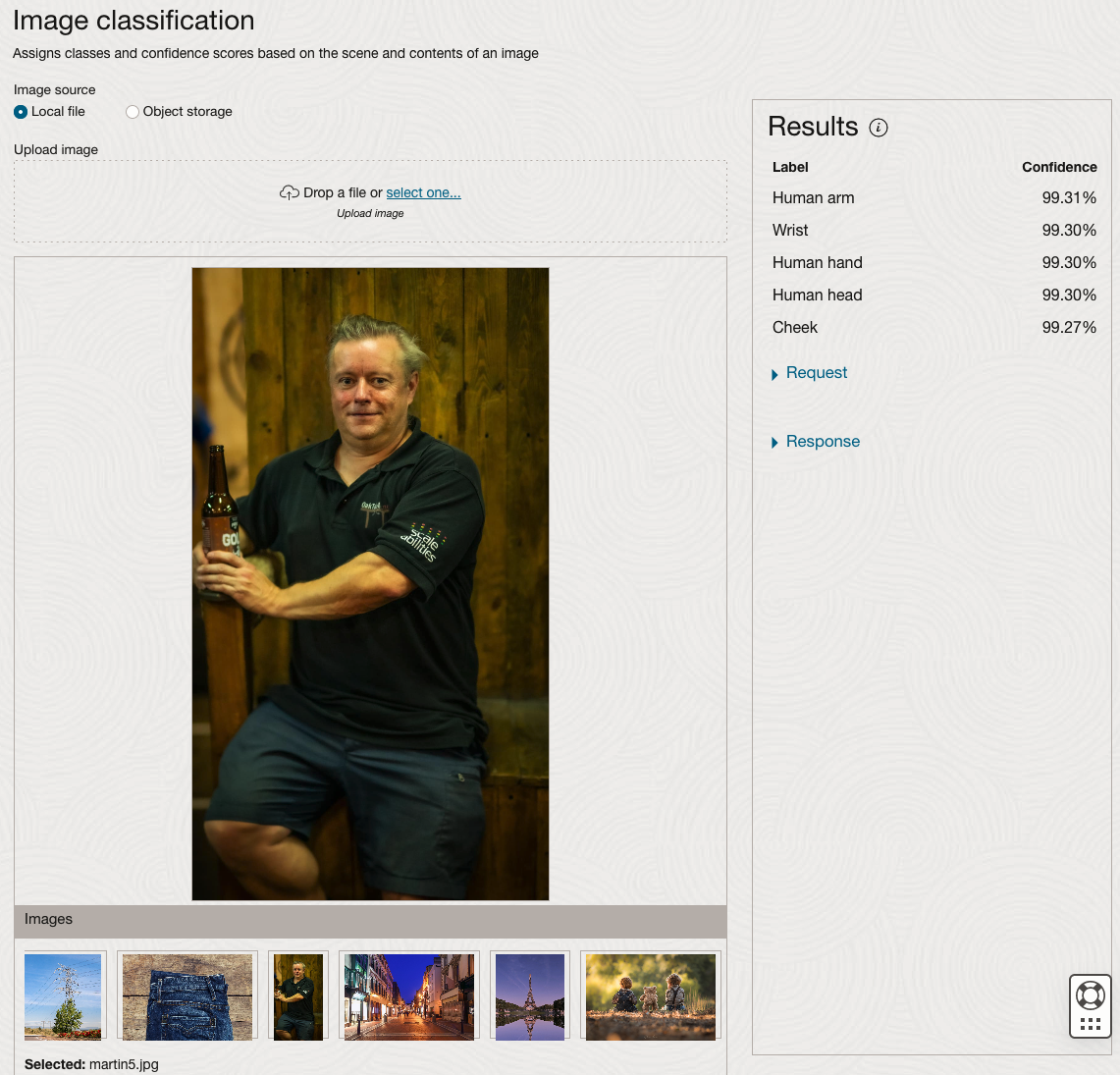

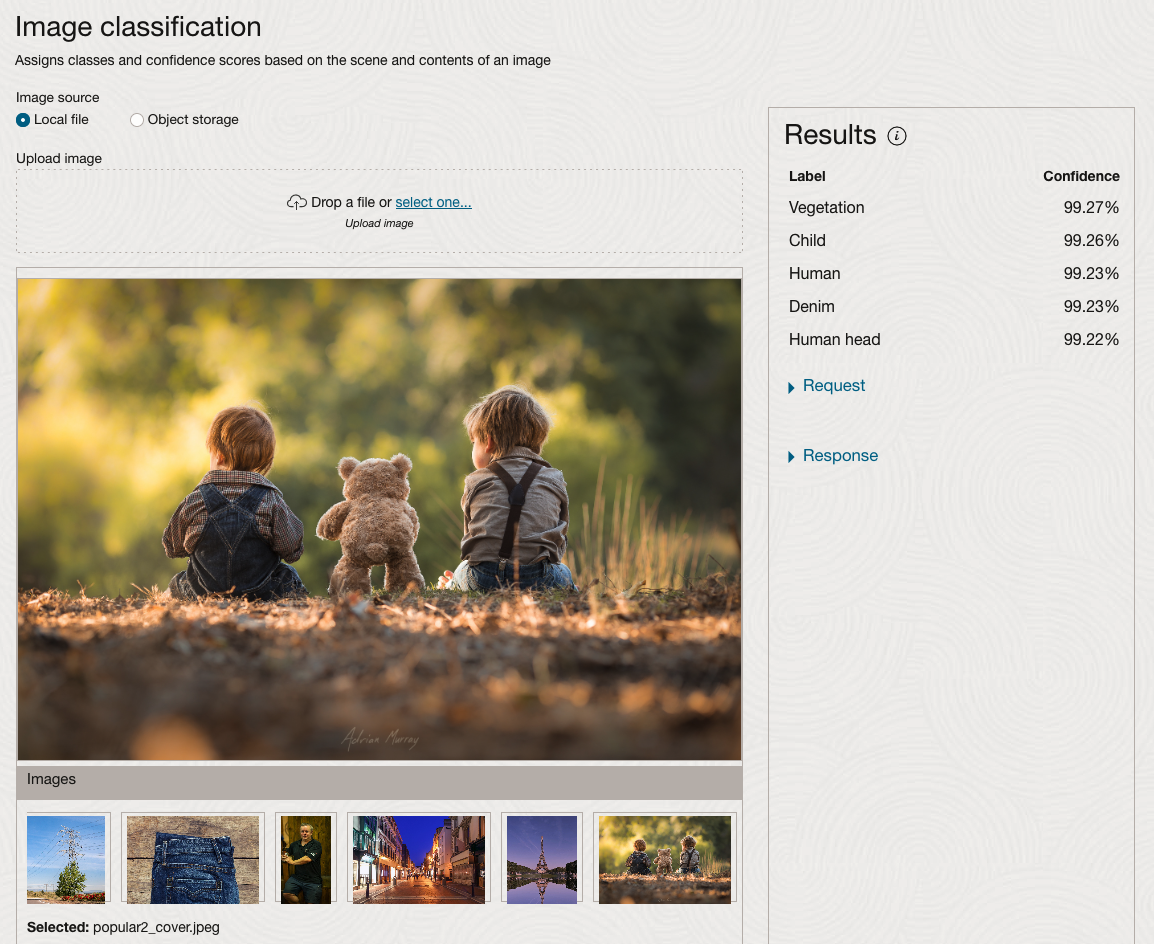

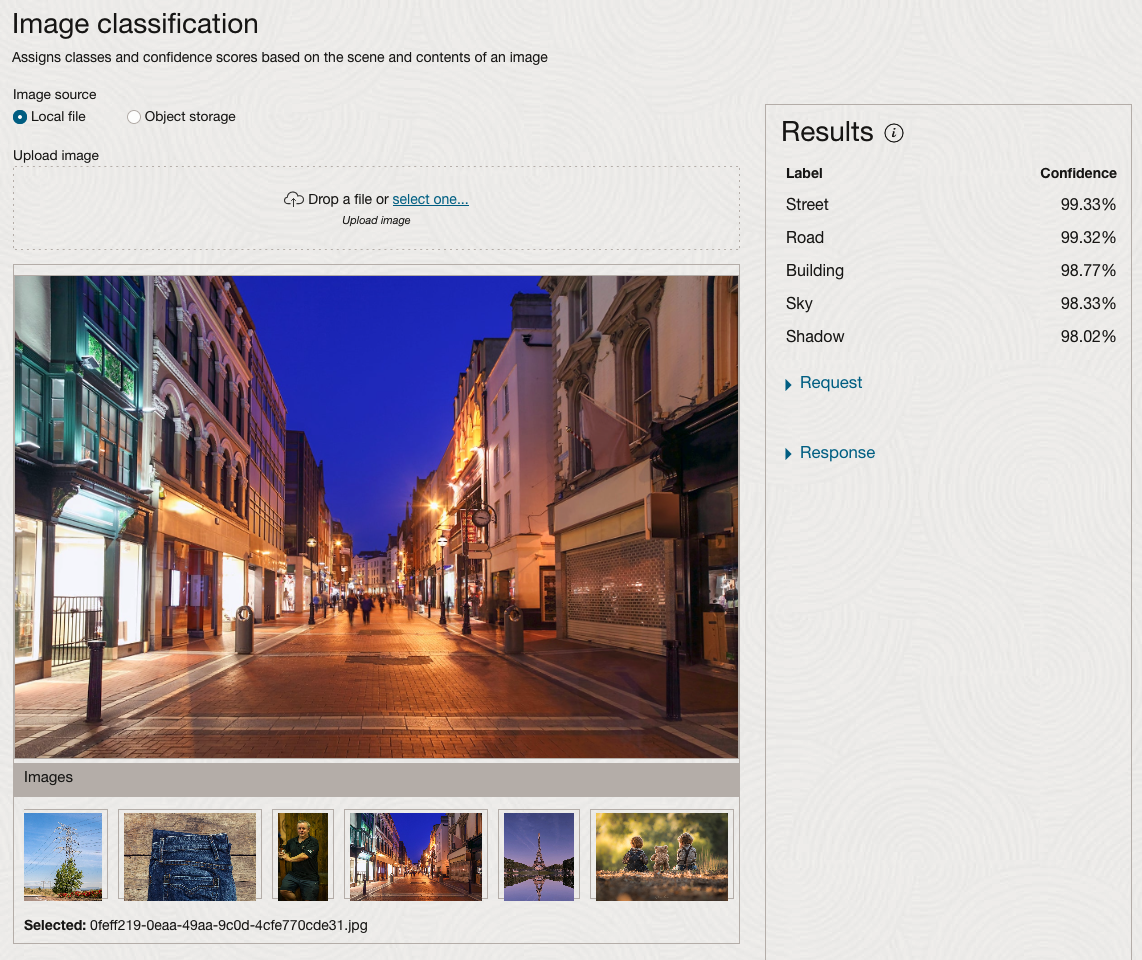

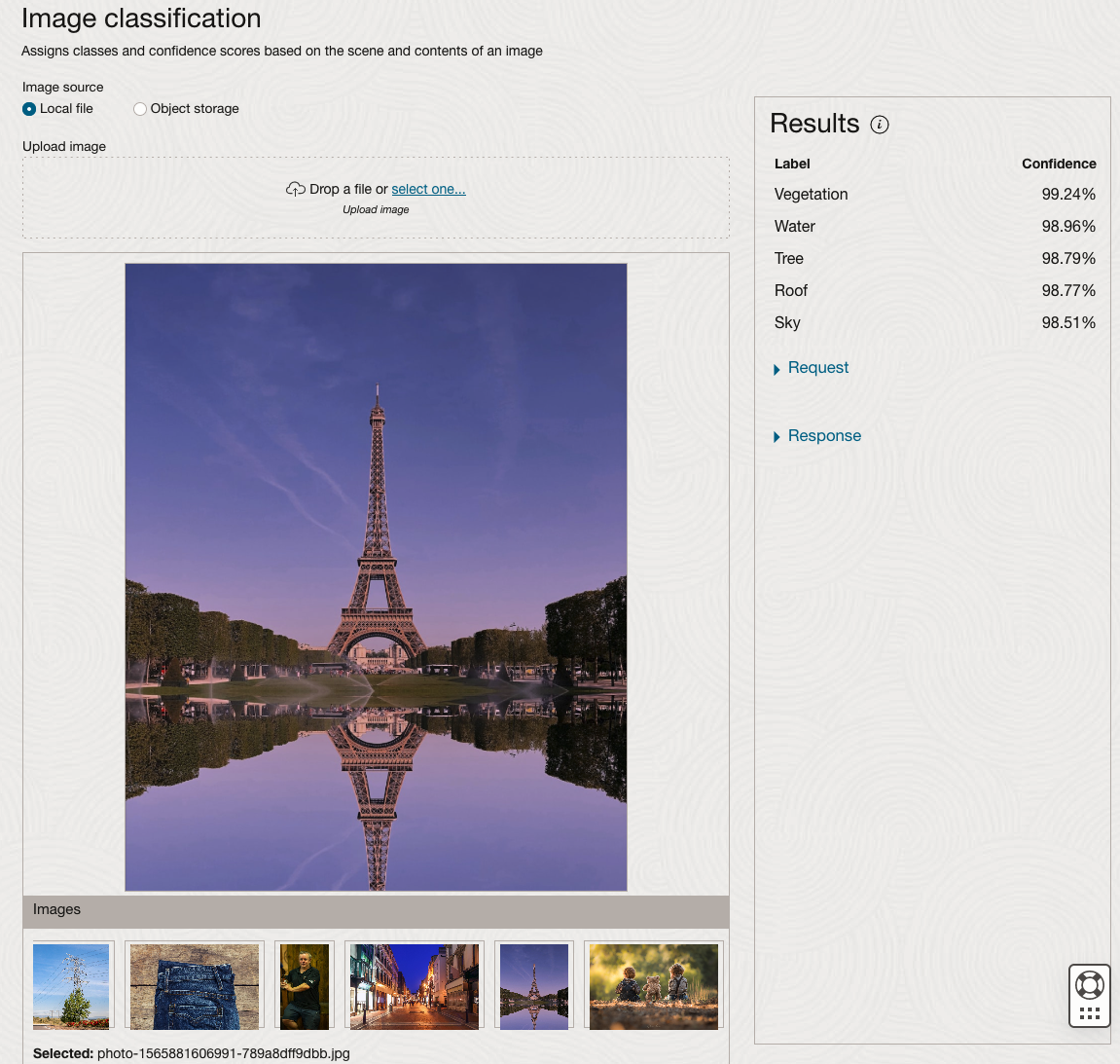

The webpage for each Vision feature comes with a couple of images for you to examine to see how it works. But a better way to explore the capabilities of each feature is to use your own images or images you have downloaded. Here are examples.

We can see the pre-trained model assigns classes and a confidence to each image based on the main components it has identified in the image. For example, with the Eiffel Tower image, the model has identified Water, Trees, Sky, Vegetation and Roof (of a building). But it didn’t do so well with identifying the main object in the image as being a tower, or a building of some form. Whereas with the streetscape image it was able to identify Street, Road, Building, Sky and Shadow.

Just under the Result section, we see two labels that can be expanded. One of these is the Response, which contains a JSON structure holding the labels and confidences the model has identified. This is what the pre-trained model returns, and if you were to use Python to call this pre-trained model, it is this JSON object that you would get back. You can then use the information contained in the JSON object to perform additional tasks for the image.
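As a minimal sketch of that last step, the following Python parses a response of this kind and keeps only the labels above a confidence threshold. The JSON here is a hand-written stand-in, not real service output: the `labels`, `name` and `confidence` field names and all of the numbers are assumptions for illustration, so check them against the Response panel for your own image.

```python
import json

# Hand-written sample shaped like the Response panel (assumed field names,
# illustrative values only).
SAMPLE_RESPONSE = """
{
  "labels": [
    {"name": "Sky", "confidence": 0.98},
    {"name": "Trees", "confidence": 0.91},
    {"name": "Water", "confidence": 0.55}
  ]
}
"""


def confident_labels(response_text, threshold=0.9):
    """Return the label names whose confidence is at or above the threshold."""
    response = json.loads(response_text)
    return [
        label["name"]
        for label in response.get("labels", [])
        if label["confidence"] >= threshold
    ]


print(confident_labels(SAMPLE_RESPONSE))
```

Lowering the threshold returns more labels, which is one simple way to trade precision for coverage when tagging images.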

As you can see, the webpage for OCI Vision and other AI services gives you a very simple introduction to what is possible, but it oversimplifies the task, and a lot of work is needed outside of this page to make these pre-trained models useful.

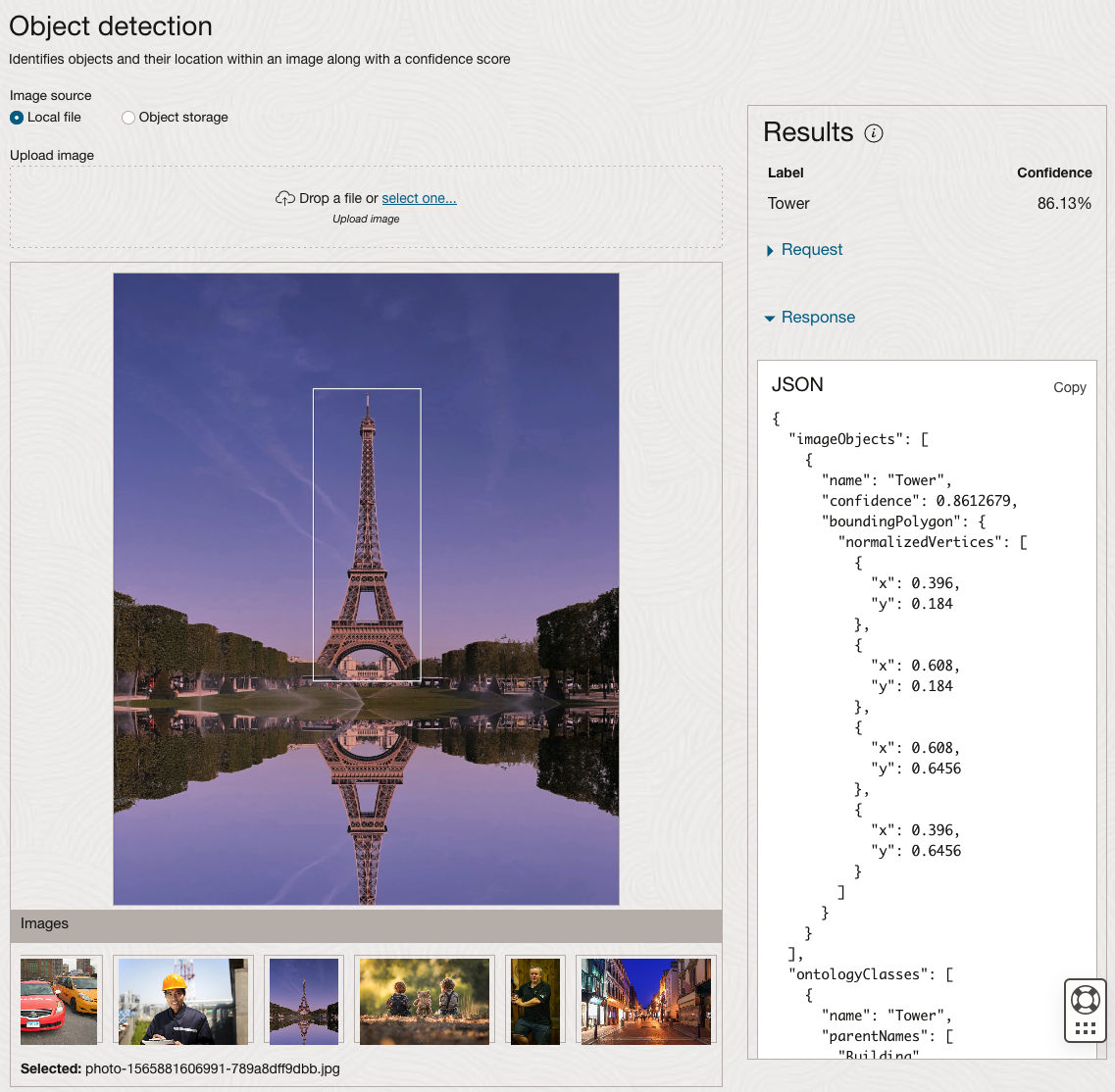

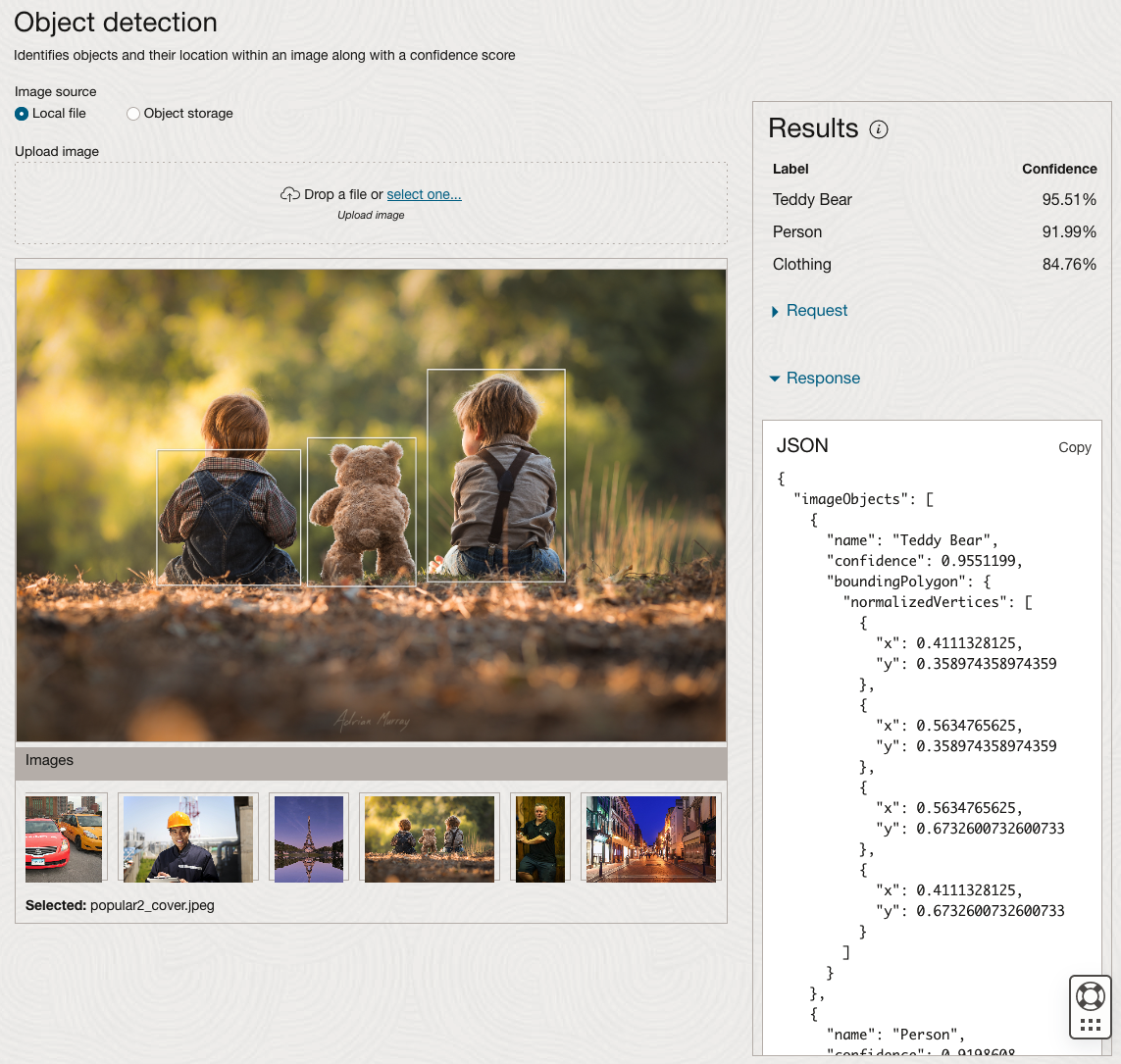

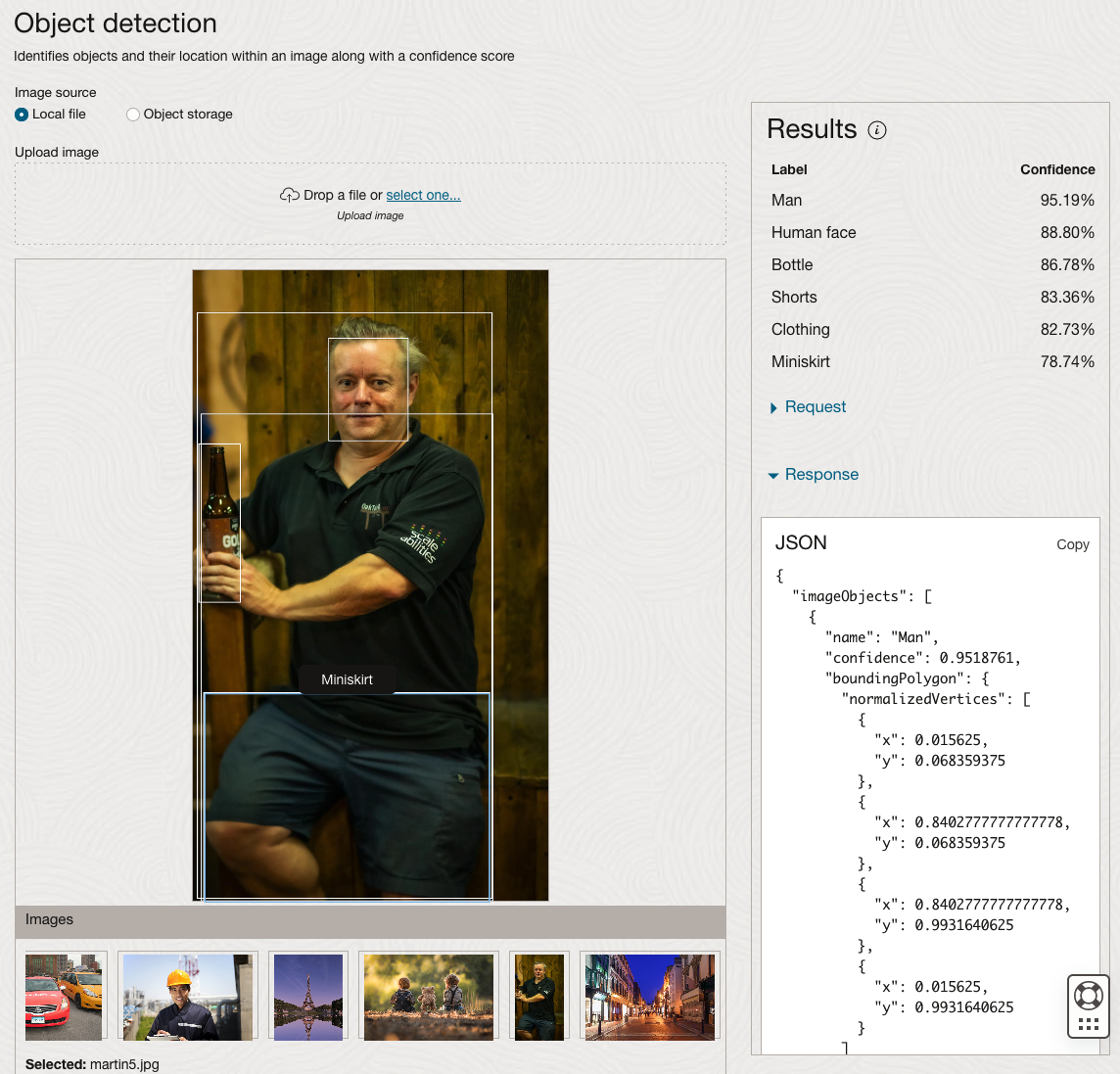

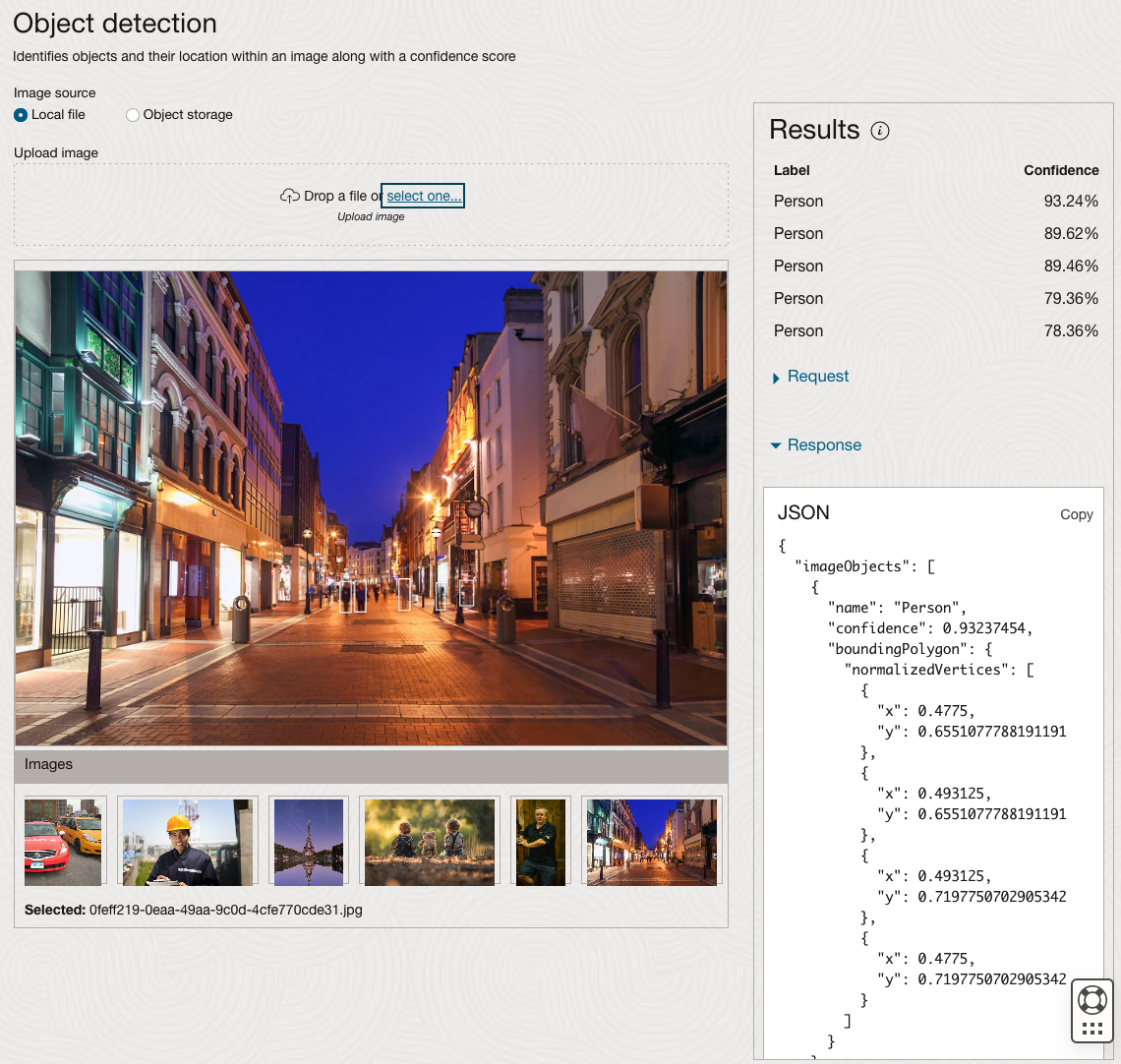

Moving onto the Object Detection feature (left-hand menu) and using the pre-trained model on the same images, we get slightly different results.

The object detection pre-trained model works differently as it can identify different things in the image. For example, with the Eiffel Tower image, it identifies a Tower in the image. In a similar way to the previous example, the model returns a JSON object with the label and also provides the coordinates of a bounding box for each object detected. In the streetscape image, it has identified five people. You’ll probably identify many more, but the model identified five. Have a look at the other images and see what it has identified for each.
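To illustrate how those bounding box coordinates might be used, here is a small Python sketch that converts a polygon of normalised vertices (values between 0 and 1) into a pixel rectangle. The object structure, the `boundingPolygon` and `normalizedVertices` field names, the coordinates and the image size are all assumptions made for this example; compare them with the JSON shown in the Response panel before relying on them.

```python
# Hand-written sample object (assumed field names, illustrative values only).
SAMPLE_OBJECT = {
    "name": "Person",
    "confidence": 0.93,
    "boundingPolygon": {
        "normalizedVertices": [
            {"x": 0.25, "y": 0.5},
            {"x": 0.5, "y": 0.5},
            {"x": 0.5, "y": 0.75},
            {"x": 0.25, "y": 0.75},
        ]
    },
}


def to_pixel_box(normalized_vertices, image_width, image_height):
    """Scale 0-1 vertices to pixels and return (left, top, right, bottom)."""
    xs = [vertex["x"] * image_width for vertex in normalized_vertices]
    ys = [vertex["y"] * image_height for vertex in normalized_vertices]
    return (round(min(xs)), round(min(ys)), round(max(xs)), round(max(ys)))


vertices = SAMPLE_OBJECT["boundingPolygon"]["normalizedVertices"]
print(SAMPLE_OBJECT["name"], to_pixel_box(vertices, 800, 600))
```

A rectangle in pixel units like this can be passed to an image library to draw the box or crop the detected object.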

As I mentioned above, using these pre-trained models is kind of interesting, but they are of limited use and do not really demonstrate the full capabilities of what is possible. Look out for additional posts which will demonstrate this and the steps needed to create and use your own custom model.

CAO Points 2023 – Slight Deflation

There has been lots of talk and many news articles written about Grade Inflation over the past few years (the Covid years), and this year was no different. Most of the discussion this year began a couple of days before the Leaving Cert results were released last week and continued right up to the CAO publishing the points needed for each course. Yes, something needs to be done about the grading profiles to revert to pre-Covid levels. There are many reasons why this is necessary, perhaps the most important of which is to bring back some stability to the Leaving Cert results and the corresponding CAO Points for entry to University courses. Last year, we saw some minor stepping back or deflating of results, but this didn’t have much of an impact on the points needed for University courses. In 2023 we have seen a slight step back in the points needed. I mentioned this possibility in my post on the Leaving Cert profile of marks. I also mentioned that the subject with the biggest step back in marks/grades was Maths, and it looks like this has had an impact on the CAO points needed.

In 2023, we have seen a drop in points for 60% of University courses. In most years (pre and post-Covid) there would always be some fluctuation of points but the fluctuations would be minor. In 2023, some courses have changed by 20+ points.

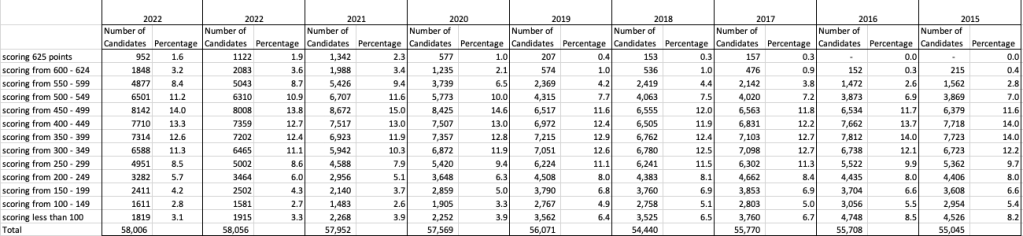

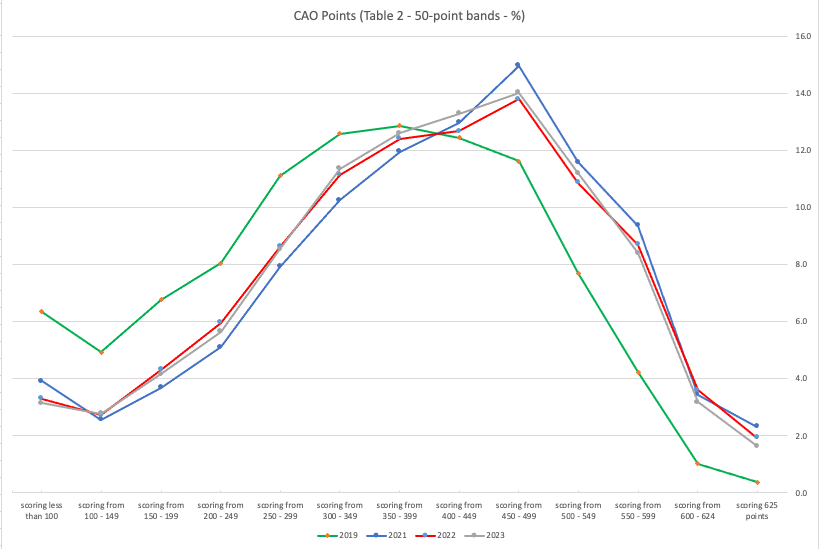

The following table and chart illustrate the profile of CAO points and the percentage of students who achieved this in ranges of 50 points.

From an initial look at the data and the chart, it appears the 2023 CAO points profile is very similar to that of 2022. But when you look a little closer, a few things stand out. At the upper end of CAO points we see a small reduction in the percentage of students. This is reflected when you look at the University courses in this range. The points for these have reduced slightly in 2023 and we have fewer courses using random selection. If you now look at the 300-500 range, we see a slight increase in the percentage of students attaining these points. But this doesn’t seem to be reflected in an increase in the points needed to gain entry to a course in that range, although there are some courses where there is an increase. This could be due to additional places that Universities have made available across the board.

In 2023, we have seen a change in the geographic spread of interest in University courses, with more demand/interest in Universities outside of the Dublin region. The lack of accommodation and its cost in Dublin is a major issue, and students have been looking elsewhere to study and to locations they can easily commute to. Although demand for Trinity and UCD remains strong, there was a drop in the numbers for TU Dublin. There are many reported factors for this, which include the accommodation issue and, for those who might have considered commuting, the positioning of the Grangegorman campus in Dublin, which does not make this easy, unlike Trinity, UCD and DCU.

I’ve the Leaving Cert grades by subject and CAO Points datasets in a Database (Oracle). This allows me to easily analyse the data annually and to compare them to previous years, using a variety of tools.
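As a rough illustration of the 50-point banding used for the profile, the following Python sketch groups a list of points totals into bands and reports the percentage of students in each. The sample totals are made up purely to show the calculation, and the band boundaries (350-399, 400-449 and so on) are an assumption; they are not taken from the CAO data.

```python
from collections import Counter


def points_band(points, width=50):
    """Return the band label (for example '450-499') that a total falls in."""
    lower = (points // width) * width
    return f"{lower}-{lower + width - 1}"


def band_percentages(all_points, width=50):
    """Percentage of students in each band, ordered from lowest band up."""
    counts = Counter(points_band(points, width) for points in all_points)
    total = len(all_points)
    ordered = sorted(counts.items(), key=lambda item: int(item[0].split("-")[0]))
    return {band: round(100 * count / total, 1) for band, count in ordered}


# Made-up totals, only to demonstrate the banding.
sample_points = [625, 566, 520, 510, 455, 455, 430, 388]
for band, percentage in band_percentages(sample_points).items():
    print(band, percentage)
```

Running the same calculation for two different years gives two profiles that can be compared band by band.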

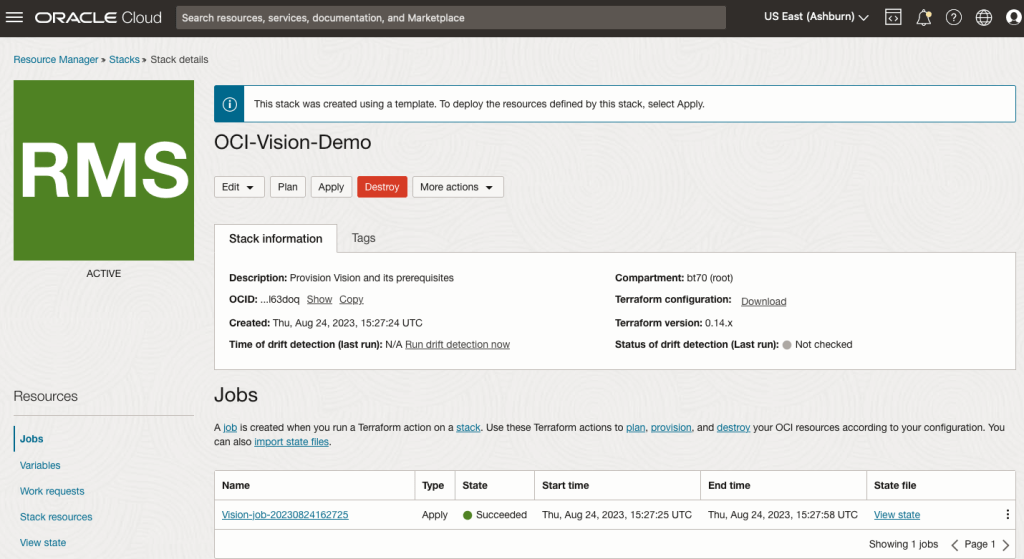

OCI:Vision Template for Policies

When using OCI you’ll need to configure your account and other users to have the necessary privileges and permissions to run the various offerings. OCI Vision is no different. You have two options for doing this. The first is to manually configure these. There isn’t a lot to do but some issues can arise. The other option is to use a template. The OCI Vision team have created a template of what is required and I’ll walk through the steps of setting this up along with some additional steps you’ll need.

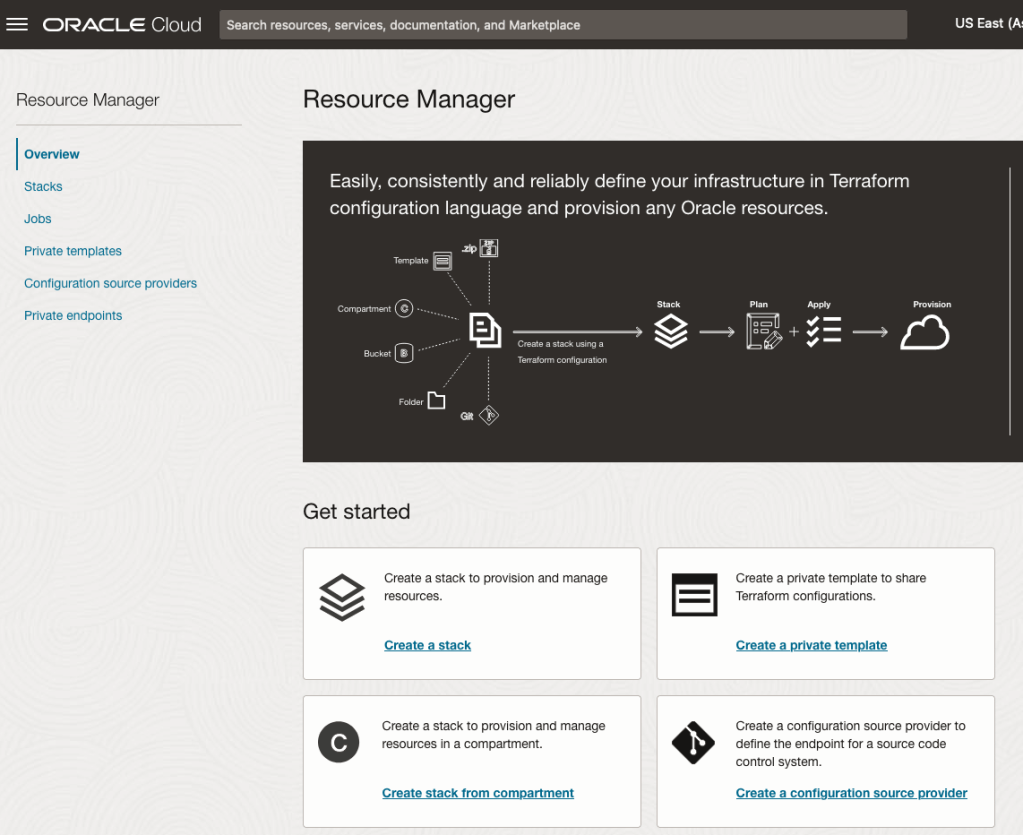

First, you’ll need to go to the Resource Manager page. This can be found from the main menu by going to Developer Services and then selecting Resource Manager.

Located just under the main banner image you’ll see a section labelled ‘Create a stack’. Click on this link.

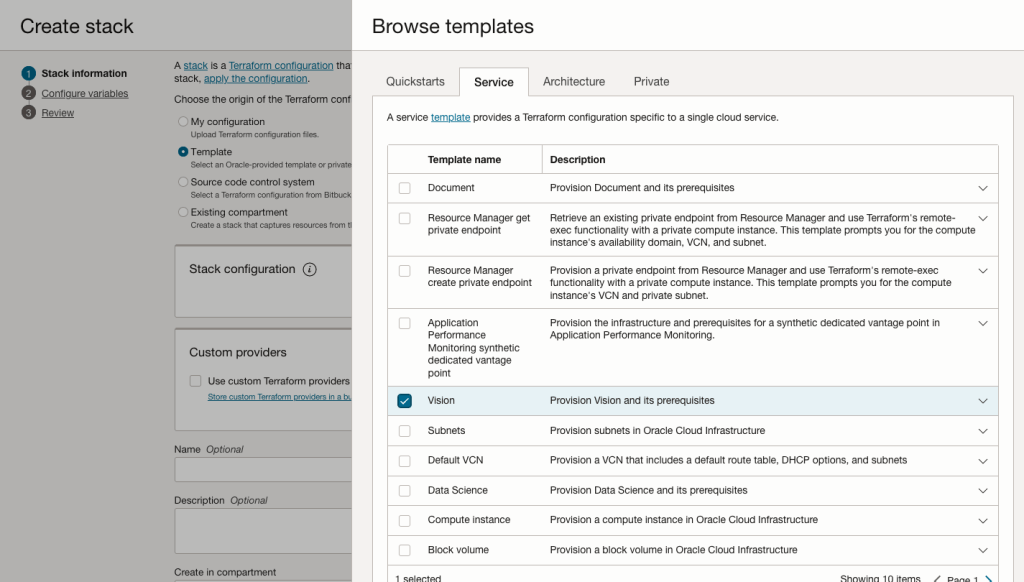

In the Create stack screen select Template from the radio group at the top of the page. Then in the Browse template pop-up screen, select the Service tab (across the top) and locate Vision. Once selected click the Select Template button.

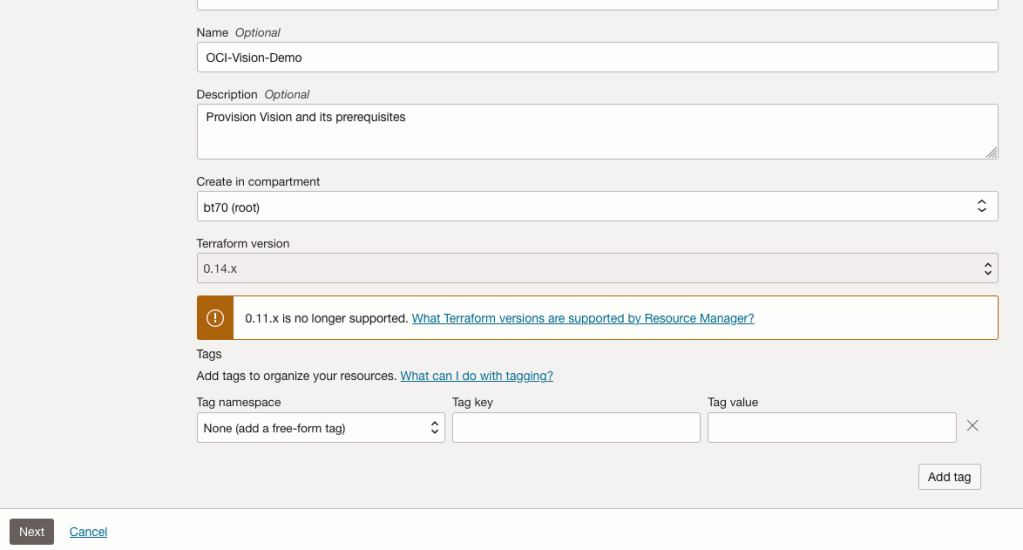

The page will load the necessary configuration. The only other thing you need to change on this page is the Name of the Service. Make it meaningful for you and your project. Click the Next button to continue to the next screen.

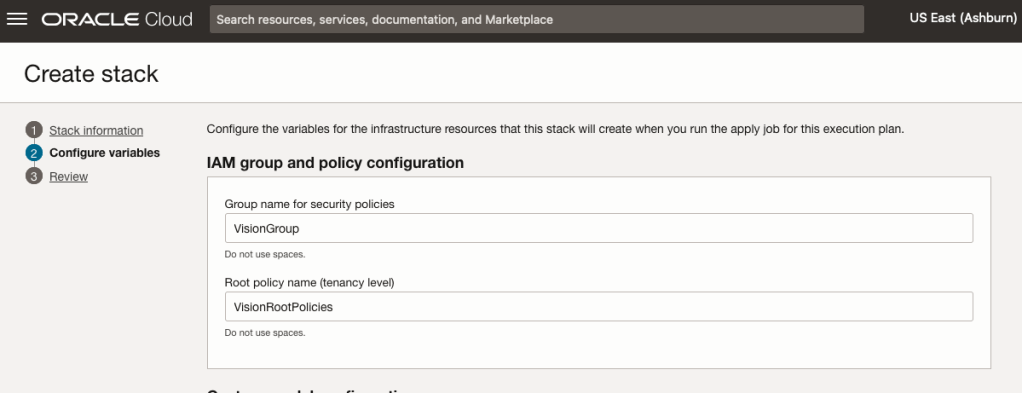

The top section relates to the IAM Group name and policy configuration. You can take the defaults, or if you have specific groups already configured you can change it to use those.

Most people will want to create their own custom models, as the supplied pre-built models are a bit basic. To enable custom-built models, just tick the checkbox in the Custom Model Configuration section.

The second checkbox enables the batch processing of documents/images. If you check this box, you’ll need to specify the compartment you want the workload to be assigned to. Then click the Next button.

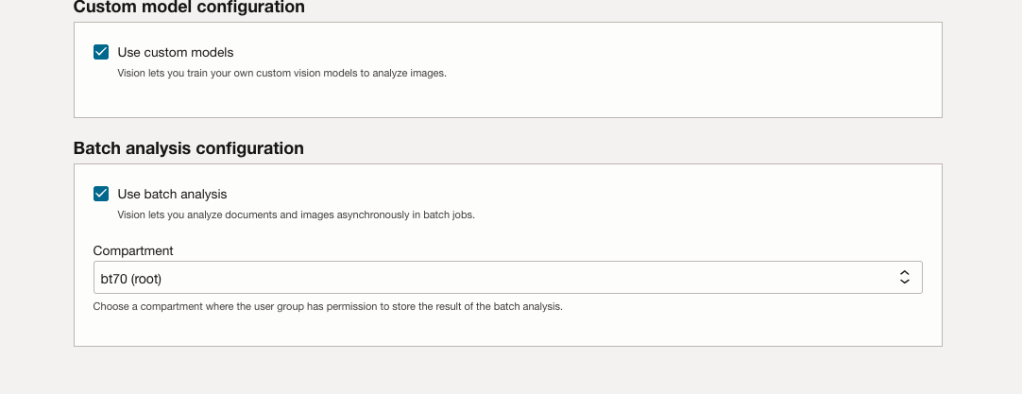

The final part displays a verification page of what was selected in the previous steps.

When ready click on the Run Apply check box and then click on the Create button.

It can take anything from a few seconds to a couple of minutes for the scripts to run.

When completed you’ll see a green box at the top of the screen and the message ‘SUCCEEDED’ under it.

Leaving Certificate 2023: In-line or more adjustments

The Leaving Certificate results were released this morning. Congratulations to everyone who has completed this major milestone. It’s a difficult set of examinations and despite all the calls to reform the examinations process, it has largely remained unchanged in decades, apart from some minor changes.

Last year (2022) I analysed some of the Leaving Certificate results. This was primarily focused on higher-level papers for a subset of the subjects, some of which are core subjects and some optional. Just like last year, we are told by the Department of Education that the results this year will, in aggregate, be in line with last year. This statement is very confusing and also misleading. What does it really mean? No one has given a clear definition or explanation. What it tries to convey is that the profile of marks by subject is the same as last year. We saw last year that this was not the case, and we saw some grade deflation back towards the pre-Covid profile. Some thought we would see a similar stepping back this year, just like we have seen in the UK and other European countries.

The State Examinations Commission has released the breakdown of the numbers and percentages of students who were awarded each grade by subject. There are lots of ways to analyse this data, from using Python to other Data Analytics tools, but for me I’ve loaded the data into an Oracle Database and used Oracle Analytics Cloud to do some of the analysis along with other tools.

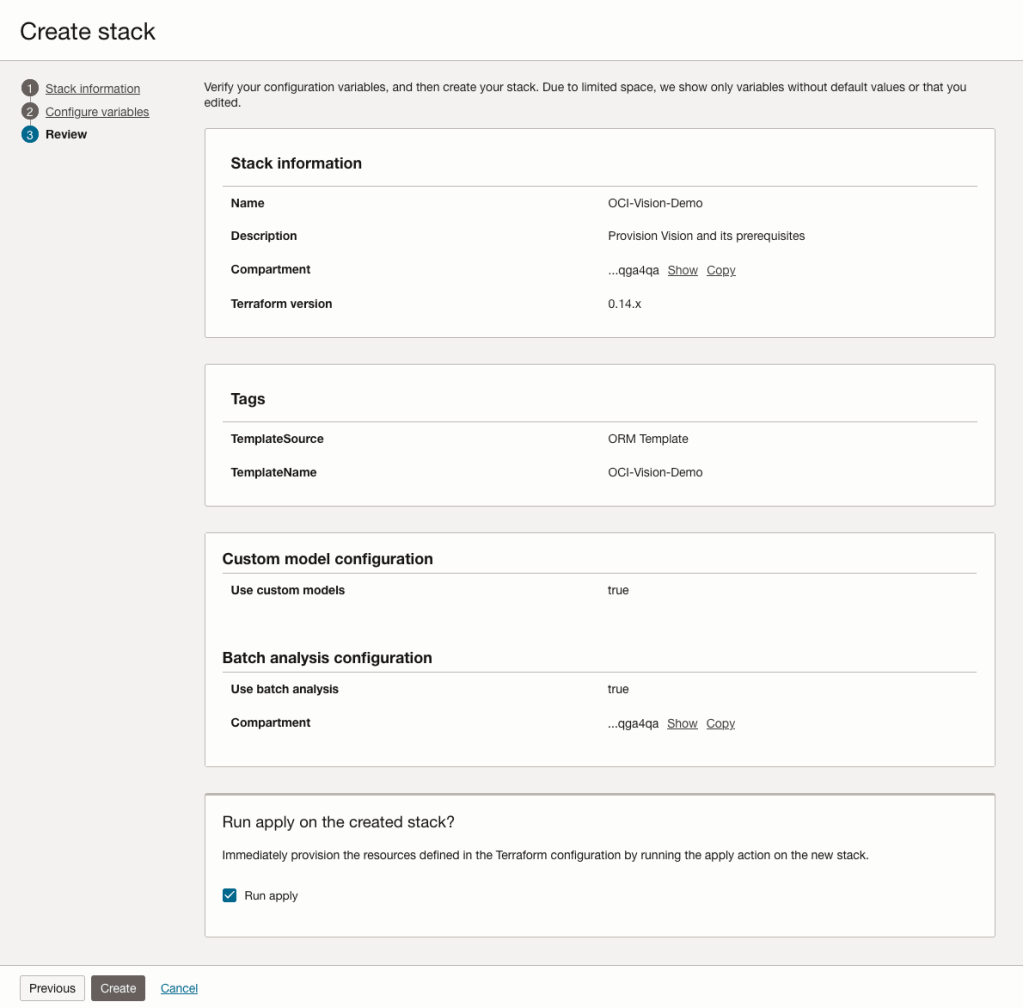

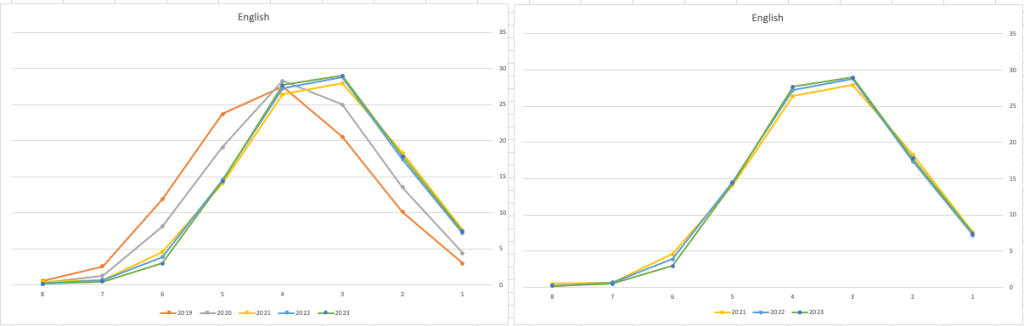

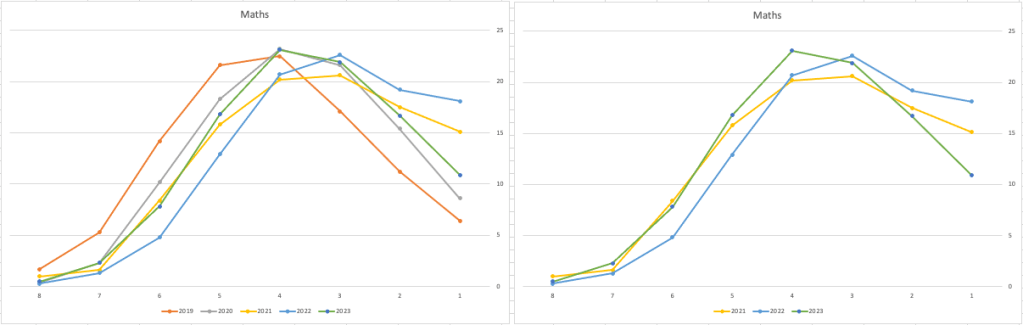

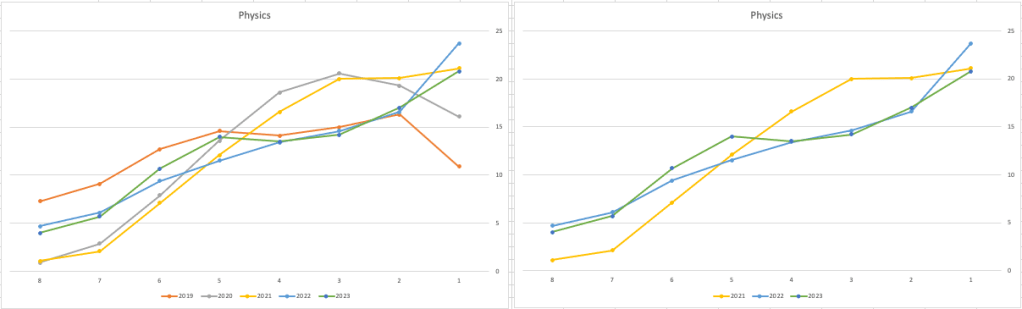

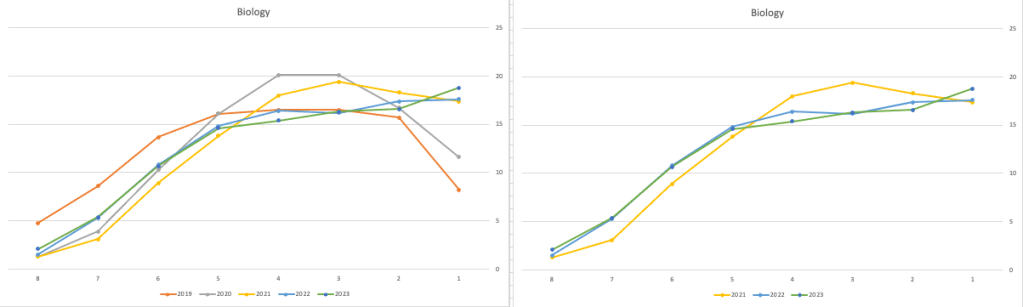

Let’s start with the core exam subjects of Irish, English and Maths. For Irish, last year we saw a step back in the profile of marks. This was a significant step back towards the marks in 2019 (pre-Covid). There wasn’t much discussion about this last year, and perhaps one of the reasons is that Irish is typically not counted towards students’ CAO points, as it is typically one of the weaker subjects for a lot of students. This year the profile of marks for Irish is in line with last year’s profile (+/- small percentages) with a slight uptick in H1 grades. For English, the profiles of marks for 2021, 2022 and 2023 are almost exactly the same. But for Maths we do see a step back (moving to the left in the figure below) with a significantly lower percentage of students achieving a H2 or H1, although we do see a slight increase in those getting H4 and H3 grades. There were some problems with one of the Maths papers and perhaps marks were not adjusted due to those issues, which doesn’t seem right to me as something should have been done. But perhaps a decision was made to allow this step back in Maths to reduce the number of students achieving the top points of 625, avoiding the scenario where those students do not get their first-choice course for university.

The SEC have said the following about the marking of Maths Paper 1: “This process resulted in a marking scheme that was at the more lenient end of the normal range”. Even with more lenient marking and grade adjustments, the profile of marks has taken a step backwards. This does raise questions about how lenient they were or not, and whether the post-marking adjustments took into account last year’s profile or whether they are looking to step back to pre-Covid profiles.

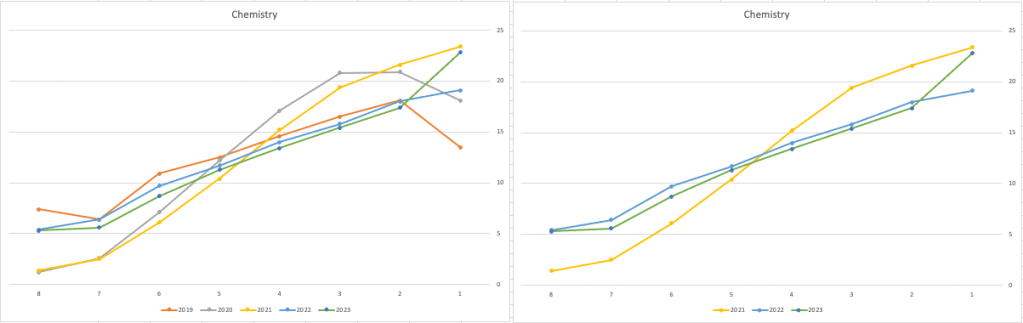

When we look at the Science subjects of Physics, Chemistry and Biology, we can see the profile of marks for this year is broadly in line with last year’s (2022). When looking at the profiles for these subjects, we can see that they are very similar to the pre-Covid profiles, although there are some minor differences. We are still seeing an increased level of H1s across these subjects when compared to pre-Covid 2019 levels, with lower grades having a slightly smaller percentage when compared to the pre-Covid 2019 level. Overall, the 2022 and 2023 profiles are broadly in line with 2019 (with some minor variations).

There are more subjects to report upon, but those listed above will cover most students.

What do these results and profiles of marks mean for students looking to go to University or further education, where the courses are based on the CAO Points system? It looks like the step back in the grade profile for Maths will have the biggest impact on CAO Points for courses. This will particularly impact those courses that were in the 525-625 range in 2022. We could see a small drop in points for courses in that range, with a possible drop of up to 10 points for some of those courses.

For courses in the 400-520 range in 2022, we might see a small increase in points. Again this might be due to the profile of marks in Maths, but also in some of the other optional subjects. This year we could see a slight increase of 5-15 points for those courses.

Time will tell if these predictions come true, and hopefully every student will get the course they are hoping for as the nervous wait for CAO Round 1 offers commences (Wednesday 30th August). I’ll have another post looking at the CAO Points profiles, so look out for that.

SQL:2023 Standard

In June 2023 the new standard for SQL was released by the International Organization for Standardization (ISO). Although the SQL language has been around since the 1970s, this is the 11th release or update to the SQL standard. The first version of the standard was back in 1986, and the base-level standard for all databases was released in 1992 and is referred to as SQL-92. That’s more than 30 years ago, and some databases still don’t contain all the base features in that standard, although they aim to achieve this sometime in the future.

| Year | Name | Alias | New Features or Enhancements |
|---|---|---|---|
| 1986 | SQL-86 | SQL-87 | This is the first version of the SQL standard by ANSI. Transactions, and Create, Read, Update and Delete operations. |
| 1989 | SQL-89 | | This version includes minor revisions that added integrity constraints. |
| 1992 | SQL-92 | SQL2 | This version includes major revisions to the SQL Language. Considered the base version of SQL. Many database systems, including NoSQL databases, use this standard for the base specification language, with varying degrees of implementation. |
| 1999 | SQL:1999 | SQL3 | This version introduces many new features such as regex matching, triggers, object-oriented features, OLAP capabilities and User Defined Types. The BOOLEAN data type was introduced but it took some time for all the major databases to support it. |
| 2003 | SQL:2003 | | This version contained minor modifications to SQL:1999. SQL:2003 introduces new features such as window functions, columns with auto-generated values, identity specification and the addition of XML. |
| 2006 | SQL:2006 | | This version defines ways of importing, storing and manipulating XML data. Use of XQuery to query data in XML format. |
| 2008 | SQL:2008 | | This version adds features such as the TRUNCATE TABLE statement, INSTEAD OF triggers and the FETCH clause. |
| 2011 | SQL:2011 | | This version adds enhancements for window functions and the FETCH clause, and Temporal data. |
| 2016 | SQL:2016 | | This version adds various functions to work with JSON data and Polymorphic Table Functions. |
| 2019 | SQL:2019 | | This version specifies a multi-dimensional array data type. |
| 2023 | SQL:2023 | | This version contains minor updates to SQL functions to bring them in line with how databases have implemented them. New JSON updates to include a new JSON data type with simpler dot notation processing. The main new addition to the standard is Property Graph Query (PGQ), which defines ways for the SQL language to represent property graphs and to interact with them. |

The Property Graph Query (PGQ) features have been added as a new part of the standard, labelled Part 16: Property Graph Queries (SQL/PGQ). You can purchase the document for €191, or you can read and scroll through the preview here.

The other SQL updates reflect how the various (mainstream) database vendors (PostgreSQL, MySQL, Oracle, SQL Server) have implemented various functions. The standard is catching up with what is happening across the industry. This can also be seen in some of the earlier releases.

The following blog posts give a good overview of the SQL changes in the SQL:2023 standard.

Although SQL:2023 has been released, there are already some discussions about the next release of the standard. Although SQL/PGQ has been introduced, it also looks like (from various reports) some parts of SQL/PGQ were dropped and not included. More work is needed on these elements and they will be included in the next release.

Also for the next release, there will be more JSON functionality included, with a particular focus on JSON Schemas. Yes, you read that correctly. There is a realisation that a schema is a good thing, and when JSON objects can consist of up to 80% meta-data, a schema will have significant benefits for storage, retrieval and processing.

They are also looking at how to incorporate Streaming data. We can see some examples of this kind of processing in other languages and tools (Spark SQL, etc.).

The SQL Standard is still being actively developed and we should see another update in a few years’ time.

Oracle 23c DBMS_SEARCH – Ubiquitous Search

One of the new PL/SQL packages with Oracle 23c is DBMS_SEARCH. This can be used for indexing (and searching) multiple schema objects in a single index.

Check out the documentation for DBMS_SEARCH.

This type of index is a little different to your traditional index. With DBMS_SEARCH we can create an index across multiple schema objects using just a single index. This gives us greater indexing capabilities for scenarios where we need to search data across multiple objects. You can create a ubiquitous search index on multiple columns of a table or multiple columns from different tables in a given schema. All done using one index, rather than having to use multiples. Because of this wider search capability, you will see this (DBMS_SEARCH) being referred to as a Ubiquitous Search Index. A ubiquitous search index is a JSON search index and can be used for full-text and range-based searches.

To create the index, you will first define the name of the index, and then add the different schema objects (tables, views) to it. The main commands for creating the index are:

- DBMS_SEARCH.CREATE_INDEX

- DBMS_SEARCH.ADD_SOURCE

Note: Each table used in the ADD_SOURCE must have a primary key.
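If you prefer to drive these two calls from Python, a minimal sketch could look like the following. It assumes a DB-API style cursor that offers `callproc`, as the python-oracledb driver does; the `build_search_index` helper and the `RecordingCursor` stand-in are hypothetical names introduced here so the example can run without a database. With a real connection you would pass `connection.cursor()` instead.

```python
def build_search_index(cursor, index_name, source_tables):
    """Create a ubiquitous search index, then add each table as a source."""
    cursor.callproc("DBMS_SEARCH.CREATE_INDEX", [index_name])
    for table_name in source_tables:
        # Each table added here must have a primary key (see the note above).
        cursor.callproc("DBMS_SEARCH.ADD_SOURCE", [index_name, table_name])


class RecordingCursor:
    """Hypothetical stand-in that records calls instead of running them."""

    def __init__(self):
        self.calls = []

    def callproc(self, name, parameters=None):
        self.calls.append((name, list(parameters or [])))


cursor = RecordingCursor()
build_search_index(cursor, "HR_INDEX", ["EMPLOYEES"])
for call in cursor.calls:
    print(call)
```

Keeping the index name and the list of tables as parameters makes it easy to rebuild the same index in another schema.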

The following is an example of using this type of index using the HR schema/data set.

exec dbms_search.create_index('HR_INDEX');

This just creates the index header.

Important: For each index created using this method it will create a table with the Index name in your schema. It will also create fourteen DR$ tables in your schema. SQL Developer filtering will help to hide these and minimise the clutter.

select table_name from user_tables;

...

HR_INDEX

DR$HR_INDEX$I

DR$HR_INDEX$K

DR$HR_INDEX$N

DR$HR_INDEX$U

DR$HR_INDEX$Q

DR$HR_INDEX$C

DR$HR_INDEX$B

DR$HR_INDEX$SN

DR$HR_INDEX$SV

DR$HR_INDEX$ST

DR$HR_INDEX$G

DR$HR_INDEX$DG

DR$HR_INDEX$KG

To add the contents and search space to the index we need to use ADD_SOURCE. In the following, I’m adding two tables to the index.

exec DBMS_SEARCH.ADD_SOURCE('HR_INDEX', 'EMPLOYEES');

NOTE: At the time of writing this post some of the client tools and libraries do not support the JSON datatype fully. If they did, you could just query the index metadata, but until such time as all tools and libraries fully support the data type, you will need to use the JSON_SERIALIZE function to translate the metadata. If you query the metadata and get no data returned, then try using this function to get the data.

Running a simple select from the index might give you an error due to the JSON type not being fully implemented in the client software. (This will change with time.)

select * from HR_INDEX;

But if we do a count from the index, we can get the number of objects it contains.

select count(*) from HR_INDEX;

COUNT(*)

___________

107

We can view what data is indexed by viewing the virtual document.

select json_serialize(DBMS_SEARCH.GET_DOCUMENT('HR_INDEX',METADATA))

from HR_INDEX;

JSON_SERIALIZE(DBMS_SEARCH.GET_DOCUMENT('HR_INDEX',METADATA))

___________________________________________________________________________________________________________________________________________________________________________________________________________________________________________________________________________

{"HR":{"EMPLOYEES":{"PHONE_NUMBER":"515.123.4567","JOB_ID":"AD_PRES","SALARY":24000,"COMMISSION_PCT":null,"FIRST_NAME":"Steven","EMPLOYEE_ID":100,"EMAIL":"SKING","LAST_NAME":"King","MANAGER_ID":null,"DEPARTMENT_ID":90,"HIRE_DATE":"2003-06-17T00:00:00"}}}

{"HR":{"EMPLOYEES":{"PHONE_NUMBER":"515.123.4568","JOB_ID":"AD_VP","SALARY":17000,"COMMISSION_PCT":null,"FIRST_NAME":"Neena","EMPLOYEE_ID":101,"EMAIL":"NKOCHHAR","LAST_NAME":"Kochhar","MANAGER_ID":100,"DEPARTMENT_ID":90,"HIRE_DATE":"2005-09-21T00:00:00"}}}

{"HR":{"EMPLOYEES":{"PHONE_NUMBER":"515.123.4569","JOB_ID":"AD_VP","SALARY":17000,"COMMISSION_PCT":null,"FIRST_NAME":"Lex","EMPLOYEE_ID":102,"EMAIL":"LDEHAAN","LAST_NAME":"De Haan","MANAGER_ID":100,"DEPARTMENT_ID":90,"HIRE_DATE":"2001-01-13T00:00:00"}}}

{"HR":{"EMPLOYEES":{"PHONE_NUMBER":"590.423.4567","JOB_ID":"IT_PROG","SALARY":9000,"COMMISSION_PCT":null,"FIRST_NAME":"Alexander","EMPLOYEE_ID":103,"EMAIL":"AHUNOLD","LAST_NAME":"Hunold","MANAGER_ID":102,"DEPARTMENT_ID":60,"HIRE_DATE":"2006-01-03T00:00:00"}}}

{"HR":{"EMPLOYEES":{"PHONE_NUMBER":"590.423.4568","JOB_ID":"IT_PROG","SALARY":6000,"COMMISSION_PCT":null,"FIRST_NAME":"Bruce","EMPLOYEE_ID":104,"EMAIL":"BERNST","LAST_NAME":"Ernst","MANAGER_ID":103,"DEPARTMENT_ID":60,"HIRE_DATE":"2007-05-21T00:00:00"}}}

We can search the metadata for certain data using the CONTAINS or JSON_TEXTCONTAINS functions.

select json_serialize(metadata)

from HR_INDEX

where contains(data, 'winston')>0;

select json_serialize(metadata)

from HR_INDEX

where json_textcontains(data, '$.HR.EMPLOYEES.FIRST_NAME', 'Winston');

When the index is no longer required it can be dropped by running the following. Don’t run a DROP INDEX command, as that removes some objects and leaves others behind (it leaves a bit of a mess) and you won’t be able to recreate the index unless you give it a different name.

exec dbms_search.drop_index('HR_INDEX');

EU AI Act adopts OECD Definition of AI

Over recent months, the EU AI Act has been making progress through the various hoops in the EU. Various committees and working groups have examined different parts of the AI Act and how it will impact the wider population. Their recommendations have been added to the EU AI Act and it has now progressed to the next stage for ratification in the EU Parliament, which should happen in a few months’ time.

There are lots of terms within the EU AI Act which needed defining, the most crucial being the definition of AI, as this definition underpins the entire act and all the other definitions of terms throughout it. Back in March of this year, the various political groups working on the EU AI Act reached an agreement on the definition of AI (Artificial Intelligence). The EU AI Act adopts, or is based on, the OECD definition of AI.

“‘Artificial intelligence system’ (AI system) means a machine-based system that is designed to operate with varying levels of autonomy and that can, for explicit or implicit objectives, generate output such as predictions, recommendations, or decisions influencing physical or virtual environments”

The working groups wanted the AI definition to be closely aligned with the work of international organisations working on artificial intelligence to ensure legal certainty, harmonisation and wide acceptance. The reference to predictions includes content; this is to ensure generative AI models like ChatGPT are included in the regulation.

Other definitions included are significant risk, biometric authentication and identification.

“‘Significant risk’ means a risk that is significant in terms of its severity, intensity, probability of occurrence, duration of its effects, and its ability to affect an individual, a plurality of persons or to affect a particular group of persons,” the document specifies.

Remote biometric verification systems were defined as AI systems used to verify the identity of persons by comparing their biometric data against a reference database with their prior consent. That is distinguished from an authentication system, where the persons themselves ask to be authenticated.

On biometric categorisation, a practice recently added to the list of prohibited use cases, a reference was added to inferring personal characteristics and attributes like gender or health.

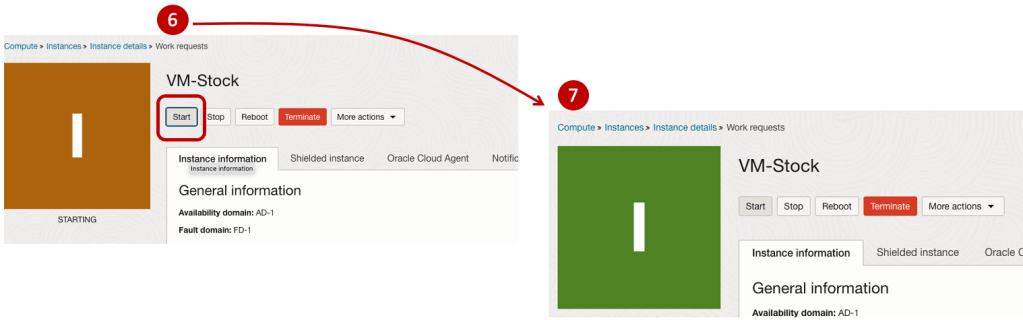

Machine Learning App Migration to Oracle Cloud VM

Over the past few years, I’ve been developing a Stock Market prediction algorithm and made some critical refinements to it earlier this year. As with all analytics, data science, machine learning and AI projects, testing is vital to ensure its performance, accuracy and sustainability. Taking such a project out of a lab environment and putting it into a production setting introduces all sorts of different challenges. Some of these challenges include being able to self-manage its own process, logging, traceability, error and event management, etc. Automation is key, and implementing all of these extra requirements takes way more code and time than developing the actual algorithm. Typically, the machine learning and algorithm code accounts for less than five percent of the actual code, and in some cases it can be less than one percent!

I’ve come to the stage of deploying my App to a production-type environment, as I’ve been running it from my laptop and then a desktop for over a year now. It’s now 100% self-managing so it’s time to deploy. The environment I’ve chosen is using one of the Virtual Machines (VM) available on the Oracle Free Tier. This means it won’t cost me a cent (dollar or more) to run my App 24×7.

My App has three different components which use a core underlying machine learning predictions engine. Each is focused on a different set of stock markets. These markets operate in the different time zones of the US, European and Asian markets. Each will run on a slightly different schedule than the rest.

The steps outlined below take you through what I had to do to get my App up and running on the VM (Oracle Free Tier). It took about 20 minutes to complete everything.

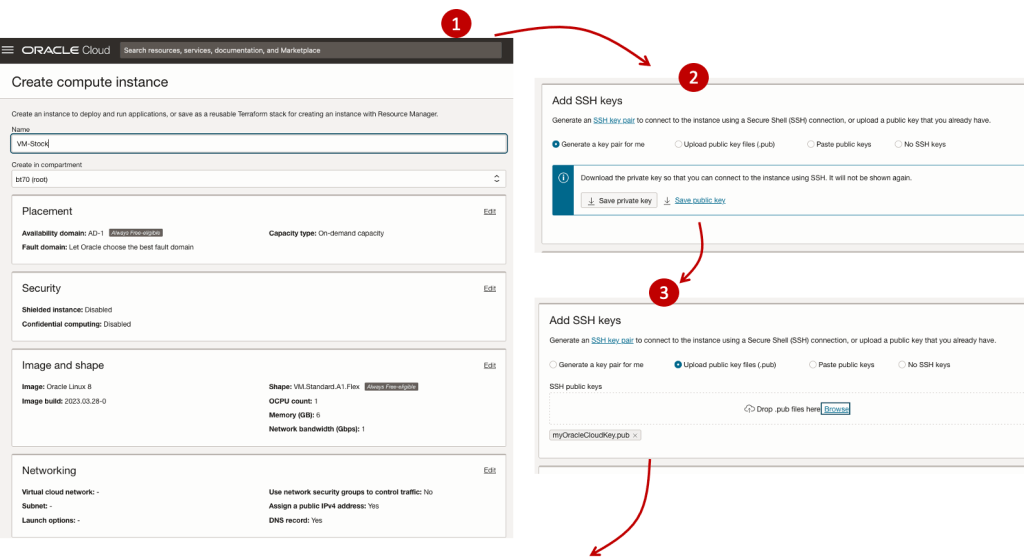

The first thing you need to do is create an SSH key file. There are a number of ways of doing this and the following is an example.

ssh-keygen -t rsa -N "" -b 2048 -C "myOracleCloudkey" -f myOracleCloudkey

This key file will be used during the creation of the VM and for logging into the VM.

Log into your Oracle Cloud account and find the option to create a Compute instance, i.e. create a virtual machine.

Complete the Create Instance form and upload the SSH public key file (myOracleCloudkey.pub) you created earlier. Then click the Create button. This assumes you have networking already created.

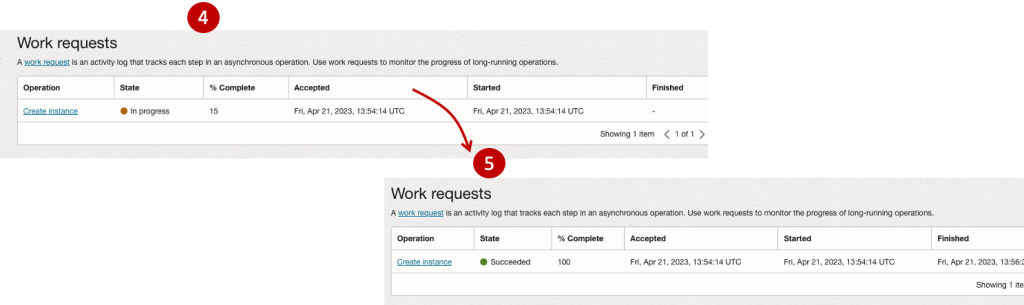

It will take a minute or two for the VM to be created and you can monitor the progress.

After it has been created you need to click on the start button to start the VM.

After it has started you can log into the VM from a terminal window, using the public IP address:

ssh -i myOracleCloudkey opc@xxx.xxx.xxx.xxx

After you’ve logged into the VM it’s a good idea to run an update.

[opc@vm-stocks ~]$ sudo yum -y update

Last metadata expiration check: 0:13:53 ago on Fri 21 Apr 2023 14:39:59 GMT.

Dependencies resolved.

========================================================================================================================

Package Arch Version Repository Size

========================================================================================================================

Installing:

kernel-uek aarch64 5.15.0-100.96.32.el8uek ol8_UEKR7 1.4 M

kernel-uek-core aarch64 5.15.0-100.96.32.el8uek ol8_UEKR7 47 M

kernel-uek-devel aarch64 5.15.0-100.96.32.el8uek ol8_UEKR7 19 M

kernel-uek-modules aarch64 5.15.0-100.96.32.el8uek ol8_UEKR7 59 M

Upgrading:

NetworkManager aarch64 1:1.40.0-6.0.1.el8_7 ol8_baseos_latest 2.1 M

NetworkManager-config-server noarch 1:1.40.0-6.0.1.el8_7 ol8_baseos_latest 141 k

NetworkManager-libnm aarch64 1:1.40.0-6.0.1.el8_7 ol8_baseos_latest 1.9 M

NetworkManager-team aarch64 1:1.40.0-6.0.1.el8_7 ol8_baseos_latest 156 k

NetworkManager-tui aarch64 1:1.40.0-6.0.1.el8_7 ol8_baseos_latest 339 k

...

...

The VM is now ready to set up and install my App. The first step is to install Python, as all my code is written in Python.

[opc@vm-stocks ~]$ sudo yum install -y python39

Last metadata expiration check: 0:20:35 ago on Fri 21 Apr 2023 14:39:59 GMT.

Dependencies resolved.

========================================================================================================================

Package Architecture Version Repository Size

========================================================================================================================

Installing:

python39 aarch64 3.9.13-2.module+el8.7.0+20879+a85b87b0 ol8_appstream 33 k

Installing dependencies:

python39-libs aarch64 3.9.13-2.module+el8.7.0+20879+a85b87b0 ol8_appstream 8.1 M

python39-pip-wheel noarch 20.2.4-7.module+el8.6.0+20625+ee813db2 ol8_appstream 1.1 M

python39-setuptools-wheel noarch 50.3.2-4.module+el8.5.0+20364+c7fe1181 ol8_appstream 497 k

Installing weak dependencies:

python39-pip noarch 20.2.4-7.module+el8.6.0+20625+ee813db2 ol8_appstream 1.9 M

python39-setuptools noarch 50.3.2-4.module+el8.5.0+20364+c7fe1181 ol8_appstream 871 k

Enabling module streams:

python39 3.9

Transaction Summary

========================================================================================================================

Install 6 Packages

Total download size: 12 M

Installed size: 47 M

Downloading Packages:

(1/6): python39-pip-20.2.4-7.module+el8.6.0+20625+ee813db2.noarch.rpm 23 MB/s | 1.9 MB 00:00

(2/6): python39-pip-wheel-20.2.4-7.module+el8.6.0+20625+ee813db2.noarch.rpm 5.5 MB/s | 1.1 MB 00:00

...

...

Next, copy the code to the VM, set up the environment variables and create any directories required for logging. The final part of this is to download the connection Wallet for the Database. I’m using the Python library oracledb, as this requires no additional setup.
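As a rough illustration of that last part, here is a minimal sketch of how the connection settings could be read from environment variables and handed to oracledb in Thin mode. The environment variable names (DB_USER, DB_PASSWORD, DB_DSN, WALLET_DIR, WALLET_PASSWORD) and the two helper functions are placeholders made up for this example, so adjust them to match your own setup.

```python
import os


def build_connect_params(env=os.environ):
    """Collect connection settings from environment variables (names are placeholders)."""
    wallet_dir = env["WALLET_DIR"]  # directory where the wallet zip was extracted
    return {
        "user": env["DB_USER"],
        "password": env["DB_PASSWORD"],
        "dsn": env["DB_DSN"],            # a TNS alias found in the wallet's tnsnames.ora
        "config_dir": wallet_dir,        # location of tnsnames.ora
        "wallet_location": wallet_dir,   # location of ewallet.pem
        "wallet_password": env["WALLET_PASSWORD"],
    }


def connect(env=os.environ):
    """Open a Thin mode connection using the settings above."""
    import oracledb  # imported here so build_connect_params() works without the library

    return oracledb.connect(**build_connect_params(env))
```

Keeping the credentials in environment variables means the same script can run on a laptop and on the VM without any code changes.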

Then install all the necessary Python libraries used in the code, for example pandas, matplotlib, tabulate, seaborn, telegram, etc. (this is just a subset of what I needed). For example, here is the command to install pandas.

pip3.9 install pandas

After all of that, it’s time to test the setup to make sure everything runs correctly.
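One quick way to test the setup is to check that every library the code imports can actually be found by the Python interpreter on the VM. The following is a minimal sketch using only the standard library. The function name and the list of module names are assumptions for this example (the import name of a library is not always the same as its pip package name).

```python
import importlib.util


def missing_modules(names):
    """Return the top-level module names that cannot be found by this interpreter."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    # Illustrative list only; replace with the modules your own code imports.
    required = ["oracledb", "pandas", "matplotlib", "tabulate", "seaborn", "telegram"]
    missing = missing_modules(required)
    print("Missing:", ", ".join(missing) if missing else "none")
```

Run it with the same interpreter that cron will use (python3.9 in my case), otherwise the check may pass for a different Python installation.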

The final step is to schedule the App/Code to run. Before setting the schedule, do a quick test to see what timezone the VM is using by running the date command. In my case, the VM is running on GMT, which was one hour off my local time. Allowing for this adjustment, and for daylight saving time, the run times can be set relative to the market opening hours. The following example illustrates setting up crontab to run the App Monday to Friday, between 13:00 and 22:00, at 5-minute intervals. Open crontab and edit the schedule and command. The following is an example.

> crontab -e

*/5 13-22 * * 1-5 python3.9 /home/opc/Stocks.py >Stocks.txt

For some stock market trading apps, you might want it to run more frequently (than every 5 minutes) or less frequently, depending on your strategy.
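An alternative to adjusting the crontab hours for daylight saving time is to let the script check the market's local time itself and exit early when the market is closed. The sketch below shows the idea using the standard zoneinfo module (available from Python 3.9). The function name, the New York timezone and the 09:30 to 16:00 trading hours are assumptions for illustration and are not taken from my App.

```python
from datetime import datetime, time, timezone
from zoneinfo import ZoneInfo


def market_is_open(now_utc, market_tz, open_at, close_at):
    """Return True if now_utc falls on a weekday within the market's local hours."""
    local = now_utc.astimezone(ZoneInfo(market_tz))
    if local.weekday() >= 5:  # 5 = Saturday, 6 = Sunday
        return False
    return open_at <= local.time() <= close_at


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    if market_is_open(now, "America/New_York", time(9, 30), time(16, 0)):
        print("Market open: run the trading logic")
    else:
        print("Market closed: nothing to do")
```

With a check like this the cron schedule can be a little wider than needed, as the timezone database takes care of the daylight saving switch. It does not know about public holidays.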

After scheduling the components for each of the Geographic Stock Market areas, the instant messaging of trades started to appear within a couple of minutes. After a little monitoring and validation checking, it was clear everything was running as expected. It was time to sit back and relax and see how this adventure unfolds.

For anyone interested, the App does automated trading with different brokers across the markets, while logging all events and trades to an Oracle Autonomous Database (Free Tier = no cost), and sends instant messages to me notifying me of the automated trades. All I have to do is Nothing, yes Nothing, other than monitor the trade notifications. I mentioned earlier the importance of testing, and with back-testing of the recent changes/improvements (as of the date of this post), the App has given a minimum of 84% annual return each year for the past 15 years. Most years the return has been a lot more!

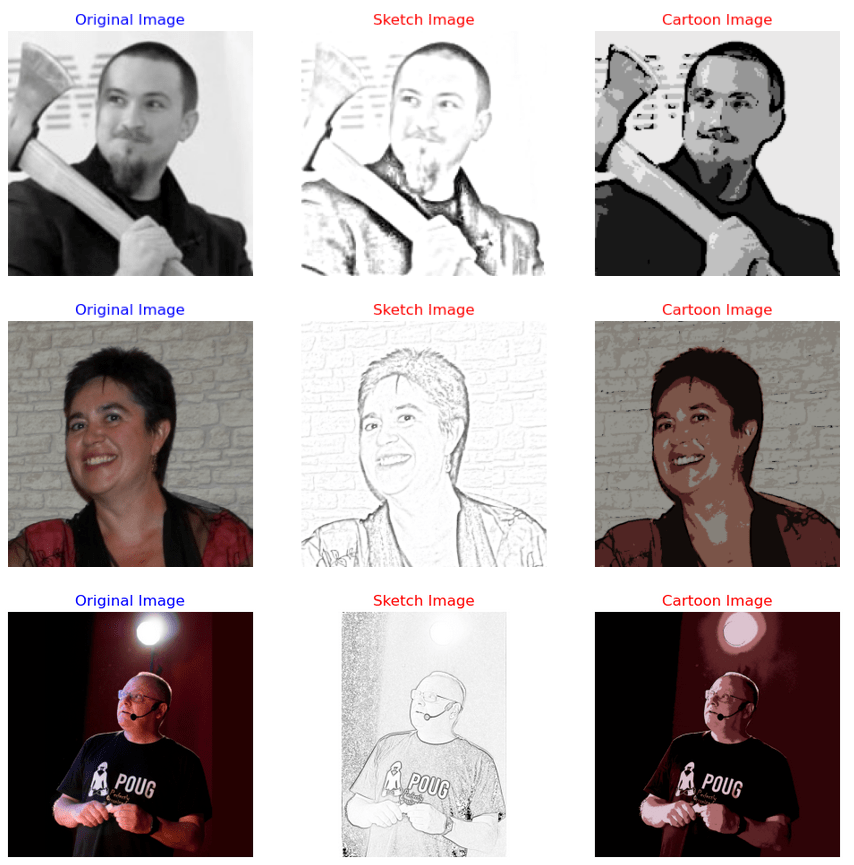

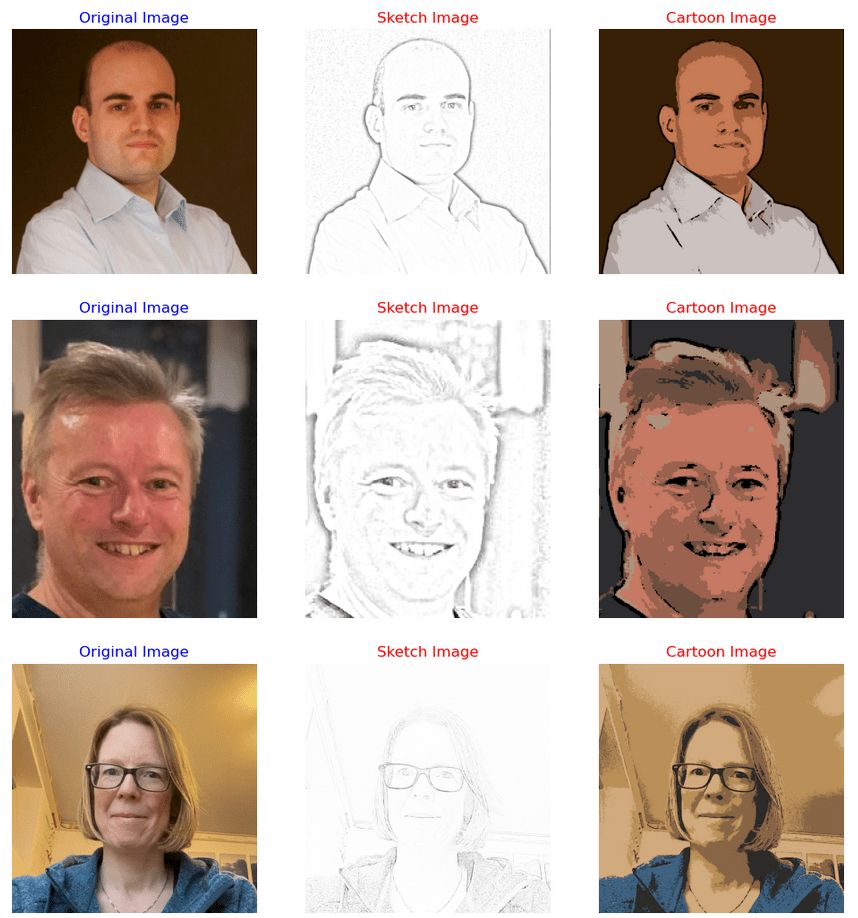

Image Augmentation (Pencil & Cartoon) with OpenCV #SYM42

OpenCV has been with us for over two decades and provides us with a rich open-source library for performing image processing.

In this post I’m going to illustrate how you can use it to convert images (of people) into pencil sketches and cartoon images. As with most examples you find on such technologies, there are things it is good at and some things it isn’t good at. The typical IT phrase, “It Depends”, comes into play with image processing. What might work with one set of images might not work as well with others.

The example images below consist of the Board of a group called SYM42, or Symposium42. Yes, they said I could use their images and show the output from using OpenCV 🙂 This group was formed by a community to support the community, was born out of an Oracle Community but is now supporting other technologies. They are completely independent of any Vendor which means they can be 100% honest about which aspects of any product do or do not work and are not influenced by the current sales or marketing direction of any company. Check out their About page.

Let’s get started. After downloading the images to process, let’s view them.

import cv2

import matplotlib.pyplot as plt

import numpy as np

dir = '/Users/brendan.tierney/Dropbox/6-Screen-Background/'

file = 'SYM42-Board-Martin.jpg'

image = cv2.imread(dir+file)

img_name = 'Original Image'

#Show the image with matplotlib

#plt.imshow(image)

#OpenCV uses BGR color scheme whereas matplotlib uses RGB colors scheme

#convert BGR image to RGB by using the following

plt.imshow(cv2.cvtColor(image, cv2.COLOR_BGR2RGB))

plt.axis(False)

plt.show()

I’m using Jupyter Notebooks for this work. In the above code, you’ll see I’ve commented out the line [#plt.imshow(image)]. Passing the OpenCV image straight to Matplotlib like this doesn’t display correctly, because OpenCV stores colours in BGR order while Matplotlib expects RGB. Instead, the image is converted to RGB first and then displayed with Matplotlib.

To convert to a pencil sketch, we need to convert the image to grey scale, invert it, apply a Gaussian Blur, invert the blurred image, and then divide the grey scale image by the inverted blurred image (element by element) to get the final pencil sketch.
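The division step is what produces the pencil effect, so it is worth looking at what it does to a single pixel. The following is a minimal pure-Python sketch of that arithmetic. The function name dodge is made up for this example, and it only approximates what cv2.divide does for 8-bit images (scale, round, clip to 255, and return 0 when dividing by zero). Because inverting, blurring and inverting again gives roughly the same result as blurring the grey image, the second argument can be read as the average brightness of the pixel's neighbourhood.

```python
def dodge(grey, inv_blur, scale=256.0):
    """Divide one 8-bit pixel by its blurred neighbourhood value, clipped to 0..255."""
    if inv_blur == 0:
        return 0  # OpenCV gives 0 for integer division by zero
    return min(255, round(grey * scale / inv_blur))


# Flat area: the pixel matches its neighbourhood, so the result clips to white.
print(dodge(100, 100))  # 255

# Edge or line: the pixel is darker than its neighbourhood, so it stays grey.
print(dodge(50, 100))   # 128
```

This is why smooth areas of the photo turn white while edges and fine detail survive as pencil-like lines, and why a larger blur kernel changes how strong the lines appear. The full OpenCV version follows.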

#convert to grey scale

#cvtColor function

grey_image = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)

#invert the image

invert_image = cv2.bitwise_not(grey_image)

#apply Gaussian Blur : adjust values until you get pencilling effect required

blur_image=cv2.GaussianBlur(invert_image, (21,21),0) #111,111

#Invert Blurred Image

#Repeat previous step

invblur_image=cv2.bitwise_not(blur_image)

#The sketch can be obtained by performing element-wise division between

# the grayscale image and the inverted-blurred image.

sketch_image=cv2.divide(grey_image, invblur_image, scale=256.0)

#display the pencil sketch (single-channel image, so convert GRAY to RGB)

plt.imshow(cv2.cvtColor(sketch_image, cv2.COLOR_GRAY2RGB))

plt.axis(False)

plt.show()

The following code listing contains the same as above and also includes the code to convert to a cartoon style.

import os

import glob

import cv2

import matplotlib.pyplot as plt

import numpy as np

def edge_mask(img, line_size, blur_value):

    gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)

    gray_blur = cv2.medianBlur(gray, blur_value)

    edges = cv2.adaptiveThreshold(gray_blur, 255, cv2.ADAPTIVE_THRESH_MEAN_C, cv2.THRESH_BINARY, line_size, blur_value)

    return edges

def cartoon_image(img_cartoon):

    img=img_cartoon

    line_size = 7

    blur_value = 7

    edges = edge_mask(img, line_size, blur_value)

    #Clustering - (K-MEANS)

    imgf=np.float32(img).reshape(-1,3)

    criteria=(cv2.TERM_CRITERIA_EPS+cv2.TERM_CRITERIA_MAX_ITER,20,1.0)

    compactness,label,center=cv2.kmeans(imgf,5,None,criteria,10,cv2.KMEANS_RANDOM_CENTERS)

    center=np.uint8(center)

    final_img=center[label.flatten()]

    final_img=final_img.reshape(img.shape)

    cartoon=cv2.bitwise_and(final_img,final_img,mask=edges)

    return cartoon

def sketch_image(image_file, blur):

    import_image = cv2.imread(image_file)

    #cvtColor function

    grey_image = cv2.cvtColor(import_image, cv2.COLOR_BGR2GRAY)

    #invert the image

    invert_image = cv2.bitwise_not(grey_image)

    #blur must be an odd number

    blur_image=cv2.GaussianBlur(invert_image, (blur,blur),0) #111,111

    #Invert Blurred Image

    #Repeat previous step

    invblur_image=cv2.bitwise_not(blur_image)

    sketch_img=cv2.divide(grey_image, invblur_image, scale=256.0)

    cartoon_img=cartoon_image(import_image)

    #plot images

    # plt.figure(figsize=(9,6))

    plt.rcParams["figure.figsize"] = (12,8)

    #plot cartoon

    plt.subplot(1,3,3)

    plt.imshow(cv2.cvtColor(cartoon_img, cv2.COLOR_BGR2RGB))

    plt.title('Cartoon Image', size=12, color='red')

    plt.axis(False)

    #plot sketch (single-channel image, so convert GRAY to RGB)

    plt.subplot(1,3,2)

    plt.imshow(cv2.cvtColor(sketch_img, cv2.COLOR_GRAY2RGB))

    plt.title('Sketch Image', size=12, color='red')

    plt.axis(False)

    #plot original image

    plt.subplot(1,3,1)

    plt.imshow(cv2.cvtColor(import_image, cv2.COLOR_BGR2RGB))

    plt.title('Original Image', size=12, color='blue')

    plt.axis(False)

    #plot show

    plt.show()

for filepath in glob.iglob(dir+'SYM42-Board*.*'):

    #print(filepath)

    #import_image = cv2.imread(dir+file)

    sketch_image(filepath, 23)

For the SYM42 Board members, we get the following output.

As you can see from these images, some are converted in a way you would expect, while others seem to show little effect.

Thanks to the Board of SYM42 for allowing me to use their images.

EU Digital Services Act

The Digital Services Act (DSA) applies to a wide variety of online services, ranging from websites to social networks and online platforms, with a view to “creating a safer digital space in which the fundamental rights of all users of digital services are protected”.

In December 2020, the European Commission proposed new legislation called the Digital Services Act (DSA) to regulate the activities of tech companies operating within the EU. The aim of the DSA is to create a safer and more transparent online environment for EU citizens by imposing new rules and responsibilities on digital service providers. This includes online platforms such as social media, search engines, e-commerce sites, and cloud services. The provisions in the DSA will apply from 17th February 2024, thus giving affected parties time to ensure compliance.

The DSA aims to address a number of issues related to digital services, including:

- Ensuring that digital service providers take responsibility for the content on their platforms and that they have effective measures in place to combat illegal content, such as hate speech, terrorist content, and counterfeit goods.

- Requiring digital service providers to be more transparent about their advertising practices, and to disclose more information about the algorithms they use to recommend content.

- Introducing new rules for online marketplaces to prevent the sale of unsafe products and to ensure that consumers are protected when buying online.

- Strengthening the powers of national authorities to enforce these rules and to hold digital service providers accountable for any violations.

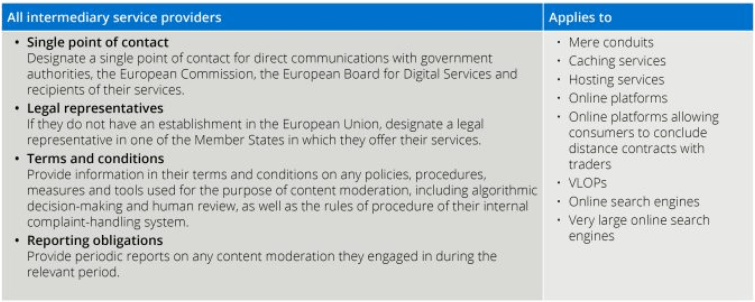

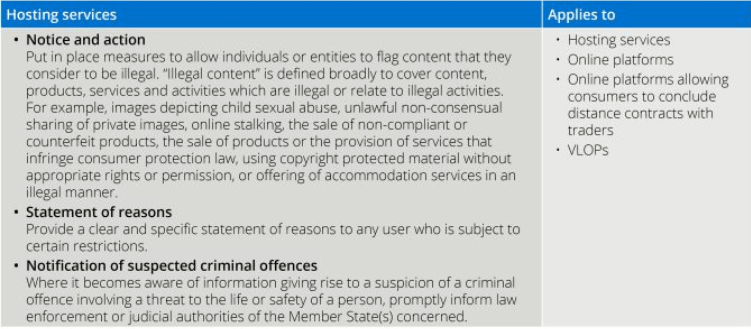

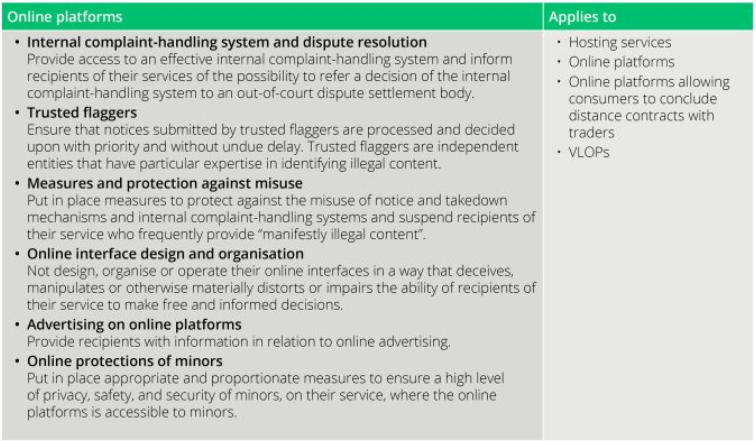

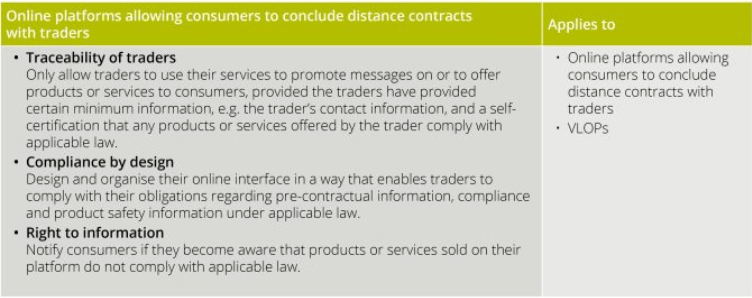

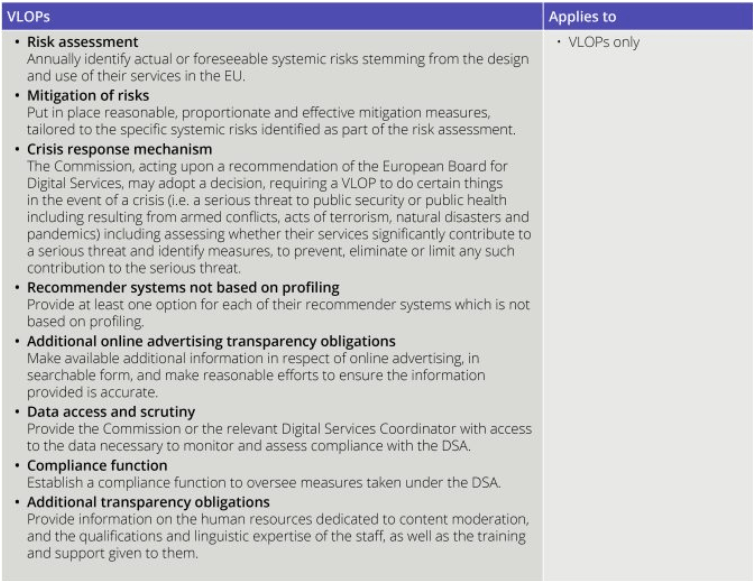

The DSA takes a layered approach to regulation. The basic obligations under the DSA apply to all online intermediary service providers, and additional obligations apply to providers in other categories, with the heaviest regulation applying to very large online platforms (VLOPs) and very large online search engines (VLOSEs).

The four categories are:

- Intermediary service providers are online services which consist of a “mere conduit” service, a “caching” service, or a “hosting” service. Examples include online search engines, wireless local area networks, cloud infrastructure services, or content delivery networks.

- Hosting services are intermediary service providers who store information at the request of the service user. Examples include cloud services and services enabling sharing information and content online, including file storage and sharing.

- Online Platforms are hosting services which also disseminate the information they store to the public at the user’s request. Examples include social media platforms, message boards, app stores, online forums, metaverse platforms, online marketplaces and travel and accommodation platforms.

- (a) VLOPs are online platforms having more than 45 million active monthly users in the EU (representing 10% of the population of the EU). (b) VLOSEs are online search engines having more than 45 million active monthly users in the EU (representing 10% of the population of the EU).

Arthur Cox provide a useful table of obligations for each of these categories.

Morse Code with Python

Morse code is a method used in telecommunication to encode text characters as standardized sequences of two different signal durations, called dots and dashes. Morse code is named after Samuel Morse, one of the inventors of the telegraph (Wikipedia).

The example code below illustrates taking input from the terminal, converting it into Morse code, playing the Morse code sound signal, then converting the Morse code back into plain text and printing this to the screen. This is a base set of code you can use, and it can be easily extended to make it more interactive.

When working with sound and audio in Python, there are lots of different libraries available. One of the challenges is picking a suitable one that is still actively supported. One of the most commonly referenced libraries is winsound, but that is for Windows-based computers only. Not everyone uses Windows machines, and I use a Mac, so winsound wasn’t an option. I selected the playsound library, mainly based on how commonly referenced it is.

To play the dots and dashes, I needed some sound files, and these were originally sourced from Wikimedia. The sound files are available on Wikimedia, but they come in ogg format. I’ve converted the dot and dash files to mp3 files and these can be downloaded from here: dot download and dash download. I also included an Error sound file in my code for when an error occurs! Error download.

When you download the code and sound files, you might need to adjust the timing for playing the Morse code sound files, as this might be dependent on your computer.
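If you want a starting point for those timing adjustments, the conventional Morse timing is based on a single unit: a dot lasts one unit, a dash three units, the gap between the dots and dashes of one letter is one unit, the gap between letters is three units and the gap between words is seven units. The following is a minimal sketch of that scheme. The function name schedule, the nested-list input format and the unit parameter are assumptions for this example. My own code further down uses simpler fixed pauses based on tBetween, and the sound files have their own length.

```python
DOT, DASH = 1, 3                               # tone lengths, in units
GAP_ELEMENT, GAP_LETTER, GAP_WORD = 1, 3, 7    # silence lengths, in units


def schedule(words, unit=0.1):
    """Yield (kind, seconds) pairs for a message.

    words is a list of words, each word being a list of Morse letters,
    for example [["....", ".."]] for "hi".
    """
    for w, word in enumerate(words):
        if w:
            yield ("silence", GAP_WORD * unit)
        for l, letter in enumerate(word):
            if l:
                yield ("silence", GAP_LETTER * unit)
            for e, element in enumerate(letter):
                if e:
                    yield ("silence", GAP_ELEMENT * unit)
                yield ("tone", (DOT if element == "." else DASH) * unit)


if __name__ == "__main__":
    # The letter "a" (.-) with a unit of 1 second
    for kind, seconds in schedule([[".-"]], unit=1):
        print(kind, seconds)
```

Changing the single unit value speeds up or slows down the whole message while keeping the proportions between dots, dashes and gaps.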

The Morse code mapping was set up as a dictionary, and a reverse mapping of this dictionary was used to translate Morse code into plain text.

import time

from playsound import playsound

toMorse = {'a': ".-", 'b': "-...",

'c': "-.-.", 'd': "-..",

'e': ".", 'f': "..-.",

'g': "--.", 'h': "....",

'i': "..", 'j': ".---",

'k': "-.-", 'l': ".-..",

'm': "--", 'n': "-.",

'o': "---", 'p': ".--.",

'q': "--.-", 'r': ".-.",

's': "...", 't': "-",

'u': "..-", 'v': "...-",

'w': ".--", 'x': "-..-",

'y': "-.--", 'z': "--..",

'1': ".----", '2': "..---",

'3': "...--", '4': "....-",

'5': ".....", '6': "-....",

'7': "--...", '8': "---..",

'9': "----.", '0': "-----",

' ': " ", '.': ".-.-.-",

',': "--..--", '?': "..--..",

"'": ".----.", '@': ".--.-.",

'-': "-....-", '"': ".-..-.",

':': "---...", ';': "-.-.-.",

'=': "-...-", '!': "-.-.--",

'/': "-..-.", '(': "-.--.",

')': "-.--.-", 'á': ".--.-",

'é': "..-.."}

#sounds from https://commons.wikimedia.org/wiki/Morse_code

soundPath = "/Users/brendan.tierney/Dropbox/4-Datasets/morse_code_audio/"

#adjust this value to change time between dots/dashes

tBetween = 0.1

def play_morse_beep():

    playsound(soundPath + 'Dot_morse_code.mp3')

    time.sleep(1 * tBetween)

def play_morse_dash():

    playsound(soundPath + 'Dash_morse_code.mp3')

    time.sleep(2 * tBetween)

def play_morse_space():

    time.sleep(2 * tBetween)

def play_morse_error():

    playsound(soundPath + 'Error_invalid_char.mp3')

    time.sleep(2 * tBetween)

def text_to_morse(inStr):

    mStr = ""

    for c in inStr:

        #unmapped characters become '#', which play_morse() plays as an error

        m = toMorse.get(c, '#')

        mStr += m + ' '

    print("morse=",mStr)

    return mStr

def play_morse(inMorse):

    for m in inMorse:

        if m == ".":

            play_morse_beep()

        elif m == "-":

            play_morse_dash()

        elif m == " ":

            play_morse_space()

        else:

            play_morse_error()

#Get Input Text

from colorama import Fore, Back, Style

print(Fore.RED + '')

inputStr = input("Enter text -> morse :").lower() #.strip()

print(Fore.BLACK + '' + inputStr)

mStr = text_to_morse(inputStr)

play_morse(mStr)

play_morse(mStr)

Then to reverse the Morse code.
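One thing to watch out for when reversing a dictionary is that it only works cleanly when every value is unique. If two characters share the same Morse sequence, the later one silently overwrites the earlier one in the reversed dictionary. The following is a minimal sketch of a reversal that checks for this. The function name invert_checked is made up for this example.

```python
from collections import defaultdict


def invert_checked(mapping):
    """Return {value: key}, raising ValueError if any value has more than one key."""
    groups = defaultdict(list)
    for key, value in mapping.items():
        groups[value].append(key)
    clashes = {value: keys for value, keys in groups.items() if len(keys) > 1}
    if clashes:
        raise ValueError(f"values shared by several keys: {clashes}")
    return {value: keys[0] for value, keys in groups.items()}


if __name__ == "__main__":
    print(invert_checked({"a": ".-", "b": "-..."}))
```

Running a check like this once against the mapping is a cheap way to catch a copy and paste mistake in a long dictionary. The simpler reversal I used is shown next.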

#reverse the k,v

mToE = {}

for key, value in toMorse.items():

    mToE[value] = key

def morse_to_english(inStr):

    #letters are separated by one space, words by three spaces

    engWords = []

    for word in inStr.strip().split("   "):

        engWords.append("".join(mToE[c] for c in word.split(" ") if c in mToE))

    return " ".join(engWords)

x=morse_to_english(mStr)

print(x)

Oracle 23c Free – Developer Release

Oracle 23c is finally available, in the form of Oracle 23c FREE – Developer Release. There was lots of excitement in some parts of the IT community about this release, some of which is to do with people having to wait a while for this release, given 22c was never released due to Covid!

But some caution is needed, and the excitement should be reined back a little.

Why? This release isn’t the full bells-and-whistles release of the 23c Database. Several people from Oracle have been emphasizing that the name of this release is Oracle 23c Free – Developer Release. There are a few things to consider with this release. It isn’t the GA (General Availability) release, which is due later this year (maybe). Oracle 23c Free – Developer Release is an early release to allow developers to start playing with various developer-focused new features. Some people have referred to this as the 23c Beta version 2 release, and this can be seen in the DB header information. It could be viewed in a similar way to the XE releases we had previously. XE was always free, so we now have a rename and an emphasis of this. There have been many, many organizations using the XE release to build applications. Also, the XE releases were a no-cost option, or what most people would like to call the FREE version.

For the full 23c Database release we will get even more features, but most of these will probably be for larger enterprise-scale scenarios.

Now it’s time for you to go play with 23c Free – Developer Release. Here are some useful links:

- Product Release Official Announcement

- Post by Gerald Venzl

- See link for Docker installation below

- VirtualBox Virtual Machine

- You want to do it old school – Download RPM files

- New Features Guide

I’ll be writing posts on some of the more interesting new features and I’ll add the links to those below. I’ll also add some links to posts by other people:

- Docker Installation (Intel and Apple Chip)

- 23 Free Virtual Machine

- 23 Free – A Few (New Features) A few Quickies

- JSON Relational Duality – see post by Tim Hall

- more coming soon (see maintained list at https://oralytics.com/23c/)
